Shallow Diffuse: Robust and Invisible Watermarking through Low-Dimensional Subspaces in Diffusion Models

The widespread use of AI-generated content from diffusion models has raised significant concerns regarding misinformation and copyright infringement. Watermarking is a crucial technique for identifying these AI-generated images and preventing their misuse. In this paper, we introduce Shallow Diffuse, a new watermarking technique that embeds robust and invisible watermarks into diffusion model outputs. Unlike existing approaches that integrate watermarking throughout the entire diffusion sampling process, Shallow Diffuse decouples these steps by leveraging the presence of a low-dimensional subspace in the image generation process. This method ensures that a substantial portion of the watermark lies in the null space of this subspace, effectively separating it from the image generation process. Our theoretical and empirical analyses show that this decoupling strategy greatly enhances the consistency of data generation and the detectability of the watermark. Extensive experiments further validate that Shallow Diffuse outperforms existing watermarking methods in terms of robustness and consistency. The code is released at https://github.com/liwd190019/Shallow-Diffuse.

💡 Research Summary

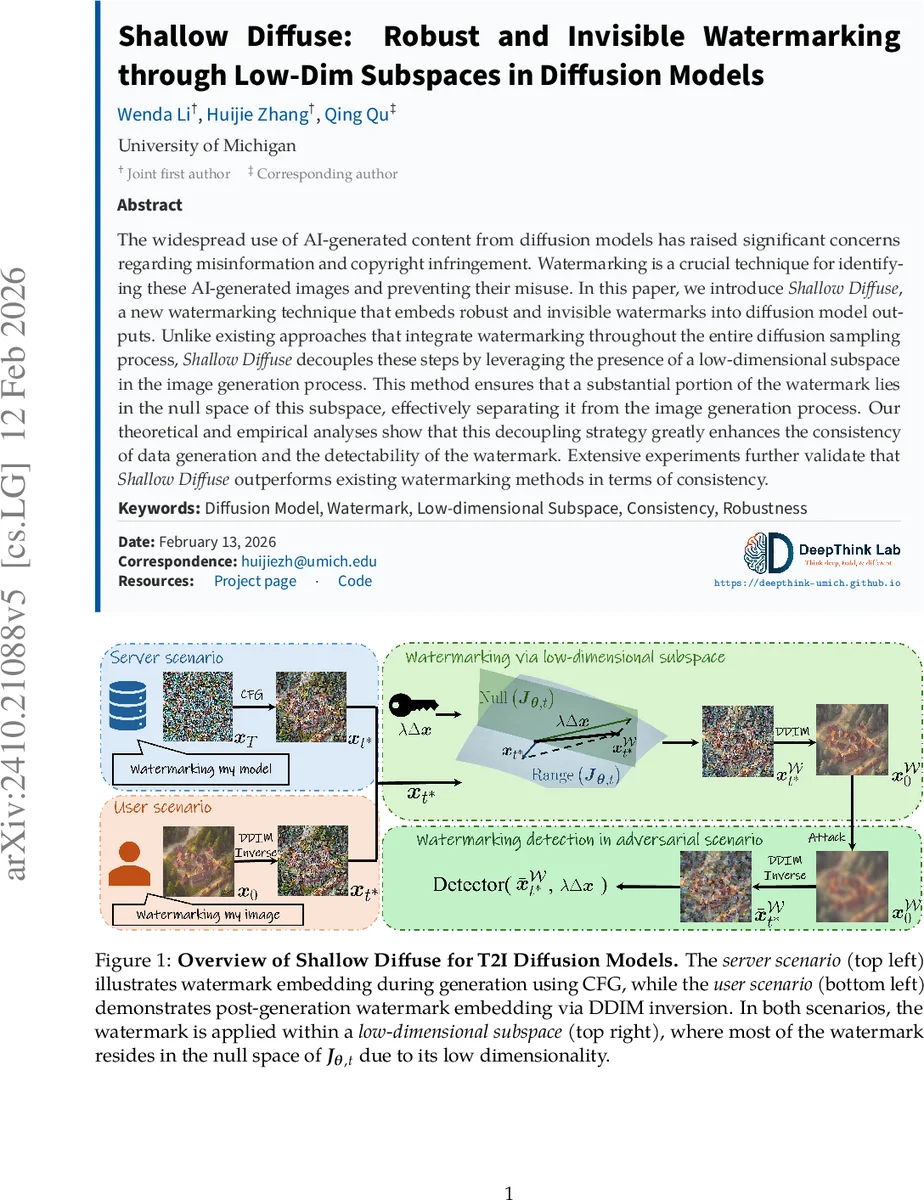

The paper introduces “Shallow Diffuse,” a novel watermarking scheme for diffusion‑based generative models that leverages an intrinsic low‑dimensional subspace present in the image generation process. Existing watermarking approaches either embed the watermark directly into the initial random seed or tightly couple it with the entire sampling trajectory, which often leads to inconsistent outputs, visual degradation, and dependence on the seed. In contrast, Shallow Diffuse decouples watermark insertion from sampling by inserting the watermark at a carefully chosen intermediate timestep (t*), where the Jacobian of the posterior‑mean predictor (PMP) is provably low‑rank. Because the Jacobian’s rank r is far smaller than the ambient dimension d, a large portion of a randomly oriented watermark vector Δx lies in the null space of the Jacobian; consequently, the term J·Δx is approximately zero. This property ensures that the PMP’s prediction of the clean image remains virtually unchanged (preserving consistency), while the watermark survives as a residual that can be detected later.
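The null-space argument can be checked numerically on a toy problem. The sketch below is not the paper's code: it uses a random rank-r matrix as a hypothetical stand-in for the PMP Jacobian, and the names `null_space_energy_fraction`, `J`, and `dx` are chosen for illustration. It measures how much of a random vector's energy falls in the null space, which should be close to 1 - r/d.

```python
import numpy as np

def null_space_energy_fraction(J, dx):
    """Return the fraction of ||dx||^2 lying in the null space of J."""
    # Rows of vt are right singular vectors; the first `rank` of them span the row space.
    _, _, vt = np.linalg.svd(J)
    rank = np.linalg.matrix_rank(J)
    row_coords = vt[:rank] @ dx
    return 1.0 - float(row_coords @ row_coords) / float(dx @ dx)

rng = np.random.default_rng(0)
d, r = 512, 8  # ambient dimension and Jacobian rank (toy values, assumed)

# Hypothetical stand-in for the PMP Jacobian: a random matrix of rank r.
J = rng.standard_normal((d, r)) @ rng.standard_normal((r, d))
# Randomly oriented watermark direction.
dx = rng.standard_normal(d)

frac = null_space_energy_fraction(J, dx)
# Response of J to dx, normalized by the worst-case response ||J||_2 * ||dx||.
relative_response = np.linalg.norm(J @ dx) / (np.linalg.norm(J, 2) * np.linalg.norm(dx))

print(f"null-space energy fraction: {frac:.3f} (1 - r/d = {1 - r / d:.3f})")
print(f"||J dx|| / (||J||_2 ||dx||): {relative_response:.3f}")
```

Note that J·Δx is small relative to the worst case rather than exactly zero: only the row-space component of Δx, roughly a fraction r/d of its energy, contributes to it.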

The method works in both server‑side and user‑side scenarios. In the server scenario, the watermark is added after a few DDIM steps (or directly at t* = 0) without ever touching the initial seed, eliminating seed‑dependency. In the user scenario, an already generated image is inverted back to the chosen timestep using DDIM‑Inv, the watermark is added, and DDIM sampling is then resumed from t* to produce the final watermarked image. Watermark design follows a frequency‑domain masking strategy: the image at timestep t* is transformed with a discrete Fourier transform, a binary mask M selects a set of frequencies, and a watermark pattern W is injected only in those frequencies. The resulting perturbation λ·Δx is added to the latent at t*, where λ controls strength.
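The frequency-domain masking step can be sketched with NumPy's FFT routines. This is a minimal illustration under stated assumptions, not the released implementation: the ring-shaped mask, the random complex pattern, the 64×64 single-channel latent, and the function names `ring_mask`, `watermark_perturbation`, and `embed` are all hypothetical choices. Only the overall recipe (mask M, pattern W, perturbation λ·Δx) comes from the description above.

```python
import numpy as np

def ring_mask(size, r_inner, r_outer):
    """Binary mask M selecting a centered ring of frequencies (after fftshift)."""
    yy, xx = np.mgrid[:size, :size]
    dist = np.hypot(yy - size // 2, xx - size // 2)
    return (dist >= r_inner) & (dist < r_outer)

def watermark_perturbation(mask, pattern):
    """Spatial perturbation dx whose spectrum is supported only on the mask."""
    masked_spectrum = np.where(mask, pattern, 0.0)
    # Keep the real part so dx can be added to a real-valued latent.
    return np.fft.ifft2(np.fft.ifftshift(masked_spectrum)).real

def embed(x_t, dx, lam):
    """Add the scaled perturbation lam * dx to the latent at timestep t*."""
    return x_t + lam * dx

rng = np.random.default_rng(0)
size = 64
x_t = rng.standard_normal((size, size))   # toy latent at t* (assumed shape)
mask = ring_mask(size, 4, 10)             # assumed ring radii
pattern = rng.standard_normal((size, size)) + 1j * rng.standard_normal((size, size))

dx = watermark_perturbation(mask, pattern)
x_t_wm = embed(x_t, dx, lam=0.02)         # lam near the value cited in the ablation
```

Because the ring is symmetric about the zero frequency, taking the real part keeps the perturbation's spectrum inside the mask; an asymmetric mask would leak energy into the mirrored frequencies.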

The authors provide two theoretical guarantees. Theorem 1 (Consistency) bounds the ℓ₂ distance between the watermarked and original images under a low‑rank Jacobian assumption, showing that visual quality metrics (PSNR, SSIM, LPIPS) remain essentially unchanged. Theorem 2 (Detectability) proves that, because most of Δx resides in the Jacobian’s null space, any reasonable attack (noise, blur, compression, geometric transforms) cannot fully erase the watermark; the inner product between the recovered latent and Δx stays above a provable threshold, yielding high true‑positive rates at low false‑positive rates.
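The intuition behind the consistency bound can be written as a first-order sketch. This is a standard linearization and not the paper's exact theorem statement. The notation is assumed for illustration: f is the PMP, J its Jacobian at x_{t*}, σ_max(J) its largest singular value, and P_row the orthogonal projector onto the row space of J.

```latex
\begin{aligned}
f(x_{t^*} + \lambda \Delta x) &\approx f(x_{t^*}) + \lambda\, J \Delta x, \\
\|J \Delta x\|_2 &= \|J P_{\mathrm{row}} \Delta x\|_2 \le \sigma_{\max}(J)\, \|P_{\mathrm{row}} \Delta x\|_2, \\
\mathbb{E}\,\|P_{\mathrm{row}} \Delta x\|_2^2 &= \frac{r}{d}\, \mathbb{E}\,\|\Delta x\|_2^2 \quad \text{for isotropic random } \Delta x.
\end{aligned}
```

When r is much smaller than d, the first-order change in the predicted clean image is therefore small relative to λ‖Δx‖, and the null-space component of Δx contributes nothing to that term.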

Extensive experiments validate these claims. The authors evaluate Shallow Diffuse on several state‑of‑the‑art text‑to‑image models (Stable Diffusion v1.5, DALL·E 2, Imagen) and compare against prior methods such as Tree‑Ring and RingID. Across server and user scenarios, Shallow Diffuse achieves comparable or better image quality (PSNR differences <0.2 dB, SSIM differences <0.01) while delivering superior robustness: TPR@1% FPR exceeds 0.95 under Gaussian noise, JPEG compression, blurring, and moderate geometric transformations. Ablation studies show that inserting at t* = 0 and using a modest λ (≈0.02) provides the best trade‑off between invisibility and detectability. Multi‑key watermarking, various pattern designs (grid, random, frequency‑band specific), and attacks are also examined, confirming that the method scales gracefully.
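The robustness metric quoted above, TPR at 1% FPR, is typically computed by fixing a detection threshold on scores from unwatermarked images and then measuring how many watermarked images exceed it. The sketch below shows that computation on synthetic Gaussian scores; the scores, the function name `tpr_at_fpr`, and the convention that a higher score means "more watermark-like" are assumptions for illustration and are not results from the paper.

```python
import numpy as np

def tpr_at_fpr(scores_clean, scores_watermarked, target_fpr=0.01):
    """Return (TPR, threshold) with the threshold set so that at most
    `target_fpr` of clean scores exceed it. Higher score = more watermark-like."""
    threshold = np.quantile(scores_clean, 1.0 - target_fpr)
    tpr = float(np.mean(scores_watermarked > threshold))
    return tpr, float(threshold)

rng = np.random.default_rng(0)
# Synthetic detection scores (illustrative only, not measured data).
clean = rng.normal(loc=0.0, scale=1.0, size=10_000)
watermarked = rng.normal(loc=5.0, scale=1.0, size=10_000)

tpr, thr = tpr_at_fpr(clean, watermarked, target_fpr=0.01)
empirical_fpr = float(np.mean(clean > thr))
print(f"threshold={thr:.3f}  TPR={tpr:.4f}  empirical FPR={empirical_fpr:.4f}")
```

If a detector uses a distance instead of a similarity (lower means more watermark-like), negate the scores before calling the function.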

The paper further discusses generalization to classifier‑free guided (CFG) text‑to‑image pipelines, showing that the low‑rank property holds across different guidance strengths. Additional analyses demonstrate that the low‑dimensional subspace is a pervasive phenomenon across diverse architectures (UNet, VAE) and datasets, implying broad applicability. Limitations include reduced detection under extreme compression (JPEG quality <30) or aggressive geometric warping, and the need to keep λ small to avoid perceptible artifacts.

In conclusion, Shallow Diffuse offers a principled, training‑free watermarking technique that separates watermark information from the core generative dynamics of diffusion models. By exploiting the null space of a low‑rank Jacobian, it achieves high consistency, seed‑independence, and robustness simultaneously—properties that were previously mutually exclusive. The authors release code and pretrained models, facilitating reproducibility and encouraging future work on secure, accountable generative AI.
