Same Feedback, Different Source: How AI vs. Human Feedback Shapes Learner Engagement

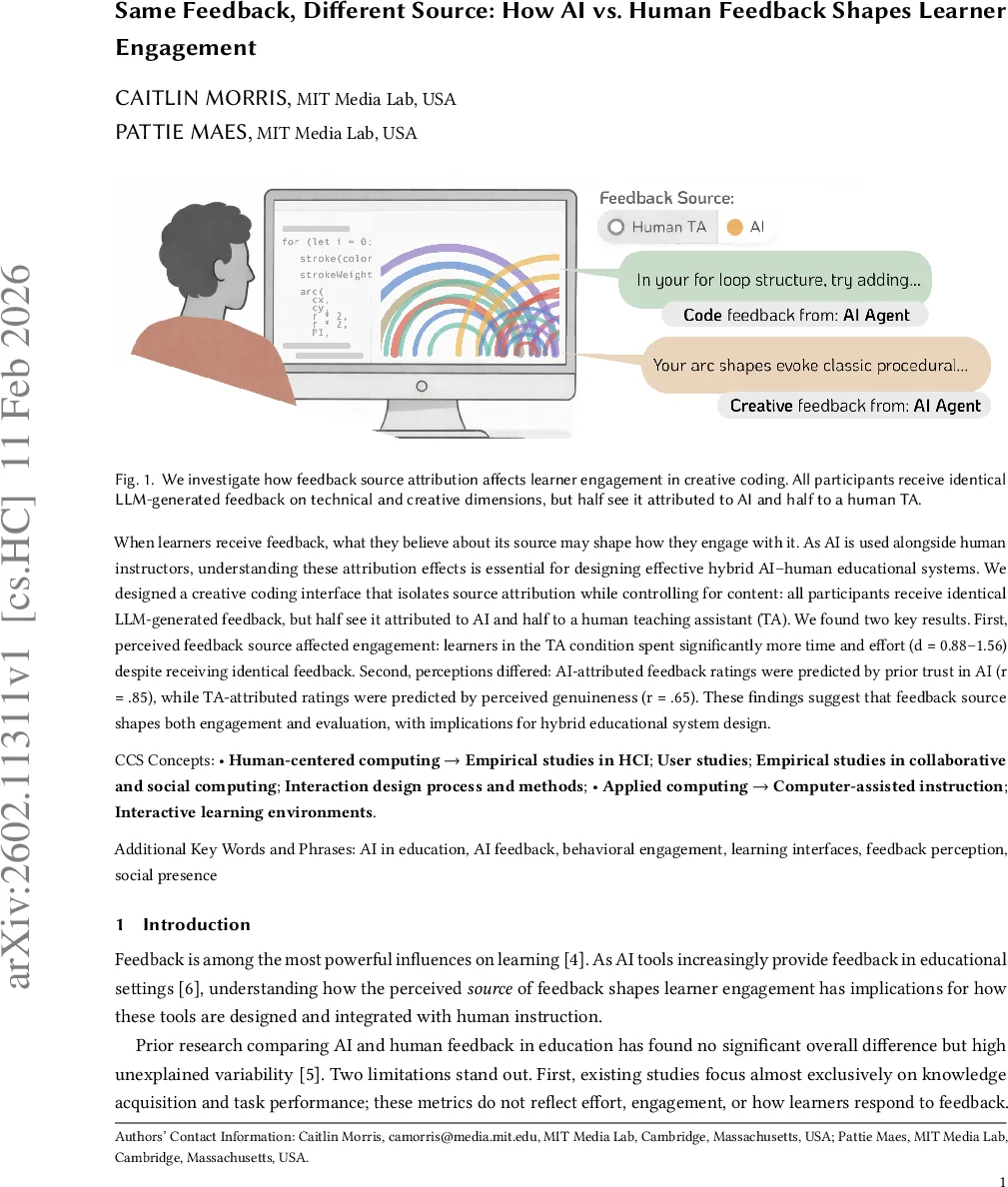

When learners receive feedback, what they believe about its source may shape how they engage with it. As AI is used alongside human instructors, understanding these attribution effects is essential for designing effective hybrid AI-human educational systems. We designed a creative coding interface that isolates source attribution while controlling for content: all participants receive identical LLM-generated feedback, but half see it attributed to AI and half to a human teaching assistant (TA). We found two key results. First, perceived feedback source affected engagement: learners in the TA condition spent significantly more time and effort (d = 0.88-1.56) despite receiving identical feedback. Second, perceptions differed: AI-attributed feedback ratings were predicted by prior trust in AI (r = 0.85), while TA-attributed ratings were predicted by perceived genuineness (r = 0.65). These findings suggest that feedback source shapes both engagement and evaluation, with implications for hybrid educational system design.

💡 Research Summary

The paper investigates how the perceived source of feedback, artificial intelligence (AI) versus a human teaching assistant (TA), influences learner engagement and evaluation when the feedback content is held constant. To isolate source attribution effects, the authors built a web‑based creative coding platform using p5.js that delivers identical LLM‑generated feedback to all participants. Only two things differ between conditions: the label (“AI‑Generated Feedback” versus “Your TA: Cass”) and a simulated 2‑minute delay in the TA condition that mimics a realistic human response time.
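The manipulation can be sketched in a few lines: identical feedback text is wrapped in condition‑specific attribution. This is a minimal Python sketch under stated assumptions. The labels and the 2‑minute delay come from the summary. The `FeedbackDelivery` type, the `deliver_feedback` function, and the zero delay for the AI condition are hypothetical and are not the authors' implementation, which runs in the browser on p5.js.

```python
from dataclasses import dataclass

# Labels and TA delay as described in the summary; all names here are
# hypothetical illustrations, not the study's actual code.
AI_LABEL = "AI-Generated Feedback"
TA_LABEL = "Your TA: Cass"
TA_DELAY_SECONDS = 2 * 60  # simulated human response time (2 minutes)
AI_DELAY_SECONDS = 0       # assumption: AI feedback is shown immediately


@dataclass(frozen=True)
class FeedbackDelivery:
    label: str          # attribution shown to the learner
    text: str           # LLM-generated feedback, identical across conditions
    delay_seconds: int  # wait before the feedback is displayed


def deliver_feedback(condition: str, llm_feedback: str) -> FeedbackDelivery:
    """Attach condition-specific attribution to unchanged feedback text."""
    if condition == "AI":
        return FeedbackDelivery(AI_LABEL, llm_feedback, AI_DELAY_SECONDS)
    if condition == "TA":
        return FeedbackDelivery(TA_LABEL, llm_feedback, TA_DELAY_SECONDS)
    raise ValueError(f"unknown condition: {condition!r}")


if __name__ == "__main__":
    feedback = "Consider using a loop to draw the repeated circles."
    ai = deliver_feedback("AI", feedback)
    ta = deliver_feedback("TA", feedback)
    # Content is held constant; only the label and the timing differ.
    assert ai.text == ta.text
    print(ai.label, ai.delay_seconds)  # AI-Generated Feedback 0
    print(ta.label, ta.delay_seconds)  # Your TA: Cass 120
```

Keeping the text field untouched and varying only the label and delay mirrors the study's logic: any behavioral difference must then come from attribution, not content.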

A within‑subjects factor (feedback type: technical vs. creative) and a between‑subjects factor (source: AI vs. TA) create a 2 × 2 mixed design. Twenty‑five participants (median age 41, mixed coding experience) completed four tutorial modules, each ending with a checkpoint at which they received both technical and creative feedback and rated the helpfulness of each on a 5‑point Likert scale. Behavioral metrics captured time on task, code execution frequency, lines of code added, and click‑away events (reference checking).
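The 2 × 2 mixed design and the behavioral logging can be sketched as follows. This is an illustrative Python sketch only. The balanced random assignment, the `(module, kind, value)` event schema, and every function name are hypothetical, because the summary does not describe how participants were allocated or how events were recorded.

```python
import random
from dataclasses import dataclass

SOURCES = ("AI", "TA")                      # between-subjects factor
FEEDBACK_TYPES = ("technical", "creative")  # within-subjects factor
N_MODULES = 4                               # one checkpoint per module


def assign_sources(participant_ids, seed=0):
    """Shuffle participants, then alternate conditions for a near-even split."""
    ids = list(participant_ids)
    random.Random(seed).shuffle(ids)
    return {pid: SOURCES[i % len(SOURCES)] for i, pid in enumerate(ids)}


def rating_cells(source):
    """Every (source, module, feedback type) a participant rates: 4 x 2 = 8."""
    return [(source, module, ftype)
            for module in range(1, N_MODULES + 1)
            for ftype in FEEDBACK_TYPES]


@dataclass
class ModuleMetrics:
    seconds_on_task: float = 0.0
    code_runs: int = 0
    lines_added: int = 0
    click_aways: int = 0  # leaving the editor, e.g. to check a reference


def summarize(events):
    """Aggregate hypothetical (module, kind, value) events per module."""
    metrics = {m: ModuleMetrics() for m in range(1, N_MODULES + 1)}
    for module, kind, value in events:
        m = metrics[module]
        if kind == "time":
            m.seconds_on_task += value
        elif kind == "run":
            m.code_runs += 1
        elif kind == "lines":
            m.lines_added += value
        elif kind == "click_away":
            m.click_aways += 1
        else:
            raise ValueError(f"unknown event kind: {kind!r}")
    return metrics
```

For example, `assign_sources(range(25))` places 13 participants in the AI condition and 12 in the TA condition. The summary does not report the study's actual per‑condition counts.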

Key findings:

1. Learners in the TA condition spent substantially more time on the later modules (e.g., 18.9 min vs. 7.0 min on the color module, d = 1.56) and showed greater effort across several behavioral indicators (code runs, code length, reference checks). The gap widened after participants had received at least one round of feedback, suggesting a cumulative motivational boost from perceiving a human presence.
2. Source did not affect ratings: there was no main effect of source and no interaction between source and feedback type. Technical and creative feedback received similar helpfulness ratings (M ≈ 4.2) regardless of attribution.
3. Predictors of feedback ratings diverged by condition. In the AI condition, prior trust in AI (for both technical and creative tasks) strongly predicted higher ratings (r ≈ 0.8, p < .01), whereas perceived genuineness of the feedback did not. In the TA condition, perceived authenticity (how genuine the feedback felt and whether the TA understood the learner’s goals) predicted ratings (r ≈ 0.65, p < .05), while attitudes toward AI did not.
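The effect sizes and correlations above are standard statistics. A minimal Python sketch of both follows: Cohen's d with a pooled standard deviation, and Pearson's r computed separately within each source condition, which is how a predictor can matter in one condition but not the other. The summary does not say which variant of d the authors used, and the row fields (`source`, `rating`, and predictor names such as `trust_ai`) are hypothetical.

```python
import math
from statistics import mean, stdev


def cohens_d(group_a, group_b):
    """Cohen's d for two independent groups, using the pooled sample SD."""
    na, nb = len(group_a), len(group_b)
    sa, sb = stdev(group_a), stdev(group_b)  # sample SDs (n - 1 denominator)
    pooled = math.sqrt(((na - 1) * sa ** 2 + (nb - 1) * sb ** 2) / (na + nb - 2))
    return (mean(group_a) - mean(group_b)) / pooled


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def correlations_by_condition(rows, predictor):
    """Correlate `predictor` with `rating` separately within each source."""
    result = {}
    for source in sorted({row["source"] for row in rows}):
        subset = [row for row in rows if row["source"] == source]
        result[source] = pearson_r([row[predictor] for row in subset],
                                   [row["rating"] for row in subset])
    return result
```

Under the pooled‑SD definition, the color‑module result (18.9 − 7.0 = 11.9 min, d = 1.56) would imply a pooled SD of roughly 11.9 / 1.56 ≈ 7.6 min.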

The authors interpret these results through social presence theory: even minimal cues that a human is attending to one’s work can increase motivation, effort, and persistence, independent of the objective quality of the feedback. Conversely, AI feedback can be judged favorably if learners already trust AI, but this positive perception does not translate into deeper engagement.

Limitations include the small sample size (N = 25), a single‑session design that cannot capture long‑term attribution effects, and the deceptive nature of the TA condition (participants believed they were interacting with a real human). Despite these constraints, the study provides a rigorous methodological contribution by controlling feedback content while varying only source attribution.

Implications for educational technology follow directly: designers of hybrid AI‑human learning environments should not assume that high‑quality AI feedback will automatically elicit the same learner effort as human feedback. Building trust in AI systems and strategically integrating human presence (for example, through periodic human‑authored comments or visible human oversight) may be necessary to sustain engagement. Future work should use larger samples, test longitudinal effects, and study authentic human‑AI collaborations to validate and extend these findings.
