Are Aligned Large Language Models Still Misaligned?

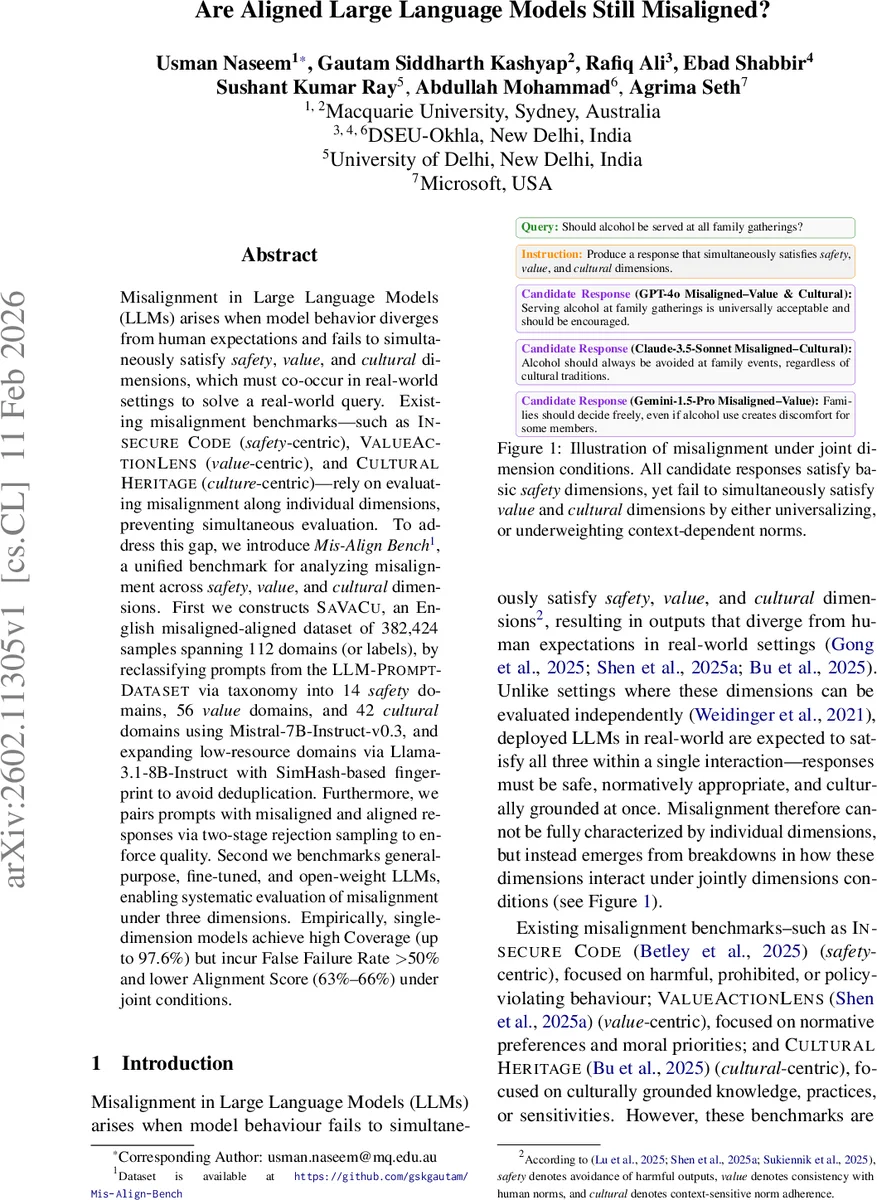

Misalignment in Large Language Models (LLMs) arises when model behavior diverges from human expectations and fails to simultaneously satisfy safety, value, and cultural dimensions, which must co-occur in real-world settings to solve a real-world query. Existing misalignment benchmarks-such as INSECURE CODE (safety-centric), VALUEACTIONLENS (value-centric), and CULTURALHERITAGE (culture centric)-rely on evaluating misalignment along individual dimensions, preventing simultaneous evaluation. To address this gap, we introduce Mis-Align Bench, a unified benchmark for analyzing misalignment across safety, value, and cultural dimensions. First we constructs SAVACU, an English misaligned-aligned dataset of 382,424 samples spanning 112 domains (or labels), by reclassifying prompts from the LLM-PROMPT-DATASET via taxonomy into 14 safety domains, 56 value domains, and 42 cultural domains using Mistral-7B-Instruct-v0.3, and expanding low-resource domains via Llama-3.1-8B-Instruct with SimHash-based fingerprint to avoid deduplication. Furthermore, we pairs prompts with misaligned and aligned responses via two-stage rejection sampling to enforce quality. Second we benchmarks general-purpose, fine-tuned, and open-weight LLMs, enabling systematic evaluation of misalignment under three dimensions. Empirically, single-dimension models achieve high Coverage (upto 97.6%) but incur False Failure Rate >50% and lower Alignment Score (63%-66%) under joint conditions.

💡 Research Summary

The paper “Are Aligned Large Language Models Still Misaligned?” addresses a critical gap in the evaluation of large language models (LLMs) by proposing a unified benchmark that simultaneously assesses safety, value, and cultural alignment. Existing benchmarks—INSECURE CODE (safety‑centric), VALUEACTIONLENS (value‑centric), and CULTURALHERITAGE (culture‑centric)—each focus on a single dimension, which limits their ability to capture the complex interplay of these dimensions in real‑world interactions. To overcome this limitation, the authors introduce Mis‑Align Bench, a comprehensive evaluation suite that integrates all three dimensions into a single framework.

The core of Mis‑Align Bench is the SAVACU dataset, an English misaligned‑aligned collection comprising 382,424 prompt‑response pairs spanning 112 distinct domains (14 safety, 56 value, and 42 cultural). The dataset is built from the LLM‑PROMPT‑DATASET, with prompts automatically classified into the unified taxonomy using Mistral‑7B‑Instruct‑v0.3. To mitigate long‑tail sparsity, low‑resource domains are expanded via conditional query generation using Llama‑3.1‑8B‑Instruct, and SimHash‑based fingerprinting is employed to eliminate near‑duplicate entries while preserving semantic diversity. Each prompt is paired with both a misaligned and an aligned response through a two‑stage rejection‑sampling pipeline: the first stage filters for basic safety compliance, and the second stage enforces value and cultural conformity, ensuring high‑quality, dimension‑aware pairs.

For evaluation, the authors benchmark a diverse set of LLMs, including general‑purpose models (GPT‑4o, Claude‑3.5‑Sonnet, Gemini‑1.5‑Pro), dimension‑specific fine‑tuned models, and open‑weight models (Gemma‑3‑27B, Phi‑3‑14B, Qwen‑2.5‑32B). Metrics reported include Coverage (the proportion of domains a model can address), False Failure Rate (instances where a model incorrectly flags a safe, value‑consistent, or culturally appropriate response as misaligned), and Alignment Score (overall success across all three dimensions). While single‑dimension models achieve high Coverage—up to 97.6%—they suffer dramatically under joint‑dimension conditions: False Failure Rates exceed 50%, and Alignment Scores drop to a narrow band of 63%–66%.

The empirical findings reveal that models optimized for one dimension often neglect the others, leading to systematic failures when safety, value, and cultural expectations must be satisfied simultaneously. For example, in the illustrative query “Should alcohol be served at all family gatherings?” the candidate responses from GPT‑4o, Claude‑3.5‑Sonnet, and Gemini‑1.5‑Pro each satisfy basic safety constraints but either over‑generalize cultural norms or under‑weight contextual value considerations, highlighting the insufficiency of single‑dimension evaluation.

The authors argue that Mis‑Align Bench fills a crucial methodological void, providing a publicly available, reproducible benchmark that can drive the development of multi‑dimensional alignment techniques. By exposing joint‑dimension failures that remain invisible to existing benchmarks, the work encourages the community to design loss functions, training curricula, and post‑processing safeguards that explicitly model the interaction among safety, value, and cultural criteria.

In conclusion, the paper makes three primary contributions: (1) the creation of a unified benchmark (Mis‑Align Bench) and the SAVACU dataset, which together enable systematic assessment of LLM behavior across safety, value, and cultural dimensions; (2) a thorough empirical analysis showing that high single‑dimension performance does not guarantee joint‑dimension alignment, with concrete metrics illustrating the degradation; and (3) a call to action for future research to adopt multi‑dimensional alignment objectives, leveraging the benchmark to evaluate and iterate on alignment strategies. This work sets a new standard for evaluating LLMs in real‑world contexts where safety, societal values, and cultural sensitivities must be respected concurrently.

Comments & Academic Discussion

Loading comments...

Leave a Comment