Stress Tests REVEAL Fragile Temporal and Visual Grounding in Video-Language Models

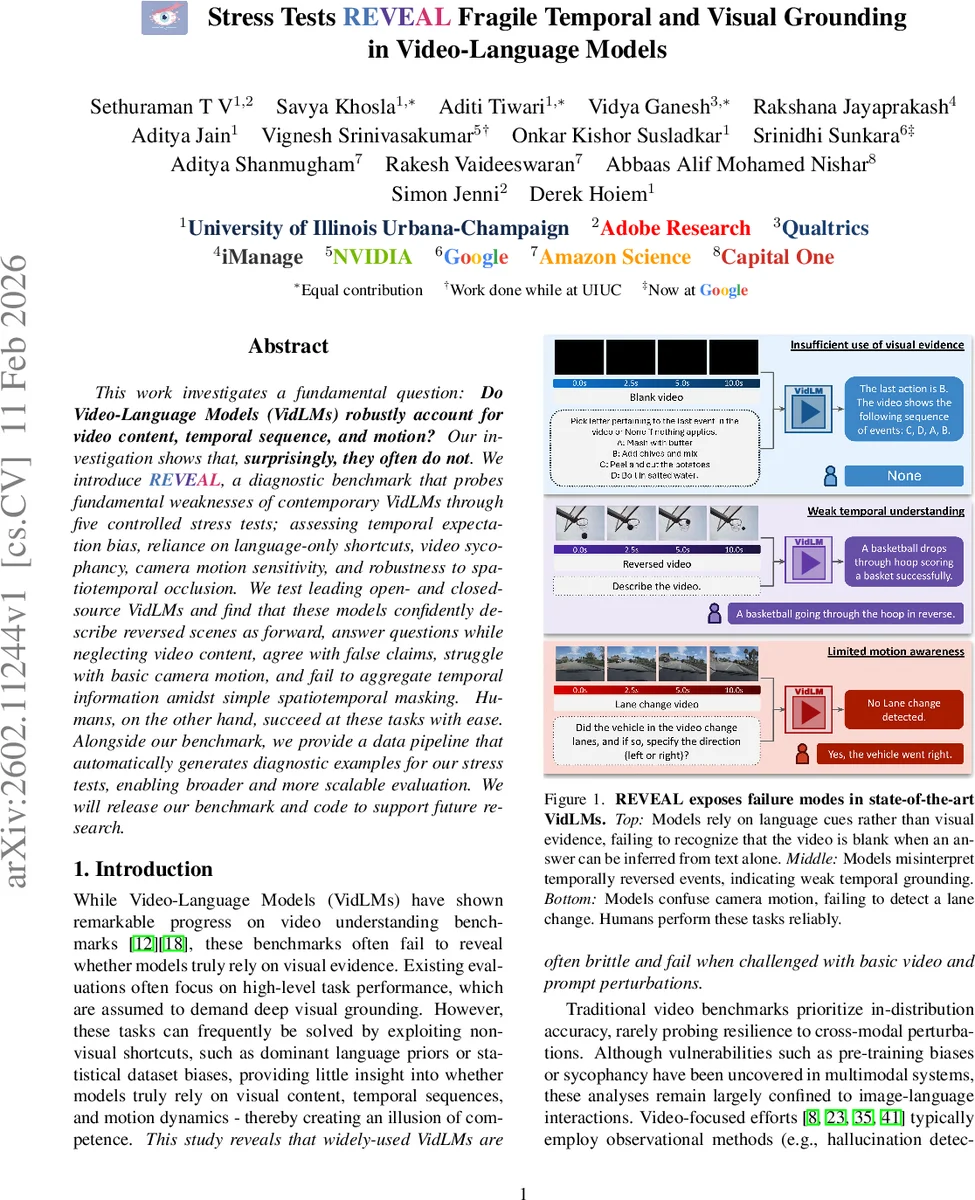

This work investigates a fundamental question: Do Video-Language Models (VidLMs) robustly account for video content, temporal sequence, and motion? Our investigation shows that, surprisingly, they often do not. We introduce REVEAL{}, a diagnostic benchmark that probes fundamental weaknesses of contemporary VidLMs through five controlled stress tests; assessing temporal expectation bias, reliance on language-only shortcuts, video sycophancy, camera motion sensitivity, and robustness to spatiotemporal occlusion. We test leading open- and closed-source VidLMs and find that these models confidently describe reversed scenes as forward, answer questions while neglecting video content, agree with false claims, struggle with basic camera motion, and fail to aggregate temporal information amidst simple spatiotemporal masking. Humans, on the other hand, succeed at these tasks with ease. Alongside our benchmark, we provide a data pipeline that automatically generates diagnostic examples for our stress tests, enabling broader and more scalable evaluation. We will release our benchmark and code to support future research.

💡 Research Summary

The paper addresses a fundamental gap in the evaluation of video‑language models (VidLMs): whether these models truly ground their predictions in visual content, temporal order, and motion, rather than relying on language priors or dataset biases. To expose hidden weaknesses, the authors introduce REVEAL, a diagnostic benchmark composed of five controlled stress tests that target three core failure modes: insufficient use of visual evidence, weak temporal understanding, and limited motion awareness.

The first failure mode is probed through two sub‑tests. “Language‑only shortcuts” present videos that are either completely blank or irrelevant, while the question can be answered using textual priors alone. High performance under these conditions indicates that the model is ignoring visual input. The “video sycophancy” test injects linguistically plausible but visually false statements (e.g., swapped action order, fine‑grained action substitution, state reversal, object‑attribute modification) and asks the model to confirm their truth. Most evaluated models—both open‑source (e.g., Qwen2.5‑VL‑7B) and closed‑source—agree with a large fraction of false claims, demonstrating a strong tendency to echo user bias over visual facts.

The second failure mode examines temporal reasoning. “Temporal expectation bias” reverses entire video sequences or removes key action segments, creating physically impossible flows (e.g., a candle re‑forming instead of melting). Models that still describe the forward, expected event reveal reliance on learned event priors rather than observed motion. “Spatiotemporal occlusion” masks complementary regions across successive frames so that reconstructing the full scene requires integration of information over time. VidLMs generally fail to aggregate across frames, producing incorrect answers when visual evidence is fragmented.

The third failure mode focuses on motion detection. “Camera‑motion sensitivity” introduces synthetic camera transformations (zoom, pan, tilt) and real‑world driving scenarios requiring lane‑change detection. Even with clear visual cues, many models misinterpret the motion, indicating that basic camera‑movement understanding is still lacking.

To construct the benchmark, the authors develop an automated pipeline that takes existing video datasets (Ego4D, YouCook2, Charades, EPIC‑KITCHENS, etc.) and applies systematic perturbations to generate the required test instances. Human verification ensures that the “original” statements are visually correct and the “modified” statements are linguistically plausible yet false. This pipeline enables reproducible, scalable generation of diagnostic examples without manual annotation.

Experimental results show a stark contrast between humans and current VidLMs: humans solve all five stress tests with near‑perfect accuracy, while models exhibit systematic failures across the board. The findings reveal that state‑of‑the‑art VidLMs still prioritize language cues over visual grounding, lack robust temporal integration, and are fragile to basic camera motion.

The paper’s contributions are threefold: (1) the REVEAL benchmark itself, which provides a multi‑dimensional, fine‑grained evaluation beyond single accuracy scores; (2) a scalable data‑generation pipeline that can be extended to new datasets and perturbation types; (3) a comprehensive empirical study exposing persistent deficiencies in leading VidLMs. By releasing the benchmark and code, the authors aim to foster more rigorous evaluation practices and guide the development of truly multimodal models that can reason about what they see, when they see it, and how it moves.

Comments & Academic Discussion

Loading comments...

Leave a Comment