SurfPhase: 3D Interfacial Dynamics in Two-Phase Flows from Sparse Videos

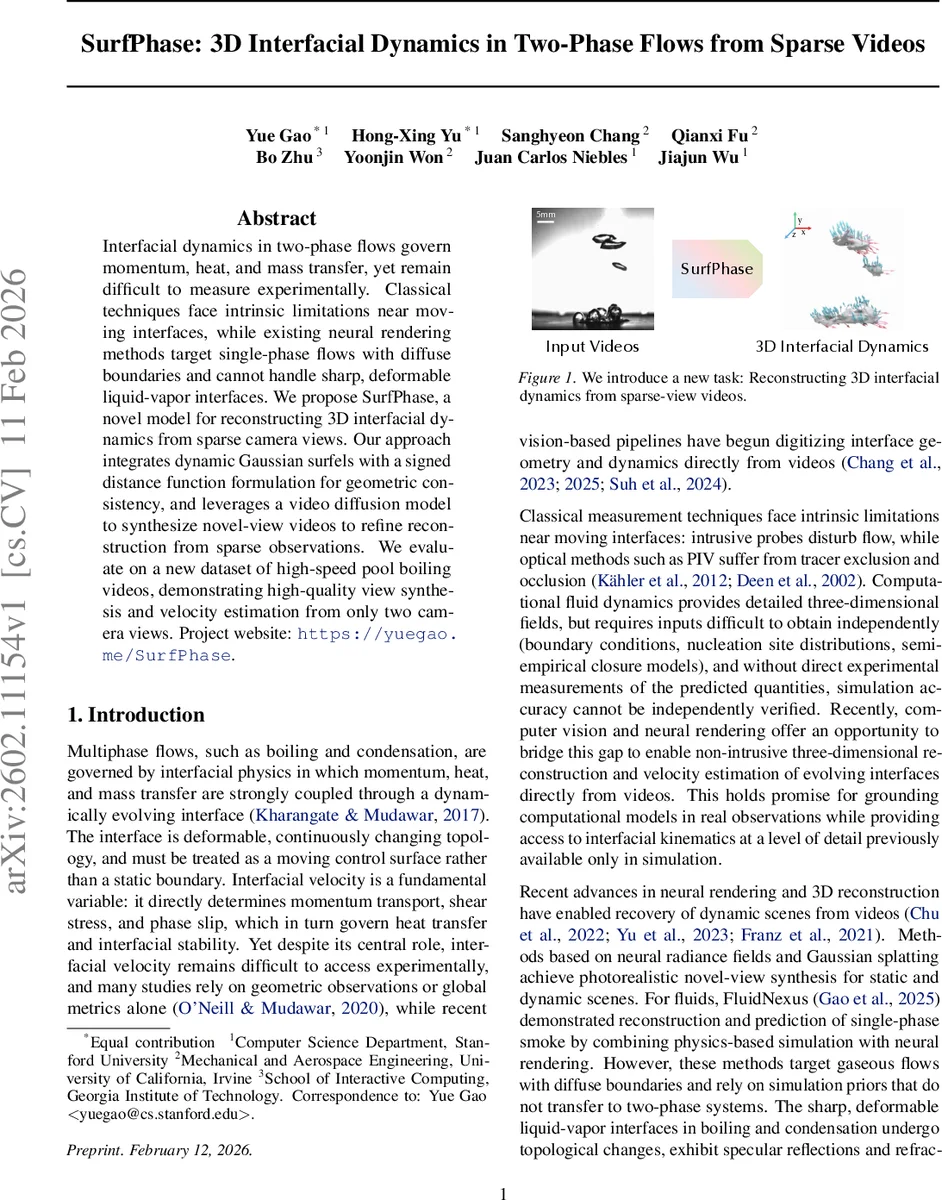

Interfacial dynamics in two-phase flows govern momentum, heat, and mass transfer, yet remain difficult to measure experimentally. Classical techniques face intrinsic limitations near moving interfaces, while existing neural rendering methods target single-phase flows with diffuse boundaries and cannot handle sharp, deformable liquid-vapor interfaces. We propose SurfPhase, a novel model for reconstructing 3D interfacial dynamics from sparse camera views. Our approach integrates dynamic Gaussian surfels with a signed distance function formulation for geometric consistency, and leverages a video diffusion model to synthesize novel-view videos to refine reconstruction from sparse observations. We evaluate on a new dataset of high-speed pool boiling videos, demonstrating high-quality view synthesis and velocity estimation from only two camera views. Project website: https://yuegao.me/SurfPhase.

💡 Research Summary

SurfPhase tackles the long‑standing challenge of measuring the rapidly evolving liquid‑vapor interface in two‑phase flows such as boiling, using only a handful of synchronized camera views (as few as two). The authors observe that classical experimental techniques (intrusive probes, particle image velocimetry) fail near moving interfaces, while existing neural rendering approaches are designed for single‑phase, diffuse media and rely on dense multi‑view coverage that is rarely available in high‑temperature, constrained laboratory setups.

The proposed pipeline consists of two main reconstruction stages followed by a physics‑informed velocity estimation module. In the first stage, the scene at each time step is represented by a set of dynamic Gaussian surfels, which are oriented, disk‑shaped surface elements. Each surfel stores a 3‑D position, scale, rotation, color, and a learnable signed distance function (SDF) value. The SDF is transformed into opacity through a logistic function whose sharpness parameter γ is adaptively driven by the median absolute SDF across all surfels, so that the surface representation tightens gradually as the SDF values shrink. Geometric consistency is enforced by (i) a depth‑projection loss that aligns the rendered depth of each surfel with its zero‑level set projection, (ii) a normal‑consistency term, and (iii) the median‑γ regularizer. Appearance fidelity is ensured by a combination of L1 and SSIM losses measured against the sparse input views.
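The SDF‑to‑opacity mapping can be illustrated with a small NumPy sketch. The summary does not give the exact formula, so everything here is an assumption: the bell‑shaped logistic form `4·σ(γs)·σ(−γs)`, the rule `γ = 1 / (median|s| + eps)`, and the function names `sdf_to_opacity` and `adaptive_gamma` are hypothetical choices meant only to show how a median‑driven sharpness could tighten the surface.

```python
import numpy as np

def sdf_to_opacity(sdf, gamma):
    """Map signed distances to opacities with a logistic bell (assumed form).

    Opacity is 1 on the zero level set and decays as |sdf| grows;
    a larger gamma makes the decay sharper.
    """
    sig = 1.0 / (1.0 + np.exp(-gamma * sdf))
    return 4.0 * sig * (1.0 - sig)

def adaptive_gamma(sdf, eps=1e-6):
    """Hypothetical sharpness rule: gamma grows as the median |sdf| shrinks."""
    return 1.0 / (np.median(np.abs(sdf)) + eps)

# Per-surfel SDF values (illustrative numbers, not from the paper).
sdf = np.array([-0.2, 0.0, 0.1, 0.4])
gamma = adaptive_gamma(sdf)
alpha = sdf_to_opacity(sdf, gamma)
print(gamma, alpha)
```

Under this assumed rule, surfels that converge toward the zero level set raise γ, so surfels still far from the surface become nearly transparent.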

Because sparse viewpoints leave large blind spots, the second stage introduces a video diffusion model fine‑tuned on a newly collected high‑speed pool‑boiling dataset. The initially optimized surfels are rendered from many synthetic camera positions, producing rough novel‑view videos. These are fed into the diffusion model using an SDEdit‑style refinement, which injects learned priors about specular reflections, refractions, and plausible interface dynamics. The refined videos serve as additional supervision; during a second round of optimization the same surfel‑SDF losses are applied, but with lower weight on the generated views to account for their inherent uncertainty. This two‑stage scheme yields a surfel field that faithfully reproduces both the observed and the synthesized viewpoints.
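The down‑weighting of generated views in the second optimization round can be sketched as a weighted photometric loss. This is a minimal illustration under stated assumptions: only the L1 term is shown (the SSIM and geometric terms are omitted), and the function name `stage_two_loss`, the normalization by total weight, and the default weight `w_generated=0.3` are hypothetical rather than values reported by the authors.

```python
import numpy as np

def l1(pred, target):
    """Mean absolute error between a rendered image and its target."""
    return float(np.mean(np.abs(pred - target)))

def stage_two_loss(renders, targets, is_generated, w_generated=0.3):
    """Weighted mean of per-view L1 losses.

    Real camera views get weight 1.0; diffusion-refined views get the
    smaller weight w_generated (an assumed value) to reflect their
    uncertainty.
    """
    total, weight_sum = 0.0, 0.0
    for render, target, generated in zip(renders, targets, is_generated):
        w = w_generated if generated else 1.0
        total += w * l1(render, target)
        weight_sum += w
    return total / weight_sum

# Two toy 4x4 grayscale views: one real, one diffusion-refined.
renders = [np.zeros((4, 4)), np.zeros((4, 4))]
targets = [np.full((4, 4), 0.1), np.full((4, 4), 0.5)]
loss = stage_two_loss(renders, targets, [False, True], w_generated=0.5)
print(loss)
```

With equal weights the toy loss would be 0.3; halving the weight of the generated view pulls the result toward the error on the real view.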

Estimating interfacial velocity requires point‑wise temporal correspondence, which pure differentiable rendering cannot guarantee. To address this, SurfPhase binds each surfel to an individual vapor bubble identified by the Segment Anything Model (SAM). Bubbles are segmented in each frame, triangulated across the two calibrated cameras, and tracked over time to compute a 3‑D velocity vector for each bubble. All surfels associated with a given bubble inherit this velocity, providing a strong temporal prior that drives the surfels to move consistently rather than merely adjusting color or scale. Consequently, a dense velocity field on the reconstructed interface can be extracted in metric units.
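The triangulate‑and‑track step can be sketched with standard linear (DLT) triangulation followed by finite differences. The summary does not specify the authors' triangulation method or tracker, so this is an assumption‑laden illustration: the 3×4 projection matrices `P1` and `P2`, the per‑frame 2‑D bubble centroids, and the helper names `triangulate`, `project`, and `bubble_velocity` are all hypothetical.

```python
import numpy as np

def triangulate(P1, P2, uv1, uv2):
    """Linear (DLT) triangulation of one point from two calibrated views."""
    A = np.stack([
        uv1[0] * P1[2] - P1[0],
        uv1[1] * P1[2] - P1[1],
        uv2[0] * P2[2] - P2[0],
        uv2[1] * P2[2] - P2[1],
    ])
    # The homogeneous solution is the right singular vector associated
    # with the smallest singular value of A.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]

def project(P, X):
    """Project a 3-D point with a 3x4 camera matrix (used to build toy data)."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]

def bubble_velocity(P1, P2, track1, track2, dt):
    """Finite-difference 3-D velocity of a bubble centroid tracked in two views."""
    pts = np.array([triangulate(P1, P2, a, b) for a, b in zip(track1, track2)])
    return np.diff(pts, axis=0) / dt

# Toy setup: two cameras with identity intrinsics, offset along x.
P1 = np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = np.hstack([np.eye(3), np.array([[-1.0], [0.0], [0.0]])])
# A bubble centroid rising 0.01 units along y between two frames.
centroids = [np.array([0.1, 0.2, 2.0]), np.array([0.1, 0.21, 2.0])]
track1 = [project(P1, X) for X in centroids]
track2 = [project(P2, X) for X in centroids]
vel = bubble_velocity(P1, P2, track1, track2, dt=1e-3)
print(vel)
```

In the pipeline described above, each surfel bound to a bubble would inherit that bubble's velocity vector; with metrically calibrated cameras the result is expressed in physical units per second.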

The authors release a new dataset comprising 200 single‑view high‑speed boiling videos and a synchronized dual‑view pair with precise metric calibration. Quantitative comparisons show that SurfPhase outperforms state‑of‑the‑art dynamic NeRF and Gaussian‑splatting baselines in PSNR, SSIM, and LPIPS, especially for distant novel views where baseline methods produce severe artifacts. Qualitatively, the diffusion‑refined videos capture realistic specular highlights and refraction patterns that match the physics of boiling. Velocity evaluation demonstrates that bubble‑guided tracking reduces average absolute error by over 30% compared to a naïve surfel‑only approach, and aligns well with ground‑truth high‑speed measurements.

In summary, SurfPhase introduces (1) a geometry‑aware surfel‑SDF representation suitable for sharp, topologically changing liquid‑vapor interfaces, (2) a diffusion‑based novel‑view synthesis that compensates for extreme view sparsity, and (3) a bubble‑binding strategy that yields accurate interfacial velocity fields. This combination opens the door to non‑intrusive, high‑resolution 3‑D diagnostics of two‑phase flows, and suggests future extensions to other phase‑change phenomena (condensation, deposition) and real‑time implementations.
