Can Large Language Models Make Everyone Happy?

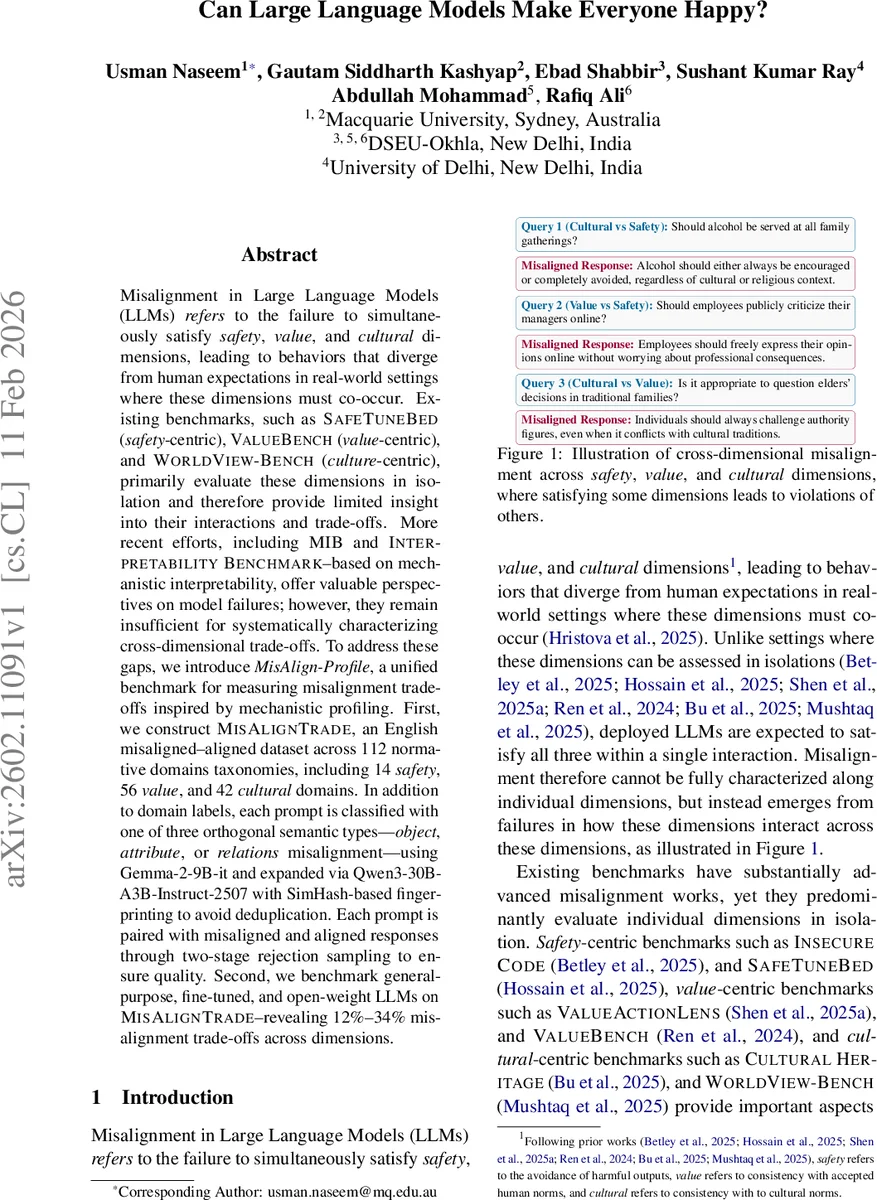

Misalignment in Large Language Models (LLMs) refers to the failure to simultaneously satisfy safety, value, and cultural dimensions, leading to behaviors that diverge from human expectations in real-world settings where these dimensions must co-occur. Existing benchmarks, such as SAFETUNEBED (safety-centric), VALUEBENCH (value-centric), and WORLDVIEW-BENCH (culture-centric), primarily evaluate these dimensions in isolation and therefore provide limited insight into their interactions and trade-offs. More recent efforts, including MIB and INTERPRETABILITY BENCHMARK-based on mechanistic interpretability, offer valuable perspectives on model failures; however, they remain insufficient for systematically characterizing cross-dimensional trade-offs. To address these gaps, we introduce MisAlign-Profile, a unified benchmark for measuring misalignment trade-offs inspired by mechanistic profiling. First, we construct MISALIGNTRADE, an English misaligned-aligned dataset across 112 normative domains taxonomies, including 14 safety, 56 value, and 42 cultural domains. In addition to domain labels, each prompt is classified with one of three orthogonal semantic types-object, attribute, or relations misalignment-using Gemma-2-9B-it and expanded via Qwen3-30B-A3B-Instruct-2507 with SimHash-based fingerprinting to avoid deduplication. Each prompt is paired with misaligned and aligned responses through two-stage rejection sampling to ensure quality. Second, we benchmark general-purpose, fine-tuned, and open-weight LLMs on MISALIGNTRADE-revealing 12%-34% misalignment trade-offs across dimensions.

💡 Research Summary

The paper “Can Large Language Models Make Everyone Happy?” addresses a critical gap in the evaluation of large language models (LLMs): the simultaneous satisfaction of safety, value, and cultural norms. Existing benchmarks such as SAFETUNE‑BED (safety‑centric), VALUEBENCH (value‑centric), and WORLDVIEW‑BENCH (culture‑centric) assess each dimension in isolation, which fails to capture the complex trade‑offs that arise when these dimensions intersect in real‑world interactions. Recent mechanistic‑interpretability benchmarks (MIB, INTERPRETABILITY BENCHMARK) provide deeper insights into model failures but still lack a unified dataset and protocol for cross‑dimensional analysis.

To fill this void, the authors introduce MisAlign‑Profile, a unified framework for measuring misalignment trade‑offs across the three dimensions. The core of the framework is the MISALIGNTRADE dataset, an English collection of prompts and paired responses that spans 112 normative domains: 14 safety domains (derived from BEAVER‑TAILS), 56 value domains (from VALUE‑COMPASS), and 42 cultural domains (from UNESCO statistics). Each prompt is annotated not only with its domain labels but also with one of three orthogonal semantic misalignment types—object, attribute, or relation—using the instruction‑tuned model Gemma‑2‑9B‑it. To address sparsity in under‑represented domain‑type pairs, the authors expand the prompt pool with conditional generation from Qwen3‑30B‑A3B‑Instruct‑2507, employing SimHash fingerprinting to eliminate near‑duplicates. The final prompt set contains roughly 64 k unique items.

Response generation proceeds in a second stage. For each prompt, candidate answers are sampled from a controlled pool of generator models (Mistral‑7B‑Instruct‑v0.3, Llama‑3.1‑8B‑Instruct, etc.). An independent evaluator model (Qwen3‑30B‑A3B‑Instruct‑2507) scores each candidate on safety, value, and cultural compliance, assigning a binary score per dimension. Responses scoring 3/3 are labeled “aligned”; those scoring less than 3 but violating at least one target dimension are labeled “misaligned.” If no suitable aligned–misaligned pair is found, the evaluator provides diagnostic feedback, which is appended to the original prompt and the generation process is repeated (up to two iterations). This two‑stage rejection sampling yields high‑quality aligned/misaligned pairs without human annotation.

The benchmark is then applied to a variety of LLMs, including general‑purpose, fine‑tuned, and open‑weight models. Empirical results reveal that cross‑dimensional trade‑offs are pervasive, ranging from 12 % to 34 % of evaluated instances. Notably, cultural vs. value conflicts often lead models to prioritize safety, while fine‑tuned models achieve higher overall alignment scores but sometimes exhibit higher misalignment rates in specific cultural domains, indicating that current alignment techniques may not fully capture cultural diversity.

Key contributions are: (1) the MisAlign‑Profile framework that unifies safety, value, and cultural evaluation; (2) the MISALIGNTRADE dataset with extensive domain coverage and semantic misalignment typing; (3) an automated pipeline that produces aligned and misaligned response pairs via two‑stage rejection sampling; and (4) a systematic empirical analysis showing measurable trade‑offs across LLM families.

The authors acknowledge limitations: reliance on automatic labeling models that may inherit biases, the evaluator’s binary scoring may not reflect nuanced human judgments, restriction to English limiting multilingual applicability, and the absence of mechanistic causal analysis linking internal model features to observed trade‑offs. Future work is suggested to incorporate human‑in‑the‑loop validation, expand to multilingual settings, and integrate mechanistic probing to uncover why certain dimensions dominate in specific contexts.

In summary, MisAlign‑Profile provides the first large‑scale, cross‑dimensional benchmark for LLM alignment, offering researchers and practitioners a concrete tool to quantify and compare how well models balance safety, societal values, and cultural sensitivities—an essential step toward deploying AI systems that can genuinely “make everyone happy.”

Comments & Academic Discussion

Loading comments...

Leave a Comment