Reality Copilot: Voice-First Human-AI Collaboration in Mixed Reality Using Large Multimodal Models

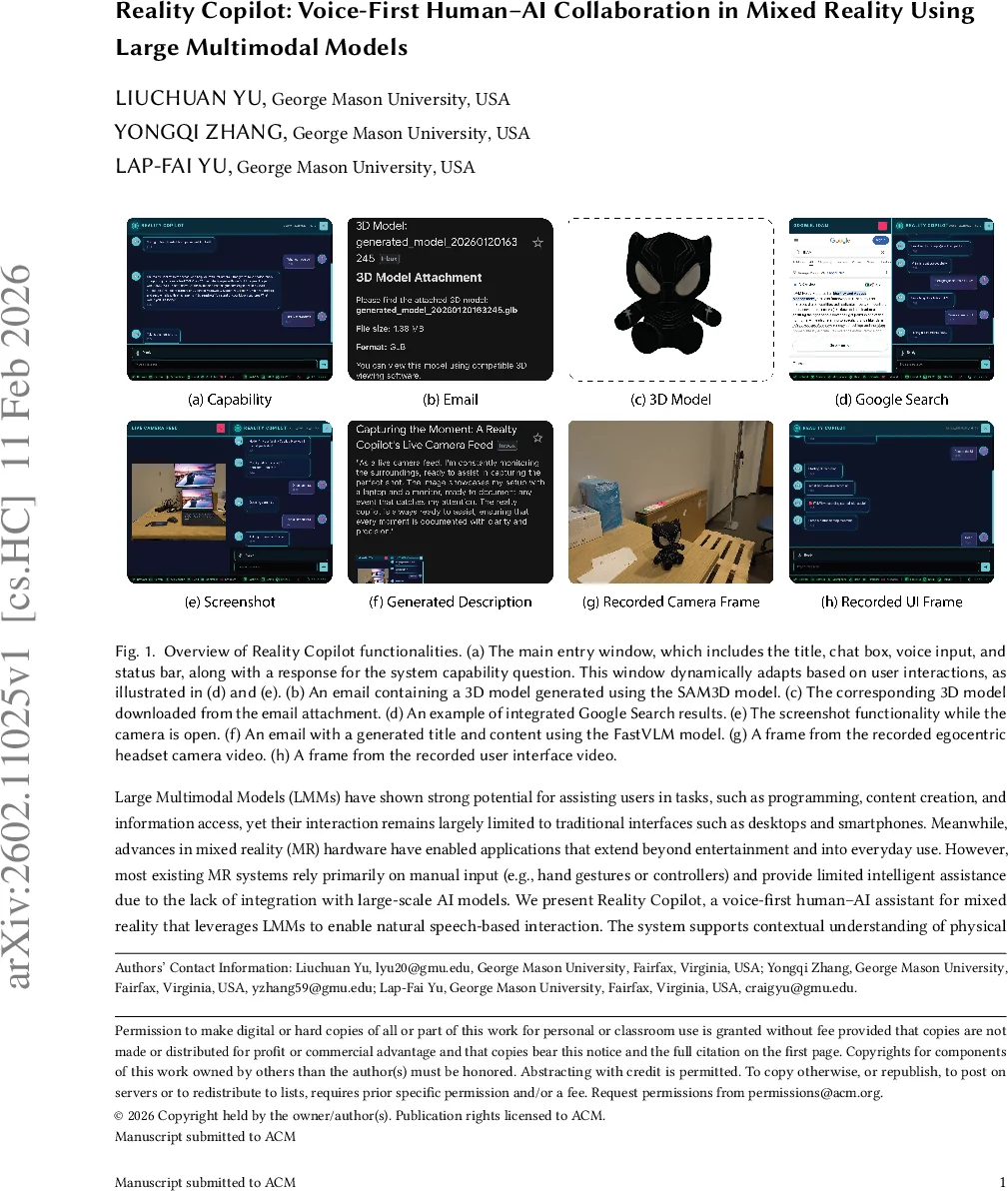

Large Multimodal Models (LMMs) have shown strong potential for assisting users in tasks such as programming, content creation, and information access, yet interaction with them remains largely limited to traditional interfaces such as desktops and smartphones. Meanwhile, advances in mixed reality (MR) hardware have enabled applications that extend beyond entertainment and into everyday use. However, most existing MR systems rely primarily on manual input (e.g., hand gestures or controllers) and provide limited intelligent assistance due to the lack of integration with large-scale AI models. We present Reality Copilot, a voice-first human-AI assistant for mixed reality that leverages LMMs to enable natural speech-based interaction. The system supports contextual understanding of physical environments, realistic 3D content generation, and real-time information retrieval. In addition to in-headset interaction, Reality Copilot facilitates cross-platform workflows by generating context-aware textual content and exporting generated assets. This work explores the design space of LMM-powered human-AI collaboration in mixed reality.

💡 Research Summary

Reality Copilot is a voice‑first human‑AI collaboration prototype designed for mixed‑reality (MR) headsets such as the Meta Quest 3. The authors argue that current MR interfaces rely heavily on manual input (hand gestures, controllers) and provide only limited AI assistance, while large multimodal models (LMMs) have demonstrated powerful capabilities across text, image, audio, and video domains. To bridge this gap, Reality Copilot places spoken language at the core of the interaction loop, enabling hands‑free operation in immersive environments.

The system architecture consists of three layers. The input layer uses TEN VAD for real‑time voice activity detection, eliminating the need for a push‑to‑talk button. Captured speech is sent to commercial large language models (LLMs) – OpenAI’s ChatGPT and Google Gemini – which, guided by a custom system prompt, produce a structured response that includes a spoken reply and a set of actions. Visual, video, and 3‑D data are processed locally by open‑source models (FastVLM for image captioning, SAM for segmentation, SAM3D for 3‑D reconstruction) running on a self‑hosted GPU server, thereby preserving user privacy.
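The structured response described above can be illustrated with a minimal Python sketch. The summary does not give the actual schema, so the JSON field names `speech`, `actions`, and `name` are assumptions made for illustration, as is the fallback that treats a non-JSON reply as speech only.

```python
import json
from dataclasses import dataclass, field


@dataclass
class AssistantReply:
    speech: str                                  # text to be spoken back to the user
    actions: list = field(default_factory=list)  # requested actions, each a dict with a "name"


def parse_reply(raw: str) -> AssistantReply:
    """Parse a model reply; fall back to speech-only if it is not a JSON object."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return AssistantReply(speech=raw.strip())
    if not isinstance(data, dict):
        return AssistantReply(speech=raw.strip())

    raw_actions = data.get("actions")
    if not isinstance(raw_actions, list):
        raw_actions = []
    # Keep only well-formed actions (hypothetical rule: a dict with a "name" key).
    actions = [a for a in raw_actions if isinstance(a, dict) and "name" in a]
    return AssistantReply(speech=str(data.get("speech", "")), actions=actions)


if __name__ == "__main__":
    raw = '{"speech": "Opening the camera.", "actions": [{"name": "open_camera"}]}'
    reply = parse_reply(raw)
    print(reply.speech, [a["name"] for a in reply.actions])
```

Validating the reply before acting on it matters here because the model output is free text: a malformed reply degrades to a spoken answer instead of triggering an unintended headset action.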

Action execution is tightly coupled with headset capabilities. For example, a command “open the camera” triggers the headset’s passthrough camera; “describe this image” invokes FastVLM; “generate a 3‑D model of this object” calls SAM3D, which returns a GLB file that can be emailed automatically. The system also supports traditional UI functions such as screenshots, screen recordings, and email composition via the Gmail API.
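One common way to connect recognized commands to headset functions is a name-to-handler registry. The sketch below is an assumption about how such dispatch could look, not the authors' implementation: the action names (`open_camera`, `describe_image`), the `args` field, and the placeholder return strings are all hypothetical, and real handlers would call the passthrough camera or the FastVLM service.

```python
from typing import Callable, Dict, List

# Registry mapping a hypothetical action name to the function that performs it.
HANDLERS: Dict[str, Callable[[dict], str]] = {}


def action(name: str):
    """Decorator that registers a handler under an action name."""
    def register(fn):
        HANDLERS[name] = fn
        return fn
    return register


@action("open_camera")
def open_camera(args: dict) -> str:
    return "camera opened"  # placeholder for the passthrough camera call


@action("describe_image")
def describe_image(args: dict) -> str:
    # Placeholder for a request to the image captioning service.
    return f"caption requested for {args.get('image_id', 'current image')}"


def run_actions(actions: List[dict]) -> List[str]:
    """Run actions in order; unknown names are reported instead of raising."""
    results = []
    for act in actions:
        handler = HANDLERS.get(act.get("name", ""))
        if handler is None:
            results.append(f"unknown action: {act.get('name')}")
            continue
        results.append(handler(act.get("args", {})))
    return results


if __name__ == "__main__":
    print(run_actions([{"name": "open_camera"}, {"name": "fly"}]))
```

Reporting unknown actions rather than raising keeps a single bad model output from interrupting the rest of the command sequence.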

A key novelty is the stack‑based context manager. The current UI state (e.g., a photo is displayed), system status (network connectivity, backend service availability), and recent interaction history are stored as metadata on a stack. When a new voice input arrives, the most recent context is popped, combined with the user utterance and the system prompt, and fed to the LLM. The LLM’s output updates the context, which is then pushed back onto the stack. Users can explicitly request a stack‑pop via voice, allowing seamless transitions such as “email the image to me” while preserving prior conversational context.
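The pop, combine, update, and push cycle can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the `Context` fields, the prompt layout, and the `llm` callable (which returns a spoken reply and a new UI state) are hypothetical. The rule that a UI state change keeps the previous frame underneath the new one is also an assumption, added so that a voice-requested pop has something to return to.

```python
from dataclasses import dataclass, field


@dataclass
class Context:
    ui_state: str                                # e.g. "photo_displayed"
    status: dict = field(default_factory=dict)   # e.g. {"network": "online"}
    history: list = field(default_factory=list)  # recent (utterance, reply) turns


class ContextStack:
    def __init__(self, root: Context):
        self._frames = [root]

    def take(self) -> Context:
        """Pop the most recent context for the next model call."""
        return self._frames.pop()

    def put(self, ctx: Context) -> None:
        self._frames.append(ctx)

    def peek(self) -> Context:
        return self._frames[-1]

    def depth(self) -> int:
        return len(self._frames)

    def go_back(self) -> Context:
        """User-requested pop: drop the top frame but never the root."""
        if len(self._frames) > 1:
            self._frames.pop()
        return self._frames[-1]


def handle_turn(stack: ContextStack, system_prompt: str, utterance: str, llm) -> str:
    ctx = stack.take()
    prompt = (f"{system_prompt}\n"
              f"[ui={ctx.ui_state} status={ctx.status} history={ctx.history[-3:]}]\n"
              f"User: {utterance}")
    speech, new_ui_state = llm(prompt)  # llm is a stand-in callable (assumption)
    turn = (utterance, speech)
    if new_ui_state != ctx.ui_state:
        stack.put(ctx)  # assumption: keep the old frame so the user can go back
        ctx = Context(new_ui_state, dict(ctx.status), ctx.history + [turn])
    else:
        ctx.history.append(turn)
    stack.put(ctx)
    return speech
```

With this layout, a request such as "go back" maps to `go_back()`, while an ordinary follow-up such as "email the image to me" runs through `handle_turn` and sees the history carried by the top frame.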

Hardware‑accelerated video recording is another contribution. An Android plugin activates the headset’s hardware encoder, capturing video in H.264 and dual‑track audio (microphone and speaker) in AAC. After recording stops, MediaMuxer combines the streams into a single MP4 file with separate audio tracks, enabling egocentric video creation with synchronized narration and system feedback.

Implementation details: the front‑end runs on Windows 11 using Unity 6000.2.6f2, with Vuplex 3D WebView for hybrid HTML‑Unity UI. The backend services run on Ubuntu 24.04 equipped with an NVIDIA RTX 5090 GPU. The authors integrate the Mixed Reality Utility Kit’s Passthrough Camera Access for real‑time scene capture and extend the EmbARDiment toolkit to support both Google Gemini and OpenAI APIs.

Three illustrative applications demonstrate the system’s versatility. (1) Real‑time educational assistance: a student learning electronics can ask for step‑by‑step guidance on circuit design, soldering, and wiring, receiving spoken instructions while keeping both hands free. (2) Egocentric video creation: a craftsperson can record a hands‑on tutorial with automatic dual‑track audio, facilitating live streaming or post‑production narration. (3) 3‑D modeling workflow: a designer can request a 3‑D model of a physical object; SAM3D generates the model, which is emailed as a GLB file for import into 3‑D and CAD tools such as Blender or Fusion 360.

The paper’s contributions are: (i) proposing a voice‑first MR human‑AI collaboration framework that blends commercial and open‑source LMMs, (ii) detailing a privacy‑preserving hybrid architecture with local processing of visual data, (iii) introducing stack‑based context handling and hardware‑accelerated recording pipelines, and (iv) validating the approach through three concrete scenarios. Limitations include dependence on external LLM services (network latency, cost), variability in open‑source model quality, and the current single‑user focus. Future work is suggested in areas such as on‑device LMM compression, personalized prompt learning, and multi‑user collaborative MR environments.

In summary, Reality Copilot is an early step toward voice‑driven, multimodal AI assistance in mixed reality. It shows how large multimodal models can support context‑aware, hands‑free productivity tools that connect traditional desktop AI interfaces with immersive computing.
