Training-Free Stimulus Encoding for Retinal Implants via Sparse Projected Gradient Descent

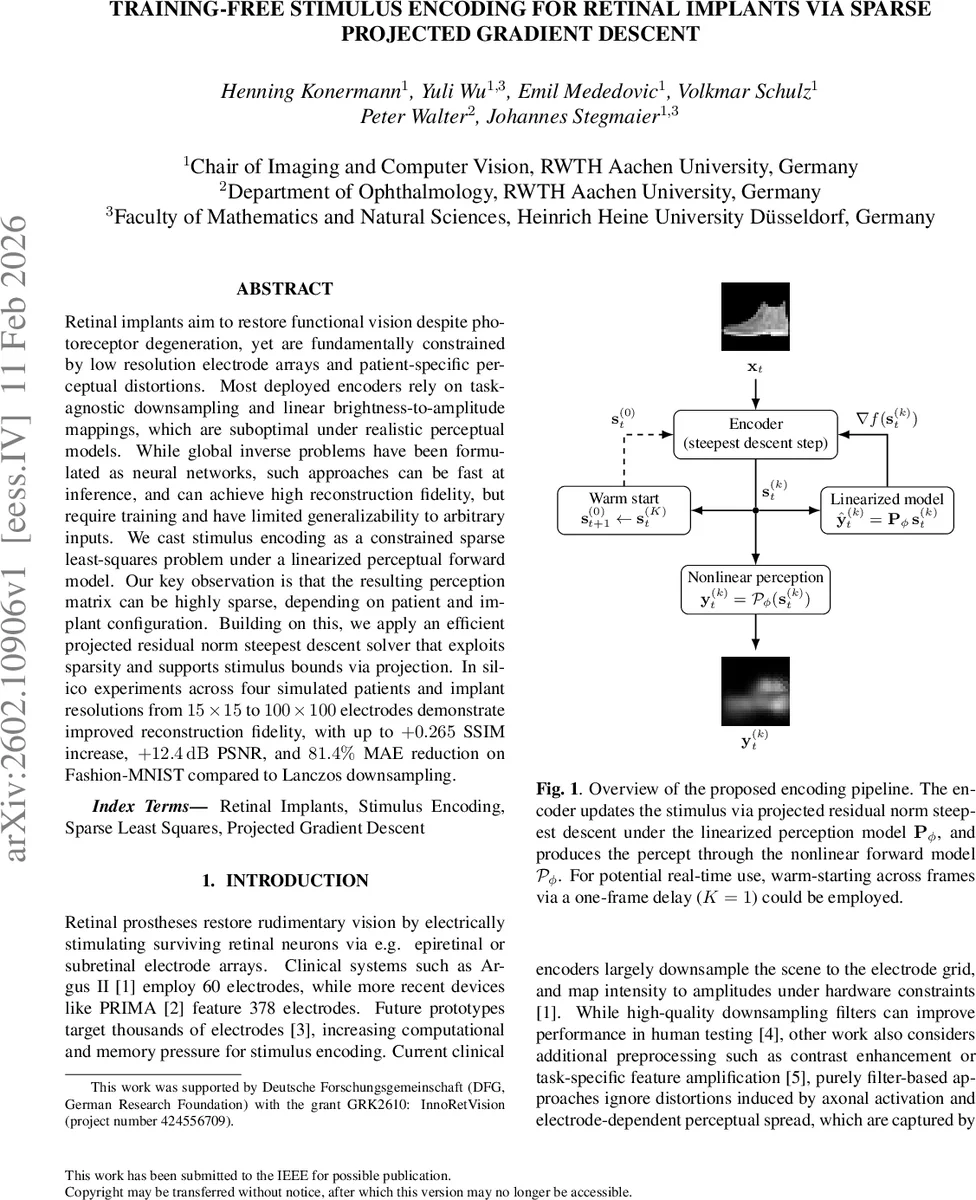

Retinal implants aim to restore functional vision despite photoreceptor degeneration, yet are fundamentally constrained by low resolution electrode arrays and patient-specific perceptual distortions. Most deployed encoders rely on task-agnostic downsampling and linear brightness-to-amplitude mappings, which are suboptimal under realistic perceptual models. While global inverse problems have been formulated as neural networks, such approaches can be fast at inference, and can achieve high reconstruction fidelity, but require training and have limited generalizability to arbitrary inputs. We cast stimulus encoding as a constrained sparse least-squares problem under a linearized perceptual forward model. Our key observation is that the resulting perception matrix can be highly sparse, depending on patient and implant configuration. Building on this, we apply an efficient projected residual norm steepest descent solver that exploits sparsity and supports stimulus bounds via projection. In silico experiments across four simulated patients and implant resolutions from $15\times15$ to $100\times100$ electrodes demonstrate improved reconstruction fidelity, with up to $+0.265$ SSIM increase, $+12.4,\mathrm{dB}$ PSNR, and $81.4%$ MAE reduction on Fashion-MNIST compared to Lanczos downsampling.

💡 Research Summary

The paper tackles the problem of generating stimulation patterns for retinal prostheses without any training phase. Conventional encoders simply down‑sample the visual scene to the electrode grid and map pixel intensity linearly to stimulus amplitude, ignoring patient‑specific perceptual distortions such as axonal activation and current spread. While learning‑based end‑to‑end encoders can improve quality, they require costly retraining for each patient or device and often fail to generalize to arbitrary inputs.

The authors formulate stimulus encoding as a constrained least‑squares problem under a linearized perceptual forward model. Let x∈

Comments & Academic Discussion

Loading comments...

Leave a Comment