Resource-Efficient Model-Free Reinforcement Learning for Board Games

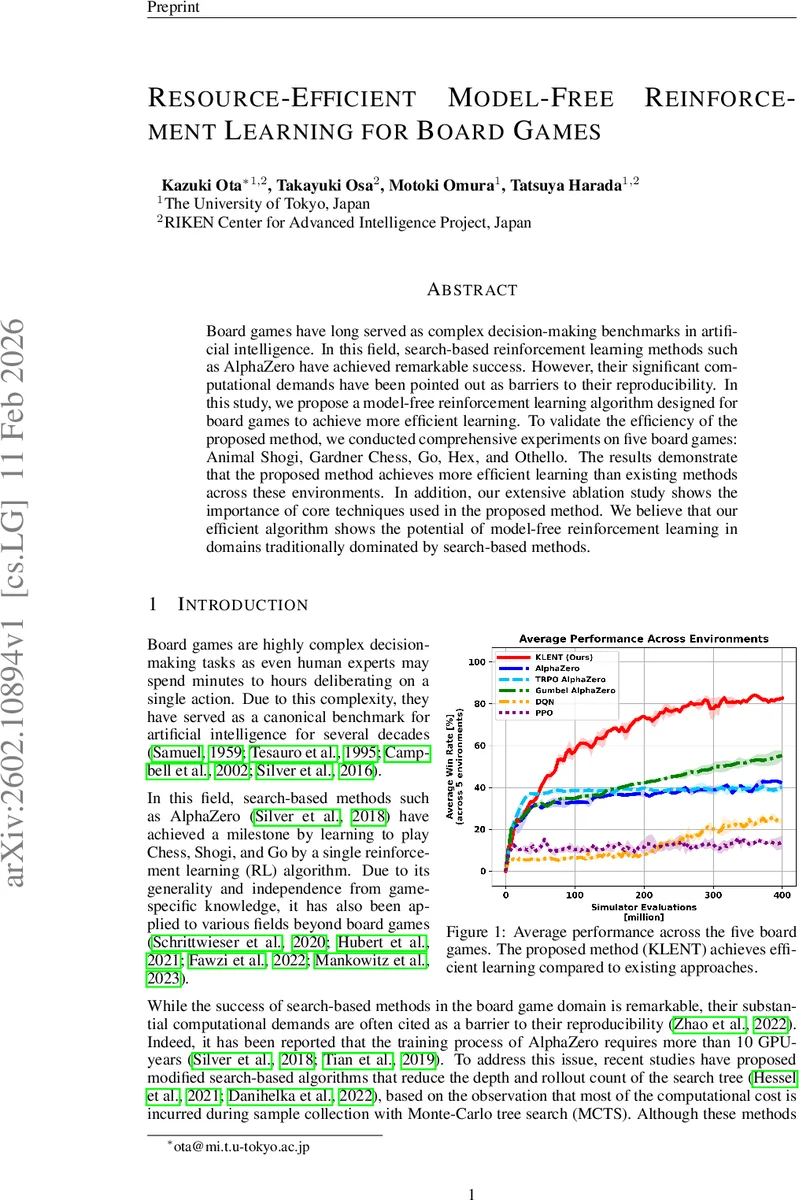

Board games have long served as complex decision-making benchmarks in artificial intelligence. In this field, search-based reinforcement learning methods such as AlphaZero have achieved remarkable success. However, their significant computational demands have been pointed out as barriers to their reproducibility. In this study, we propose a model-free reinforcement learning algorithm designed for board games to achieve more efficient learning. To validate the efficiency of the proposed method, we conducted comprehensive experiments on five board games: Animal Shogi, Gardner Chess, Go, Hex, and Othello. The results demonstrate that the proposed method achieves more efficient learning than existing methods across these environments. In addition, our extensive ablation study shows the importance of core techniques used in the proposed method. We believe that our efficient algorithm shows the potential of model-free reinforcement learning in domains traditionally dominated by search-based methods.

💡 Research Summary

The paper addresses the high computational cost of search‑based reinforcement learning (RL) methods such as AlphaZero, which dominate the board‑game domain. The authors propose a fully model‑free algorithm called KLENT (Kullback‑Leibler and Entropy Regularized Policy Optimization) that completely eliminates look‑ahead tree search during training while retaining competitive performance.

KLENT is built on a regularized policy‑optimization framework. The objective combines three terms: (1) the expected Q‑value under a new policy π′, (2) a reverse KL divergence penalty β D_KL(π′‖π) that forces gradual updates, and (3) an entropy bonus α H(π′) that encourages exploration. Because board games have a finite action space, the optimal π′ can be derived analytically:

π′(a|s) ∝ exp

Comments & Academic Discussion

Loading comments...

Leave a Comment