Interactive LLM-assisted Curriculum Learning for Multi-Task Evolutionary Policy Search

Multi-task policy search is a challenging problem because policies are required to generalize beyond training cases. Curriculum learning has proven to be effective in this setting, as it introduces complexity progressively. However, designing effective curricula is labor-intensive and requires extensive domain expertise. LLM-based curriculum generation has only recently emerged as a potential solution, but has so far been limited to static, offline modes that do not leverage real-time feedback from the optimizer. Here we propose an interactive LLM-assisted framework for online curriculum generation, where the LLM adaptively designs training cases based on real-time feedback from the evolutionary optimization process. We investigate how different feedback modalities, ranging from numeric metrics alone to combinations with plots and behavior visualizations, influence the LLM's ability to generate meaningful curricula. Through a 2D robot navigation case study, tackled with genetic programming as the optimizer, we evaluate our approach against static LLM-generated curricula and expert-designed baselines. We show that interactive curriculum generation outperforms static approaches, with multimodal feedback incorporating both progression plots and behavior visualizations yielding performance competitive with expert-designed curricula. This work contributes to understanding how LLMs can serve as interactive curriculum designers for embodied AI systems, with potential extensions to broader evolutionary robotics applications.

💡 Research Summary

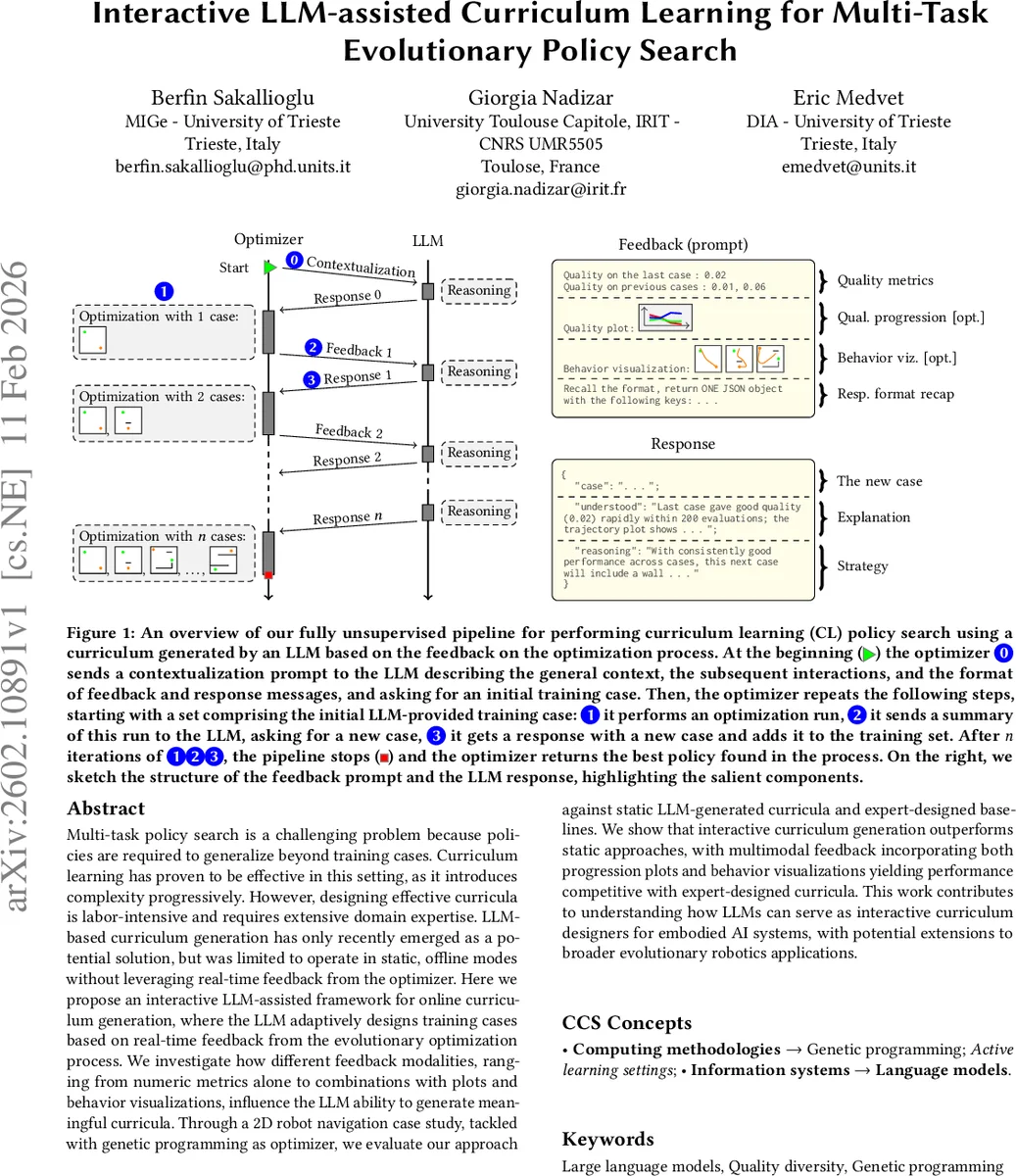

The paper introduces an interactive framework that couples large language models (LLMs) with evolutionary policy search to automatically generate curricula for multi-task policy search. Traditional curriculum learning in evolutionary robotics relies on manually crafted task sequences or static LLM-generated curricula. Manual design demands substantial domain expertise, and neither approach adapts to the learner's progress. In contrast, the proposed pipeline continuously feeds the LLM with real-time feedback from the optimizer, allowing the LLM to propose new training cases that are tailored to the current capabilities of the evolving population.

The interaction proceeds as follows: an initial “contextualisation” prompt from the optimizer describes the problem and the expected feedback format, and requests an initial case. The optimizer then iteratively (i) runs a genetic programming (GP) based evolutionary search on the accumulated set of cases C, (ii) sends a feedback message containing performance metrics (and optionally visualisations) to the LLM, and (iii) receives a newly generated case, which is added to C. This loop repeats for a predefined number of stages. Three feedback modalities are examined: (N) numeric quality scores only, (N+P) numeric scores plus a quality-progress plot, and (N+P+B) numeric scores, progress plot, and a behavioural visualisation (the robot’s trajectory overlaid on the arena).
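The loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `Feedback`, `run_curriculum`, `propose_case`, `evolve`, and `make_feedback` are hypothetical, the LLM call and the GP search are replaced by toy stand-ins, and a "case" is reduced to an integer difficulty level.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Optional, Tuple


@dataclass
class Feedback:
    """Hypothetical feedback message sent from the optimizer to the LLM."""
    scores: List[float]                     # N: one quality score per case in C
    progress_plot: Optional[bytes] = None   # P: quality-progress plot (image bytes)
    behaviour_plot: Optional[bytes] = None  # B: trajectory overlaid on the arena


def run_curriculum(
    propose_case: Callable[[str, Optional[Feedback]], Any],
    evolve: Callable[[Any, List[Any]], Any],
    make_feedback: Callable[[Any, List[Any]], Feedback],
    context_prompt: str,
    n_stages: int,
) -> Tuple[Any, List[Any]]:
    # Contextualisation: the first request carries no feedback yet.
    cases = [propose_case(context_prompt, None)]
    population = None
    for _ in range(n_stages):
        population = evolve(population, cases)                # (i) search on C
        feedback = make_feedback(population, cases)           # (ii) build feedback
        cases.append(propose_case(context_prompt, feedback))  # (iii) extend C
    return population, cases


# --- Toy stand-ins (assumptions for illustration only) ---

def toy_propose(prompt: str, feedback: Optional[Feedback]) -> int:
    # Stand-in for the LLM: raise difficulty only if every case is solved.
    if feedback is None:
        return 1
    n = len(feedback.scores)
    return n + 1 if min(feedback.scores) >= 0.5 else n


def toy_evolve(population: Optional[int], cases: List[int]) -> int:
    # Stand-in for the GP search: "skill" reaches the hardest case seen so far.
    return max(cases)


def toy_feedback(population: int, cases: List[int]) -> Feedback:
    # Numeric-only (N) modality: 1.0 if the case is solved, else 0.0.
    return Feedback(scores=[1.0 if population >= c else 0.0 for c in cases])


population, cases = run_curriculum(
    toy_propose, toy_evolve, toy_feedback,
    context_prompt="2D navigation; feedback is one score per case.",
    n_stages=3,
)
```

Following the description literally, the case received in the final stage is appended to C but not trained on within this sketch; whether the original pipeline performs a further search on it is not stated here. Switching between the N, N+P, and N+P+B modalities would amount to filling in the optional plot fields of `Feedback`.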

The experimental testbed is a 2D navigation problem. The simulated differential-drive robot is equipped with five proximity sensors, a distance sensor, and a bearing sensor. Observations are normalised and enriched with short-term trends, yielding a 21-dimensional vector. The controller consists of a stateful pre-processor, a GP-evolved symbolic expression with a single real-valued output, and a stateless post-processor that maps that output to wheel speeds. The GP trees use arithmetic, protected division, protected logarithm, max/min, tanh, relational, and ternary operators, with terminals drawn from the observation vector and constants in
