Reinforcing Chain-of-Thought Reasoning with Self-Evolving Rubrics

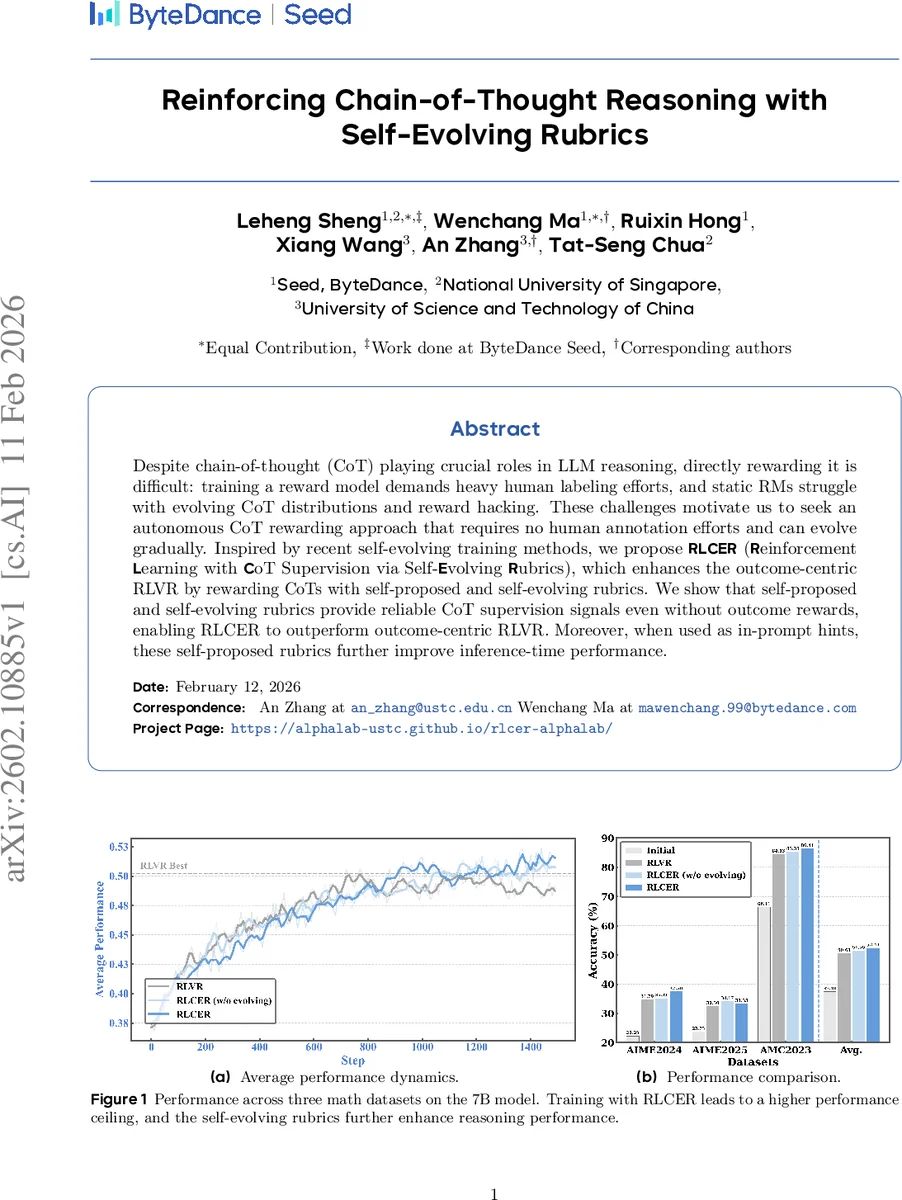

Despite chain-of-thought (CoT) playing crucial roles in LLM reasoning, directly rewarding it is difficult: training a reward model demands heavy human labeling efforts, and static RMs struggle with evolving CoT distributions and reward hacking. These challenges motivate us to seek an autonomous CoT rewarding approach that requires no human annotation efforts and can evolve gradually. Inspired by recent self-evolving training methods, we propose \textbf{RLCER} (\textbf{R}einforcement \textbf{L}earning with \textbf{C}oT Supervision via Self-\textbf{E}volving \textbf{R}ubrics), which enhances the outcome-centric RLVR by rewarding CoTs with self-proposed and self-evolving rubrics. We show that self-proposed and self-evolving rubrics provide reliable CoT supervision signals even without outcome rewards, enabling RLCER to outperform outcome-centric RLVR. Moreover, when used as in-prompt hints, these self-proposed rubrics further improve inference-time performance.

💡 Research Summary

The paper introduces RLCER (Reinforcement Learning with Chain‑of‑Thought Supervision via Self‑Evolving Rubrics), a novel framework that enables large language models (LLMs) to improve not only the correctness of their final answers but also the quality of the reasoning process (CoT) without any human‑annotated data. Traditional RL with verifiable rewards (RL VR) focuses solely on outcome rewards (answer correctness) and leaves the CoT un‑rewarded, which leads to under‑constrained learning and the emergence of shortcut or brittle reasoning strategies. Moreover, building separate reward models for CoT supervision is costly and static models quickly become mismatched as the model’s CoT distribution shifts during training.

RLCER tackles these issues by letting a single policy model πθ play two roles through different prompts: a reasoner (π_Rea) that generates a CoT and a final answer, and a rubricator (π_Rub) that, given the same question and the generated CoT, produces a set of K natural‑language rubrics. Each rubric τ_k consists of a textual criterion c_k and a scalar weight s_k. An external verifier πϕ (a frozen, fine‑tuned model) evaluates whether a CoT satisfies each criterion, yielding a binary indicator.

Crucially, rubrics are not taken at face value; they are deemed “valid” only if their satisfaction signals correlate positively with answer correctness across N sampled rollouts for the same question (corr(v_k, z) > α, with α = 0.2) and exhibit sufficient variance. This correlation‑based validation ensures that rewarding a rubric actually promotes correct reasoning rather than reinforcing spurious patterns.

The reasoner’s CoT reward r_Rea^cot aggregates the weighted satisfaction of all valid rubrics and is normalized to

Comments & Academic Discussion

Loading comments...

Leave a Comment