RePO: Bridging On-Policy Learning and Off-Policy Knowledge through Rephrasing Policy Optimization

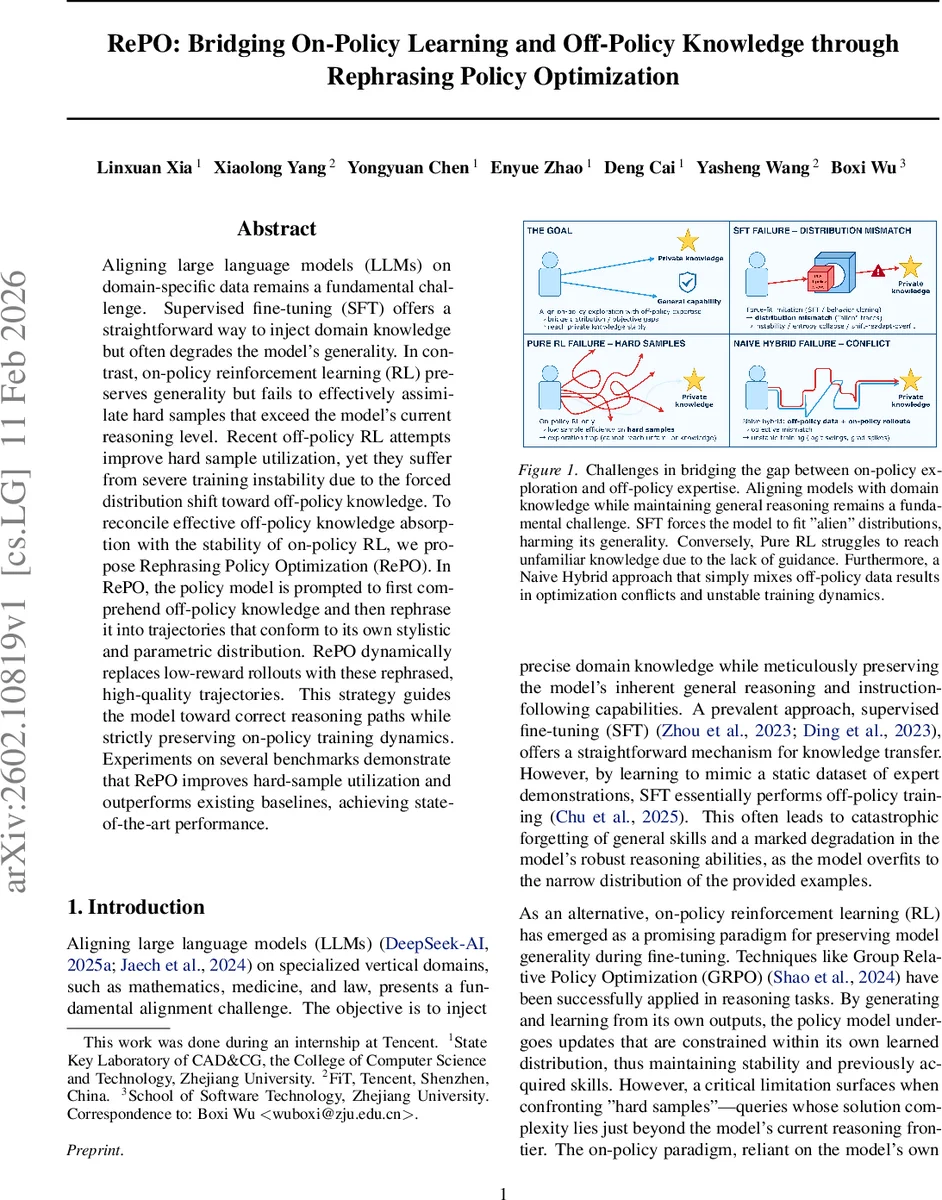

Aligning large language models (LLMs) on domain-specific data remains a fundamental challenge. Supervised fine-tuning (SFT) offers a straightforward way to inject domain knowledge but often degrades the model’s generality. In contrast, on-policy reinforcement learning (RL) preserves generality but fails to effectively assimilate hard samples that exceed the model’s current reasoning level. Recent off-policy RL attempts improve hard sample utilization, yet they suffer from severe training instability due to the forced distribution shift toward off-policy knowledge. To reconcile effective off-policy knowledge absorption with the stability of on-policy RL, we propose Rephrasing Policy Optimization (RePO). In RePO, the policy model is prompted to first comprehend off-policy knowledge and then rephrase it into trajectories that conform to its own stylistic and parametric distribution. RePO dynamically replaces low-reward rollouts with these rephrased, high-quality trajectories. This strategy guides the model toward correct reasoning paths while strictly preserving on-policy training dynamics. Experiments on several benchmarks demonstrate that RePO improves hard-sample utilization and outperforms existing baselines, achieving state-of-the-art performance.

💡 Research Summary

The paper addresses a central challenge in adapting large language models (LLMs) to domain‑specific tasks: how to inject specialized knowledge without sacrificing the model’s broad reasoning abilities. Supervised fine‑tuning (SFT) can directly teach domain data but often leads to catastrophic forgetting of general skills, while on‑policy reinforcement learning (RL) methods such as Group Relative Policy Optimization (GRPO) preserve generality by learning only from the model’s own outputs. However, on‑policy RL struggles with “hard samples” – queries whose correct solutions lie just beyond the current policy’s reasoning frontier – because the model cannot generate high‑quality trajectories on its own, causing a performance plateau.

Off‑policy approaches (e.g., LUFFY) attempt to overcome this by feeding the learner expert demonstrations or high‑level model outputs. Although theoretically promising, in practice they introduce a severe distribution shift: the learner must fit an external token distribution that may be mismatched with its own vocabulary and parameter space. This mismatch often manifests as exploding gradient norms, unstable entropy, and even training collapse.

Rephrasing Policy Optimization (RePO) is proposed to reconcile the strengths of both paradigms while mitigating their weaknesses. RePO consists of two sequential mechanisms: (1) Knowledge Internalization and (2) Dynamic Guidance based on Group Reward Distribution.

-

Knowledge Internalization: For each query q, an off‑policy knowledge artifact k (e.g., an expert reasoning trace) is supplied. A specially crafted prompt P(q, k) asks the current policy to “understand” k and then “rephrase” it using its own stylistic and lexical conventions. The model generates a rephrased rollout o_rep by sampling from πθ conditioned on P(q, k). Because the rephrasing is performed by the model itself, the resulting trajectory uses the model’s native vocabulary and distribution, eliminating the need for forced token mapping.

-

Dynamic Guidance Strategy: The standard on‑policy training loop samples a group G of rollouts { o₁,…,o_G } from πθ given q. Their binary rewards R(o_i) are used to compute a failure rate γ_fail = (1/G)∑ 1_{R(o_i)<δ}, where δ is a reward threshold. If γ_fail exceeds a preset difficulty threshold ρ, the lowest‑reward rollout o_min is replaced by o_rep, injecting a high‑quality learning signal precisely when the model is struggling. If γ_fail < ρ, the original group is retained, preserving pure on‑policy dynamics.

The final training set O_final is thus either the original group or the group with the replacement. The policy is then updated using the GRPO objective, which computes a normalized advantage A_i,t = (R(o_i) − mean(G))/std(G) + ε and applies the PPO‑style clipped surrogate loss with an optional KL penalty. Because o_rep shares the same token distribution as the other rollouts, the gradient estimates remain consistent, and the KL divergence stays bounded.

Stability Analysis: The authors compare three methods—GRPO, LUFFY, and RePO—using three in‑training metrics: entropy (exploration), gradient norm (optimization health), and final reward (performance). LUFFY shows high variance in gradient norm and entropy due to forced vocabulary alignment, often leading to divergence. GRPO maintains stable entropy and gradient norms but suffers from low utilization when all rollouts receive identical rewards (e.g., all zeros). RePO achieves both stable training dynamics and higher reward growth, especially on hard‑sample‑rich benchmarks.

Empirical Results: Experiments span mathematical reasoning (e.g., arithmetic, symbolic manipulation) and factual knowledge tasks. Across all datasets, RePO outperforms GRPO and LUFFY in terms of final accuracy and sample efficiency. The advantage is most pronounced on datasets with a high proportion of hard queries, confirming that the dynamic replacement mechanism effectively supplies the missing high‑quality signal without destabilizing training.

Key Contributions:

- Introduces a rephrasing step that converts off‑policy expert knowledge into on‑policy‑compatible trajectories, preserving lexical consistency.

- Proposes a difficulty‑aware gating mechanism that selectively injects rephrased rollouts only when the model’s own group performance indicates struggle.

- Demonstrates that this hybrid approach retains the stability of on‑policy RL while markedly improving hard‑sample utilization, achieving state‑of‑the‑art results on multiple benchmarks.

In summary, RePO offers a principled, practically effective solution for domain adaptation of LLMs: it leverages external expertise without incurring the instability traditionally associated with off‑policy corrections, thereby advancing both the methodology and performance of reinforcement‑learning‑based LLM fine‑tuning.

Comments & Academic Discussion

Loading comments...

Leave a Comment