DeepImageSearch: Benchmarking Multimodal Agents for Context-Aware Image Retrieval in Visual Histories

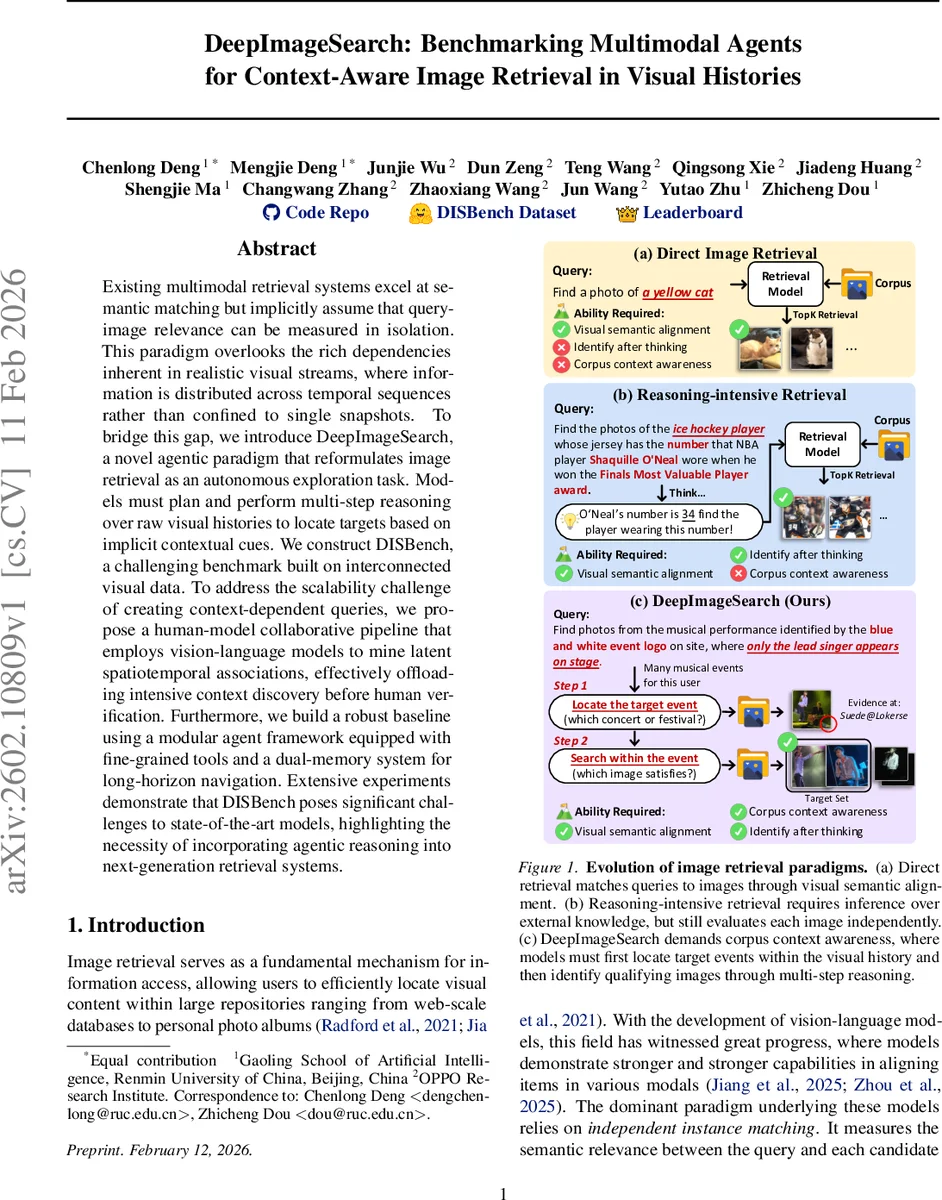

Existing multimodal retrieval systems excel at semantic matching but implicitly assume that query-image relevance can be measured in isolation. This paradigm overlooks the rich dependencies inherent in realistic visual streams, where information is distributed across temporal sequences rather than confined to single snapshots. To bridge this gap, we introduce DeepImageSearch, a novel agentic paradigm that reformulates image retrieval as an autonomous exploration task. Models must plan and perform multi-step reasoning over raw visual histories to locate targets based on implicit contextual cues. We construct DISBench, a challenging benchmark built on interconnected visual data. To address the scalability challenge of creating context-dependent queries, we propose a human-model collaborative pipeline that employs vision-language models to mine latent spatiotemporal associations, effectively offloading intensive context discovery before human verification. Furthermore, we build a robust baseline using a modular agent framework equipped with fine-grained tools and a dual-memory system for long-horizon navigation. Extensive experiments demonstrate that DISBench poses significant challenges to state-of-the-art models, highlighting the necessity of incorporating agentic reasoning into next-generation retrieval systems.

💡 Research Summary

The paper introduces a fundamentally new perspective on image retrieval, shifting from the traditional “query‑to‑image matching” paradigm to an agentic, context‑aware search over visual histories. The authors argue that real‑world user intents often cannot be satisfied by examining a single image in isolation; instead, relevant evidence is distributed across temporally ordered photos, requiring multi‑step reasoning that intertwines textual cues with visual evidence spread over many images.

DeepImageSearch Paradigm

DeepImageSearch reframes image retrieval as an autonomous exploration task. Given a textual query and a user’s chronological photo collection, the system must first locate an “anchor” image (e.g., a distinctive logo, a recurring object) that grounds the query, then use that anchor to navigate the corpus, chaining together visual clues until the final target images are identified. This two‑stage process—anchor discovery followed by targeted filtering—forces the model to reason at the corpus level rather than scoring each image independently.

DISBench Benchmark Construction

To evaluate this new task at scale, the authors build DISBench, a benchmark derived from the YFCC100M dataset. They preserve the natural hierarchy of users → photosets → photos, where each photoset corresponds to a real‑world event (concert, trip, exhibition). For each user, up to 2,000 photos are retained, providing a realistic multi‑year visual history. Queries are of two types:

- Intra‑Event – The model must first identify a specific event (using an anchor such as a logo) and then filter images within that event that satisfy a condition (e.g., “only the lead singer appears on stage”).

- Inter‑Event – The model must scan across multiple events to find recurring entities under temporal constraints (e.g., “a non‑plaster statue photographed twice in different trips within six months”).

Creating such context‑dependent queries manually would be prohibitive. The authors therefore devise a human‑model collaborative pipeline:

- Visual Semantic Parsing – A vision‑language model (VLM) processes each image and its metadata, extracting visual cues (landmarks, text, objects, faces).

- Latent Association Mining – For each cue, an embedding is generated and used to retrieve top‑k candidate images both within and outside the same photoset. A second VLM verifies whether the candidate truly shares the cue, filtering out false positives.

- Memory Graph Construction – The verified cues and their relationships are organized into a heterogeneous graph with four node types (Photo, Photoset, Visual‑Clue, Person) and two edge types (structural membership edges and association edges that carry natural‑language explanations).

- Subgraph Sampling & Query Synthesis – Meaningful subgraphs are sampled by expanding from a random photo node, balancing intra‑event density and cross‑event links. The subgraph is serialized into a structured textual description, which becomes a query after human verification.

Agentic Baseline Architecture

The baseline system is a modular agent equipped with:

- Planner – An LLM (e.g., GPT‑4) that decides which tool to invoke next based on the current state.

- Tool Suite – Image search, OCR, object detection, and text extraction modules that provide fine‑grained perception.

- Dual Memory – A short‑term “tool memory” that records recent tool outputs and a long‑term episodic memory that stores accumulated evidence across the exploration.

The agent follows a three‑step loop: (1) locate the anchor using visual‑clue search, (2) traverse association edges in the memory graph by invoking appropriate tools, and (3) filter the final set of images that satisfy the query condition.

Experimental Findings

When evaluated on DISBench, state‑of‑the‑art multimodal models (CLIP, BLIP‑2, Flamingo, FLAVA) and the proposed agent baseline achieve modest Exact‑Match (EM) scores, with the best model reaching only 28.7 %. This contrasts sharply with near‑perfect performance on conventional retrieval benchmarks, highlighting the difficulty of long‑horizon, corpus‑level reasoning. Error analysis reveals three dominant failure modes:

- Long‑Horizon Exploration Collapse – The agent loses track of earlier evidence, leading to premature termination of the reasoning chain.

- Inefficient Tool Sequencing – Redundant OCR or image‑search calls waste budget and cause timeouts.

- Ambiguous Visual Cues – Identical colors or logos appearing in multiple events cause the agent to select the wrong event.

These findings suggest that current LLM planners excel at short‑term decision making but lack robust mechanisms for maintaining and exploiting a structured memory of visual evidence over many steps.

Significance and Future Directions

The work makes several key contributions:

- It defines a new retrieval task that requires contextual, multi‑step reasoning over visual histories, filling a gap in existing benchmarks that focus on independent matching.

- It introduces a scalable, semi‑automated pipeline for generating high‑quality, context‑dependent queries, demonstrating how vision‑language models can shoulder the heavy lifting of association discovery while humans ensure correctness.

- It provides a baseline agent architecture with dual memory and tool integration, establishing a reference point for future research.

Future research avenues include improving memory retention (e.g., graph‑neural memory networks), developing more sophisticated planning algorithms that can anticipate long‑term consequences, handling ambiguous cues through probabilistic reasoning, and extending the benchmark to multimodal references (e.g., providing a sketch or a short video as an anchor).

In summary, “DeepImageSearch” and the DISBench benchmark push image retrieval toward a truly agentic, context‑aware paradigm, challenging the community to build systems that can explore, reason, and synthesize evidence across large, temporally structured visual corpora.

Comments & Academic Discussion

Loading comments...

Leave a Comment