LOREN: Low Rank-Based Code-Rate Adaptation in Neural Receivers

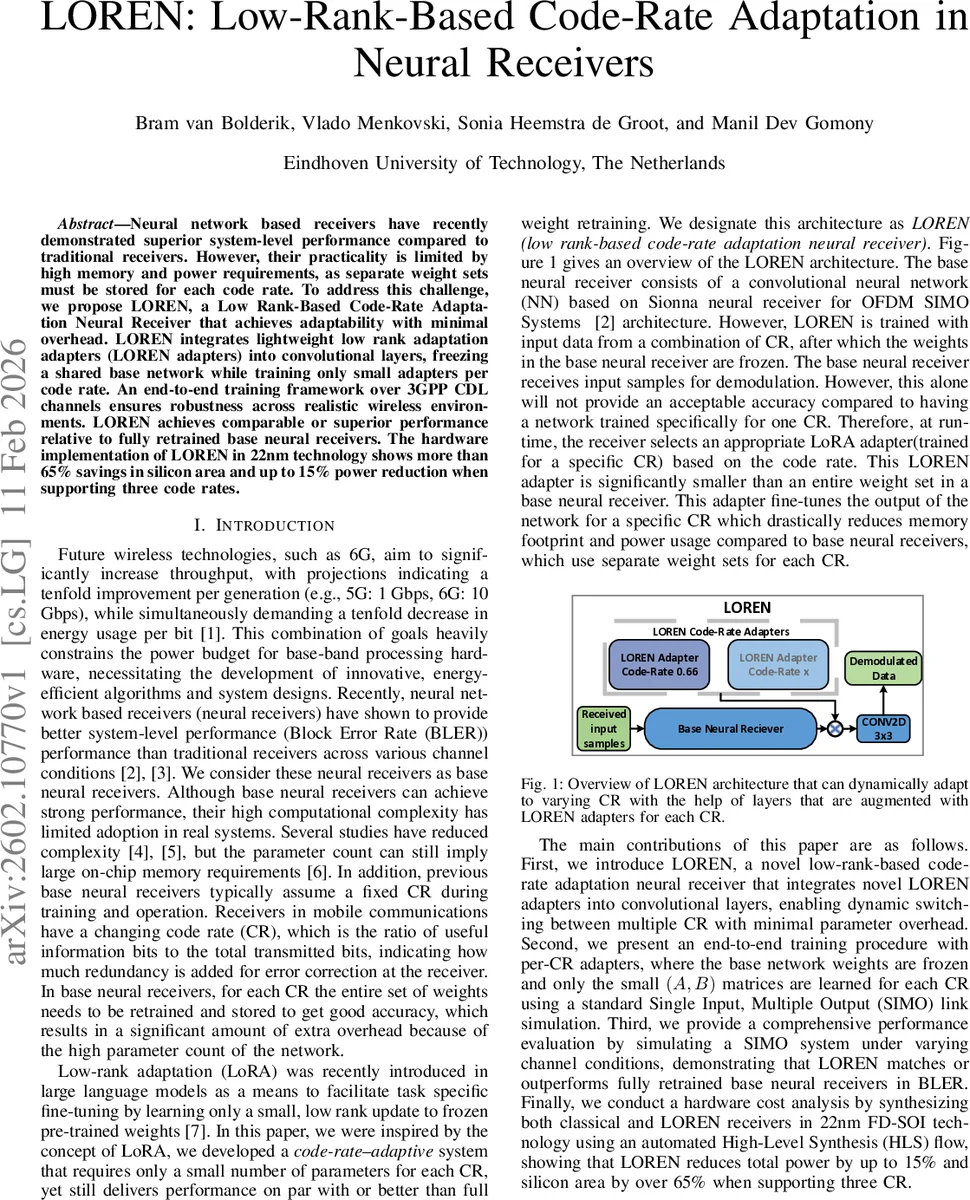

Neural network based receivers have recently demonstrated superior system-level performance compared to traditional receivers. However, their practicality is limited by high memory and power requirements, as separate weight sets must be stored for each code rate. To address this challenge, we propose LOREN, a Low Rank-Based Code-Rate Adaptation Neural Receiver that achieves adaptability with minimal overhead. LOREN integrates lightweight low rank adaptation adapters (LOREN adapters) into convolutional layers, freezing a shared base network while training only small adapters per code rate. An end-to-end training framework over 3GPP CDL channels ensures robustness across realistic wireless environments. LOREN achieves comparable or superior performance relative to fully retrained base neural receivers. The hardware implementation of LOREN in 22nm technology shows more than 65% savings in silicon area and up to 15% power reduction when supporting three code rates.

💡 Research Summary

The paper tackles a practical bottleneck of neural‑network (NN) based receivers: each code rate (CR) traditionally requires a separate full set of network weights, leading to prohibitive memory and power consumption for real‑world base‑band hardware. To overcome this, the authors introduce LOREN (Low‑Rank‑Based Code‑Rate Adaptation Neural Receiver), which adapts a single frozen base NN to multiple CRs by inserting lightweight low‑rank adapters into selected convolutional layers.

The base network follows the Sionna OFDM SIMO architecture (a ResNet‑style CNN with ~4.8 M parameters). It is first trained end‑to‑end on a diverse dataset that spans the full SNR range, random channel realizations from the 3GPP CDL‑C model, and a uniform distribution of CRs. After this global training, all 3×3 convolution kernels (W₀) are frozen. For each CR, a pair of small matrices A(CR)∈ℝ^{C_in×r} and B(CR)∈ℝ^{r×C_out} is learned; the adapter’s contribution is ΔW = α·A·B, implemented as a 1×1 convolution applied independently at every time‑frequency location of the OFDM grid. The rank r is set to 4 and α=1 in the experiments, so each adapter adds only (C_in+r)·r·2 ≈ 3 072 parameters for three CRs, compared with 147 456 parameters per 3×3 kernel in a naïve approach.

Training of the adapters proceeds by sampling a random CR and noise variance at each SGD step, generating OFDM symbols, passing them through the frozen base NN plus the current adapter, and back‑propagating the loss through the whole transmitter‑channel‑receiver chain. This per‑CR fine‑tuning reduces the number of trainable parameters from O(K²·C_in·C_out) to O(r·(C_in+C_out)) per CR, enabling instantaneous CR switching at inference time without re‑training the full network.

Performance is evaluated on a SIMO link (1 Tx, 2 Rx) with 16‑QAM, FFT size 128, sub‑carrier spacing 30 kHz, and 14 OFDM symbols per slot at 3.5 GHz. Three CRs (0.5, 0.66, 0.75) are considered. BLER curves show that for CR = 0.66, LOREN matches or slightly exceeds the fully retrained base NN, and outperforms a conventional LS‑based receiver by 2–3 dB. Across all CRs, LOREN consistently beats the traditional receiver and approaches the ideal “perfect CSI” benchmark, demonstrating that the low‑rank adapters capture the CR‑specific adjustments while the frozen base captures the bulk of channel‑induced spatial filtering.

A hardware synthesis in 22 nm FD‑SOI using an automated HLS flow quantifies the practical benefits. Supporting three CRs, LOREN reduces total silicon area by more than 65 % and cuts power consumption by up to 15 % relative to a baseline neural receiver that stores separate weight sets for each CR. The savings stem from the dramatically smaller adapter memory footprint and the minimal additional compute (only 1×1 convolutions).

The authors acknowledge limitations: only three CRs were experimentally validated, and the impact of varying the adapter rank r or the number of adapted layers was not exhaustively explored. Extending LOREN to MIMO configurations, higher‑order modulations (e.g., 64‑QAM, 256‑QAM), and dynamic SNR‑driven adapter selection are identified as future work.

In summary, LOREN demonstrates that low‑rank adaptation—originally popular in large language models—can be effectively transplanted to wireless signal processing. By freezing a powerful base NN and learning tiny per‑CR adapters, the approach delivers near‑state‑of‑the‑art BLER performance while slashing memory and power requirements, making neural receivers far more viable for next‑generation (6G) base‑band hardware.

Comments & Academic Discussion

Loading comments...

Leave a Comment