Convergence Rates for Distribution Matching with Sliced Optimal Transport

We study the slice-matching scheme, an efficient iterative method for distribution matching based on sliced optimal transport. We investigate convergence to the target distribution and derive quantitative non-asymptotic rates. To this end, we establi…
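For readers unfamiliar with the scheme, the following is a minimal sketch of one common form of slice-matching for empirical measures. It is written under stated assumptions and is not necessarily the exact variant analyzed in the paper. The assumptions are: both point clouds have the same number of samples, each iteration draws a random orthonormal basis, and the one-dimensional optimal transport map along each direction is computed by matching sorted projections. The function name `slice_matching_step` and the `step` parameter are hypothetical and chosen only for illustration.

```python
import numpy as np


def slice_matching_step(x, y, rng, step=1.0):
    """One slice-matching update moving samples x toward target samples y.

    x, y : arrays of shape (n, d) with the same n (a simplifying assumption).
    rng  : numpy random Generator used to draw the random directions.
    step : step size in [0, 1]; step=1 matches every 1D projection exactly.
    """
    n, d = x.shape
    # Random orthonormal basis: the columns of q are the slicing directions.
    q, _ = np.linalg.qr(rng.standard_normal((d, d)))
    px = x @ q  # projections of the current samples, shape (n, d)
    py = y @ q  # projections of the target samples, shape (n, d)
    # 1D optimal transport between equal-size empirical measures:
    # the k-th smallest source projection is sent to the k-th smallest target one.
    ranks = np.argsort(np.argsort(px, axis=0), axis=0)
    py_sorted = np.sort(py, axis=0)
    matched = np.take_along_axis(py_sorted, ranks, axis=0)
    # Displace each point along every direction toward its matched value.
    return x + step * (matched - px) @ q.T


# Illustrative usage on synthetic data (not taken from the paper).
rng = np.random.default_rng(0)
x = rng.standard_normal((500, 2))
y = rng.standard_normal((500, 2)) * 0.5 + 3.0
for _ in range(50):
    x = slice_matching_step(x, y, rng)
```

With `step=1.0`, a single update already makes the projections of the moved samples coincide, direction by direction, with those of the target for the sampled basis; repeating the update with fresh bases is what drives the full distribution toward the target.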

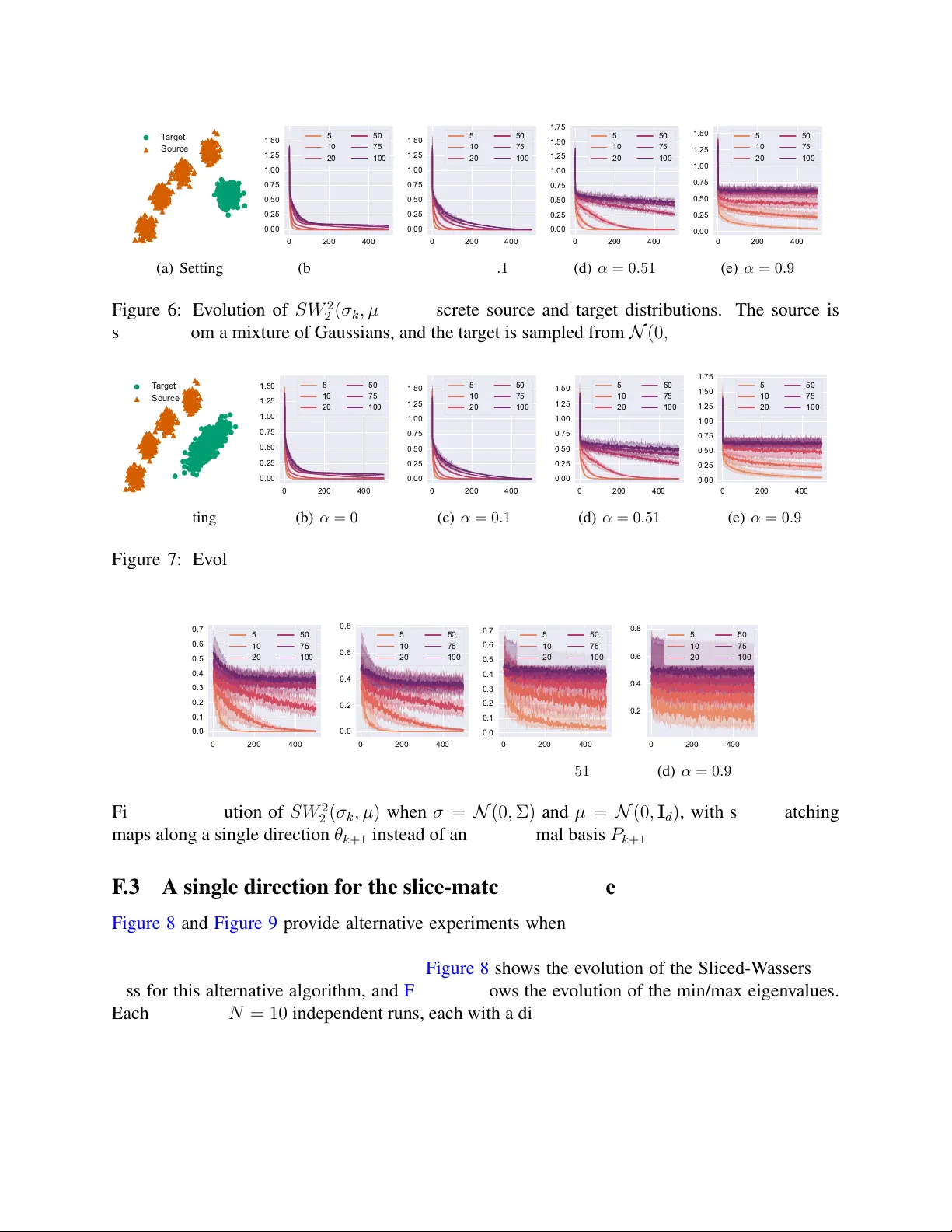

Authors: Gauthier Thurin, Claire Boyer, Kimia Nadjahi