Beyond Task Performance: A Metric-Based Analysis of Sequential Cooperation in Heterogeneous Multi-Agent Destructive Foraging

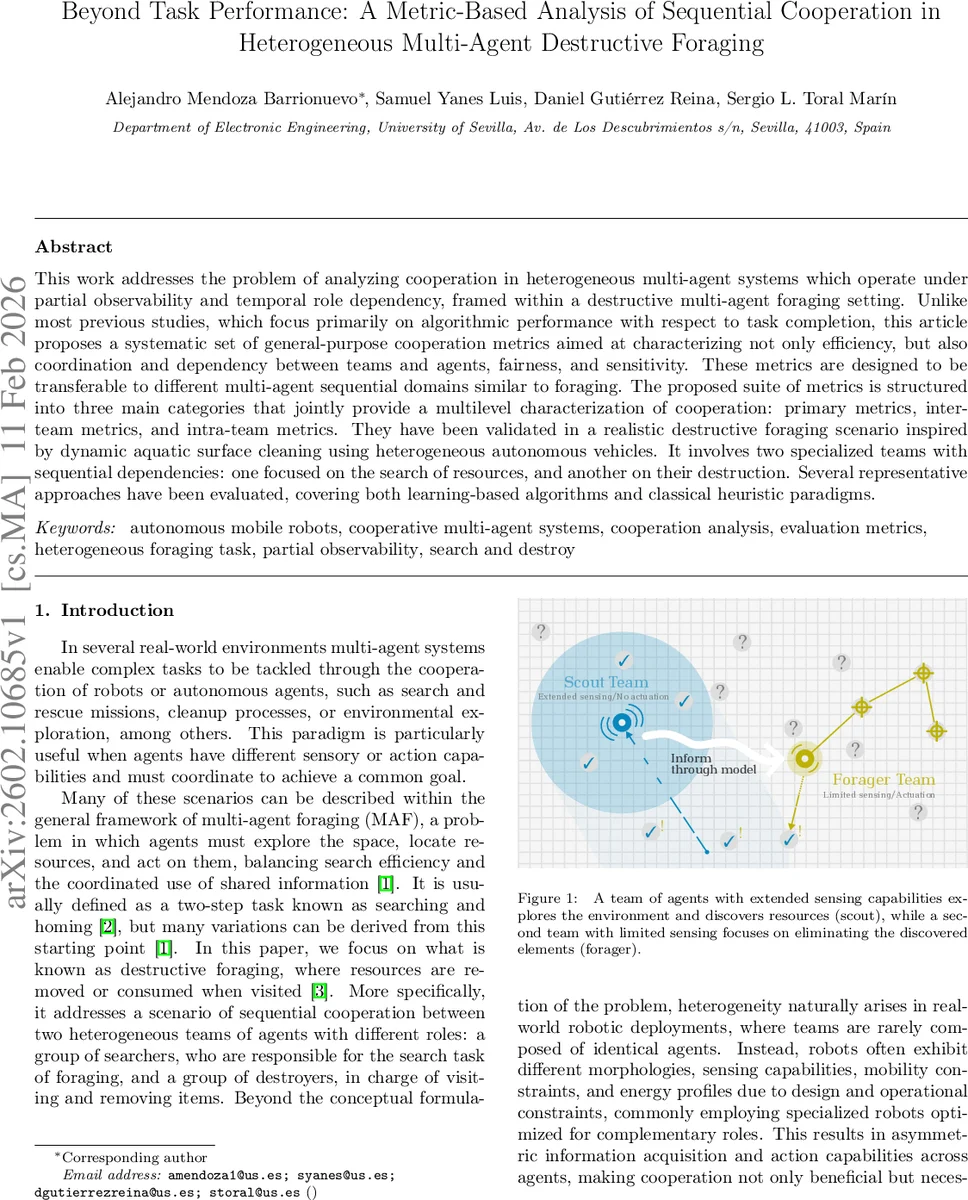

This work addresses the problem of analyzing cooperation in heterogeneous multi-agent systems which operate under partial observability and temporal role dependency, framed within a destructive multi-agent foraging setting. Unlike most previous studies, which focus primarily on algorithmic performance with respect to task completion, this article proposes a systematic set of general-purpose cooperation metrics aimed at characterizing not only efficiency, but also coordination and dependency between teams and agents, fairness, and sensitivity. These metrics are designed to be transferable to different multi-agent sequential domains similar to foraging. The proposed suite of metrics is structured into three main categories that jointly provide a multilevel characterization of cooperation: primary metrics, inter-team metrics, and intra-team metrics. They have been validated in a realistic destructive foraging scenario inspired by dynamic aquatic surface cleaning using heterogeneous autonomous vehicles. It involves two specialized teams with sequential dependencies: one focused on the search of resources, and another on their destruction. Several representative approaches have been evaluated, covering both learning-based algorithms and classical heuristic paradigms.

💡 Research Summary

**

The paper tackles a gap in the evaluation of heterogeneous multi‑agent systems that operate under partial observability and temporal role dependencies, specifically within a destructive foraging context. While most prior work on multi‑agent foraging (MAF) focuses on task‑completion metrics such as total resources collected or mission‑completion time, this study proposes a comprehensive, domain‑agnostic metric suite that captures multiple dimensions of cooperation: efficiency, coordination, fairness, redundancy, and robustness.

The authors organize the metrics into three hierarchical layers. Primary metrics assess basic performance of individual agents and the whole team, including removal efficiency (resources removed per unit distance), model accuracy (how well the shared map reflects the true waste distribution), and energy consumption. Inter‑team metrics quantify the interaction between the two specialized teams—scouts (searchers) and foragers (destroyers). These include a Dependency Index that measures how much the foragers’ actions rely on scout‑provided information, Information Latency (average delay from discovery to dissemination), and Collaboration Strength (the proportion of total reward attributable to joint actions). Intra‑team metrics evaluate internal dynamics such as Fairness Coefficient (energy‑to‑contribution ratio across agents), Redundancy Ratio (extent of duplicated exploration or removal), and Robustness Score (performance degradation when a subset of agents fails). All metrics are formally defined, normalized to a common 0‑1 scale, and designed to be comparable across different algorithms and domains.

To validate the framework, the authors construct a realistic scenario inspired by autonomous surface vehicle (ASV) cleanup of floating plastic waste. The environment is modeled as an H × W grid graph where each node may contain multiple waste items that drift over time due to wind and currents. Two heterogeneous teams operate: fast, lightweight scouts with a wide sensing radius that can move two nodes per step and continuously update a shared waste map; slower, heavier foragers with limited sensing that can remove all items at a visited node. Both teams have a finite number of moves reflecting energy constraints, and their cooperation is sequential: scouts first discover and broadcast waste locations, then foragers plan routes to eliminate the waste.

Three representative approaches are evaluated: (1) a centralized reinforcement‑learning (RL) policy that jointly controls both teams, (2) a distributed heuristic combining Lévy‑walk exploration for scouts with stigmergic (virtual pheromone) path planning for foragers, and (3) a rule‑based role‑specific strategy where scouts prioritize unexplored high‑uncertainty regions and foragers always head to the most recently reported waste.

When judged solely by traditional performance (total waste removed), the centralized RL method outperforms the others, achieving the highest removal efficiency and model accuracy. However, the richer metric suite reveals nuanced trade‑offs. The RL approach exhibits a high Dependency Index and longer Information Latency, indicating strong reliance on scout information; consequently, when a portion of scouts fails, the foragers’ behavior degrades sharply. The heuristic method, while slightly lower in raw removal, shows low redundancy, modest fairness, and superior robustness: its Dependency Index is modest, and the system gracefully handles scout failures with only a 5 % performance drop. The rule‑based method scores best on fairness and redundancy, distributing workload evenly among agents and avoiding excessive duplicate exploration.

Sensitivity analyses explore how variations in sensor range and movement speed affect the cooperation metrics. Reducing scout sensor range by 20 % raises the Dependency Index by 0.15 and cuts removal efficiency by roughly 8 %. Robustness tests where 30 % of scouts are disabled demonstrate that algorithms with lower inter‑team dependency (heuristic and rule‑based) maintain near‑optimal performance, whereas the centralized RL suffers an 18 % drop.

The authors argue that these multidimensional insights are essential for real‑world deployments where energy limits, sensor failures, and dynamic environments are the norm. By exposing the trade‑offs between efficiency, fairness, and robustness, the metric suite enables system designers to tailor algorithms to mission priorities, incorporate cooperation‑aware reward functions in learning, or perform systematic algorithm selection.

In conclusion, the paper delivers a novel, extensible framework for quantifying cooperation in heterogeneous multi‑agent foraging tasks. It demonstrates the framework’s practical utility through a realistic aquatic cleanup case study and shows that focusing solely on task‑completion metrics can mask critical cooperation deficiencies. Future work is outlined to integrate these metrics directly into learning objectives, automate algorithm tuning based on metric feedback, and extend the approach to other sequential cooperation domains such as disaster response, precision agriculture, and planetary exploration.

Comments & Academic Discussion

Loading comments...

Leave a Comment