ReSPEC: A Framework for Online Multispectral Sensor Reconfiguration in Dynamic Environments

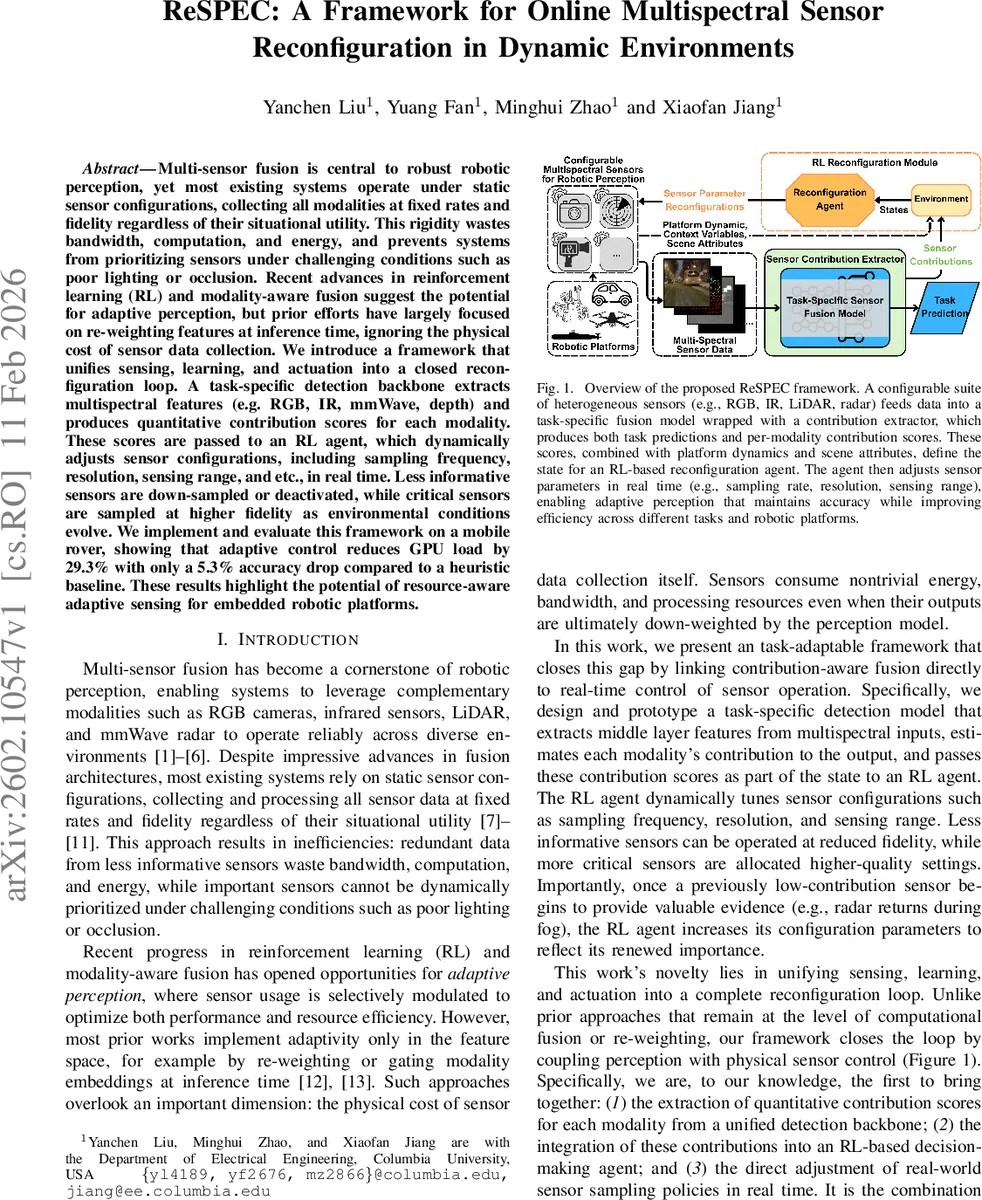

Multi-sensor fusion is central to robust robotic perception, yet most existing systems operate under static sensor configurations, collecting all modalities at fixed rates and fidelity regardless of their situational utility. This rigidity wastes bandwidth, computation, and energy, and prevents systems from prioritizing sensors under challenging conditions such as poor lighting or occlusion. Recent advances in reinforcement learning (RL) and modality-aware fusion suggest the potential for adaptive perception, but prior efforts have largely focused on re-weighting features at inference time, ignoring the physical cost of sensor data collection. We introduce a framework that unifies sensing, learning, and actuation into a closed reconfiguration loop. A task-specific detection backbone extracts multispectral features (e.g., RGB, IR, mmWave, depth) and produces quantitative contribution scores for each modality. These scores are passed to an RL agent, which dynamically adjusts sensor configurations, such as sampling frequency, resolution, and sensing range, in real time. Less informative sensors are down-sampled or deactivated, while critical sensors are sampled at higher fidelity as environmental conditions evolve. We implement and evaluate this framework on a mobile rover, showing that adaptive control reduces GPU load by 29.3% with only a 5.3% accuracy drop compared to a heuristic baseline. These results highlight the potential of resource-aware adaptive sensing for embedded robotic platforms.

💡 Research Summary

The paper introduces ReSPEC, a framework that tightly couples multispectral perception with real‑time sensor configuration control in dynamic robotic environments. Prior work has focused on adaptively weighting sensor modalities at the feature level but ignores the physical costs of acquiring sensor data (bandwidth, power, computational load). ReSPEC addresses this gap with a closed loop: a detection backbone processes RGB, infrared (IR), millimeter‑wave (mmWave) radar, and depth inputs; a contribution extractor quantifies each modality’s utility for the current detection task; and a reinforcement‑learning (RL) agent uses these utility scores, together with platform dynamics and scene attributes, to adjust sensor parameters such as sampling frequency, resolution, and radar operating mode.

The detection backbone is built on a lightweight YOLOv8‑style architecture. RGB is processed by an independent CNN, while IR, mmWave, and depth are first projected onto the RGB image plane using calibrated extrinsics and then jointly processed by a shared CNN. Mid‑level features from each modality are concatenated and fused before the detection head produces bounding boxes and confidence scores. Modality contribution is estimated via a gradient‑based attribution method: for each detected object, gradients are back‑propagated to the input channels, aggregated over the bounding‑box region, and summed across objects to yield scene‑level contribution scores. These scores serve as part of the RL state.
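The aggregation step of this attribution scheme can be sketched as follows. This is a minimal illustration, not the paper's implementation: it assumes the per-object gradient maps have already been obtained by back-propagation, and the names `box_saliency` and `scene_contributions`, the use of absolute gradient values, and the final normalization to a sum of 1 are all assumptions made for the example.

```python
from typing import Dict, List, Tuple

Box = Tuple[int, int, int, int]   # (x0, y0, x1, y1) in pixels, x1/y1 exclusive
GradMap = List[List[float]]       # rows of per-pixel input gradients
Detection = Tuple[Box, Dict[str, GradMap]]  # box plus one gradient map per modality


def box_saliency(grad: GradMap, box: Box) -> float:
    """Sum absolute gradient values inside the bounding box (assumed aggregation)."""
    x0, y0, x1, y1 = box
    return sum(abs(v) for row in grad[y0:y1] for v in row[x0:x1])


def scene_contributions(detections: List[Detection]) -> Dict[str, float]:
    """Sum per-object saliency for each modality, then normalize to sum to 1."""
    totals: Dict[str, float] = {}
    for box, grads in detections:
        for modality, grad in grads.items():
            totals[modality] = totals.get(modality, 0.0) + box_saliency(grad, box)
    norm = sum(totals.values())
    if norm == 0.0:
        # No gradient signal at all: report zero contribution everywhere.
        return {m: 0.0 for m in totals}
    return {m: t / norm for m, t in totals.items()}


# Hypothetical 2x2 gradient maps for two detected objects.
detections = [
    ((0, 0, 2, 2), {"rgb": [[1.0, -1.0], [0.0, 0.0]], "ir": [[0.0, 0.0], [0.0, 2.0]]}),
    ((0, 0, 1, 1), {"rgb": [[1.0, 0.0], [0.0, 0.0]], "ir": [[0.0, 0.0], [0.0, 5.0]]}),
]
scores = scene_contributions(detections)
```

In this toy input the second object's IR gradient lies outside its box, so it adds nothing to the IR total. Normalizing the scores is one possible way to make them comparable across scenes before they enter the RL state.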

The RL agent employs tabular Q‑learning for computational efficiency. The state vector comprises platform velocity, object density, lighting/clutter indicators, and the modality contribution scores. The discrete action space includes per‑sensor frame‑rate choices (1–30 Hz), resolution options (e.g., 1280×720, 960×540, 640×360 for RGB; 160×120, 320×240 for IR), and for mmWave a binary mode selection between “range‑prioritized” and “velocity‑prioritized” configurations. The reward function balances detection utility (high contribution) against resource consumption (higher frame rates and resolutions incur larger penalties). Consequently, the agent learns to allocate high‑fidelity settings to sensors that are currently informative while throttling or minimally sampling less useful modalities.
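A minimal sketch of such an agent is shown below, using the standard tabular Q‑learning update Q(s, a) ← Q(s, a) + α [r + γ max Q(s′, a′) − Q(s, a)]. The summary does not give the exact reward formula, so the `reward` function here, its `cost_weight` parameter, the single-sensor frame-rate action set, and the string state labels are all hypothetical choices for illustration.

```python
from collections import defaultdict
from typing import Hashable, Sequence


def reward(contribution: float, fps: int, max_fps: int = 30,
           cost_weight: float = 0.5) -> float:
    """Assumed reward: utility scales with fidelity, minus a resource penalty."""
    fidelity = fps / max_fps
    return contribution * fidelity - cost_weight * fidelity


class QTable:
    """Tabular Q-learning over hashable (discretized) states and discrete actions."""

    def __init__(self, actions: Sequence[int], alpha: float = 0.1, gamma: float = 0.9):
        self.actions = list(actions)
        self.alpha = alpha            # learning rate
        self.gamma = gamma            # discount factor
        self.q = defaultdict(float)   # maps (state, action) to a value, default 0.0

    def best_action(self, state: Hashable) -> int:
        return max(self.actions, key=lambda a: self.q[(state, a)])

    def update(self, state: Hashable, action: int, r: float, next_state: Hashable) -> None:
        best_next = max(self.q[(next_state, a)] for a in self.actions)
        td_target = r + self.gamma * best_next
        self.q[(state, action)] += self.alpha * (td_target - self.q[(state, action)])


# Hypothetical frame-rate choices (Hz) for a single sensor.
agent = QTable(actions=[1, 10, 30], alpha=0.5, gamma=0.0)
for fps in agent.actions:
    # "fog": the sensor is informative (contribution 0.8), so fidelity pays off.
    agent.update("fog", fps, reward(0.8, fps), "fog")
    # "clear": the sensor adds little (contribution 0.2), so cost dominates.
    agent.update("clear", fps, reward(0.2, fps), "clear")
```

Under these assumed numbers the greedy action is the highest frame rate when the sensor's contribution exceeds the cost weight and the lowest frame rate otherwise, which mirrors the throttling behavior described above. A full agent would also need an exploration strategy (for example, epsilon-greedy) and a state tuple that includes velocity, object density, and scene indicators.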

Experiments were conducted on a mobile rover (named SPEC) equipped with the four sensors. The system was evaluated across varied lighting, weather (including fog), and clutter conditions. Compared with a heuristic baseline that statically configures all sensors at high fidelity, ReSPEC achieved a 29.3% reduction in GPU load while incurring only a 5.3% drop in mean average precision (mAP). Notably, under foggy conditions the agent automatically increased the mmWave radar’s frame rate and selected the “range‑prioritized” mode, compensating for the loss of visual information and preserving overall detection performance.

Key contributions are: (1) a practical method for extracting per‑modality contribution scores directly from a detection network, (2) integration of these scores into an RL‑based sensor‑reconfiguration policy, and (3) a real‑world demonstration that adaptive sensor control can substantially lower sensing and computational costs without severely compromising perception accuracy. Limitations include the use of discrete Q‑learning, which restricts the granularity of parameter tuning, and reliance on gradient‑based attribution that may be noisy if the detection backbone is not well‑trained. Future work could explore continuous‑action RL algorithms (e.g., PPO, DDPG), uncertainty‑aware contribution estimation, and joint optimization across multiple robotic tasks such as SLAM and navigation. Overall, ReSPEC showcases the promise of resource‑aware adaptive sensing for embedded robotic platforms operating in unpredictable environments.
