RealHD: A High-Quality Dataset for Robust Detection of State-of-the-Art AI-Generated Images

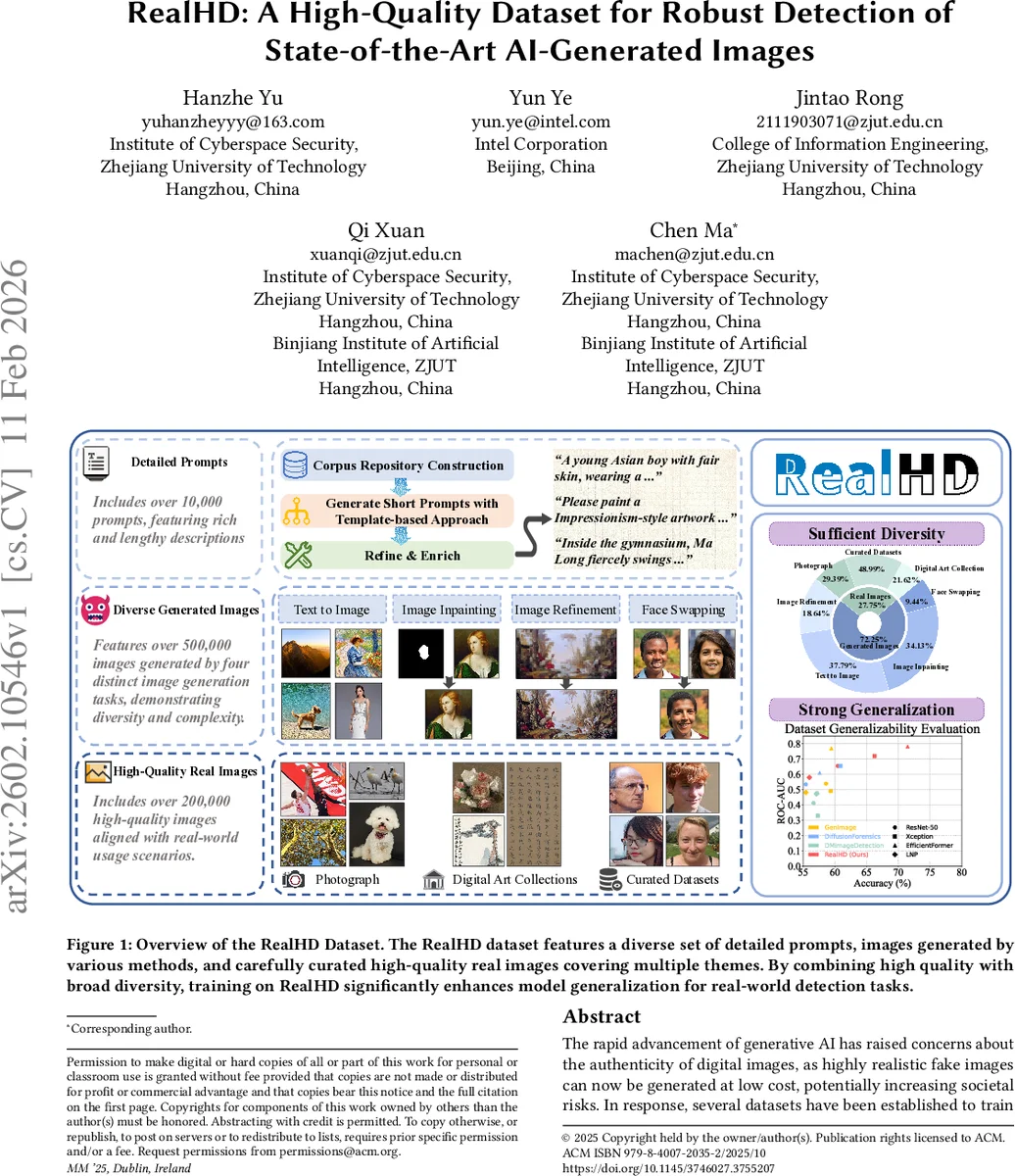

The rapid advancement of generative AI has raised concerns about the authenticity of digital images, as highly realistic fake images can now be generated at low cost, potentially increasing societal risks. In response, several datasets have been established to train detection models aimed at distinguishing AI-generated images from real ones. However, existing datasets suffer from limited generalization, low image quality, overly simple prompts, and insufficient image diversity. To address these limitations, we propose a high-quality, large-scale dataset comprising over 730,000 images across multiple categories, including both real and AI-generated images. The generated images are synthesized via state-of-the-art methods, including text-to-image generation (guided by over 10,000 carefully designed prompts), image inpainting, image refinement, and face swapping. Each generated image is annotated with its generation method and category. Inpainting images further include binary masks to indicate inpainted regions, providing rich metadata for analysis. Compared to existing datasets, detection models trained on our dataset demonstrate superior generalization capabilities. Our dataset not only serves as a strong benchmark for evaluating detection methods but also contributes to advancing the robustness of AI-generated image detection techniques. Building upon this, we propose a lightweight detection method based on image noise entropy, which transforms the original image into an entropy tensor of Non-Local Means (NLM) noise before classification. Extensive experiments demonstrate that models trained on our dataset achieve strong generalization, and our method delivers competitive performance, establishing a solid baseline for future research. The dataset and source code are publicly available at https://real-hd.github.io.

💡 Research Summary

RealHD introduces a comprehensive, high‑quality dataset designed to advance the detection of AI‑generated images across a wide range of generation techniques. The authors first motivate the work by noting that recent diffusion models such as Stable Diffusion and FLUX enable anyone to produce photorealistic images at negligible cost, raising concerns about misinformation, forgery, and copyright infringement. Existing detection datasets either focus narrowly on facial forgeries, contain only text‑to‑image samples, or suffer from low resolution and simplistic prompts, which limits the ability of detection models to generalize to real‑world scenarios.

To address these gaps, RealHD assembles over 730,000 images, of which roughly 200,000 are authentic high‑resolution photographs, artworks, news shots, and portraits. All real images are stored losslessly (PNG) or as high‑quality JPEG (quality ≥ 90) and have been filtered to ensure a minimum resolution; more than 73 % of them exceed one million pixels, matching the quality of images commonly shared on social media. The synthetic portion comprises 531,430 AI‑generated images produced through four distinct generation tasks: (1) text‑to‑image (T2I), (2) image inpainting (INP), (3) image refinement (REF), and (4) face swapping (FS). Each generated image is accompanied by fine‑grained annotations indicating the generation method, the semantic category, and, for inpainting, a binary mask that marks the altered region. The dataset therefore supports both image‑level classification and region‑level analysis.
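
The annotation scheme described above can be pictured as one record per generated image. The sketch below is only an illustration, not the dataset's actual schema: the class name `GeneratedImageRecord`, the field names, and the helper `supports_region_level` are hypothetical. Only the four task labels (T2I, INP, REF, FS) and the rule that inpainted images carry a binary mask are taken from the summary.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class GenerationTask(Enum):
    """The four generation tasks named in the summary."""
    T2I = "text-to-image"
    INP = "image inpainting"
    REF = "image refinement"
    FS = "face swapping"


@dataclass(frozen=True)
class GeneratedImageRecord:
    """Hypothetical per-image annotation; all field names are assumptions."""
    image_path: str
    task: GenerationTask
    category: str                      # semantic category, e.g. "portrait"
    mask_path: Optional[str] = None    # binary mask, expected for INP only

    def __post_init__(self) -> None:
        # Inpainted images are described as shipping with a binary mask.
        if self.task is GenerationTask.INP and self.mask_path is None:
            raise ValueError("inpainting records need a mask_path")


def supports_region_level(record: GeneratedImageRecord) -> bool:
    """Region-level analysis needs a mask; image-level labels always exist."""
    return record.mask_path is not None
```

Under these assumptions, a T2I record supports image-level classification only, while an INP record with a mask also supports region-level evaluation.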

A key contribution is the construction of more than 10 000 detailed prompts. The authors define 15 sub‑corpora (e.g., ethnic group, facial expression, artist, landscape type) and use large language models (GPT‑4o, DeepSeek‑V2, Qwen‑72B) together with human expert filtering to generate rich, multi‑sentence prompts. These prompts are then combined with template structures to produce diverse textual descriptions that guide the diffusion models, ensuring that generated images contain complex semantics, varied lighting, and realistic compositions—far beyond the “a photo of a cat” style prompts typical of earlier datasets.
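
The idea of combining sub-corpora with template structures can be sketched as a Cartesian product over slot values. This is a toy illustration under stated assumptions: the three sub-corpora, their entries, the template text, and the function `build_prompts` are all hypothetical, and the real pipeline additionally relies on LLM generation and human filtering that are not modeled here.

```python
from itertools import product

# Hypothetical miniature sub-corpora (the summary mentions 15 real ones).
SUB_CORPORA = {
    "subject": ["an elderly fisherman", "a street violinist"],
    "expression": ["smiling faintly", "deep in concentration"],
    "landscape": ["a fog-covered harbor at dawn", "a rain-soaked alley at night"],
}

# Hypothetical template; slot names must match the keys of SUB_CORPORA.
TEMPLATE = (
    "A photograph of {subject}, {expression}, standing in {landscape}. "
    "The light is soft and directional."
)


def build_prompts(template, corpora):
    """Fill the template with every combination of sub-corpus entries."""
    keys = sorted(corpora)
    prompts = []
    for combo in product(*(corpora[k] for k in keys)):
        prompts.append(template.format(**dict(zip(keys, combo))))
    return prompts


if __name__ == "__main__":
    for prompt in build_prompts(TEMPLATE, SUB_CORPORA)[:2]:
        print(prompt)
```

Even this small example shows how a handful of entries per slot multiplies into many distinct prompts: three slots with two entries each already yield eight.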

The dataset’s impact is demonstrated through extensive experiments. Detection models such as Xception, ConvNeXt, and ViT‑Base are trained on RealHD and compared against models trained on prior datasets (GenImage, DiffusionForensics, etc.). When evaluated on the challenging Chameleon benchmark, where all images pass a human Turing test, RealHD‑trained models achieve up to 99 % accuracy and an AUC of 0.9996, whereas the same architectures trained on GenImage reach only 85 % accuracy and an AUC of 0.9515. This gain of 10–15 percentage points in accuracy and 0.03–0.05 in AUC illustrates the strong generalization afforded by RealHD’s high‑quality, multi‑modal content.
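
For readers less familiar with the two metrics, the sketch below shows how accuracy and AUC are commonly computed from detector scores, and how the quoted differences follow from the reported figures. The function names, the 0.5 threshold, and the toy labels and scores are assumptions for illustration; they are not the paper's evaluation code or data.

```python
def accuracy(labels, scores, threshold=0.5):
    """Fraction of images whose thresholded score matches the label (1 = fake)."""
    preds = [1 if s >= threshold else 0 for s in scores]
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


def roc_auc(labels, scores):
    """AUC as the probability that a fake image outscores a real one.

    Ties count as half a win (the Mann-Whitney formulation).
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative sample")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Differences implied by the figures quoted in the summary.
accuracy_gain_points = 99 - 85            # percentage points
auc_gain = round(0.9996 - 0.9515, 4)      # absolute AUC difference

if __name__ == "__main__":
    toy_labels = [1, 1, 1, 0, 0, 0]               # made-up example data
    toy_scores = [0.9, 0.8, 0.4, 0.6, 0.2, 0.1]
    print(accuracy(toy_labels, toy_scores), roc_auc(toy_labels, toy_scores))
    print(accuracy_gain_points, auc_gain)
```

Unlike accuracy, AUC does not depend on the choice of threshold, which is one reason both numbers are usually reported together.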

Beyond the dataset, the paper proposes a lightweight detection method based on noise entropy. The approach first applies a Non‑Local Means (NLM) filter to each image to isolate residual noise, then computes a per‑pixel Shannon entropy map, which is treated as an “entropy tensor.” This tensor is fed into a compact classifier (either a shallow 1‑D CNN or a two‑layer MLP). Because the entropy map captures subtle statistical irregularities left by diffusion samplers, the method outperforms standard RGB‑based classifiers while using fewer than one‑tenth of the parameters and achieving inference times under 1 ms per image on an NVIDIA GTX 1080 Ti. When combined with Xception, the entropy‑based model improves accuracy by 15.9 % and AUC by 5 % relative to the baseline.
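
The pipeline described above (NLM denoising, residual extraction, local Shannon entropy) can be sketched in a few lines. This is a deliberately naive pure-Python version for small grayscale images, not the authors' implementation: the patch size, search radius, filter strength `h`, entropy window, and histogram bin width are all assumptions, since the summary does not state them, and the classifier stage is omitted.

```python
import math
from collections import Counter


def nlm_denoise(img, patch=1, search=2, h=10.0):
    """Naive Non-Local Means on a grayscale image given as a list of rows."""
    rows, cols = len(img), len(img[0])

    def px(y, x):
        # Replicate the border so patches near the edge stay well defined.
        return img[min(max(y, 0), rows - 1)][min(max(x, 0), cols - 1)]

    def patch_distance(y1, x1, y2, x2):
        # Mean squared difference between the patches around two pixels.
        total = 0.0
        for dy in range(-patch, patch + 1):
            for dx in range(-patch, patch + 1):
                diff = px(y1 + dy, x1 + dx) - px(y2 + dy, x2 + dx)
                total += diff * diff
        return total / (2 * patch + 1) ** 2

    out = [[0.0] * cols for _ in range(rows)]
    for y in range(rows):
        for x in range(cols):
            weight_sum = acc = 0.0
            for yy in range(max(0, y - search), min(rows, y + search + 1)):
                for xx in range(max(0, x - search), min(cols, x + search + 1)):
                    # Similar patches get weights close to 1, dissimilar ones near 0.
                    w = math.exp(-patch_distance(y, x, yy, xx) / (h * h))
                    weight_sum += w
                    acc += w * img[yy][xx]
            out[y][x] = acc / weight_sum
    return out


def entropy_map(residual, window=2, bin_width=1.0):
    """Shannon entropy (bits) of the quantized residual in a local window."""
    rows, cols = len(residual), len(residual[0])
    ent = [[0.0] * cols for _ in range(rows)]
    for y in range(rows):
        for x in range(cols):
            counts = Counter()
            for yy in range(max(0, y - window), min(rows, y + window + 1)):
                for xx in range(max(0, x - window), min(cols, x + window + 1)):
                    counts[round(residual[yy][xx] / bin_width)] += 1
            n = sum(counts.values())
            ent[y][x] = sum(-(c / n) * math.log2(c / n) for c in counts.values())
    return ent


def noise_entropy(img):
    """Image -> NLM residual noise -> per-pixel entropy map."""
    denoised = nlm_denoise(img)
    residual = [[p - d for p, d in zip(row_i, row_d)]
                for row_i, row_d in zip(img, denoised)]
    return entropy_map(residual)
```

A perfectly flat image has zero residual and therefore zero entropy everywhere, while textured or noisy regions produce higher values. For real images a vectorized NLM from an image-processing library would be far faster; the loops here are kept explicit only to make each step visible.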

The authors acknowledge limitations: the dataset currently reflects generation pipelines up to Stable Diffusion v3.5 and FLUX, so future releases must incorporate additional generators (e.g., Stable Diffusion XL, Midjourney V6). Inpainting masks are primarily rectangular, which limits evaluation of more complex free‑form edits. Moreover, while the real‑image collection spans art, portrait, landscape, animal, and news domains, specialized fields such as medical imaging or satellite imagery remain absent. Ethical considerations around large‑scale distribution of synthetic media are also discussed, with a call for clear licensing and responsible use guidelines.

In summary, RealHD delivers a uniquely valuable resource: a large‑scale, high‑resolution, multi‑modal dataset with rich annotations and realistic prompts, enabling detection models to learn robust, transferable cues for AI‑generated imagery. The accompanying noise‑entropy baseline provides a practical, low‑cost detection strategy that can serve as a reference point for future research. By openly releasing the data and code, the authors aim to catalyze further advances in AI‑generated image forensics, helping the community build more trustworthy visual media ecosystems.
