Co-jump: Cooperative Jumping with Quadrupedal Robots via Multi-Agent Reinforcement Learning

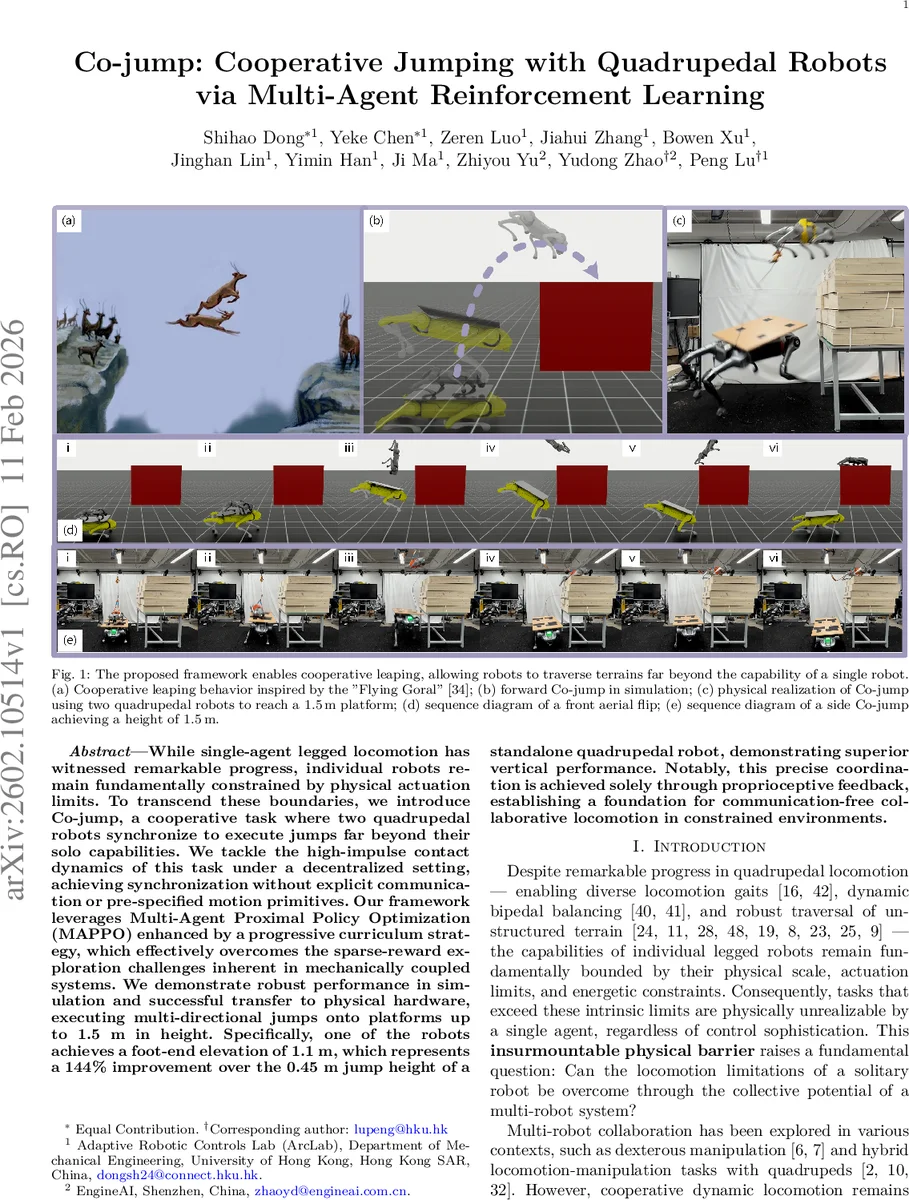

While single-agent legged locomotion has witnessed remarkable progress, individual robots remain fundamentally constrained by physical actuation limits. To transcend these boundaries, we introduce Co-jump, a cooperative task where two quadrupedal robots synchronize to execute jumps far beyond their solo capabilities. We tackle the high-impulse contact dynamics of this task under a decentralized setting, achieving synchronization without explicit communication or pre-specified motion primitives. Our framework leverages Multi-Agent Proximal Policy Optimization (MAPPO) enhanced by a progressive curriculum strategy, which effectively overcomes the sparse-reward exploration challenges inherent in mechanically coupled systems. We demonstrate robust performance in simulation and successful transfer to physical hardware, executing multi-directional jumps onto platforms up to 1.5 m in height. Specifically, one of the robots achieves a foot-end elevation of 1.1 m, which represents a 144% improvement over the 0.45 m jump height of a standalone quadrupedal robot, demonstrating superior vertical performance. Notably, this precise coordination is achieved solely through proprioceptive feedback, establishing a foundation for communication-free collaborative locomotion in constrained environments.

💡 Research Summary

The paper introduces “Co‑jump,” a novel cooperative locomotion task in which two quadrupedal robots work together to achieve jumps that far exceed the capabilities of a single robot. One robot (the “launcher”) actively pushes against the ground to generate a large impulse, effectively acting as a springboard for the second robot (the “jumper”), which uses this impulse to reach platforms otherwise inaccessible. The key challenge is to achieve precise spatiotemporal synchronization using only proprioceptive sensing, without any external perception or inter‑robot communication.

To address this, the authors formulate the problem as a Decentralized Partially Observable Markov Decision Process (Dec‑POMDP) and adopt a Multi‑Agent Proximal Policy Optimization (MAPPO) algorithm within a Centralized Training with Decentralized Execution (CTDE) framework. Each robot receives a local observation vector consisting of joint positions and velocities, base angular velocity, gravity direction, the previous action, and the 3‑D position and size of the shared target platform. A centralized critic receives the concatenated observations of both agents plus their relative base positions, enabling effective credit assignment during training, while the actors operate solely on local data at runtime.

The reward function is a weighted sum of three components: (1) a task reward that evaluates individual jump performance (height, landing accuracy, foot‑contact management), (2) a regularization reward that penalizes physically implausible actions (excessive torques, joint limit violations, abrupt motions), and (3) a cooperation reward shared by both agents that encourages synchronized aerial phases, penalizes the jumper’s fall, and grants bonuses for successful joint landings. This design mitigates the credit‑assignment problem inherent in asymmetric multi‑robot tasks.

Training proceeds through a four‑stage curriculum: (a) Gravity Curriculum – initially reduces gravity to let the agents discover a viable jumping posture; (b) Target Curriculum – gradually raises the platform height to increase difficulty; (c) Initialization Curriculum – randomizes starting poses and positions to improve robustness; (d) Delay Curriculum – introduces artificial timing offsets between the robots to force the policies to handle non‑stationary interactions. Each stage builds on the policy learned in the previous stage, allowing the agents to explore the sparse‑reward space efficiently.

Simulation experiments demonstrate successful forward, lateral, and flip Co‑jumps. The jumper achieves a foot‑end elevation of 1.1 m, a 144 % improvement over the 0.45 m height attainable by a solo robot. The learned policies are transferred directly to physical hardware without additional fine‑tuning. Using domain randomization of dynamics parameters and a PD controller for low‑level joint actuation, the two real robots execute cooperative jumps onto a 1.5 m high platform, confirming the sim‑to‑real transfer.

Key contributions include: (1) a communication‑free MARL framework that achieves high‑dynamic, tightly coupled cooperative jumping using only proprioception; (2) a progressive curriculum that enables emergence of complex coordination from scratch, eliminating reliance on expert demonstrations or motion‑capture data; (3) a reward architecture that resolves multi‑agent credit assignment in asymmetric physical interactions; and (4) real‑world validation of the approach on hardware, achieving record‑breaking vertical performance.

Limitations are noted: experiments are limited to a pair of robots with fixed asymmetric roles, raising questions about scalability to larger teams or role‑flexible scenarios. The approach also depends on precise actuation and low sensor noise; performance under more challenging terrains or external disturbances remains to be explored. Future work could extend the method to multi‑robot swarms, incorporate limited low‑bandwidth communication, and investigate adaptive role assignment to broaden applicability of communication‑free collaborative locomotion.

Comments & Academic Discussion

Loading comments...

Leave a Comment