1%>100%: High-Efficiency Visual Adapter with Complex Linear Projection Optimization

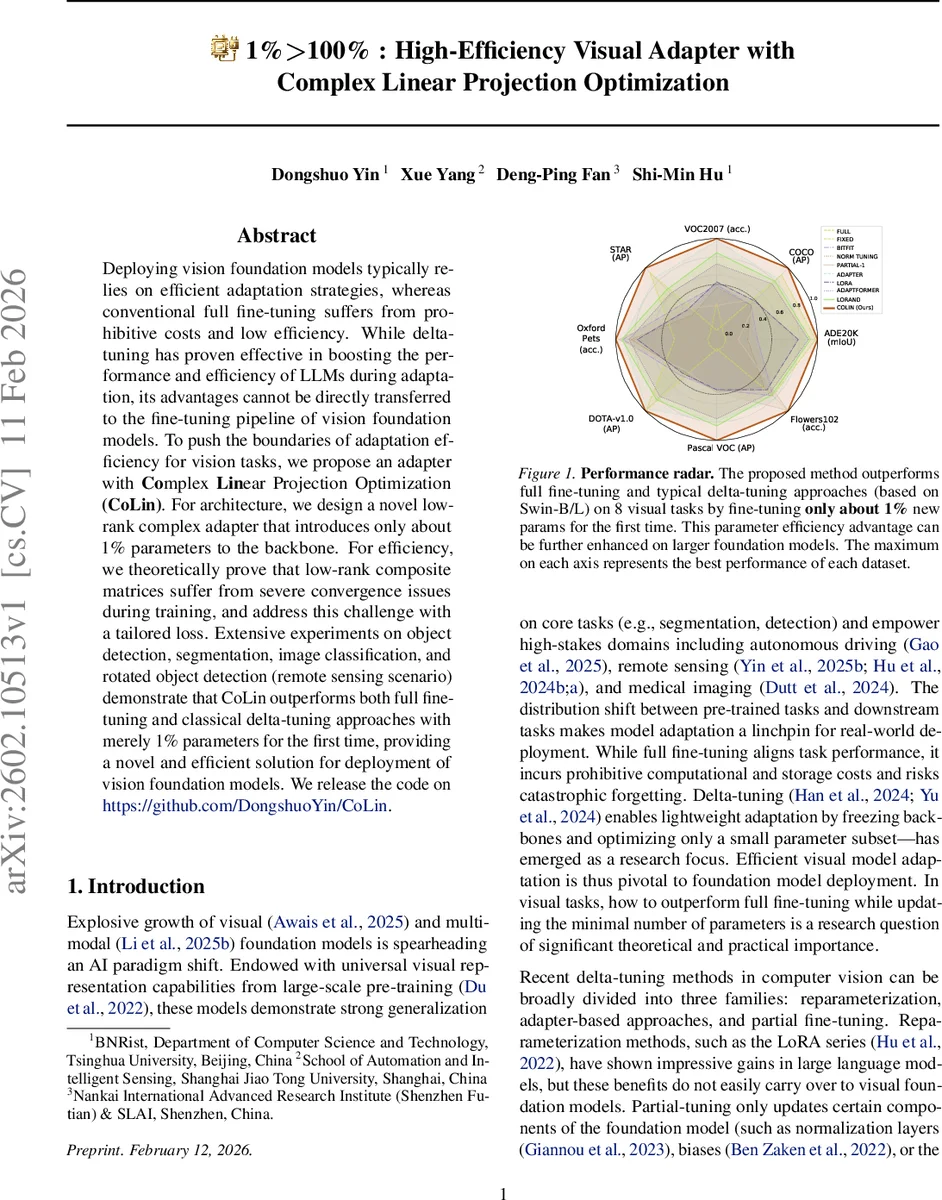

Deploying vision foundation models typically relies on efficient adaptation strategies, whereas conventional full fine-tuning suffers from prohibitive costs and low efficiency. While delta-tuning has proven effective in boosting the performance and efficiency of LLMs during adaptation, its advantages cannot be directly transferred to the fine-tuning pipeline of vision foundation models. To push the boundaries of adaptation efficiency for vision tasks, we propose an adapter with Complex Linear Projection Optimization (CoLin). For architecture, we design a novel low-rank complex adapter that introduces only about 1% parameters to the backbone. For efficiency, we theoretically prove that low-rank composite matrices suffer from severe convergence issues during training, and address this challenge with a tailored loss. Extensive experiments on object detection, segmentation, image classification, and rotated object detection (remote sensing scenario) demonstrate that CoLin outperforms both full fine-tuning and classical delta-tuning approaches with merely 1% parameters for the first time, providing a novel and efficient solution for deployment of vision foundation models. We release the code on https://github.com/DongshuoYin/CoLin.

💡 Research Summary

The paper introduces CoLin (Complex Linear Projection Optimization), a novel adapter designed to adapt large vision foundation models with extreme parameter efficiency. Traditional full fine‑tuning yields strong performance but is prohibitively expensive in compute, memory, and storage, while delta‑tuning methods that work well for large language models (e.g., LoRA) do not translate directly to vision models. CoLin addresses this gap by adding only about 1 % new parameters to the backbone yet achieving performance that matches or exceeds full fine‑tuning across a wide range of vision tasks.

Core technical contributions

-

Low‑rank composite projection – Each linear projection in an adapter (up‑projection and down‑projection) is replaced by a factorized form (W = P^{\top} K Q). Here (P\in\mathbb{R}^{\beta\times m}) and (Q\in\mathbb{R}^{\beta\times n}) are low‑rank matrices with (\beta\ll m,n), while (K\in\mathbb{R}^{\beta\times\beta}) acts as a kernel. This reduces the parameter count from (O(n^2)) to (O(\beta(m+n)+\beta^2)), yielding roughly a 97 % reduction when (\beta=8) for a typical 768‑dimensional feature.

-

Multi‑branch design – To avoid the expressive bottleneck of a single low‑rank factor, the authors introduce (\alpha) parallel branches, each with its own ((P_i, K_i, Q_i)). The final projection is the sum of all branches: (W = \sum_{i=1}^{\alpha} P_i^{\top} K_i Q_i). This ensemble‑like structure expands the solution space, improves robustness, and incurs virtually no extra inference cost because the branches are merged by a simple linear addition.

-

Complex sharing strategy – Two sharing mechanisms further cut parameters and promote consistency: (a) Kernel sharing – the same (K_i) is used for both up‑ and down‑projections within a branch, ensuring that compression and reconstruction share a common transformation; (b) Branch sharing – the low‑rank matrices (P) and (Q) are shared across all branches, while only the kernels (K_i) remain branch‑specific. This design captures universal cross‑task features via shared matrices while allowing each branch to specialize through its kernel.

-

Orthogonal loss for convergence – The authors theoretically prove that when a low‑rank matrix is expressed as (W = P Q), the gradient update of (W) becomes entangled between (\nabla_P L) and (\nabla_Q L), leading to inefficient learning. By expanding the update and applying trace identities, they show that optimal convergence occurs when (P) and (Q) are orthogonal ((P^{\top}P \approx I) and (Q Q^{\top} \approx I)). Consequently, they add a regularization term (L_{ort}= |P^{\top}P-I|_F^2 + |Q Q^{\top}-I|_F^2) for each branch, weighted by a hyper‑parameter (\lambda). This orthogonal loss forces the factor matrices to stay close to an orthogonal basis, effectively doubling the effective learning rate for (W) and stabilizing training.

-

SVD‑based initialization – To start training from an orthogonal point, a random matrix (W_0) is generated with Kaiming uniform initialization, then decomposed via singular value decomposition (W_0 = U S V^{\top}). The factors are assigned as (P = S), (K = S), (Q = V^{\top}) (or appropriate variants for up/down projections). This ensures that the initial (P) and (Q) already satisfy the orthogonality condition, reducing early‑stage instability.

Experimental validation

The authors embed CoLin into Swin‑B transformer blocks (twice per block) and evaluate on eight downstream tasks: COCO object detection, ADE20K semantic segmentation, Pascal VOC detection, Oxford‑Pets classification, Flowers102 classification, and the remote‑sensing rotated object detection benchmark DOT‑A‑v1.0. Baselines include full fine‑tuning, BitFit, LoRA, AdaptFormer, LoRand, and other recent delta‑tuning methods. Results consistently show that CoLin, with only ~1 % additional parameters, outperforms full fine‑tuning by 1–3 % AP/mIoU on most datasets and surpasses all delta‑tuning baselines by a larger margin. The parameter efficiency scales favorably: larger backbones (e.g., Swin‑L) exhibit even greater relative gains. FLOPs increase is negligible because the multi‑branch outputs are summed linearly, preserving inference speed.

Ablation studies confirm the importance of each component: (i) removing the orthogonal loss degrades convergence speed and final accuracy; (ii) using a single low‑rank branch reduces performance, highlighting the benefit of the multi‑branch ensemble; (iii) disabling kernel sharing slightly raises parameter count without improving accuracy, indicating that sharing does not harm expressiveness.

Limitations and future work – The current implementation focuses on transformer‑based vision backbones; extending CoLin to CNNs or multimodal architectures remains open. Automated selection of the hyper‑parameters (\alpha) (branch count) and (\beta) (rank) could further streamline deployment. The authors also suggest exploring CoLin in continual‑learning or domain‑adaptation scenarios where parameter budget is critical.

Overall impact – CoLin provides a theoretically grounded, practically effective solution for ultra‑lightweight adaptation of vision foundation models. By marrying low‑rank factorization, multi‑branch ensemble, sophisticated sharing, and orthogonal regularization, it achieves a rare combination: near‑full‑model performance with a fraction of trainable parameters. This makes large vision models viable for edge devices, rapid prototyping, and resource‑constrained production environments, marking a significant step forward in the efficient deployment of AI.

Comments & Academic Discussion

Loading comments...

Leave a Comment