Analyzing Fairness of Neural Network Prediction via Counterfactual Dataset Generation

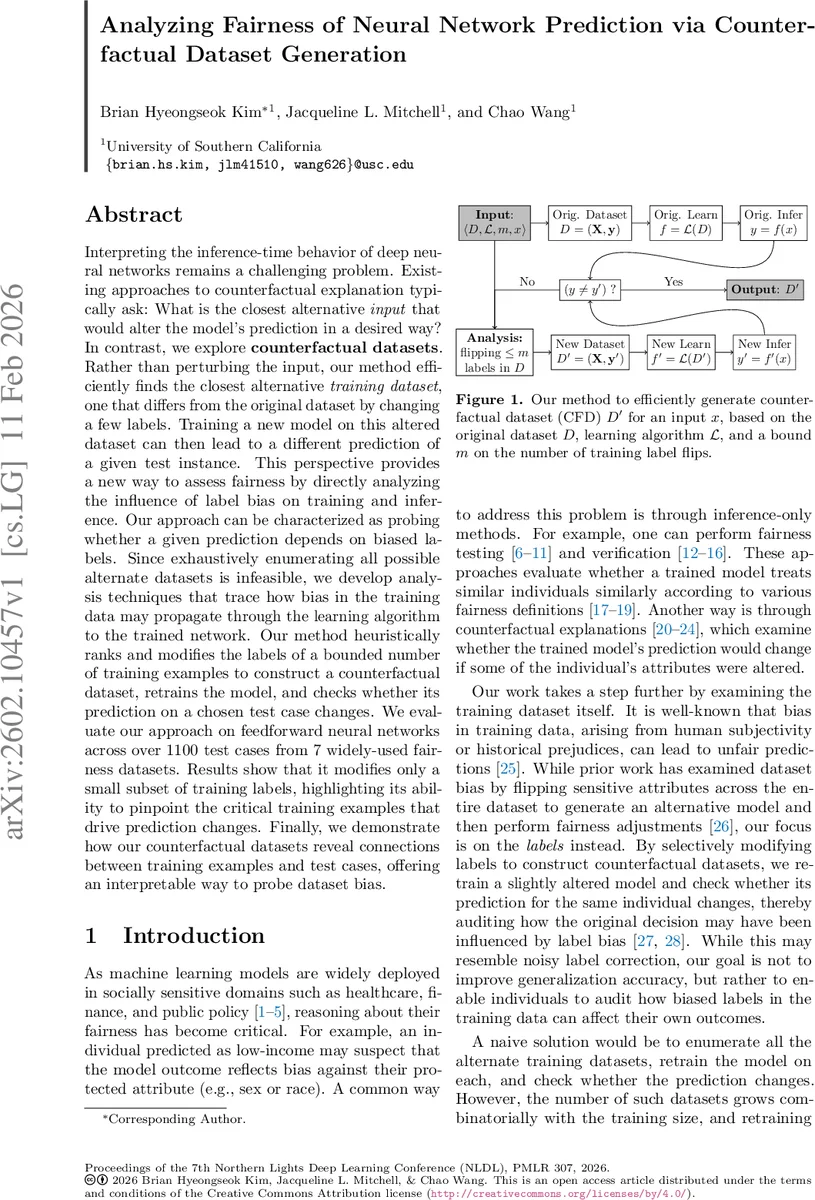

Interpreting the inference-time behavior of deep neural networks remains a challenging problem. Existing approaches to counterfactual explanation typically ask: What is the closest alternative input that would alter the model’s prediction in a desired way? In contrast, we explore counterfactual datasets. Rather than perturbing the input, our method efficiently finds the closest alternative training dataset, one that differs from the original dataset by changing a few labels. Training a new model on this altered dataset can then lead to a different prediction of a given test instance. This perspective provides a new way to assess fairness by directly analyzing the influence of label bias on training and inference. Our approach can be characterized as probing whether a given prediction depends on biased labels. Since exhaustively enumerating all possible alternate datasets is infeasible, we develop analysis techniques that trace how bias in the training data may propagate through the learning algorithm to the trained network. Our method heuristically ranks and modifies the labels of a bounded number of training examples to construct a counterfactual dataset, retrains the model, and checks whether its prediction on a chosen test case changes. We evaluate our approach on feedforward neural networks across over 1100 test cases from 7 widely-used fairness datasets. Results show that it modifies only a small subset of training labels, highlighting its ability to pinpoint the critical training examples that drive prediction changes. Finally, we demonstrate how our counterfactual datasets reveal connections between training examples and test cases, offering an interpretable way to probe dataset bias.

💡 Research Summary

The paper tackles the problem of understanding how biased training labels affect the predictions of deep neural networks, especially in socially sensitive applications where fairness is paramount. While most existing counterfactual explanation methods focus on minimally altering an input instance to change a model’s output, this work shifts the focus to the training data itself. It asks: “If we were to change a small number of labels in the training set, could the model’s prediction for a particular test case change?” The answer to this question provides a direct audit of whether a specific prediction depends on potentially biased labels.

To avoid the combinatorial explosion of enumerating all possible label‑flipping scenarios (the number of alternative datasets grows as the binomial coefficient (n choose ≤ m)), the authors propose an efficient two‑stage analysis that guides the search for a Counterfactual Dataset (CFD). A CFD is defined as a training set D′ that differs from the original D by at most m label flips and, after retraining the same learning algorithm L, yields a different prediction for the chosen test input x (i.e., f′(x) ≠ f(x)).

Stage 1 – Linear‑Regression Surrogate:

Neural networks with ReLU activations are piecewise linear. For a fixed test input x, the set of active neurons defines a linear region where the network behaves as y = θᵀx. By extracting the effective weight matrix θ (product of weight matrices masked by the binary activation vectors of each layer), the authors obtain a local linear model. Linear regression has a closed‑form solution θ = (XᵀX)⁻¹Xᵀy, allowing the computation of how each training label contributes to θ. This yields a ranking of training examples by the estimated impact of flipping their labels on the local linear predictor.

Stage 2 – Neuron‑Activation Similarity:

Beyond the training‑stage impact, the authors consider inference‑stage proximity. They encode the activation pattern of each training example and the test input as binary vectors (active = 1, inactive = 0). A similarity measure (e.g., Hamming distance or cosine similarity) quantifies how “close” a training example is to the test input in activation space. Intuitively, examples with similar activation patterns lie in the same linear region and are more likely to influence the test prediction when their labels are altered.

The two rankings are combined—either by weighted averaging or by intersecting top‑k lists—to produce a small candidate set of training points whose labels are most promising to flip. The algorithm then iteratively flips up to m of these labels, retrains the model (using the same optimizer, e.g., Adam), and checks whether the prediction on x changes. If a change occurs, the corresponding D′ is returned as a CFD; otherwise, the next candidate combination is tried.

Experimental Evaluation:

The method is implemented in PyTorch and evaluated on seven widely used fairness benchmark datasets (Adult, COMPAS, German Credit, Law School, etc.), covering over 1,100 individual test cases. Key findings include:

- Efficiency: For small datasets the algorithm quickly recovers ground‑truth CFDs; for larger datasets it outperforms baselines (including influence‑function based methods) by producing CFDs for a larger fraction of test cases with negligible overhead.

- Sparsity: Typically only 5–10 label flips (far less than 0.1 % of the training set) are sufficient to change a prediction, demonstrating that a tiny subset of biased labels can drive unfair outcomes.

- Effectiveness vs. Influence Functions: State‑of‑the‑art influence‑function techniques are both slower (requiring Hessian‑vector products) and less successful at finding label flips that alter predictions for the tested neural network architectures.

- Robustness Across Decision Boundaries: The approach works for test inputs both near and far from the learned decision boundary, indicating that the combined training‑ and inference‑stage analysis captures a broad range of causal pathways.

Contributions:

- Introduction of the Counterfactual Dataset concept for auditing label‑bias‑induced unfairness at the individual prediction level.

- Development of two complementary analytical tools—linear‑regression surrogate for the training phase and neuron‑activation similarity for the inference phase—that together guide an efficient search for minimal label modifications.

- Empirical validation on multiple fairness datasets, showing that the method can pinpoint critical training examples and provide interpretable evidence of bias.

Limitations and Future Work:

The current study focuses on feed‑forward multilayer perceptrons with ReLU activations. Extending the piecewise‑linear approximation to architectures with non‑ReLU activations, convolutional layers, or attention mechanisms remains an open challenge. Moreover, while flipping labels serves as a diagnostic tool, it does not directly correct real‑world data collection processes. Future research directions include adapting the technique to more complex networks, integrating causal models of label generation, and exploring how CFDs can be leveraged for automated fairness remediation or legal evidence generation.

In summary, the paper presents a novel, computationally tractable framework for generating counterfactual training datasets that expose the dependence of neural‑network predictions on potentially biased labels, offering a valuable addition to the toolbox of fairness auditing and interpretability.

Comments & Academic Discussion

Loading comments...

Leave a Comment