LocoVLM: Grounding Vision and Language for Adapting Versatile Legged Locomotion Policies

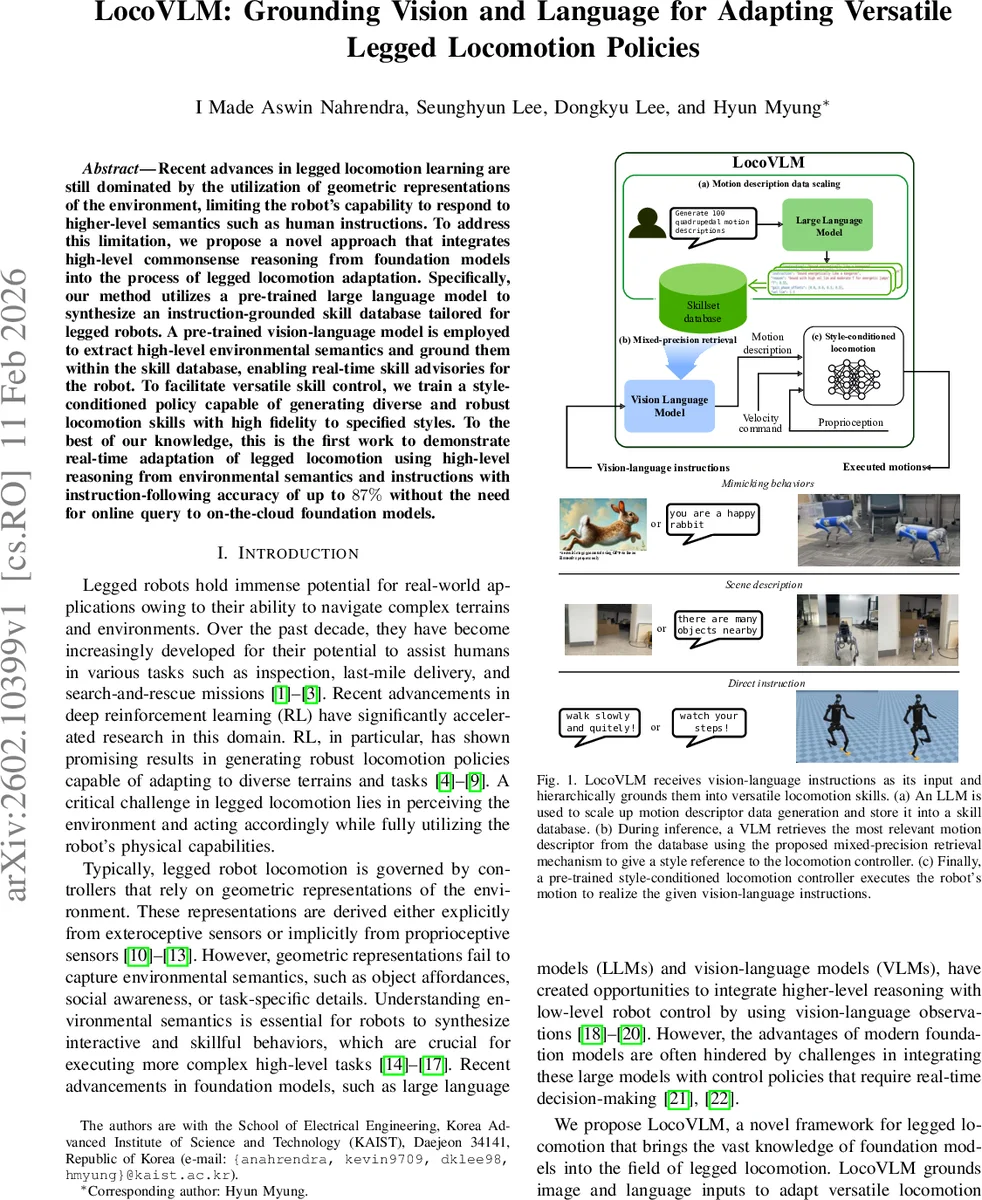

Recent advances in legged locomotion learning are still dominated by the utilization of geometric representations of the environment, limiting the robot’s capability to respond to higher-level semantics such as human instructions. To address this limitation, we propose a novel approach that integrates high-level commonsense reasoning from foundation models into the process of legged locomotion adaptation. Specifically, our method utilizes a pre-trained large language model to synthesize an instruction-grounded skill database tailored for legged robots. A pre-trained vision-language model is employed to extract high-level environmental semantics and ground them within the skill database, enabling real-time skill advisories for the robot. To facilitate versatile skill control, we train a style-conditioned policy capable of generating diverse and robust locomotion skills with high fidelity to specified styles. To the best of our knowledge, this is the first work to demonstrate real-time adaptation of legged locomotion using high-level reasoning from environmental semantics and instructions with instruction-following accuracy of up to 87% without the need for online query to on-the-cloud foundation models.

💡 Research Summary

LocoVLM presents a novel framework that bridges high‑level human instructions and low‑level quadrupedal locomotion through the integration of foundation models—specifically a large language model (LLM) and a vision‑language model (VLM). The authors first employ GPT‑4o to automatically generate a “skill database” consisting of 100 diverse instruction‑motion descriptor pairs. Instructions are categorized into scene descriptions, mimic‑behavior prompts, and direct commands; each is paired with a motion descriptor that encodes three parameters: gait‑cycle period T, per‑leg phase offsets ψ ∈ ℝ⁴, and a maximum forward velocity vₓ. This data‑generation pipeline uses a two‑stage prompting strategy: an initial prompt creates concise textual instructions, and a meta‑prompt expands each instruction into a detailed technical description, reasoning, and the corresponding motion descriptor.

During deployment, the robot receives a user query either as text or an image. A pre‑trained BLIP‑2 model extracts embeddings from the query and from every instruction in the database. To achieve both scalability and accuracy, the authors introduce a mixed‑precision retrieval algorithm. First, cosine similarity on the BLIP‑2 text embeddings quickly selects the top‑K candidate instructions. Then, the more expensive image‑text matching (ITM) head evaluates these candidates, and the final score is the sum of the coarse and fine similarity scores. An additional trick renders the textual query as an image (white background with black text) so that the VLM can exploit its stronger image‑text alignment capabilities, further improving retrieval precision. The retrieved instruction’s motion descriptor is then fed to the locomotion controller.

The locomotion controller itself is a style‑conditioned policy built on a blind velocity‑conditioned RL framework. It augments the observation with a gait‑phase encoding vector ϕ(t) =

Comments & Academic Discussion

Loading comments...

Leave a Comment