Agent World Model: Infinity Synthetic Environments for Agentic Reinforcement Learning

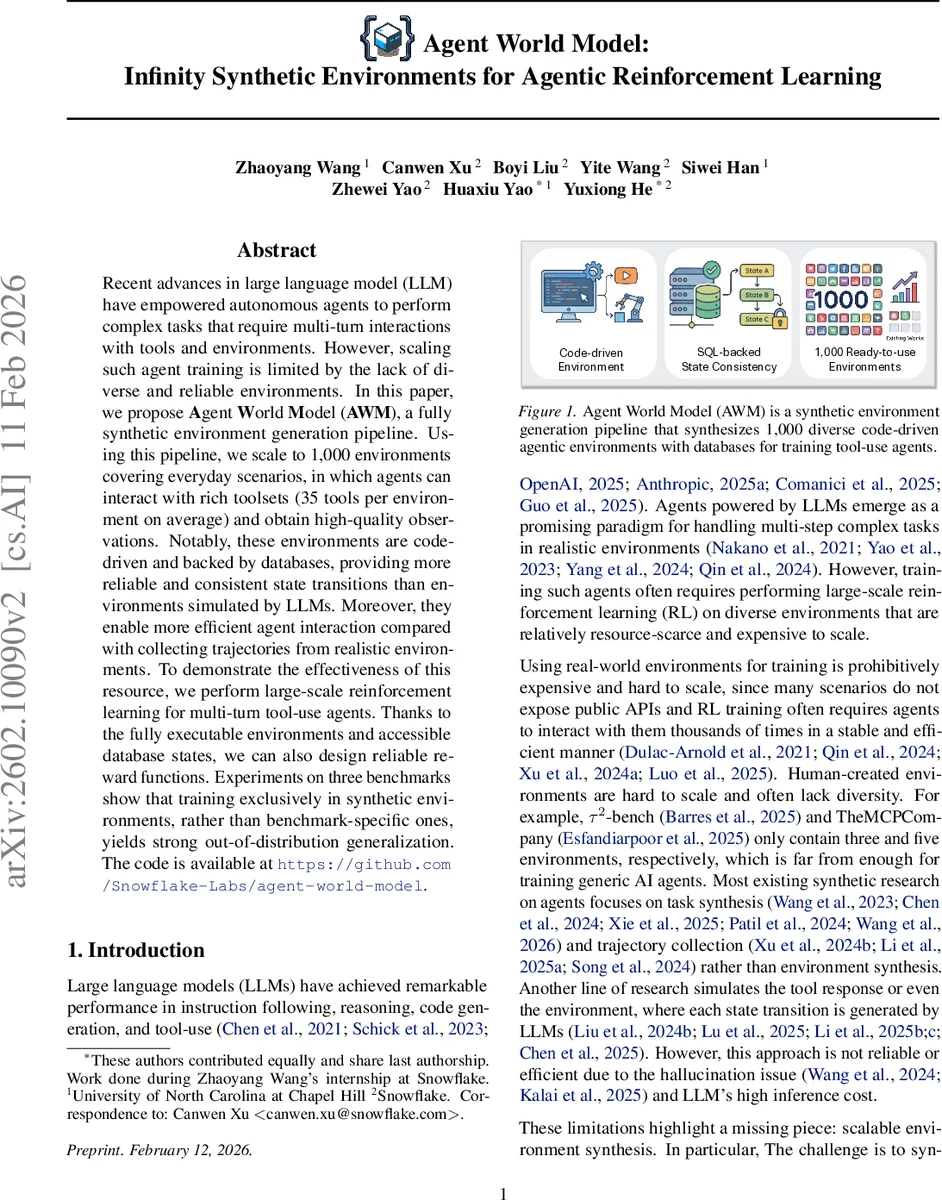

Recent advances in large language models (LLMs) have empowered autonomous agents to perform complex tasks that require multi-turn interactions with tools and environments. However, scaling such agent training is limited by the lack of diverse and reliable environments. In this paper, we propose Agent World Model (AWM), a fully synthetic environment generation pipeline. Using this pipeline, we scale to 1,000 environments covering everyday scenarios, in which agents can interact with rich toolsets (35 tools per environment on average) and obtain high-quality observations. Notably, these environments are code-driven and backed by databases, providing more reliable and consistent state transitions than environments simulated by LLMs. Moreover, they enable more efficient agent interaction than collecting trajectories from realistic environments. To demonstrate the effectiveness of this resource, we perform large-scale reinforcement learning for multi-turn tool-use agents. Thanks to the fully executable environments and accessible database states, we can also design reliable reward functions. Experiments on three benchmarks show that training exclusively in synthetic environments, rather than benchmark-specific ones, yields strong out-of-distribution generalization. The code is available at https://github.com/Snowflake-Labs/agent-world-model.

💡 Research Summary

Agent World Model (AWM) addresses a critical bottleneck in scaling tool‑use agents: the scarcity of diverse, reliable, and efficiently interactable environments for reinforcement learning (RL). The authors introduce a fully automated pipeline that synthesizes 1,000 executable environments from high‑level scenario descriptions. Each environment is built around a SQLite database that defines the state space, a set of Python‑implemented tools exposed via the Model Context Protocol (MCP), and verification code that produces robust reward signals.
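
To make this structure concrete, here is a minimal sketch of one such environment using only Python's standard-library `sqlite3` module: the database holds the state, plain functions act as tools, and each tool result is serialized as the agent's observation. The e-commerce schema, the seed rows, and the names `make_env`, `list_products`, `place_order`, `TOOLS`, and `call_tool` are hypothetical illustrations, not AWM's code, and the MCP server layer that would expose these functions to the agent is omitted.

```python
import json
import sqlite3
from typing import Any, Callable

# Hypothetical schema for an e-commerce-style scenario. AWM generates a
# schema per scenario with an LLM; this one is hand-written for illustration.
SCHEMA = """
CREATE TABLE products (
    id INTEGER PRIMARY KEY,
    name TEXT NOT NULL,
    stock INTEGER NOT NULL CHECK (stock >= 0)
);
CREATE TABLE orders (
    id INTEGER PRIMARY KEY,
    product_id INTEGER NOT NULL REFERENCES products(id),
    quantity INTEGER NOT NULL CHECK (quantity > 0)
);
"""
SEED_PRODUCTS = [(1, "notebook", 5), (2, "pen", 20)]


def make_env() -> sqlite3.Connection:
    """Create an in-memory database that holds the whole environment state."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany("INSERT INTO products VALUES (?, ?, ?)", SEED_PRODUCTS)
    conn.commit()
    return conn


def list_products(conn: sqlite3.Connection) -> list[dict[str, Any]]:
    """Read-only tool: return every product with its current stock."""
    rows = conn.execute("SELECT id, name, stock FROM products ORDER BY id")
    return [{"id": i, "name": n, "stock": s} for i, n, s in rows]


def place_order(conn: sqlite3.Connection, product_id: int, quantity: int) -> dict[str, Any]:
    """Write tool: decrement stock and record an order in one transaction."""
    if quantity <= 0:
        return {"error": "quantity must be positive"}
    row = conn.execute("SELECT stock FROM products WHERE id = ?", (product_id,)).fetchone()
    if row is None:
        return {"error": f"product {product_id} not found"}
    if row[0] < quantity:
        return {"error": "insufficient stock"}
    with conn:  # commits both statements together, or rolls back on error
        conn.execute("UPDATE products SET stock = stock - ? WHERE id = ?", (quantity, product_id))
        cur = conn.execute(
            "INSERT INTO orders (product_id, quantity) VALUES (?, ?)", (product_id, quantity)
        )
    return {"order_id": cur.lastrowid}


# The tool registry plays the role of the action space.
TOOLS: dict[str, Callable[..., Any]] = {
    "list_products": list_products,
    "place_order": place_order,
}


def call_tool(conn: sqlite3.Connection, name: str, arguments: dict[str, Any]) -> str:
    """Dispatch one tool call; the returned JSON string is the observation."""
    tool = TOOLS.get(name)
    if tool is None:
        return json.dumps({"error": f"unknown tool: {name}"})
    return json.dumps(tool(conn, **arguments))
```

Because every observation comes from ordinary SQL against the database, replaying the same tool calls from the same initial state reproduces the same observations and the same final state.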

The pipeline consists of five stages: (1) scenario generation, where a large language model (LLM) creates 1,000 distinct application‑style scenarios (e‑commerce, finance, social media, etc.) filtered for CRUD relevance and deduplicated for diversity; (2) task generation, producing ten API‑solvable user tasks per scenario (10,000 tasks total) while avoiding UI‑only actions and assuming authentication is pre‑handled; (3) database schema and sample data synthesis, where the LLM infers required tables, columns, constraints, and populates realistic initial records that satisfy all tasks; (4) tool‑interface generation, where a two‑step process first designs a tool schema and then generates executable Python code for each endpoint, turning database operations into MCP tools that define the action and observation spaces; (5) verification and reward design, where code‑based modules compare pre‑ and post‑execution database states, and an LLM‑as‑a‑Judge combines these signals with the agent’s trajectory to emit the final reward.
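
Stage (5) compares database states before and after the agent acts. One way to sketch that comparison, assuming every table has a primary key so that rows are unique, is to snapshot each table as a set of row tuples and diff the two snapshots. The helper names `snapshot`, `diff`, and `verify_order_placed`, and the `orders` table the check inspects, are hypothetical; the LLM-as-a-Judge step is not shown.

```python
import sqlite3

# table name -> set of row tuples (assumes rows are unique, e.g. via a primary key)
Snapshot = dict[str, set[tuple]]


def snapshot(conn: sqlite3.Connection) -> Snapshot:
    """Capture every user table as a set of row tuples."""
    tables = [
        name
        for (name,) in conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' AND name NOT LIKE 'sqlite_%'"
        )
    ]
    # Table names come from sqlite_master itself, so quoting them here is safe.
    return {t: set(conn.execute(f'SELECT * FROM "{t}"')) for t in tables}


def diff(before: Snapshot, after: Snapshot) -> dict[str, dict[str, set[tuple]]]:
    """Return rows added and removed per table; unchanged tables are omitted."""
    changes = {}
    for table in before.keys() | after.keys():
        old, new = before.get(table, set()), after.get(table, set())
        if old != new:
            changes[table] = {"added": new - old, "removed": old - new}
    return changes


def verify_order_placed(changes: dict, product_id: int, quantity: int) -> bool:
    """Hypothetical task check: exactly one new order row with these values."""
    added = changes.get("orders", {}).get("added", set())
    # Row layout assumed to be (id, product_id, quantity).
    return len(added) == 1 and next(iter(added))[1:] == (product_id, quantity)
```

In this representation an UPDATE appears as one removed row plus one added row, so a check on a modified record would inspect both sides of the diff.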

A self‑correction loop is embedded in each stage: generated code is executed, any errors are fed back to the LLM, and corrected code is regenerated, so that environments become runnable without human intervention. This decomposition mirrors conventional software engineering practice and promotes consistency and reproducibility.
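
The self-correction loop can be sketched as a bounded retry: execute the candidate code, capture the traceback on failure, and pass it back to the generator. Here `generate` and `fake_llm` are hypothetical stand-ins for the LLM call, and plain `exec` is used only for illustration; a real pipeline would run generated code in an isolated process or sandbox.

```python
import traceback
from typing import Callable, Optional


def self_correct(
    generate: Callable[[Optional[str]], str], max_attempts: int = 3
) -> tuple[str, int]:
    """Regenerate code until it executes cleanly or attempts run out.

    `generate` receives the previous traceback (None on the first call)
    and returns new source code. Returns the working code and the
    attempt number on which it succeeded.
    """
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        code = generate(feedback)
        try:
            # Fresh globals per attempt so failed runs leave no residue.
            exec(compile(code, "<generated>", "exec"), {})
        except Exception:
            feedback = traceback.format_exc()  # error text fed back to the generator
            continue
        return code, attempt
    raise RuntimeError(f"code still failing after {max_attempts} attempts:\n{feedback}")


# Hypothetical stand-in for the LLM: buggy first draft, fixed after feedback.
def fake_llm(feedback: Optional[str]) -> str:
    if feedback is None:
        return "stock = undefined_variable - 1"
    return "stock = 5 - 1"


code, attempts = self_correct(fake_llm)
```

Capping the number of attempts keeps a generation that never converges from stalling the pipeline; such a case surfaces as an explicit error carrying the last traceback.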

For RL training, the authors run massively parallel rollouts, with 1,024 environment instances per training step, using PPO‑style algorithms adapted for multi‑turn tool use. They evaluate on three public tool‑use benchmarks (e.g., WebShop, API‑Bench, ToolEval). Agents trained exclusively on AWM environments achieve higher out‑of‑distribution (OOD) success rates than baselines trained on the benchmarks themselves, and they transfer to real‑world API settings with less than 10% performance degradation.
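
Running many environment instances per step requires that concurrent rollouts never share mutable state. The summary does not say how AWM isolates instances; one plausible approach, assumed here, is to build a template database once and clone it for each rollout with `sqlite3.Connection.backup`. The sketch uses 8 clones and a toy `counters` table instead of 1,024 full environments, and `rollout` is a hypothetical stand-in for an agent episode.

```python
import sqlite3
from concurrent.futures import ThreadPoolExecutor


def make_template() -> sqlite3.Connection:
    """Build the initial environment state once."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE counters (id INTEGER PRIMARY KEY, value INTEGER NOT NULL)")
    conn.execute("INSERT INTO counters VALUES (1, 0)")
    conn.commit()
    return conn


def fork(template: sqlite3.Connection) -> sqlite3.Connection:
    """Copy the template into a private in-memory database for one rollout."""
    # check_same_thread=False lets a worker thread use this connection;
    # each clone is still used by only one thread at a time.
    clone = sqlite3.connect(":memory:", check_same_thread=False)
    template.backup(clone)
    return clone


def rollout(conn: sqlite3.Connection, steps: int) -> int:
    """Stand-in for an agent episode: mutate this instance's private state."""
    for _ in range(steps):
        conn.execute("UPDATE counters SET value = value + 1 WHERE id = 1")
    conn.commit()
    return conn.execute("SELECT value FROM counters WHERE id = 1").fetchone()[0]


template = make_template()
instances = [fork(template) for _ in range(8)]
with ThreadPoolExecutor(max_workers=4) as pool:
    # Instance i runs i + 1 steps; no instance sees another's writes.
    results = list(pool.map(rollout, instances, range(1, 9)))
```

Each rollout ends with a value equal to its own step count and the template stays untouched, which is the property a parallel trainer needs when it resets environments between episodes.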

Key advantages of AWM over prior LLM‑simulated environments are: (i) no hallucinated state transitions, because transitions are governed by deterministic database operations; (ii) much lower per‑step computational cost, since tool calls are ordinary Python function executions rather than costly LLM inference; (iii) scalable reward engineering through code‑based verification plus LLM judgment, which mitigates noisy signals caused by environment imperfections.
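
The pairing of code-based verification with LLM judgment can be sketched as follows: the verification result is serialized into the judge's prompt alongside the trajectory, and the judge's reply is parsed into a scalar reward. The prompt wording, the JSON verdict format, the binary 0/1 reward, and the `judge` callable are assumptions made for illustration; the summary does not specify the paper's prompt or reward scale.

```python
import json
from typing import Any, Callable


def build_judge_prompt(
    task: str, trajectory: list[dict[str, Any]], verification: dict[str, Any]
) -> str:
    """Pack the task, the agent's trajectory, and the code-based check into one prompt."""
    return (
        "Decide whether the agent completed the task.\n"
        f"Task: {task}\n"
        f"Trajectory: {json.dumps(trajectory)}\n"
        f"Database verification: {json.dumps(verification)}\n"
        'Reply with JSON only: {"verdict": "success"} or {"verdict": "failure"}.'
    )


def judge_reward(
    task: str,
    trajectory: list[dict[str, Any]],
    verification: dict[str, Any],
    judge: Callable[[str], str],  # hypothetical LLM call: prompt in, text out
) -> float:
    """Map the judge's verdict to a binary reward; unparseable replies score 0."""
    reply = judge(build_judge_prompt(task, trajectory, verification))
    try:
        verdict = json.loads(reply).get("verdict")
    except (json.JSONDecodeError, AttributeError):
        return 0.0
    return 1.0 if verdict == "success" else 0.0
```

Scoring a malformed judge reply as zero is one conservative choice: it prevents formatting failures in the judge from being rewarded.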

The paper also discusses limitations: reliance on LLMs for schema and tool design can propagate generation errors; SQLite, while lightweight, may not support complex transaction semantics or massive data volumes required by some domains. Future work includes integrating more powerful DBMSs (PostgreSQL), leveraging specialized code‑generation models for higher fidelity logic, and extending the framework to multi‑agent collaboration scenarios.

In summary, Agent World Model provides an open‑source, end‑to‑end solution for generating thousands of high‑quality, executable environments that enable large‑scale RL for tool‑use agents. By grounding environments in concrete databases, exposing a uniform MCP interface, and offering robust verification‑based rewards, AWM demonstrates that agents can learn generalizable tool‑use policies without being tied to any specific benchmark, paving the way for more scalable and reliable agentic reinforcement learning research.
