ClinAlign: Scaling Healthcare Alignment from Clinician Preference

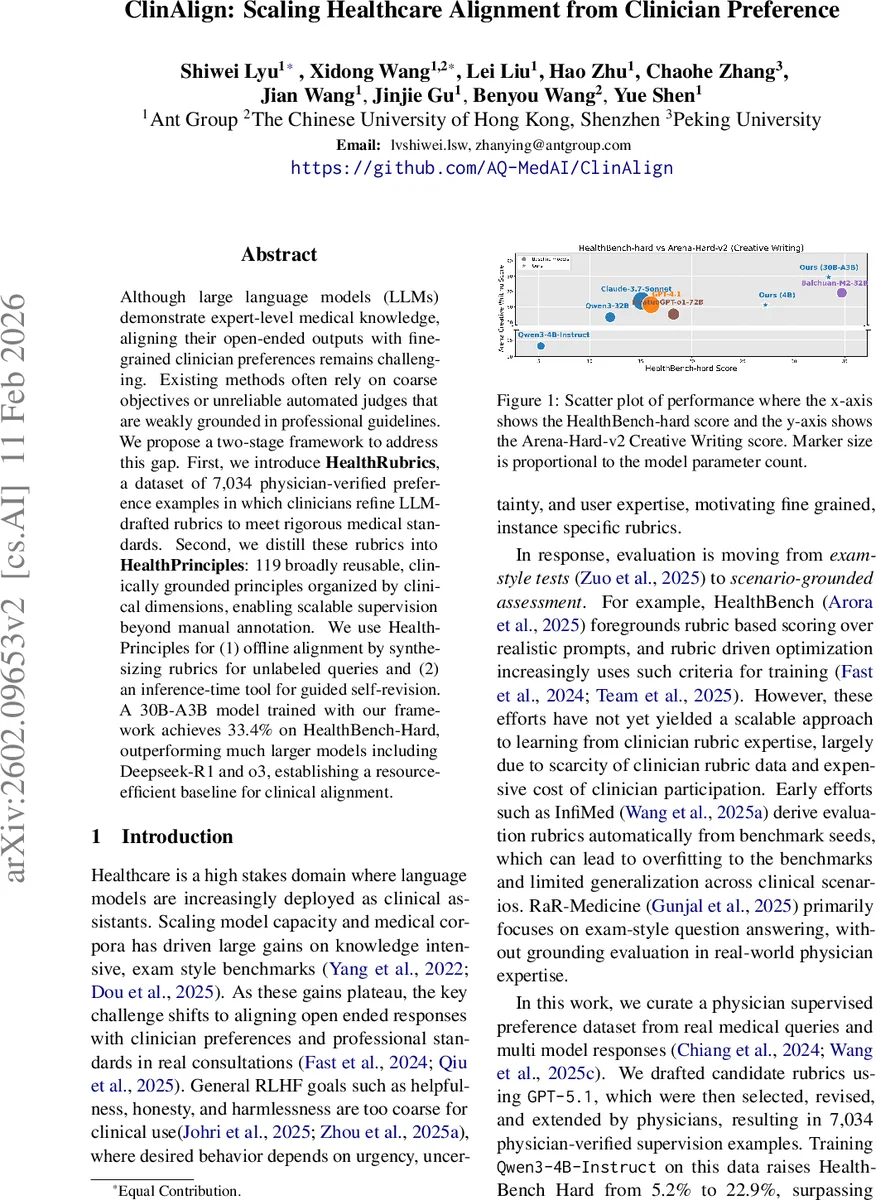

Although large language models (LLMs) demonstrate expert-level medical knowledge, aligning their open-ended outputs with fine-grained clinician preferences remains challenging. Existing methods often rely on coarse objectives or unreliable automated judges that are weakly grounded in professional guidelines. We propose a two-stage framework to address this gap. First, we introduce HealthRubrics, a dataset of 7,034 physician-verified preference examples in which clinicians refine LLM-drafted rubrics to meet rigorous medical standards. Second, we distill these rubrics into HealthPrinciples: 119 broadly reusable, clinically grounded principles organized by clinical dimensions, enabling scalable supervision beyond manual annotation. We use HealthPrinciples for (1) offline alignment by synthesizing rubrics for unlabeled queries and (2) an inference-time tool for guided self-revision. A 30B-A3B model trained with our framework achieves 33.4% on HealthBench-Hard, outperforming much larger models including Deepseek-R1 and o3, establishing a resource-efficient baseline for clinical alignment.

💡 Research Summary

ClinAlign tackles the pressing problem of aligning large language models (LLMs) with fine‑grained clinician preferences in real‑world medical consultations. While recent scaling of medical LLMs has yielded impressive knowledge‑intensive benchmark scores, these gains plateau when it comes to open‑ended, context‑sensitive interactions where factors such as urgency, uncertainty, and user expertise matter. Existing reinforcement‑learning‑from‑human‑feedback (RLHF) pipelines typically optimize coarse objectives—helpfulness, honesty, harmlessness—that are insufficient for clinical safety and efficacy.

To bridge this gap, the authors propose a two‑stage framework. The first stage, HealthRubrics, creates a high‑quality preference dataset. Starting from 103,575 real medical queries paired with multiple model responses harvested from public preference datasets (Chatbot Arena, ShareGPT, WildChat‑1M, etc.), a classifier filters out non‑medical items, leaving 7,034 medical instances. For each instance, GPT‑5.1 drafts a set of 7‑20 rubric items covering safety, factuality, uncertainty handling, completeness, and clarity. Two independent physicians then iteratively review, edit, and reach consensus on each rubric; on average 1.34 revision loops are required. The final rubrics are stored in a standardized JSON schema, enabling downstream consumption. The physician cohort includes 111 reviewers across surgery, internal medicine, and other specialties, ensuring diverse clinical perspectives.

The second stage, HealthPrinciples, abstracts recurring patterns from the rubrics into a compact, reusable library of 119 principles. The authors design a taxonomy with four dimensions: urgency (non‑emergent, conditionally emergent, emergent), uncertainty (sufficient information, reducible uncertainty, irreducible uncertainty), user expertise (non‑professional, professional), and task type (21 clusters derived from GPT‑5.1‑extracted task labels). Each rubric is mapped to one or more sub‑categories; within each sub‑category, similar rubrics are clustered and distilled into a principle after physician consensus. Principles are human‑readable statements that capture what matters for a given clinical context.

HealthPrinciples enable scalable supervision in two ways. First, for offline alignment, the authors collect an additional 16,872 medical questions from UltraMedical‑Preference, MedQuAD, and ChatDoctor. GPT‑5.1 predicts the taxonomy labels for each question, retrieves the relevant principles (average 22.9 per question), and converts them into question‑specific rubric items via a prompt. These synthetic rubrics serve as training signals for further fine‑tuning. Second, at inference time, the model can query the principle library, obtain the appropriate rubric, and perform self‑revision: the model generates an initial answer, evaluates it against the rubric, and iteratively refines the response guided by the rubric criteria.

Experimental results demonstrate the efficacy of the approach. A 30B‑parameter model (A3B architecture) fine‑tuned on the original HealthRubrics data improves its HealthBench‑Hard score from 5.2% (baseline) to 22.9%. Adding the principle‑generated synthetic data (16,872 examples) pushes performance to 33.4%, surpassing much larger commercial models such as DeepSeek‑R1 and o3, despite having fewer parameters. The same model also shows strong performance on the Arena‑Hard‑v2 creative writing benchmark, indicating that the alignment does not over‑specialize. Ablation studies confirm that naive supervised fine‑tuning (SFT) quickly saturates after one epoch and fails to generalize to held‑out questions, whereas the principle‑driven pipeline yields consistent gains across epochs. The inference‑time self‑revision further adds a modest but consistent improvement (≈2% absolute) on the test set, validating the utility of rubric guidance during generation.

The paper’s contributions are threefold: (1) the release of HealthRubrics, a physician‑validated dataset of 7,034 detailed rubrics; (2) the definition of HealthPrinciples, a reusable set of 119 clinically grounded principles that enable scalable synthetic rubric generation; (3) a demonstration that principle‑conditioned self‑revision can improve model outputs at inference time.

Limitations include potential cultural or specialty bias introduced by the physician cohort, reliance on GPT‑5.1 for initial rubric drafting and taxonomy labeling (which may propagate model errors), and the current focus on English/Chinese medical content. Future work should expand the principle library across languages and healthcare systems, incorporate multi‑turn dialogue feedback loops, and evaluate the approach in live clinical decision‑support settings to assess safety and efficacy in practice.

Comments & Academic Discussion

Loading comments...

Leave a Comment