UniComp: A Unified Evaluation of Large Language Model Compression via Pruning, Quantization and Distillation

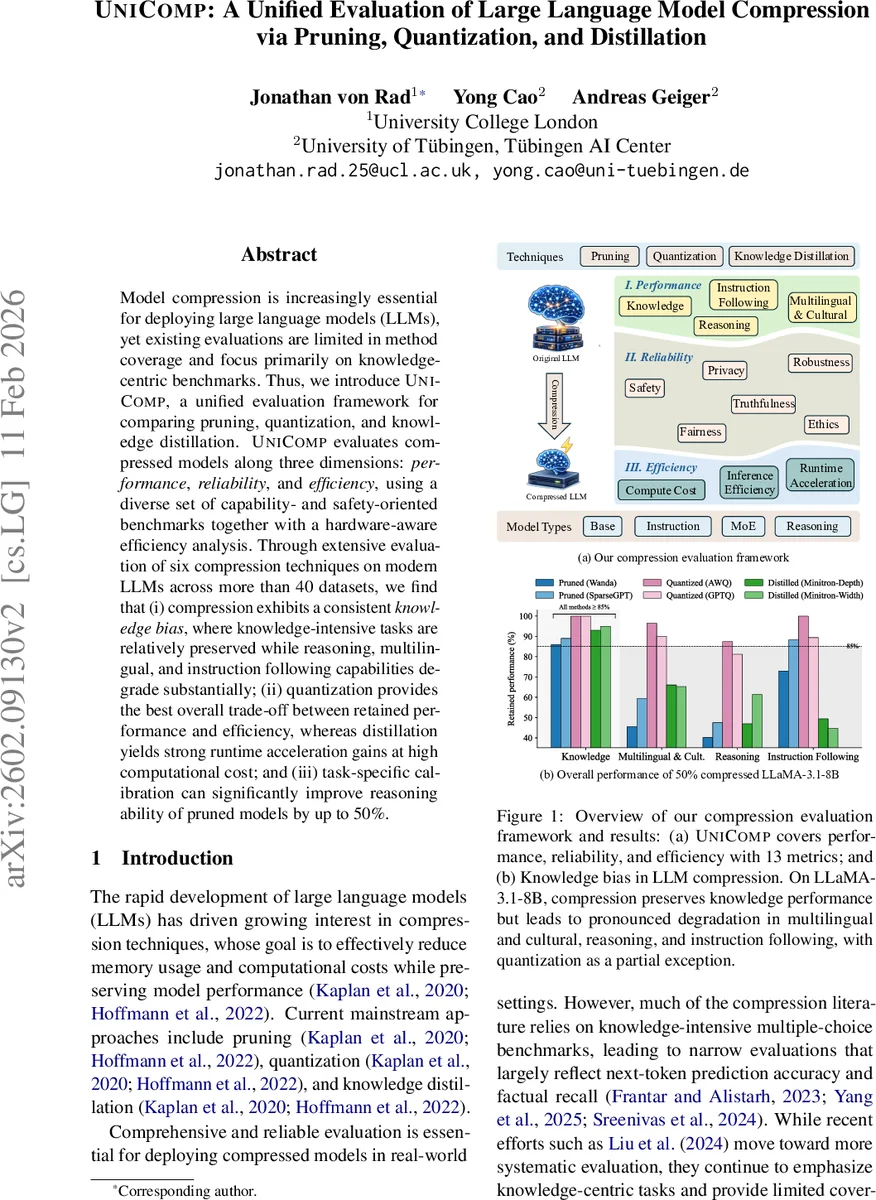

Model compression is increasingly essential for deploying large language models (LLMs), yet existing evaluations are limited in method coverage and focus primarily on knowledge-centric benchmarks. Thus, we introduce UniComp, a unified evaluation framework for comparing pruning, quantization, and knowledge distillation. UniComp evaluates compressed models along three dimensions: performance, reliability, and efficiency, using a diverse set of capability- and safety-oriented benchmarks together with a hardware-aware efficiency analysis. Through extensive evaluation of six compression techniques on modern LLMs across more than 40 datasets, we find that (i) compression exhibits a consistent knowledge bias, where knowledge-intensive tasks are relatively preserved while reasoning, multilingual, and instruction-following capabilities degrade substantially; (ii) quantization provides the best overall trade-off between retained performance and efficiency, whereas distillation yields strong runtime acceleration gains at high computational cost; and (iii) task-specific calibration can significantly improve the reasoning ability of pruned models by up to 50%.

💡 Research Summary

The paper introduces UniComp, a unified evaluation framework designed to systematically compare three major large‑language‑model (LLM) compression techniques—pruning, quantization, and knowledge distillation—across multiple dimensions that matter for real‑world deployment. Existing studies typically evaluate compression on a narrow set of knowledge‑centric multiple‑choice benchmarks, overlooking how compression affects reasoning, multilingual understanding, instruction following, and safety‑related behavior. UniComp addresses this gap by defining three evaluation axes: Performance, Reliability, and Efficiency, each quantified with a set of metrics (13 in total).

Performance is broken down into four sub‑categories: Knowledge (standard factual QA), Multilingual & Cultural Generalization (multilingual QA and bias detection), Reasoning (chain‑of‑thought benchmarks such as GSM8K, Math‑500, GPQA‑Diamond), and Instruction Following (IFBench). Scores are computed as the ratio of a compressed model’s accuracy to its base model, scaled to 0‑100. Reliability follows the extensive TrustLLM protocol and includes six aspects: Truthfulness, Safety, Fairness, Robustness, Privacy, and Ethics, again using normalized ratios (with inversion where lower raw scores are better). Efficiency is measured through three concrete metrics: Runtime Acceleration (throughput and latency), Inference Efficiency (GPU memory, disk size, FLOPs), and Compute Cost (compression time and peak GPU memory). Each metric is normalized relative to the best‑performing method and combined via a geometric mean to penalize imbalanced improvements.

The experimental suite evaluates two primary base models—LLaMA‑3.1‑8B and Qwen‑2.5‑7B—plus a broader set of architectures (LLaMA‑2/3, DeepSeek‑R1, Qwen‑3 series, and MoE variants). For pruning, SparseGPT (unstructured) and Wanda (semi‑structured) are applied at 50 % sparsity. Quantization uses weight‑only 4‑bit post‑training methods GPTQ and AWQ. Knowledge distillation is treated as a compression method, with two pipelines: Minitron (a depth‑and‑width‑pruned 4‑B student from LLaMA‑3.1) and Low‑Rank‑Clone (a 4‑B student distilled from Qwen‑2.5‑7B). All methods are run on H100 GPUs using the vLLM backend; reliability judgments are generated by GPT‑4o‑mini.

Key findings:

-

Knowledge Retention vs. Capability Degradation – Across all compression paradigms, multiple‑choice factual benchmarks (MMLU, ARC‑E/C) retain a large fraction of performance (often > 80 % of the base). In contrast, reasoning‑heavy tasks (GSM8K, Math‑500, GPQA‑Diamond) suffer dramatic drops, especially for pruning and distillation (30‑50 % loss). Multilingual and instruction‑following abilities also degrade markedly, confirming a “knowledge bias” where static facts survive compression but dynamic reasoning does not.

-

Quantization Offers the Best Trade‑off – 4‑bit weight‑only quantization reduces memory and disk footprint by ~ 75 % while keeping overall performance scores around 85 / 100. Throughput improves modestly, and latency remains comparable to the uncompressed model, making quantization the most balanced approach for most deployment scenarios.

-

Distillation Provides Strong Runtime Gains at High Cost – Distillation pipelines require substantial compute (2‑3× longer compression time, higher peak GPU memory) but deliver the highest runtime acceleration (≈ 1.8× throughput) and comparable inference efficiency. This makes distillation attractive when inference speed is the primary bottleneck and resources for compression are available.

-

Task‑Specific Calibration Boosts Pruned Models – Adding a small calibration set tailored to reasoning tasks before or after pruning can recover up to 50 % of the lost reasoning performance, with an average gain of ~ 12 points on the reasoning score. This suggests that pruning’s impact can be mitigated through data‑driven fine‑tuning.

-

Reliability Impacts Are Modest – Compressed models show slight declines in truthfulness and safety metrics, but fairness, privacy, and ethical behavior remain largely unchanged. This indicates that compression primarily affects the model’s knowledge representation and reasoning pathways rather than its bias or privacy leakage mechanisms.

Overall, UniComp demonstrates that compression is not a monolithic trade‑off; different techniques excel on different axes. Quantization emerges as the most practical for general use, pruning can be salvaged for reasoning‑heavy applications with calibration, and distillation is best when maximal inference speed is required despite higher upfront cost. The framework itself provides a reproducible, multi‑dimensional benchmark suite that can guide both academic research and industry deployment decisions. Future work could extend UniComp to larger model scales, incorporate emerging compression methods (e.g., mixed‑precision, structural re‑parameterization), and explore more nuanced reliability scenarios such as long‑context or interactive agent settings.

Comments & Academic Discussion

Loading comments...

Leave a Comment