No Word Left Behind: Mitigating Prefix Bias in Open-Vocabulary Keyword Spotting

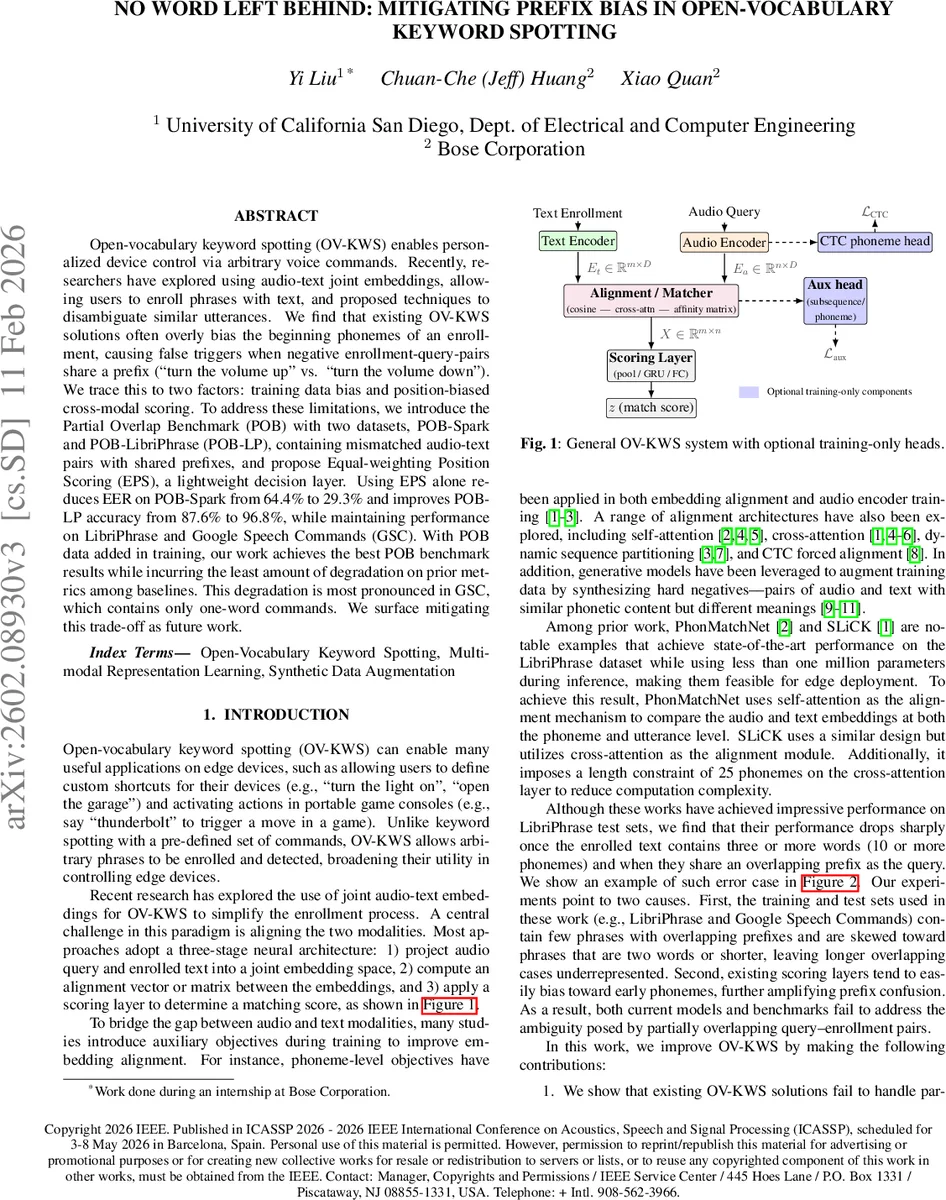

Open-vocabulary keyword spotting (OV-KWS) enables personalized device control via arbitrary voice commands. Recently, researchers have explored using audio-text joint embeddings, allowing users to enroll phrases with text, and proposed techniques to disambiguate similar utterances. We find that existing OV-KWS solutions often overly bias the beginning phonemes of an enrollment, causing false triggers when negative enrollment-query-pairs share a prefix (turn the volume up'' vs. turn the volume down’’). We trace this to two factors: training data bias and position-biased cross-modal scoring. To address these limitations, we introduce the Partial Overlap Benchmark (POB) with two datasets, POB-Spark and POB-LibriPhrase (POB-LP), containing mismatched audio-text pairs with shared prefixes, and propose Equal-weighting Position Scoring (EPS), a lightweight decision layer. Using EPS alone reduces EER on POB-Spark from 64.4% to 29.3% and improves POB-LP accuracy from 87.6% to 96.8%, while maintaining performance on LibriPhrase and Google Speech Commands (GSC). With POB data added in training, our work achieves the best POB benchmark results while incurring the least amount of degradation on prior metrics among baselines. This degradation is most pronounced in GSC, which contains only one-word commands. We surface mitigating this trade-off as future work.

💡 Research Summary

Open‑vocabulary keyword spotting (OV‑KWS) enables users to define arbitrary voice commands for edge devices, but current state‑of‑the‑art models such as PhonMatchNet and SLiCK suffer from a “prefix bias”: the scoring stage places disproportionate weight on the early phonemes of an enrolled phrase. This bias leads to false activations when a negative query shares the same prefix as the enrollment (e.g., “turn the volume up” vs. “turn the volume down”).

The authors first formalize the problem. A pair (x, y) exhibits partial overlap if the query y matches the first q tokens of the enrollment x and q < p (the enrollment is longer). They introduce the “first‑different phoneme index” to quantify how many phonemes are shared before a mismatch. An analysis of the widely used LibriPhrase dataset shows that most pairs differ within the first 0‑4 phonemes, meaning the dataset under‑represents long‑prefix cases that occur in real applications.

To evaluate models under realistic conditions, the paper presents the Partial Overlap Benchmark (POB), consisting of two datasets:

- POB‑LP – derived from LibriPhrase by appending a common English word to each enrollment, thereby creating controlled prefix overlaps while preserving the original length distribution.

- POB‑Spark – synthetically generated with the Spark‑TTS model, offering balanced phrase lengths, diverse speakers, and uniformly distributed first‑different phoneme indices.

Both datasets provide (anchor, query, label) triples for true‑match, false‑match, and reverse‑match cases, enabling precise measurement of a model’s ability to distinguish partial overlaps.

The core technical contribution is the Equal‑weighting Position Scoring (EPS) module. In typical OV‑KWS pipelines, after an audio‑text alignment module produces a matrix X ∈ ℝ^{m×n} (m token positions, n feature dimensions), a position‑dependent fully‑connected layer learns a weight vector a_i for each position i, then aggregates them (often via a weighted sum). This design naturally concentrates weight on early positions, as visualized by the authors’ “prefix concentration score” ρ(k). EPS replaces the position‑dependent FC with a single shared linear map w ∈ ℝ^{n} applied to every position, followed by average pooling:

z_i = wᵀ X_i z = (1/m) ∑_{i=1}^{m} z_i + b

Thus every position contributes equally, eliminating the learned bias without adding parameters or changing the rest of the network.

Experiments:

- Training regimes: (1) LibriPhrase‑only, (2) LibriPhrase + POB training data.

- Models: baseline SLiCK, PhonMatchNet, and SLiCK‑EPS (the EPS‑augmented version).

- Evaluation metrics: Equal Error Rate (EER) and Accuracy (ACC) on LibriPhrase‑easy, LibriPhrase‑hard, Google Speech Commands (GSC), and the two POB test sets.

Key results:

| Model | Training | LibriPhrase‑hard ACC | GSC ACC | POB‑Spark EER | POB‑LP ACC |

|---|---|---|---|---|---|

| SLiCK | LibriPhrase‑only | 99.76 % | 91.77 % | 64.4 % | 87.6 % |

| SLiCK‑EPS | LibriPhrase‑only | 99.80 % | 92.66 % | 29.3 % | 96.8 % |

| SLiCK‑EPS + POB | LibriPhrase + POB | 99.49 % | 89.41 % | 16.2 % | 99.4 % |

- EPS alone reduces POB‑Spark EER by 35.1 % points and raises POB‑LP accuracy by 9.2 % points, while keeping LibriPhrase and GSC performance essentially unchanged (often slight improvements).

- Adding POB data further improves overlap robustness for all models, achieving the best POB scores when combined with EPS. However, this comes at a modest cost to the original benchmarks, especially GSC, which consists of single‑word commands where prefix bias is less relevant.

The authors conclude that prefix bias stems from both data distribution (lack of long‑prefix examples) and architectural design (position‑dependent scoring). EPS offers a lightweight, parameter‑free remedy, and the POB benchmark provides a necessary testbed for future OV‑KWS research. They suggest future work on dynamic positional regularization, multi‑task training to balance short‑ and long‑phrase performance, and on‑device latency/energy profiling.

Overall, the paper makes three substantive contributions: (1) identification and quantification of prefix bias in OV‑KWS, (2) a publicly released benchmark (POB) that stresses this failure mode, and (3) a simple yet effective scoring modification (EPS) that dramatically improves robustness to partial overlaps while preserving efficiency for edge deployment.

Comments & Academic Discussion

Loading comments...

Leave a Comment