EventCast: Hybrid Demand Forecasting in E-Commerce with LLM-Based Event Knowledge

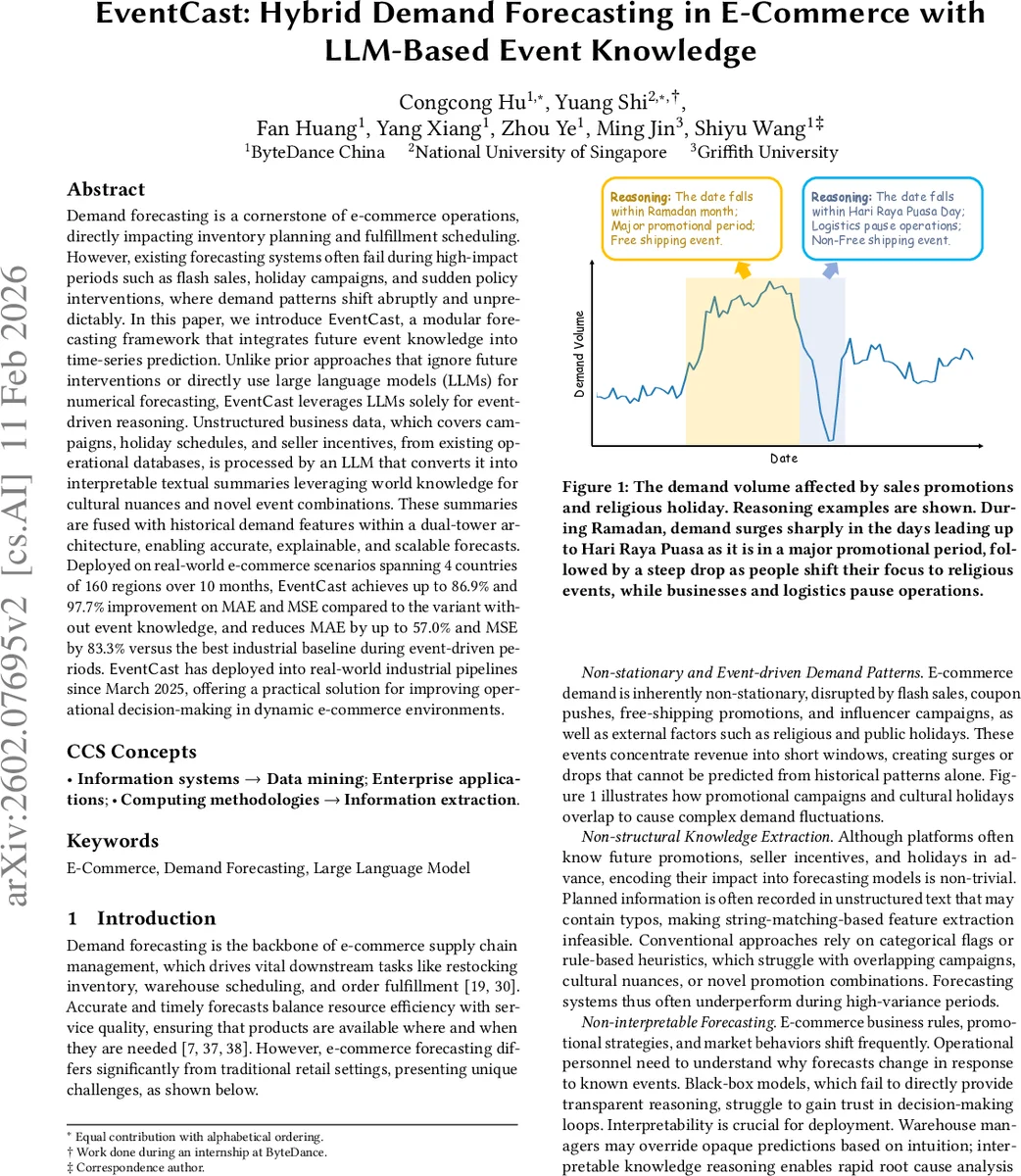

Demand forecasting is a cornerstone of e-commerce operations, directly impacting inventory planning and fulfillment scheduling. However, existing forecasting systems often fail during high-impact periods such as flash sales, holiday campaigns, and sudden policy interventions, where demand patterns shift abruptly and unpredictably. In this paper, we introduce EventCast, a modular forecasting framework that integrates future event knowledge into time-series prediction. Unlike prior approaches that ignore future interventions or directly use large language models (LLMs) for numerical forecasting, EventCast leverages LLMs solely for event-driven reasoning. Unstructured business data from existing operational databases, covering campaigns, holiday schedules, and seller incentives, is processed by an LLM that converts it into interpretable textual summaries, drawing on world knowledge to handle cultural nuances and novel event combinations. These summaries are fused with historical demand features within a dual-tower architecture, enabling accurate, explainable, and scalable forecasts. Deployed on real-world e-commerce scenarios spanning 160 regions across 4 countries over 10 months, EventCast improves MAE and MSE by up to 86.9% and 97.7%, respectively, compared to the variant without event knowledge, and reduces MAE by up to 57.0% and MSE by up to 83.3% versus the best industrial baseline during event-driven periods. EventCast has been deployed in real-world industrial pipelines since March 2025, offering a practical solution for improving operational decision-making in dynamic e-commerce environments.

💡 Research Summary

EventCast tackles a fundamental weakness of modern e‑commerce demand forecasting: the inability to anticipate abrupt demand shifts caused by planned business interventions such as flash sales, holiday campaigns, and policy changes. While prior work either ignores future events or attempts to feed them into models as rigid categorical or numerical covariates, this paper proposes a hybrid architecture that treats large language models (LLMs) solely as knowledge‑extraction agents.

The system starts from an “Event Expert Database” that stores unstructured textual descriptions of upcoming campaigns, public and religious holidays, and seller incentive rules. Business teams already maintain such records for operational purposes, but they are typically noisy, contain typos, and lack a uniform schema. EventCast designs a parameterized prompt template that injects the target forecast date, country, and other contextual cues into a frozen LLM (e.g., GPT‑4‑Turbo). The LLM returns a concise, human‑readable summary that captures the salient aspects of that date – e.g., “Wednesday: no free‑shipping; Level‑10 promotion active; 10th day of Ramadan.”
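The parameterized prompt described above can be sketched as follows. This is a minimal illustration only: the template wording, the function name `build_event_prompt`, and the sample date, country label, and records are all hypothetical, since the prompt actually used by EventCast is not reproduced here. The call to the LLM itself is left out.

```python
from string import Template

# Hypothetical template; the real EventCast prompt wording is not given in this summary.
EVENT_PROMPT = Template(
    "You are an e-commerce operations analyst.\n"
    "Target date: $date\n"
    "Country: $country\n"
    "Raw event records (may contain typos and inconsistent formats):\n"
    "$records\n"
    "Summarize in one line the events that are active on the target date."
)


def build_event_prompt(date: str, country: str, records: list[str]) -> str:
    """Fill the template with the forecast date, country, and raw event records."""
    # Drop empty records and strip stray whitespace; typos are left for the LLM.
    bullet_list = "\n".join(f"- {r.strip()}" for r in records if r.strip())
    return EVENT_PROMPT.substitute(date=date, country=country, records=bullet_list)


# Illustrative inputs only (not taken from the paper's data).
prompt = build_event_prompt(
    "2025-03-10",
    "Country A",
    ["Level-10 promotion 10-12 Mar", "  ", "free shiping ends 9 Mar"],
)
print(prompt)
```

Because only the template changes when a new campaign type appears, a sketch like this keeps prompt maintenance separate from the forecasting model.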

These summaries are tokenized and passed through a lightweight learnable embedding layer; they are not converted into high-dimensional LLM embeddings, which keeps computational costs low and avoids the need for fine-tuning. The core forecasting model follows a dual-tower design: (1) a historical tower that encodes multivariate time-series features (GMV, order volume, price, etc.) using convolutional and self-attention blocks, and (2) an event tower that processes the embedded tokens of the LLM-generated summaries. A fusion layer concatenates the two representations, and a simple feed-forward predictor outputs the demand forecast.
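The data flow of the dual-tower design can be illustrated with a toy forward pass. This is a heavily simplified sketch under stated assumptions, not the authors' model: it uses a univariate history, a single 1-D convolution with mean pooling in place of the convolutional and self-attention blocks, whitespace tokenization, a single linear layer as the predictor, and untrained random weights. All class names and sizes are hypothetical.

```python
import random

random.seed(0)
EMB_DIM = 4  # hypothetical width of each tower's output
KERNEL = 3   # hypothetical convolution window


def rand_vec(n):
    """Small random weights standing in for learned parameters."""
    return [random.uniform(-0.1, 0.1) for _ in range(n)]


class EventTower:
    """Learnable token embeddings, mean-pooled into one event vector."""

    def __init__(self):
        self.table = {}  # token -> embedding, created on first use

    def encode(self, summary):
        tokens = summary.lower().split()
        if not tokens:
            return [0.0] * EMB_DIM
        vecs = [self.table.setdefault(t, rand_vec(EMB_DIM)) for t in tokens]
        return [sum(col) / len(vecs) for col in zip(*vecs)]


class HistoryTower:
    """1-D convolution with ReLU over a demand history, then mean pooling.

    Self-attention is omitted here to keep the sketch short.
    """

    def __init__(self):
        self.kernels = [rand_vec(KERNEL) for _ in range(EMB_DIM)]

    def encode(self, series):
        windows = [series[i:i + KERNEL] for i in range(len(series) - KERNEL + 1)]
        out = []
        for k in self.kernels:
            acts = [max(0.0, sum(w * x for w, x in zip(k, win))) for win in windows]
            out.append(sum(acts) / len(acts))
        return out


class DualTower:
    """Concatenate both tower outputs and apply a linear predictor."""

    def __init__(self):
        self.history, self.events = HistoryTower(), EventTower()
        self.w, self.b = rand_vec(2 * EMB_DIM), 0.0

    def predict(self, series, summary):
        fused = self.history.encode(series) + self.events.encode(summary)  # list concat
        return sum(w * x for w, x in zip(self.w, fused)) + self.b


model = DualTower()
history = [120.0, 135.0, 128.0, 140.0, 152.0, 149.0, 160.0]  # made-up daily demand
y_hat = model.predict(history, "Wednesday: no free-shipping; Level-10 promotion active")
print(y_hat)  # untrained weights, so the value itself is not meaningful
```

The point of the sketch is the structure: the event text never passes through an LLM encoder at forecast time, only through a small embedding table whose output is concatenated with the history representation.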

Experiments span four countries, 160 regions, and ten months of daily data (≈10k SKUs). Compared with a version that omits event knowledge, EventCast reduces MAE by up to 86.9% and MSE by up to 97.7%. During event-driven periods (flash sales, Ramadan, Christmas), it reduces MAE by up to 57.0% and MSE by up to 83.3% relative to the strongest industrial baseline. The LLM is called only once per forecast date, incurring ~120 ms latency; the entire batch pipeline processes 10k SKUs in about 2 seconds, far cheaper than end-to-end LLM-based forecasters.
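For readers who want to see how such percentages can arise, here is a small worked example. It assumes that "improvement" means the relative reduction in error against a baseline, which this summary does not state explicitly, and the demand values are invented purely for illustration.

```python
def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mse(y_true, y_pred):
    """Mean squared error."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def relative_improvement(baseline_error, model_error):
    """Percentage reduction in error relative to the baseline (assumed definition)."""
    return 100.0 * (baseline_error - model_error) / baseline_error


# Invented numbers: day 2 is a promotion spike that the event-blind model misses.
actual = [100.0, 300.0, 120.0]
without_events = [110.0, 150.0, 115.0]
with_events = [105.0, 280.0, 118.0]

mae_gain = relative_improvement(mae(actual, without_events), mae(actual, with_events))
mse_gain = relative_improvement(mse(actual, without_events), mse(actual, with_events))
print(f"MAE improvement: {mae_gain:.1f}%")
print(f"MSE improvement: {mse_gain:.1f}%")
```

In this toy case the MSE gain exceeds the MAE gain because squaring magnifies the single large miss on the spike day, which is consistent with the pattern of MSE improvements being larger than MAE improvements in the reported results.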

The paper emphasizes interpretability: the textual summaries are presented alongside predictions, allowing operations teams to trace demand spikes or drops back to specific promotions or holidays. Because the LLM is frozen, updating the system for new campaign types merely requires adjusting the prompt, not retraining the model.

Limitations include dependence on prompt quality, occasional hallucinations in LLM outputs, and the current inability to model complex interactions among multiple overlapping events beyond what a single summary can express. The authors suggest future work on automatic validation of LLM summaries, graph‑based modeling of event interdependencies, and incorporation of multimodal event data (images, videos).

In summary, EventCast demonstrates a practical, scalable paradigm for integrating rich, unstructured business knowledge into time‑series forecasting: LLMs serve as semantic reasoners, dual‑tower fusion preserves event signals, and a lightweight predictor delivers production‑grade accuracy and speed. The approach yields substantial performance gains, operational transparency, and easy maintainability, making it a compelling blueprint for any domain where future interventions heavily influence demand patterns.
