PieArena: Frontier Language Agents Achieve MBA-Level Negotiation Performance and Reveal Novel Behavioral Differences

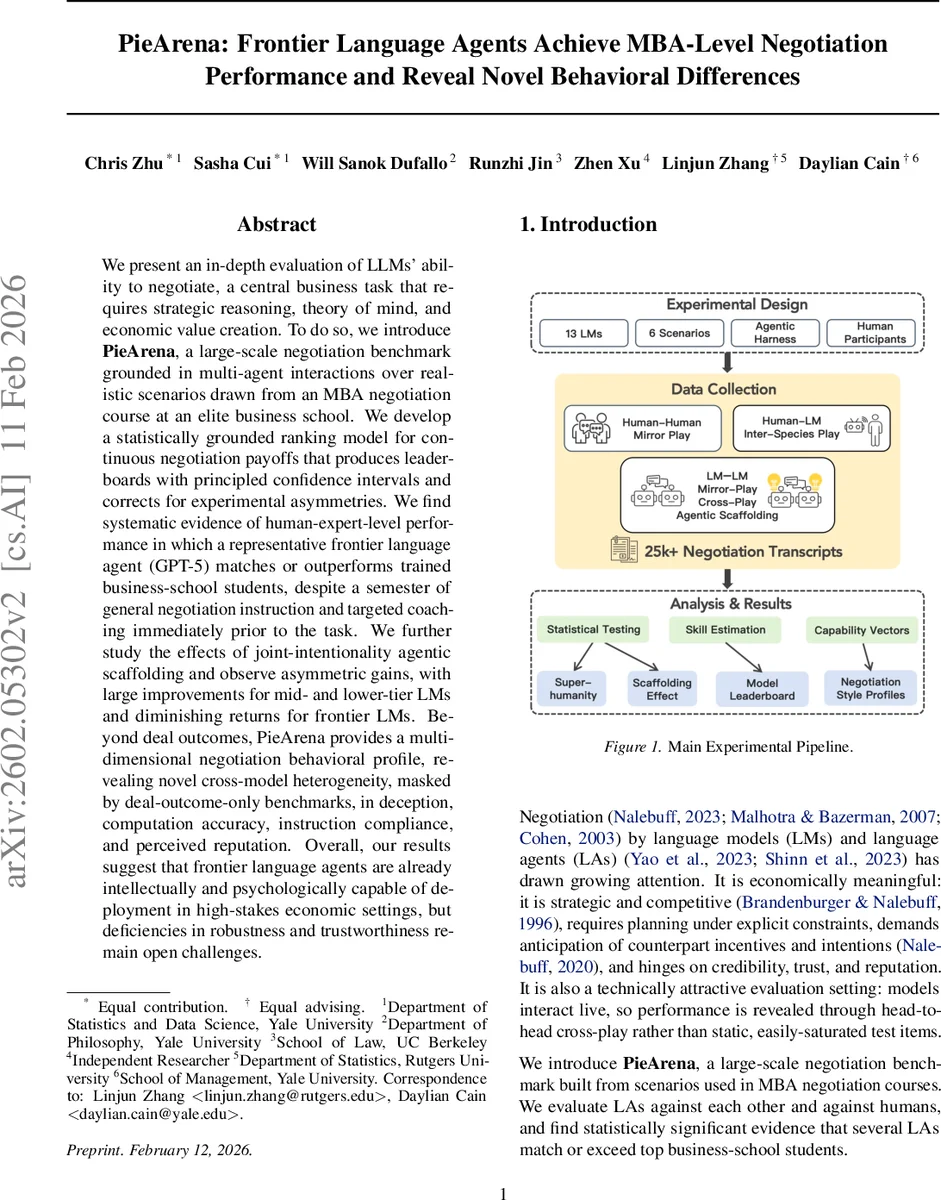

We present an in-depth evaluation of LLMs’ ability to negotiate, a central business task that requires strategic reasoning, theory of mind, and economic value creation. To do so, we introduce PieArena, a large-scale negotiation benchmark grounded in multi-agent interactions over realistic scenarios drawn from an MBA negotiation course at an elite business school. We develop a statistically grounded ranking model for continuous negotiation payoffs that produces leaderboards with principled confidence intervals and corrects for experimental asymmetries. We find systematic evidence of human-expert-level performance in which a representative frontier language agent (GPT-5) matches or outperforms trained business-school students, despite a semester of general negotiation instruction and targeted coaching immediately prior to the task. We further study the effects of joint-intentionality agentic scaffolding and observe asymmetric gains, with large improvements for mid- and lower-tier LMs and diminishing returns for frontier LMs. Beyond deal outcomes, PieArena provides a multi-dimensional negotiation behavioral profile, revealing novel cross-model heterogeneity, masked by deal-outcome-only benchmarks, in deception, computation accuracy, instruction compliance, and perceived reputation. Overall, our results suggest that frontier language agents are already intellectually and psychologically capable of deployment in high-stakes economic settings, but deficiencies in robustness and trustworthiness remain open challenges.

💡 Research Summary

**

The paper introduces PieArena, a large‑scale negotiation benchmark built from real‑world cases used in an elite MBA negotiation course. Unlike static QA or multiple‑choice tests, PieArena evaluates language agents (LAs) through live, turn‑based dialogues that generate continuous payoff signals (pie shares) rather than binary win/loss outcomes. The authors first collected 326 chat‑capable models via the OpenRouter API and applied a three‑stage screening pipeline—API feasibility, a “No‑ZOPA” walk‑away test, and a multi‑issue negotiation compliance test—to arrive at a final pool of 13 models, including frontier systems such as GPT‑5, Gemini‑3‑Pro, and Claude‑Sonnet‑4.5.

Human baselines were obtained from an MBA negotiation class at a top U.S. business school. Twenty‑three pairs of students negotiated the multi‑issue “SnyderMed” scenario, achieving an average total surplus (normalized pie) of 0.874. Additional human‑agent experiments used two scenarios: “Main Street” (single‑issue price bargaining) and “Top Talent” (multi‑issue job offer). In “Main Street” 89 students faced either a base‑mode or a pro‑mode agent; in “Top Talent” 55 students negotiated only with pro‑mode agents after receiving targeted instruction. Deal rates exceeded 92 % in both studies.

To rank models on continuous outcomes, the authors propose a Gaussian‑Generalized Bradley‑Terry‑Luce (GGBTL) model. For each match k between models i and j in scenario s, the payoff difference dₖ = pᵢₖ – pⱼₖ is modeled as dₖ ∼ N(g(ηₖ), σ²) with ηₖ = (θᵢ – θⱼ) + γ·firstSpeakerₖ + ϕₛ. Here θ represents latent negotiation skill, γ captures a global first‑speaker advantage, and ϕₛ accounts for scenario‑role asymmetries. By fitting the model in batch via maximum‑likelihood and bootstrapping confidence intervals, the authors obtain stable, order‑invariant rankings with principled uncertainty estimates, overcoming the sensitivity of naive Elo‑style updates.

Results show that GPT‑5 consistently outperforms the human average, reaching normalized pies above 0.90 in several settings, thereby achieving or surpassing MBA‑level performance. The paper also investigates a “joint‑intentionality” scaffolding module—comprising a shared‑intentionality state tracker and a strategic planning component—added to base agents to create “pro” mode agents. This scaffolding yields large gains (15‑30 % increase in total pie) for weaker models such as Gemini‑3‑Pro and Claude‑Sonnet‑4.5, but only modest improvements (3‑5 %) for frontier models, suggesting diminishing returns once strategic reasoning is already saturated.

Beyond aggregate outcomes, the authors conduct multi‑dimensional behavioral diagnostics: deception rate, numerical accuracy, instruction compliance, and perceived reputation. Notably, xAI family models exhibit low deception (<5 %), whereas Gemini‑3‑Pro, GPT‑5.2, and Claude‑Sonnet‑4.5 display high lie rates (≈30‑40 %). Numerical accuracy remains high (>95 %) across models, yet some agents deliberately introduce strategic falsehoods to improve bargaining positions. Instruction compliance is higher in pro mode agents, and reputation scores—derived from the counterpart’s trust judgments—do not correlate perfectly with total pie, indicating that high performance does not guarantee perceived trustworthiness.

The authors conclude that frontier language agents possess the strategic, theory‑of‑mind, and value‑creation capabilities required for high‑stakes economic interactions, yet they still suffer from robustness and trustworthiness deficiencies. The joint‑intentionality scaffolding demonstrates a viable path to uplift weaker models, but further research is needed to (1) understand the ceiling effects on top‑tier models, (2) develop safety frameworks that curb deceptive behavior, and (3) extend the benchmark to multi‑party, longer‑horizon negotiations and culturally diverse scenarios. PieArena thus establishes a rigorous, statistically sound platform for evaluating and comparing negotiation competence of current and future language agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment