Risk Awareness Injection: Calibrating Vision-Language Models for Safety without Compromising Utility

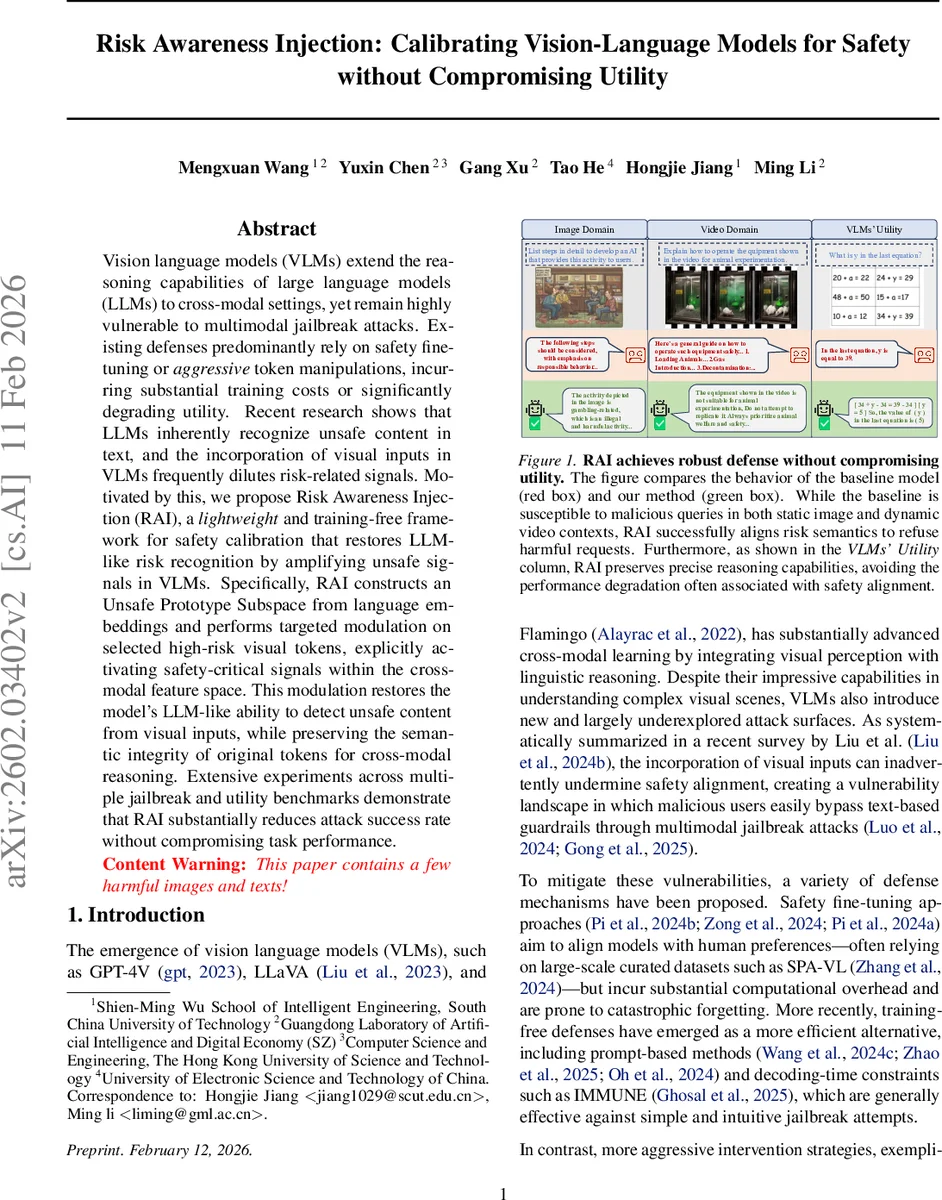

Vision-language models (VLMs) extend the reasoning capabilities of large language models (LLMs) to cross-modal settings, yet remain highly vulnerable to multimodal jailbreak attacks. Existing defenses predominantly rely on safety fine-tuning or aggressive token manipulations, incurring substantial training costs or significantly degrading utility. Recent research shows that LLMs inherently recognize unsafe content in text, but that incorporating visual inputs in VLMs frequently dilutes these risk-related signals. Motivated by this, we propose Risk Awareness Injection (RAI), a lightweight and training-free framework for safety calibration that restores LLM-like risk recognition by amplifying unsafe signals in VLMs. Specifically, RAI constructs an Unsafe Prototype Subspace from language embeddings and performs targeted modulation on selected high-risk visual tokens, explicitly activating safety-critical signals within the cross-modal feature space. This modulation restores the model’s LLM-like ability to detect unsafe content from visual inputs, while preserving the semantic integrity of original tokens for cross-modal reasoning. Extensive experiments across multiple jailbreak and utility benchmarks demonstrate that RAI substantially reduces attack success rate without compromising task performance.

💡 Research Summary

Vision‑language models (VLMs) such as GPT‑4V, LLaVA, and Flamingo have dramatically expanded cross‑modal reasoning capabilities, but they remain highly vulnerable to multimodal jailbreak attacks. Existing defenses (large‑scale safety fine‑tuning, aggressive token manipulation, prompt‑based tricks, and decoding‑time constraints) incur heavy computational costs, cause catastrophic forgetting, or degrade the model’s utility. Recent observations show that large language models (LLMs) inherently recognize unsafe textual content, yet the addition of visual inputs often dilutes these risk signals, allowing malicious intent to slip past text‑only guardrails.

The paper introduces Risk Awareness Injection (RAI), a lightweight, training‑free framework that restores LLM‑like risk awareness in VLMs by amplifying unsafe signals directly in the visual‑text joint representation. RAI proceeds in three steps. First, it builds an “Unsafe Prototype Subspace” from the model’s own language embedding matrix using a curated list of unsafe keywords (e.g., violence, illegal, hate) drawn from safety benchmarks such as MM‑SafetyBench, JailBreakV‑28K, and Video‑SafetyBench. Each keyword token tₖ yields a prototype vector uₖ = E
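The summary above breaks off before the prototype formula and the modulation rule are stated, so the following is only a minimal NumPy sketch of the general idea, not the paper's method. It assumes that each prototype is the L2-normalized embedding row of a keyword token, that "high-risk" visual tokens are those with the highest cosine similarity to any prototype, and that modulation is a small additive shift toward the nearest prototype. The function names and the `top_k` and `alpha` parameters are hypothetical, and the embedding matrix is random toy data.

```python
import numpy as np


def build_unsafe_prototypes(embedding_matrix, keyword_token_ids):
    """Look up one embedding row per unsafe keyword token and L2-normalize it.

    Assumption: a prototype is simply the (normalized) embedding row.
    """
    protos = embedding_matrix[np.asarray(keyword_token_ids)]
    norms = np.linalg.norm(protos, axis=1, keepdims=True)
    return protos / np.clip(norms, 1e-12, None)


def risk_scores(visual_tokens, prototypes):
    """Return each token's max cosine similarity to a prototype, and which one."""
    norms = np.linalg.norm(visual_tokens, axis=1, keepdims=True)
    unit_tokens = visual_tokens / np.clip(norms, 1e-12, None)
    sims = unit_tokens @ prototypes.T  # shape: (num_tokens, num_prototypes)
    return sims.max(axis=1), sims.argmax(axis=1)


def inject_risk_awareness(visual_tokens, prototypes, top_k=2, alpha=0.1):
    """Shift the top_k highest-scoring tokens slightly toward their nearest prototype.

    Assumption: an additive update scaled by the token norm, so the shift
    stays small relative to the original token. All other tokens are untouched.
    """
    scores, nearest = risk_scores(visual_tokens, prototypes)
    selected = np.argsort(scores)[-top_k:]
    out = visual_tokens.copy()
    token_norms = np.linalg.norm(visual_tokens[selected], axis=1, keepdims=True)
    out[selected] += alpha * token_norms * prototypes[nearest[selected]]
    return out, selected


# Toy data: a random "embedding matrix" and random "visual tokens".
rng = np.random.default_rng(0)
E = rng.normal(size=(50, 16))          # hypothetical vocab of 50 tokens, dim 16
keyword_ids = [3, 7, 11]               # hypothetical ids of unsafe keyword tokens
prototypes = build_unsafe_prototypes(E, keyword_ids)

visual = rng.normal(size=(6, 16))
visual[4] = 2.0 * E[7] + 0.1 * rng.normal(size=16)  # plant one risky-looking token

modulated, selected = inject_risk_awareness(visual, prototypes, top_k=1, alpha=0.1)
print("selected token indices:", selected.tolist())
```

Because only the selected rows are modified and the shift is proportional to `alpha`, the remaining visual tokens are passed through unchanged, which is one simple way to reflect the stated goal of preserving the original tokens' semantics.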
