FragmentFlow: Scalable Transition State Generation for Large Molecules

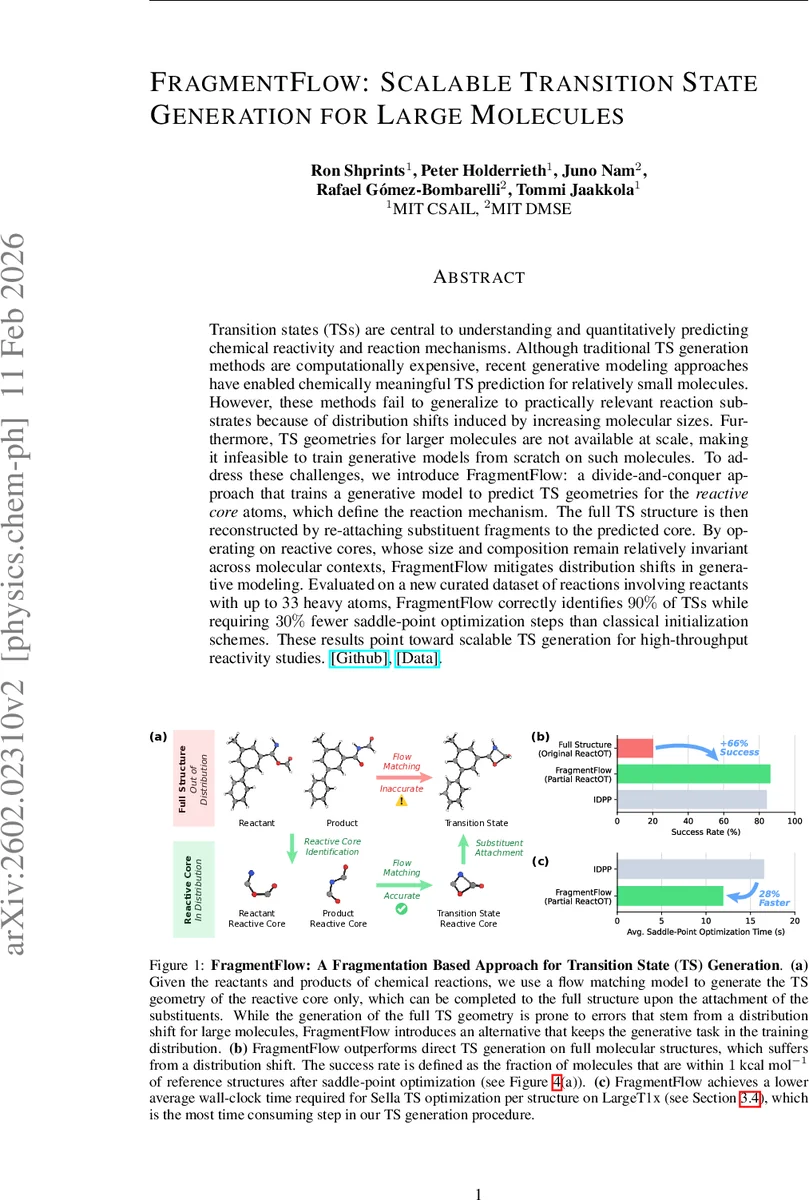

Transition states (TSs) are central to understanding and quantitatively predicting chemical reactivity and reaction mechanisms. Although traditional TS generation methods are computationally expensive, recent generative modeling approaches have enabled chemically meaningful TS prediction for relatively small molecules. However, these methods fail to generalize to practically relevant reaction substrates because of distribution shifts induced by increasing molecular sizes. Furthermore, TS geometries for larger molecules are not available at scale, making it infeasible to train generative models from scratch on such molecules. To address these challenges, we introduce FragmentFlow: a divide-and-conquer approach that trains a generative model to predict TS geometries for the reactive core atoms, which define the reaction mechanism. The full TS structure is then reconstructed by re-attaching substituent fragments to the predicted core. By operating on reactive cores, whose size and composition remain relatively invariant across molecular contexts, FragmentFlow mitigates distribution shifts in generative modeling. Evaluated on a new curated dataset of reactions involving reactants with up to 33 heavy atoms, FragmentFlow correctly identifies 90% of TSs while requiring 30% fewer saddle-point optimization steps than classical initialization schemes. These results point toward scalable TS generation for high-throughput reactivity studies.

💡 Research Summary

Transition states (TSs) are first-order saddle points on the potential energy surface, that is, the highest-energy points along a minimum-energy reaction pathway, and are essential for predicting rates, yields, and selectivities. Traditional double-ended methods such as nudged elastic band (NEB), string, and growing-string algorithms, when coupled with density-functional theory (DFT), are prohibitively expensive for high-throughput applications. Recent machine-learning approaches, notably diffusion models and flow-matching (FM) networks, have shown that TS geometries can be generated directly for small molecules (≈10–15 heavy atoms). However, these models suffer from a severe distribution shift when applied to larger, practically relevant substrates because existing TS datasets contain only small molecules. This creates a chicken-and-egg problem: large-scale TS data are needed to train models, yet generating such data is computationally costly.

FragmentFlow addresses this bottleneck with a divide‑and‑conquer strategy that isolates the “reactive core” – the set of atoms that actually change during the reaction – from the surrounding substituents. The core typically comprises 5–10 atoms and its size and chemical composition remain roughly constant across different molecular contexts, keeping the learning problem within the distribution of existing small‑molecule datasets. The authors first identify the reactive core automatically using a combination of Bemis–Murcko scaffold extraction and a Weisfeiler‑Lehman Network atom mapper.
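To make the notion of a reactive core concrete, here is a minimal sketch that assumes atom-mapped bond lists are already available for the reactant and product. It is a simplified stand-in, not the authors' scaffold-plus-atom-mapper pipeline: the hypothetical helper `reactive_core` marks only atoms whose bonds are formed or broken, and it ignores bond-order changes.

```python
def reactive_core(reactant_bonds, product_bonds):
    """Return sorted atom-map indices that take part in a formed or broken bond.

    Assumption: bonds are given as pairs of atom-map indices, and the same
    index refers to the same atom in reactant and product.
    """
    reactant_set = {frozenset(bond) for bond in reactant_bonds}
    product_set = {frozenset(bond) for bond in product_bonds}
    # Symmetric difference = bonds present on only one side of the reaction.
    changed_bonds = reactant_set ^ product_set
    return sorted({atom for bond in changed_bonds for atom in bond})


# Hypothetical example: bond 1-2 breaks and bond 2-5 forms.
reactant = [(1, 2), (2, 3), (3, 4), (4, 5)]
product = [(2, 3), (3, 4), (4, 5), (2, 5)]
core = reactive_core(reactant, product)
print(core)
```

Every atom outside the returned set would be treated as a substituent in this simplified picture.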

For generation, they adopt ReactOT, a state-of-the-art FM model that treats TS prediction as an optimal-transport problem. They train a “Partial ReactOT” model on the Transition1x training set augmented with fragmented structures, masking out substituents and minimizing a flow-matching loss only on the core coordinates. At inference, Partial ReactOT produces a candidate core geometry. To reconstruct the full molecule, the pipeline creates 10⁴ candidate full-molecule structures via IDPP interpolation, selects the one whose core best matches the generated core (lowest RMSD), aligns the cores with a Kabsch transformation, and swaps in the generated core. Finally, each reconstructed structure is refined with the Sella TS optimizer, which resolves any inconsistencies introduced during the swapping step.
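The select, align, and swap step can be sketched with NumPy as follows. This is an illustration under stated assumptions, not the authors' code: the function names `kabsch`, `rmsd`, and `swap_core` are hypothetical, candidates are assumed to be `(n_atoms, 3)` arrays sharing one atom ordering, and the RMSD is assumed to be measured after aligning the generated core onto each candidate's core.

```python
import numpy as np


def kabsch(P, Q):
    """Return rotation R and translation t minimizing ||(P @ R.T + t) - Q||."""
    P_centroid, Q_centroid = P.mean(axis=0), Q.mean(axis=0)
    H = (P - P_centroid).T @ (Q - Q_centroid)  # 3x3 covariance matrix
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so that R is a proper rotation (det = +1).
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = Q_centroid - R @ P_centroid
    return R, t


def rmsd(A, B):
    """Root-mean-square deviation between two (n, 3) coordinate arrays."""
    return float(np.sqrt(((A - B) ** 2).sum(axis=1).mean()))


def swap_core(candidates, core_idx, generated_core):
    """Pick the candidate whose core best matches the generated core,
    then overwrite its core atoms with the aligned generated core."""
    best, best_rmsd = None, np.inf
    for X in candidates:
        R, t = kabsch(generated_core, X[core_idx])
        aligned = generated_core @ R.T + t
        score = rmsd(aligned, X[core_idx])
        if score < best_rmsd:
            best_rmsd = score
            best = X.copy()
            best[core_idx] = aligned  # substituent atoms stay untouched
    return best, best_rmsd
```

Because only the core rows are overwritten, bond lengths at the core-substituent boundary can end up slightly distorted, which is the kind of inconsistency a subsequent saddle-point refinement would be expected to repair.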

The authors provide a theoretical decomposition of the KL divergence between the true TS distribution and the factorized model into (1) reactive‑core modeling error (which scales with the small core size) and (2) attachment error (which grows slowly because substituent positions are largely inherited from reactants/products). This analysis justifies why focusing on the core yields better out‑of‑distribution generalization.
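One way to see where such a two-term split comes from is the chain rule for the KL divergence. The notation below is an assumption for illustration and may differ from the paper's: $x_c$ denotes core coordinates, $x_s$ substituent coordinates, $p$ the true TS distribution, and $q$ a model factorized as $q(x_c)\,q(x_s \mid x_c)$, with conditioning on reactant and product left implicit.

$$
D_{\mathrm{KL}}\big(p(x_c, x_s)\,\|\,q(x_c)\,q(x_s \mid x_c)\big)
= \underbrace{D_{\mathrm{KL}}\big(p(x_c)\,\|\,q(x_c)\big)}_{\text{reactive-core modeling error}}
+ \underbrace{\mathbb{E}_{x_c \sim p}\Big[D_{\mathrm{KL}}\big(p(x_s \mid x_c)\,\|\,q(x_s \mid x_c)\big)\Big]}_{\text{attachment error}}
$$

The identity follows from writing $p(x_c, x_s) = p(x_c)\,p(x_s \mid x_c)$ and splitting the logarithm of the density ratio into a core term and a conditional term.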

To evaluate scalability, the authors curate a new benchmark, LargeT1x, containing 131 reactions with up to 33 heavy atoms—far larger than any existing TS dataset. The curation pipeline involves converting Transition1x geometries to SMILES, extracting cores, generating initial TS guesses with GFN2‑xTB‑based tools, optimizing with Sella/UMA, and validating via intrinsic reaction coordinate (IRC) checks and vibrational frequency analysis.

On LargeT1x, FragmentFlow achieves a 90 % success rate, where success is defined as a final TS within 1 kcal mol⁻¹ of the reference after Sella refinement. Compared to classical IDPP initializations, the method reduces the average number of Sella optimization steps by 30 %, translating into up to a 28 % reduction in wall-clock time on a 128-CPU node. The authors also demonstrate a strong correlation between the quality of the generated core and the final full-TS accuracy, supporting the core-scaling hypothesis.
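The success criterion can be written down as a short sketch. It assumes the 1 kcal mol⁻¹ threshold is applied to the absolute energy difference between refined and reference TSs, with the boundary counted as a success. The function name `success_rate` and the energies below are hypothetical, not values from the benchmark.

```python
def success_rate(refined_energies, reference_energies, tol_kcal=1.0):
    """Fraction of reactions whose refined TS energy lies within tol_kcal
    (kcal/mol) of the reference TS energy."""
    hits = [
        abs(refined - reference) <= tol_kcal
        for refined, reference in zip(refined_energies, reference_energies)
    ]
    return sum(hits) / len(hits)


# Hypothetical energies in kcal/mol, for illustration only.
refined = [10.2, 25.9, 31.0, 18.4]
reference = [10.0, 25.0, 33.5, 18.0]
rate = success_rate(refined, reference)
print(rate)
```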

Key contributions are: (1) a novel “core-and-substituent” paradigm that mitigates size-induced distribution shifts, (2) the LargeT1x benchmark extending TS datasets to much larger molecules, and (3) empirical and theoretical validation of the factorized KL-divergence framework. Limitations include reliance on IDPP for substituent placement, which may be insufficient for highly flexible or metal-containing systems, and dependence on automated core identification that could struggle with ambiguous reaction mechanisms. Future work is suggested on more sophisticated substituent reconstruction, extension to catalytic and organometallic reactions, and reinforcement-learning-based core detection.

Overall, FragmentFlow demonstrates that by training generative models only on the chemically active core and re‑attaching the rest of the molecule afterward, one can generate high‑quality transition states for large molecules with substantially lower computational cost, opening the door to truly high‑throughput reaction‑network exploration and catalyst design.
