From Junior to Senior: Allocating Agency and Navigating Professional Growth in Agentic AI-Mediated Software Engineering

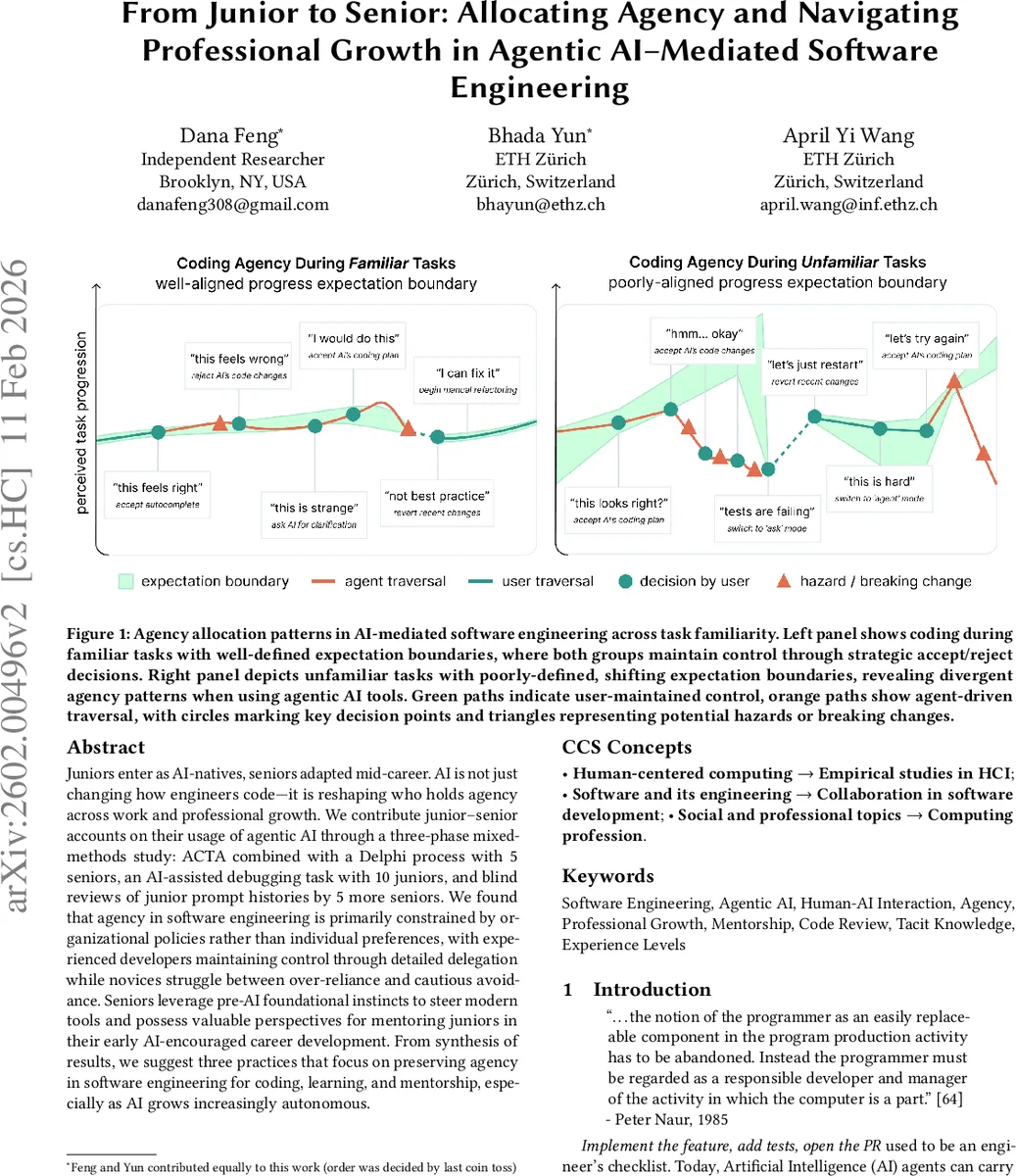

Juniors enter as AI-natives; seniors adapted mid-career. AI is not just changing how engineers code; it is reshaping who holds agency across work and professional growth. We contribute junior and senior accounts of agentic AI usage through a three-phase mixed-methods study: Applied Cognitive Task Analysis (ACTA) combined with a Delphi process with 5 seniors, an AI-assisted debugging task with 10 juniors, and blind reviews of junior prompt histories by 5 more seniors. We found that agency in software engineering is primarily constrained by organizational policies rather than individual preferences, with experienced developers maintaining control through detailed delegation while novices struggle between over-reliance and cautious avoidance. Seniors leverage pre-AI foundational instincts to steer modern tools and possess valuable perspectives for mentoring juniors in their early AI-encouraged career development. From a synthesis of the results, we suggest three practices that focus on preserving agency in software engineering for coding, learning, and mentorship, especially as AI grows increasingly autonomous.

💡 Research Summary

This paper investigates how agency—the allocation of decision‑making authority and accountability—is distributed between human developers and agentic AI tools across experience levels in modern software engineering. The authors focus on two cohorts: “AI‑native” junior engineers who entered the workforce alongside generative and agentic AI, and senior engineers who adapted to AI mid‑career after building pre‑AI expertise. To explore their practices, perceptions, and mentorship dynamics, the study employs a three‑phase mixed‑methods design.

Phase 1 consists of semi‑structured interviews with five senior engineers, augmented by Applied Cognitive Task Analysis (ACTA) to elicit tacit knowledge and decision‑point maps for a realistic debugging scenario. The ACTA data are refined through a Delphi‑style consensus process, producing a concrete task that reflects both high‑familiarity and low‑familiarity code contexts.

Phase 2 engages ten junior engineers in the finalized debugging task using Cursor, an agentic AI that can autonomously edit code. After the task, participants complete surveys, post‑mortems, and reflective interviews describing how they prompted the AI, accepted or rejected its changes, and perceived learning outcomes.

Phase 3 involves five different senior engineers who blind‑review the anonymized artifacts (code, prompt histories, and reflections) generated by the juniors. Their evaluations simulate real‑world mentorship and code‑review settings, focusing on how AI‑generated provenance information supports accountability and knowledge transfer.

Across all phases, the authors address four research questions: (1) how agency is allocated between developers and AI in daily work; (2) how professionals perceive junior growth in an AI‑augmented environment; (3) when mentorship becomes indispensable; and (4) how AI records shape code review and mentorship.

Key findings reveal that organizational policies—tool defaults, CI pipeline constraints, and company‑wide AI usage guidelines—form the primary “policy‑first” layer that limits agency before individual preferences even matter. Within this envelope, seniors maintain control by delegating low‑level, well‑defined subtasks to the AI while inserting explicit verification loops, a strategy the authors term “controlled delegation.” Seniors also use AI for idea generation but keep final judgment to themselves, thereby preserving professional responsibility.

Juniors display a bifurcated pattern. In familiar code regions they use short, task‑focused prompts and perform incremental acceptance/rejection, mirroring senior behavior. In unfamiliar regions, however, they either over‑rely on the AI—accepting hallucinated or sub‑optimal changes without sufficient intuition—or avoid AI altogether, leading to slower progress and heightened impostor syndrome. The study documents concrete instances where juniors failed to detect AI‑generated bugs, resulting in reduced code understanding and concerns about skill degradation.

Mentorship emerges as a critical mediating factor. Seniors position themselves as “critical reviewers” who contextualize AI output, articulate the reasoning behind prompts, and model the verification process. Juniors, while appreciating AI for quick answers, still seek human discussion to build deeper conceptual models and to validate AI suggestions. The blind‑review phase shows that well‑documented prompt histories enable seniors to provide targeted feedback, reinforcing the junior’s agency over learning.

From these insights, the authors propose three evolving practices for AI‑mediated software engineering:

- Preserving Individual Agency – Establish company‑wide AI usage policies that mandate incremental changes, require explicit pause‑and‑verify steps, and log provenance. This keeps developers ultimately responsible for the code they ship.

- Evolving the Mentorship Pipeline – Encourage seniors to externalize their tacit intuition during AI‑assisted work, sharing prompt design rationales and verification criteria. This helps juniors retain agency over their learning trajectory and prevents over‑dependence on the tool.

- Prompt & Code Reviews (PCRs) – Institutionalize a structured review process where juniors document key prompts, rationales, and AI‑generated diffs; seniors then audit these artifacts, ensuring accountability and reinforcing the junior’s ownership of reasoning.
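To make the PCR practice more concrete, the following is a minimal sketch of what a documented review bundle might look like. The paper does not prescribe a schema; every class, field, and method name here (`PromptEntry`, `PromptCodeReview`, `verified_by`, `unverified_accepts`) is a hypothetical illustration, not the authors' format. The sketch records each key prompt with its rationale and AI-generated diff, and flags accepted changes that have no recorded verification step, which is one thing a senior reviewer might want to look at first.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptEntry:
    """One documented prompt (hypothetical schema, not from the paper)."""
    prompt: str             # what the junior asked the AI tool
    rationale: str          # why the junior asked it
    ai_diff: str            # the AI-generated diff, stored as plain text
    accepted: bool          # whether the junior kept the change
    verified_by: str = ""   # how the change was checked, e.g. "unit tests"


@dataclass
class PromptCodeReview:
    """A PCR bundle that a senior reviewer audits (hypothetical schema)."""
    author: str
    entries: List[PromptEntry] = field(default_factory=list)

    def unverified_accepts(self) -> List[PromptEntry]:
        """Return accepted AI changes with no recorded verification step."""
        return [
            e for e in self.entries
            if e.accepted and not e.verified_by.strip()
        ]


# Example bundle with one verified and one unverified accepted change.
pcr = PromptCodeReview(author="junior-01")
pcr.entries.append(PromptEntry(
    prompt="Find why the parser test fails",
    rationale="Unfamiliar module; wanted a starting hypothesis",
    ai_diff="- return tokens\n+ return tokens[:-1]",
    accepted=True,
    verified_by="re-ran parser tests",
))
pcr.entries.append(PromptEntry(
    prompt="Refactor retry loop",
    rationale="Loop looked duplicated",
    ai_diff="- while True:\n+ for attempt in range(3):",
    accepted=True,
))

flagged = pcr.unverified_accepts()
for entry in flagged:
    print(f"Needs verification: {entry.prompt}")
```

Keeping the rationale next to each diff is a design choice meant to reflect the summary's emphasis on the junior's ownership of reasoning; a real team would likely attach such records to its existing code-review tooling rather than a standalone script.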

The paper acknowledges limitations: a modest sample size, concentration on U.S.‑based firms, and reliance on a single agentic tool (Cursor). Future work should examine cross‑cultural policy variations, compare multiple agentic platforms, and conduct longitudinal studies on skill development under sustained AI assistance.

In conclusion, as agentic AI tools become more autonomous, the distribution of agency in software development is reshaped by a hierarchy of organizational constraints and individual delegation strategies. Senior engineers leverage their pre‑AI expertise to steer AI output while preserving responsibility, whereas junior engineers navigate a tension between productivity gains and learning loss. By articulating the policy‑first layer, controlled delegation, and the pivotal role of mentorship, the study offers actionable guidance for organizations seeking to integrate AI responsibly while safeguarding professional growth and accountability.
