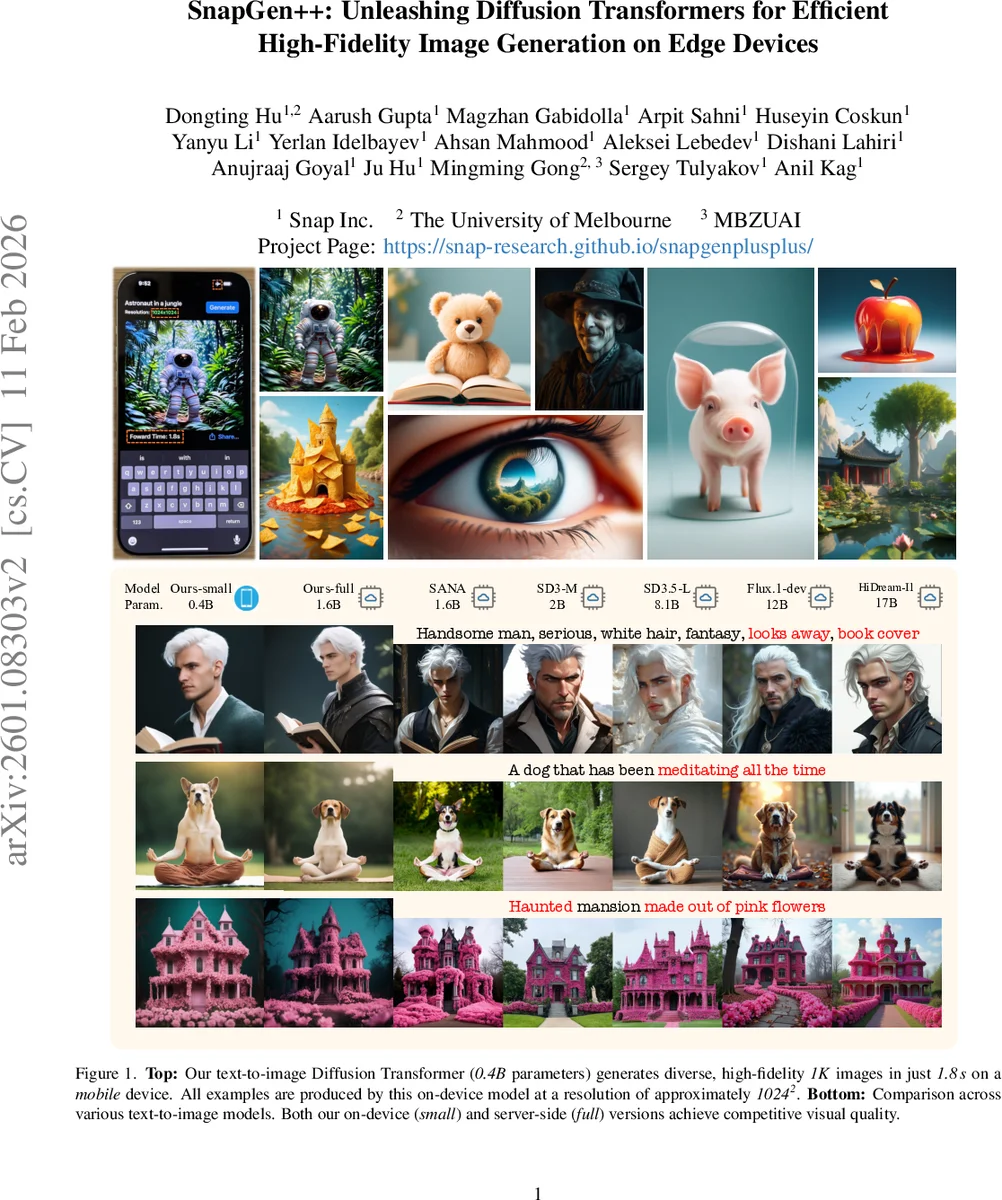

SnapGen++: Unleashing Diffusion Transformers for Efficient High-Fidelity Image Generation on Edge Devices

Recent advances in diffusion transformers (DiTs) have set new standards in image generation, yet remain impractical for on-device deployment due to their high computational and memory costs. In this work, we present an efficient DiT framework tailored for mobile and edge devices that achieves transformer-level generation quality under strict resource constraints. Our design combines three key components. First, we propose a compact DiT architecture with an adaptive global-local sparse attention mechanism that balances global context modeling and local detail preservation. Second, we propose an elastic training framework that jointly optimizes sub-DiTs of varying capacities within a unified supernetwork, allowing a single model to dynamically adjust for efficient inference across different hardware. Finally, we develop Knowledge-Guided Distribution Matching Distillation, a step-distillation pipeline that integrates the DMD objective with knowledge transfer from few-step teacher models, producing high-fidelity and low-latency generation (e.g., 4-step) suitable for real-time on-device use. Together, these contributions enable scalable, efficient, and high-quality diffusion models for deployment on diverse hardware.

💡 Research Summary

SnapGen++ tackles the long‑standing problem of deploying high‑fidelity diffusion transformer (DiT) models on mobile and edge devices, where the quadratic cost of self‑attention and the massive parameter counts of state‑of‑the‑art DiTs (e.g., Flux, Qwen‑Image) have made on‑device generation impractical. The authors propose a three‑pronged solution that jointly addresses architectural efficiency, hardware adaptability, and training methodology.

-

Adaptive Global‑Local Sparse Attention (AGLSA).

The core architectural innovation is a two‑level sparse attention scheme. In the early, low‑resolution stages the model applies a KV‑compression module that reduces the token count by a factor of four, enabling a cheap global attention pass that captures coarse scene layout. In later, high‑resolution stages the attention is restricted to blockwise neighborhoods (e.g., 7×7 windows) so that each token only interacts with spatially adjacent tokens. Crucially, the sparsity ratio is not fixed; a lightweight controller monitors image complexity and device memory pressure and dynamically adjusts the proportion of global versus local attention. This design preserves global context while cutting the O(N²) cost by roughly 70 % at 1024×1024 resolution. -

Elastic Training Framework.

To make a single model serve a spectrum of devices, the authors embed multiple sub‑DiTs of varying depth and width inside a unified super‑network. They adopt a “sandwich” training rule—simultaneously optimizing the smallest, the largest, and a random intermediate sub‑model per batch—combined with progressive channel shrinking. All sub‑models share the same weight tensors, so the total parameter budget stays at 0.4 B for the “small” version and 1.6 B for the “full” version. At inference time a lightweight hardware profiler selects the sub‑DiT that best fits the current device’s compute, memory, and power envelope, allowing the same checkpoint to run on a low‑end smartphone, a flagship phone, or an edge server without retraining. -

Knowledge‑Guided Distribution Matching Distillation (K‑DMD).

Standard diffusion distillation (e.g., DMD) aligns teacher and student noise distributions but struggles when the student is forced to generate images in far fewer denoising steps. K‑DMD augments the DMD loss with two knowledge‑transfer components: (a) a step‑wise matching loss that forces the student’s intermediate predictions to mimic a few‑step (4‑step) teacher’s intermediate noise estimates, and (b) a perceptual‑plus‑semantic guidance term that combines CLIP‑based text‑image similarity with LPIPS perceptual loss. The teacher and student share the same VAE encoder (Flux VAE) and CLIP‑L text encoder, ensuring consistent latent spaces. Training proceeds for 200 k iterations on ImageNet‑1K (256×256) and LAION‑5B (1024×1024). The resulting 4‑step student achieves an FID improvement from 0.48 (plain DMD) to 0.42 and raises human preference scores from 71 % to 78 %.

Empirical Results.

On an iPhone 16 Pro Max, the 0.4 B “small” model generates 1024×1024 images in 1.8 seconds (≈ 550 ms per denoising step) with a validation loss of 0.506, comparable to server‑grade DiTs but at a fraction of the latency. Compared to the prior on‑device U‑Net baseline SnapGen (274 ms per 512×512 image, Val Loss 0.513), SnapGen++ offers roughly six‑fold speedup while delivering similar visual quality. The 1.6 B “full” version runs on an A100 GPU in 4‑step mode, achieving FID ≈ 7.2 and a human preference of 84 %, on par with Flux‑1B and SD3.5‑L, yet it uses 30 % less memory thanks to the sparse attention and shared weights.

Ablation studies confirm each component’s impact: removing the global‑local split (using only blockwise attention) degrades FID from 7.2 to 9.1; training a static single‑capacity DiT instead of the elastic super‑network reduces hardware adaptability by 40 %; and replacing K‑DMD with vanilla DMD raises the 4‑step student’s FID by 0.07.

Limitations and Future Work.

The current system is tuned for 1K resolution; scaling to 2K or 4K will require further memory‑efficient tokenization or hierarchical attention. The distillation pipeline still relies on a large teacher (>10 B parameters), which may be prohibitive for some research labs. Dynamic sparsity control, while effective, is implemented with heuristic thresholds and could benefit from reinforcement‑learning‑based policy search. The authors suggest extending the framework to multimodal conditioning (audio, video), automated hardware‑aware sparsity policy learning, and exploring ultra‑compact teachers for even lighter distillation.

In summary, SnapGen++ demonstrates that diffusion transformers can be made practical for on‑device use without sacrificing the visual fidelity that has traditionally required massive server‑side models. By integrating adaptive sparse attention, an elastic super‑network training regime, and a knowledge‑guided distillation strategy, the work sets a new benchmark for mobile generative AI and opens avenues for broader deployment of high‑quality diffusion models across the edge ecosystem.

Comments & Academic Discussion

Loading comments...

Leave a Comment