LightTact: A Visual-Tactile Fingertip Sensor for Deformation-Independent Contact Sensing

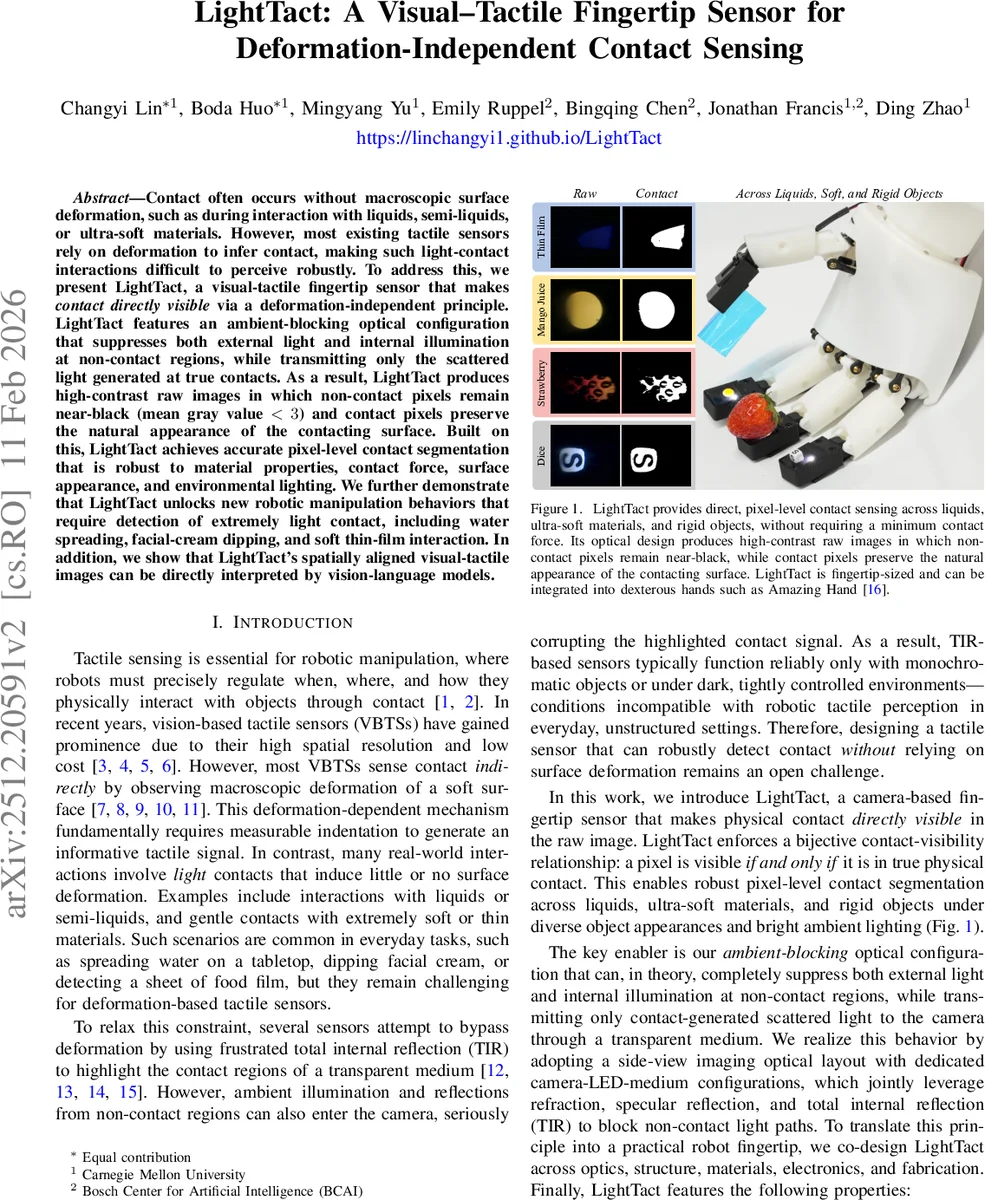

Contact often occurs without macroscopic surface deformation, such as during interaction with liquids, semi-liquids, or ultra-soft materials. However, most existing tactile sensors rely on deformation to infer contact, making such light-contact interactions difficult to perceive robustly. To address this, we present LightTact, a visual-tactile fingertip sensor that makes contact directly visible via a deformation-independent principle. LightTact features an ambient-blocking optical configuration that suppresses both external light and internal illumination at non-contact regions, while transmitting only the scattered light generated at true contacts. As a result, LightTact produces high-contrast raw images in which non-contact pixels remain near-black (mean gray value < 3) and contact pixels preserve the natural appearance of the contacting surface. Built on this, LightTact achieves accurate pixel-level contact segmentation that is robust to material properties, contact force, surface appearance, and environmental lighting. We further demonstrate that LightTact unlocks new robotic manipulation behaviors that require detection of extremely light contact, including water spreading, facial-cream dipping, and soft thin-film interaction. In addition, we show that LightTact’s spatially aligned visual-tactile images can be directly interpreted by vision-language models.

💡 Research Summary

LightTact is a fingertip‑sized, vision‑based tactile sensor that detects contact without relying on macroscopic surface deformation. The core idea is a bijective contact‑visibility relationship: a point on the touching surface is visible to the camera if and only if it is in true physical contact with an object. This is achieved through an ambient‑blocking optical layout that eliminates both external illumination and internal LED light from non‑contact regions while allowing only the scattered light generated at contact points to reach the camera.

The sensor consists of a transparent medium, an internal LED, a compact camera, and black‑coated surrounding surfaces. Two distinct surfaces of the medium play different optical roles: the “touching surface” where contact occurs, and the “viewing surface” through which the camera observes the medium. The viewing surface is set at an angle θ_tv relative to the touching surface such that 2θ_c < θ_tv < π/2 + θ_c, where θ_c is the critical angle for total internal reflection. Under this condition, any light entering the medium through a non‑contact region (whether from the environment or reflected LED light) arrives at the viewing surface with an incidence angle larger than θ_c and is therefore totally internally reflected toward black absorbing walls. Consequently, non‑contact pixels appear near‑black (mean gray value < 3) in raw images.
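The angular window on θ_tv can be checked numerically. The following is a minimal sketch, not the authors' code: the function names are hypothetical, the medium is assumed to sit in air (refractive index 1.0), and the example uses the refractive index of about 1.45 that the summary quotes for the gel and acrylic.

```python
import math
from typing import Tuple


def critical_angle(n_medium: float, n_outside: float = 1.0) -> float:
    """Critical angle in radians for total internal reflection (Snell's law)."""
    if n_medium <= n_outside:
        raise ValueError("total internal reflection requires n_medium > n_outside")
    return math.asin(n_outside / n_medium)


def viewing_angle_window(n_medium: float) -> Tuple[float, float]:
    """Open interval (2*theta_c, pi/2 + theta_c) stated in the summary, in radians."""
    theta_c = critical_angle(n_medium)
    return 2.0 * theta_c, math.pi / 2.0 + theta_c


def is_ambient_blocking(theta_tv: float, n_medium: float) -> bool:
    """True if the touching-to-viewing surface angle lies inside the window."""
    lower, upper = viewing_angle_window(n_medium)
    return lower < theta_tv < upper


if __name__ == "__main__":
    n = 1.45  # assumed value, taken from the summary's "≈ 1.45"
    lower, upper = viewing_angle_window(n)
    print(f"theta_c = {math.degrees(critical_angle(n)):.1f} deg")
    print(f"window  = ({math.degrees(lower):.1f}, {math.degrees(upper):.1f}) deg")
    print("100 deg OK:", is_ambient_blocking(math.radians(100.0), n))
```

Because the window depends only on the refractive index, the same check can be rerun if a different gel or window material is substituted.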

When an object contacts the medium, the air gap disappears and the touching surface produces diffuse scattering of the LED illumination. The scattered rays have a wide angular distribution; a subset of them meets the condition –θ_c < θ_incidence < θ_c at the viewing surface and refracts into the camera. These rays carry the natural appearance (color, texture) of the contacting object, so contact pixels retain the object’s visual information while still being clearly distinguished from the dark background.
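The pass/block behavior at the viewing surface follows from Snell's law. The sketch below is illustrative only: `exit_angle` is a hypothetical helper, the outside medium is assumed to be air, and angles are measured from the surface normal. It returns the refracted angle for a ray that can leave the medium, or `None` when the ray is totally internally reflected.

```python
import math
from typing import Optional


def exit_angle(theta_incidence: float,
               n_medium: float,
               n_outside: float = 1.0) -> Optional[float]:
    """Refraction angle (radians) outside the medium, or None under TIR.

    theta_incidence is the signed angle from the surface normal, inside the medium.
    """
    s = (n_medium / n_outside) * math.sin(theta_incidence)
    if abs(s) >= 1.0:
        # |theta_incidence| >= theta_c: the ray cannot leave the medium.
        return None
    return math.asin(s)


if __name__ == "__main__":
    n = 1.45  # assumed refractive index, as quoted in the summary
    for deg in (0.0, 30.0, 60.0):
        out = exit_angle(math.radians(deg), n)
        label = "TIR (blocked)" if out is None else f"exits at {math.degrees(out):.1f} deg"
        print(f"incidence {deg:5.1f} deg -> {label}")
```

Only rays with an incidence angle inside (−θ_c, θ_c) return a value here, which matches the condition the text gives for scattered contact light reaching the camera.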

The physical implementation uses a soft transparent gel for the touching surface and a rigid acrylic window for the viewing surface, both having a refractive index of ≈ 1.45 (θ_c ≈ 43°). The gel provides compliance for safe interaction, while the acrylic window ensures a deformation‑free optical interface. The LED (2835‑SMD) is mounted at ≈ 45° to achieve uniform illumination, and a small USB video class (UVC) camera (OV5693) with a 120° field of view is placed close to the medium. Black gel layers and a 3‑D‑printed black shell absorb stray reflections; the shell geometry is chosen to minimize specular coupling from non‑contact regions.

Fabrication is inexpensive (under $20 for all components except the camera) and fully open‑source, with detailed CAD files and step‑by‑step tutorials. Calibration is straightforward: a simple intensity threshold (a gray value of ≈ 3) separates contact from non‑contact pixels, followed by morphological post‑processing. The segmentation algorithm runs at > 30 fps on a modest CPU, enabling real‑time integration into robot control loops.
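The thresholding step can be sketched in a few lines of NumPy. This is an assumption-laden illustration rather than the released pipeline: the summary does not specify the grayscale conversion or which morphological operation is used, so the sketch averages the RGB channels and applies a 3×3 binary opening (erosion followed by dilation) to remove isolated speckles. All function names are hypothetical.

```python
import numpy as np


def to_gray(rgb: np.ndarray) -> np.ndarray:
    """Channel-mean grayscale (an assumed conversion, not from the source)."""
    return rgb.astype(np.float32).mean(axis=2)


def _erode3x3(mask: np.ndarray) -> np.ndarray:
    """A pixel survives only if its whole 3x3 neighborhood is set."""
    h, w = mask.shape
    padded = np.pad(mask, 1, constant_values=False)
    out = np.ones_like(mask)
    for dy in range(3):
        for dx in range(3):
            out &= padded[dy:dy + h, dx:dx + w]
    return out


def _dilate3x3(mask: np.ndarray) -> np.ndarray:
    """A pixel is set if any pixel in its 3x3 neighborhood is set."""
    h, w = mask.shape
    padded = np.pad(mask, 1, constant_values=False)
    out = np.zeros_like(mask)
    for dy in range(3):
        for dx in range(3):
            out |= padded[dy:dy + h, dx:dx + w]
    return out


def segment_contact(rgb: np.ndarray, threshold: float = 3.0) -> np.ndarray:
    """Boolean contact mask: gray value above threshold, then 3x3 opening."""
    mask = to_gray(rgb) > threshold
    return _dilate3x3(_erode3x3(mask))


if __name__ == "__main__":
    frame = np.zeros((20, 20, 3), dtype=np.uint8)  # synthetic near-black frame
    frame[5:12, 5:12] = 120                         # synthetic contact patch
    frame[1, 1] = 200                               # single-pixel speckle
    mask = segment_contact(frame)
    print("contact pixels:", int(mask.sum()))
```

A fixed global threshold is viable here only because the optics keep non-contact pixels near-black; with a conventional camera image the same code would not separate contact from background.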

Experimental evaluation covered a diverse set of materials: water, facial cream, thin food‑film, plastics, metals, and semi‑liquids. Across bright indoor lighting, outdoor sunlight, and controlled dark conditions, non‑contact pixels consistently remained near‑black (mean gray value 2.1 ± 0.4), while contact pixels preserved the object’s natural color with > 95 % fidelity. Compared to prior TIR‑based tactile sensors, LightTact showed dramatically higher robustness to ambient illumination and required no monochromatic objects or dark enclosures. Contact detection accuracy exceeded 98 % in all tested scenarios.
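Statistics of this kind can be computed from a grayscale frame and a contact mask. The summary does not define how its figures were measured, so the sketch below is one plausible reading under stated assumptions: background darkness is the mean and standard deviation of gray values over non-contact pixels, and accuracy is the fraction of pixels whose predicted label matches a hand-labeled ground-truth mask. Function names and the synthetic data are hypothetical.

```python
import numpy as np
from typing import Tuple


def background_gray_stats(gray: np.ndarray, contact_mask: np.ndarray) -> Tuple[float, float]:
    """Mean and standard deviation of gray values over non-contact pixels."""
    background = gray[~contact_mask]
    if background.size == 0:
        raise ValueError("mask marks every pixel as contact; no background to measure")
    return float(background.mean()), float(background.std())


def pixel_accuracy(predicted: np.ndarray, truth: np.ndarray) -> float:
    """Fraction of pixels where the predicted mask agrees with the ground truth."""
    if predicted.shape != truth.shape:
        raise ValueError("masks must have the same shape")
    return float((predicted == truth).mean())


if __name__ == "__main__":
    # Synthetic 4x4 example, not measured data.
    gray = np.full((4, 4), 2.0, dtype=np.float32)
    gray[1:3, 1:3] = 100.0
    truth = gray > 3.0
    predicted = truth.copy()
    predicted[0, 0] = True  # one deliberately wrong pixel
    print(background_gray_stats(gray, truth))
    print(pixel_accuracy(predicted, truth))
```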

To demonstrate functional benefits, LightTact was mounted on a dexterous robotic hand (e.g., the Amazing Hand). The sensor enabled manipulation tasks that depend on extremely light contact: (1) spreading water evenly on a tabletop, where the sensor instantly visualized the wet region; (2) dipping a fingertip in facial cream and applying a thin, uniform layer, with contact detection at forces far below the threshold of deformation‑based sensors; (3) grasping and peeling ultra‑thin food‑film without tearing, by precisely locating the contact line.

Beyond tactile perception, the raw RGB images can be fed directly to vision‑language models (VLMs). In a proof‑of‑concept experiment, a VLM correctly inferred the resistance value printed on a small component observed through LightTact, illustrating the sensor’s potential for multimodal reasoning and high‑level semantic understanding.

Limitations include difficulty distinguishing self‑luminous objects (e.g., LEDs, displays) and reduced scattering for highly specular surfaces, which may lower the signal‑to‑noise ratio. Future work will explore multi‑spectral illumination, micro‑structured black absorbers to further suppress stray light, and deep‑learning approaches to estimate contact pressure quantitatively, thereby extending LightTact from binary contact detection to full tactile force sensing.

In summary, LightTact introduces a novel, deformation‑independent visual‑tactile sensing paradigm that delivers high‑contrast, material‑agnostic contact images in a compact, low‑cost package. Its ability to perceive ultra‑light contacts, preserve natural visual appearance, and interface seamlessly with modern AI models opens new avenues for robotic manipulation, human‑robot interaction, and multimodal perception research.
