Designing Beyond Language: Sociotechnical Barriers in AI Health Technologies for Limited English Proficiency

Limited English proficiency (LEP) patients in the U.S. face systemic barriers to healthcare beyond language and interpreter access, encompassing procedural and institutional constraints. AI advances may support communication and care through on-demand translation and visit preparation, but also risk exacerbating existing inequalities. We conducted storyboard-driven interviews with 14 patient navigators to explore how AI could shape care experiences for Spanish-speaking LEP individuals. We identified tensions around linguistic and cultural misunderstandings, privacy concerns, and opportunities and risks for AI to augment care workflows. Participants highlighted structural factors that can undermine trust in AI systems, including sensitive information disclosure, unstable technology access, and low literacy. While AI tools can potentially alleviate social barriers and institutional constraints, they also risk spreading misinformation and reducing human-to-human interaction. Our findings contribute AI design considerations that support LEP patients and care teams via rapport-building, educational and language support, and minimizing disruptions to existing practices.

💡 Research Summary

This paper investigates the sociotechnical challenges and design opportunities for AI‑driven health technologies aimed at patients with limited English proficiency (LEP) in the United States, focusing on Spanish‑speaking individuals. The authors conducted storyboard‑driven semi‑structured interviews with 14 patient navigators—professionals who accompany LEP patients to appointments, translate, teach digital skills, and coordinate resources. By presenting six visual scenarios (pre‑visit preparation, real‑time translation, in‑visit AI assistance, post‑visit summarization and education, privacy management, and technology access), the study elicited navigators’ lived experiences, perceived pain points, and reactions to potential AI interventions.

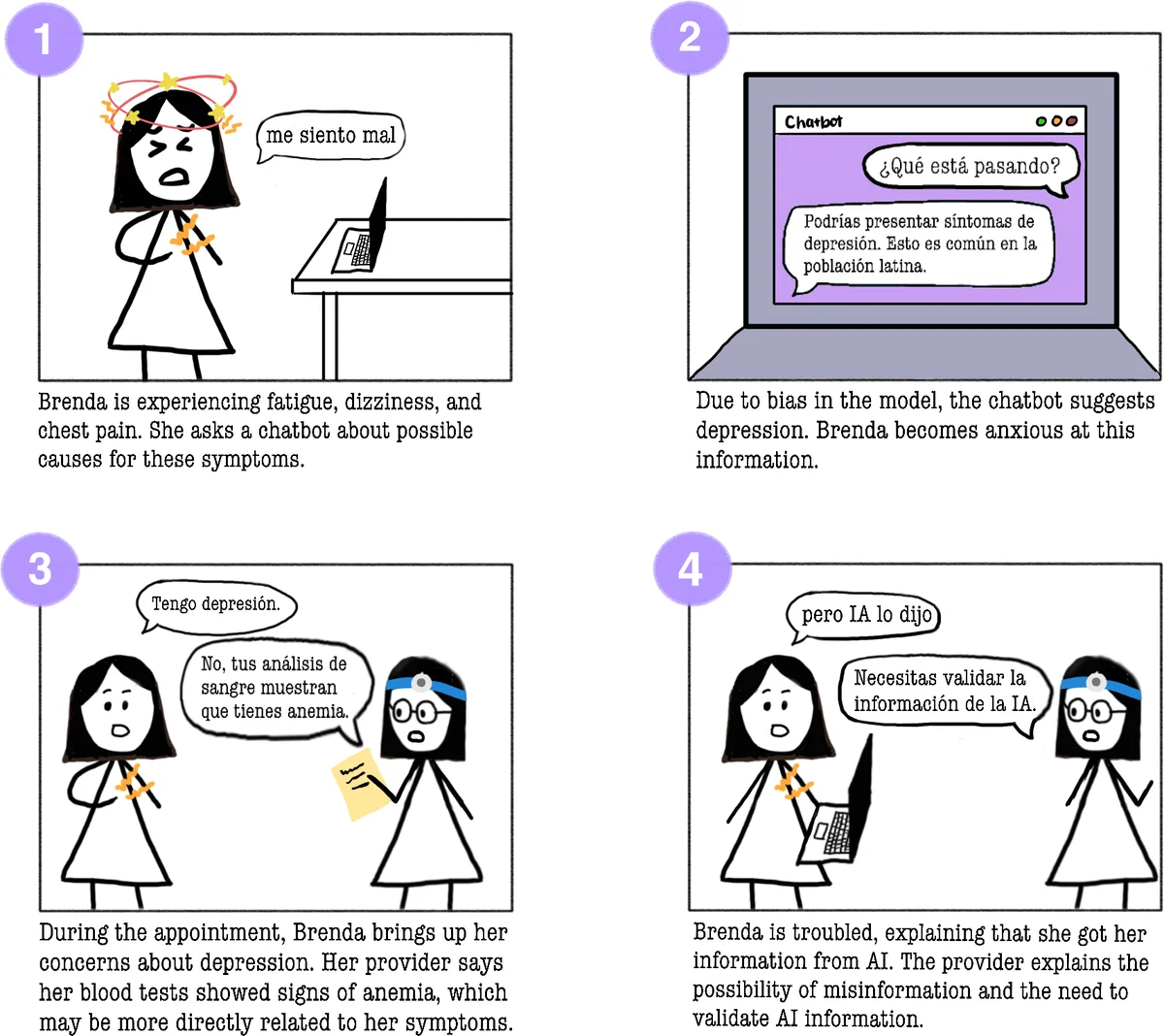

Key findings fall into four thematic clusters. First, linguistic and cultural misunderstandings extend beyond literal translation errors to include cultural norms such as family‑centric decision‑making and differing expectations of medical authority. Second, privacy and data sensitivity are paramount; many LEP patients, particularly recent immigrants, fear legal repercussions or discrimination if personal health information is mishandled. Third, digital and health literacy deficits combined with unstable internet or device access create a practical barrier to any AI tool, regardless of its technical sophistication. Fourth, AI promises and perils co‑exist: participants see value in on‑demand translation, visit‑prep prompts, and simplified medical explanations, yet worry about misinformation, erosion of human support (especially the navigator’s role), and loss of trust if AI behaves opaquely or makes errors.

From these insights, the authors derive four design principles. 1) Cultural and linguistic sensitivity: AI models must be fine‑tuned with community‑sourced data, respect dialectal variation, and embed cultural context in explanations. 2) Support for low literacy: interfaces should rely on icons, audio, and step‑by‑step guidance rather than dense text. 3) Privacy‑by‑design and transparency: clear consent flows, minimal data collection, and user‑facing explanations of how data are used are essential to build trust. 4) Human‑in‑the‑loop integration: AI should augment, not replace, patient navigators and clinicians, offering suggestions that a human verifies before delivery.
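The fourth principle can be illustrated with a small sketch. This is not the authors' implementation: the paper reports interview findings, not a system. The class and method names below (`ReviewQueue`, `submit`, `review`, `deliver`) are hypothetical, and the example assumes a workflow in which an AI‑generated message for a patient is held until a navigator or clinician approves or edits it.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Draft:
    """An AI-generated suggestion awaiting human review (hypothetical type)."""
    draft_id: int
    text: str
    status: Status = Status.PENDING
    reviewer: Optional[str] = None


class ReviewQueue:
    """Holds AI drafts and releases them only after human approval."""

    def __init__(self) -> None:
        self._drafts: Dict[int, Draft] = {}
        self._next_id = 1

    def submit(self, text: str) -> Draft:
        # Every AI output enters the queue as PENDING; nothing is sent yet.
        draft = Draft(self._next_id, text)
        self._drafts[draft.draft_id] = draft
        self._next_id += 1
        return draft

    def review(self, draft_id: int, reviewer: str, approve: bool,
               edited_text: Optional[str] = None) -> Draft:
        # The reviewer may correct the wording before approving it.
        draft = self._drafts[draft_id]
        if edited_text is not None:
            draft.text = edited_text
        draft.status = Status.APPROVED if approve else Status.REJECTED
        draft.reviewer = reviewer
        return draft

    def deliver(self, draft_id: int) -> str:
        # Delivery is blocked unless a human has approved the draft.
        draft = self._drafts[draft_id]
        if draft.status is not Status.APPROVED:
            raise PermissionError("draft has not been approved by a human reviewer")
        return draft.text
```

In this sketch, calling `deliver` on a pending or rejected draft raises an error, so the human check cannot be skipped by accident. A real deployment would also need the consent, data‑minimization, and low‑literacy interface considerations named in the other three principles, which this example does not attempt to cover.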

The paper situates its contributions within prior work on interpreter services, digital health adoption among marginalized groups, and known biases in large language models. It highlights that most existing AI health tools target digitally literate, English‑speaking users, leaving LEP populations at risk of further exclusion. By foregrounding the perspectives of patient navigators—who act as cultural brokers—the study offers a nuanced view of how AI can be woven into existing care workflows without disrupting essential human relationships.

Limitations include the focus on Spanish‑speaking LEP patients (other language groups may face distinct challenges), reliance on navigators' accounts rather than direct patient input, and a small sample of 14 participants. The authors propose future work involving co‑design workshops with LEP patients, pilot deployments of prototype AI assistants, and longitudinal evaluation of trust, health outcomes, and workflow impact.

Overall, the paper argues that successful AI health interventions for LEP populations must be socially aware, culturally attuned, privacy‑respectful, and seamlessly integrated with the human support structures already in place. Designed this way, AI can help reduce language barriers while preserving the rapport and advocacy that patient navigators provide, ultimately moving toward more equitable healthcare delivery.
