Uni-DPO: A Unified Paradigm for Dynamic Preference Optimization of LLMs

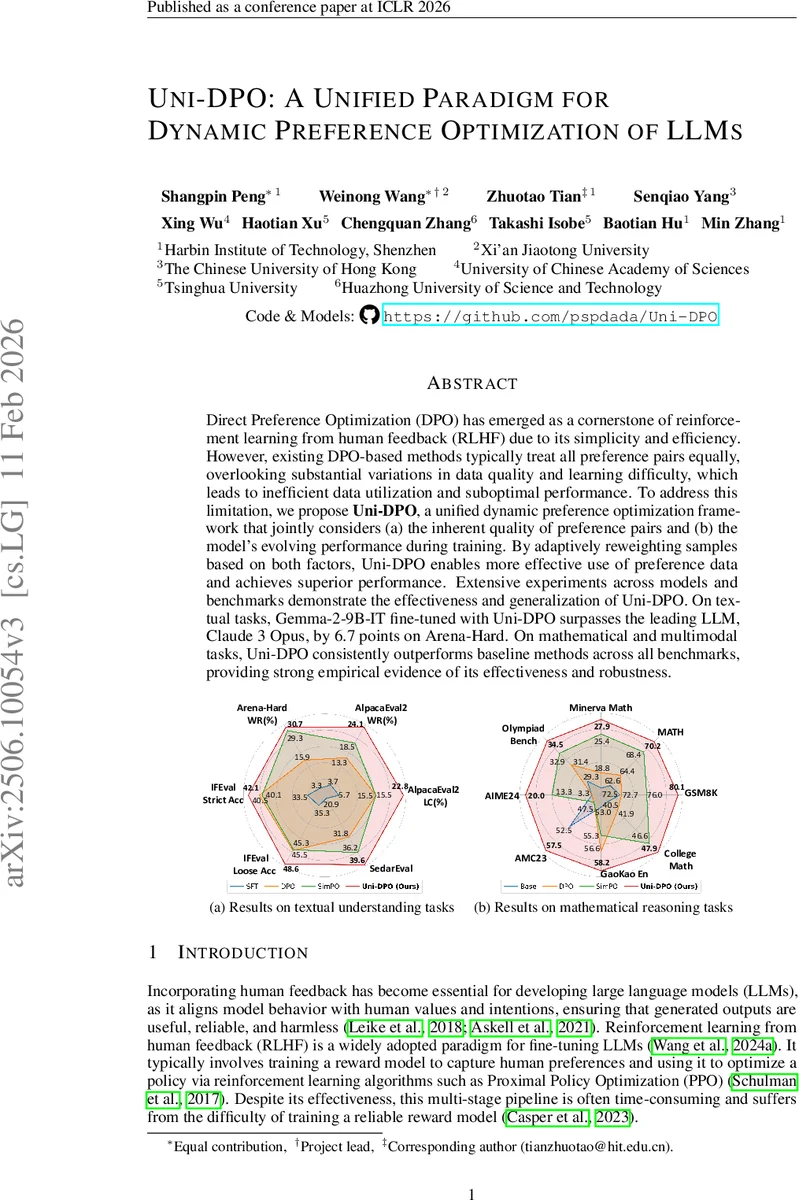

Direct Preference Optimization (DPO) has emerged as a cornerstone of reinforcement learning from human feedback (RLHF) due to its simplicity and efficiency. However, existing DPO-based methods typically treat all preference pairs equally, overlooking substantial variations in data quality and learning difficulty, which leads to inefficient data utilization and suboptimal performance. To address this limitation, we propose Uni-DPO, a unified dynamic preference optimization framework that jointly considers (a) the inherent quality of preference pairs and (b) the model’s evolving performance during training. By adaptively reweighting samples based on both factors, Uni-DPO enables more effective use of preference data and achieves superior performance. Extensive experiments across models and benchmarks demonstrate the effectiveness and generalization of Uni-DPO. On textual tasks, Gemma-2-9B-IT fine-tuned with Uni-DPO surpasses the leading LLM, Claude 3 Opus, by 6.7 points on Arena-Hard. On mathematical and multimodal tasks, Uni-DPO consistently outperforms baseline methods across all benchmarks, providing strong empirical evidence of its effectiveness and robustness.

💡 Research Summary

Uni‑DPO addresses a fundamental limitation of Direct Preference Optimization (DPO) and its recent variants: the assumption that every preference pair contributes equally to model training. In practice, human‑annotated or model‑generated preference data exhibit large variations in quality, and the difficulty of learning from each pair changes as the policy evolves. Ignoring these factors leads to inefficient data utilization, potential over‑fitting on easy high‑quality pairs, and sub‑optimal alignment with human preferences.

The authors propose a unified dynamic preference optimization framework that simultaneously accounts for (a) the intrinsic quality of each preference pair and (b) the model’s current performance on that pair. Two adaptive weighting factors are introduced. The quality‑aware weight, w₍qual₎, is derived from the score margin (S_w − S_l) supplied by human annotators or strong LLMs (e.g., GPT‑4). After scaling by a hyper‑parameter η and passing through a sigmoid, w₍qual₎ ∈

Comments & Academic Discussion

Loading comments...

Leave a Comment