Bootstrapping Action-Grounded Visual Dynamics in Unified Vision-Language Models

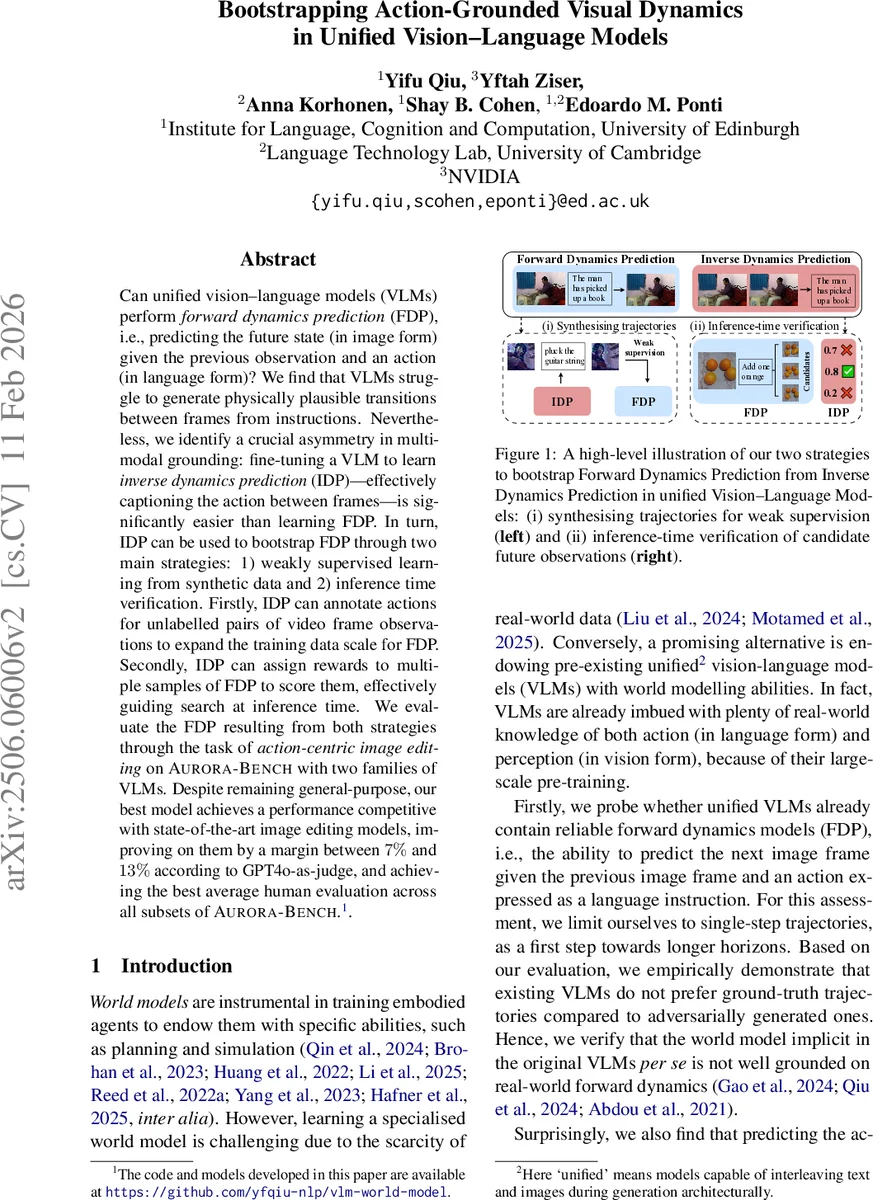

Can unified vision-language models (VLMs) perform forward dynamics prediction (FDP), i.e., predicting the future state (in image form) given the previous observation and an action (in language form)? We find that VLMs struggle to generate physically plausible transitions between frames from instructions. Nevertheless, we identify a crucial asymmetry in multimodal grounding: fine-tuning a VLM to learn inverse dynamics prediction (IDP), effectively captioning the action between frames, is significantly easier than learning FDP. In turn, IDP can be used to bootstrap FDP through two main strategies: 1) weakly supervised learning from synthetic data and 2) inference time verification. Firstly, IDP can annotate actions for unlabelled pairs of video frame observations to expand the training data scale for FDP. Secondly, IDP can assign rewards to multiple samples of FDP to score them, effectively guiding search at inference time. We evaluate the FDP resulting from both strategies through the task of action-centric image editing on Aurora-Bench with two families of VLMs. Despite remaining general-purpose, our best model achieves a performance competitive with state-of-the-art image editing models, improving on them by a margin between $7%$ and $13%$ according to GPT4o-as-judge, and achieving the best average human evaluation across all subsets of Aurora-Bench.

💡 Research Summary

This paper investigates whether large unified vision‑language models (VLMs) can perform forward dynamics prediction (FDP): given a current image observation and a textual description of an action, can the model generate a plausible future image? The authors first conduct a systematic zero‑shot evaluation of nine state‑of‑the‑art VLMs (including Qwen2‑VL, LLaVA, Chameleon, etc.) on five subsets of the Aurora‑Bench benchmark (MagicBrush, Action‑Genome, Something‑Something, WhatsUp, Kubric). For each test case they compare the model’s log‑likelihood on the ground‑truth (observation‑action‑observation) triplet against four types of adversarially manipulated negatives (random action, random observation, copied observation, swapped observation). The results show that VLMs do not consistently prefer the real trajectories: preference rates hover around 50 % for forward prediction and only modestly above chance (up to ~60 %) for inverse dynamics prediction (IDP), i.e., captioning the action between two frames. This demonstrates that, despite massive pre‑training on image‑text data, VLMs lack a grounded notion of temporal causality.

Nevertheless, the same models can be fine‑tuned on a modest amount of labeled triplets (Aurora and EPIC‑Kitchen) to achieve IDP performance well above random. The authors exploit this asymmetry—IDP is learnable, FDP is not—to bootstrap forward dynamics abilities. They propose two complementary strategies:

-

Weakly supervised learning from synthetic data

A high‑performing IDP model is used to annotate large collections of unlabeled video clips (45 h total from Moments‑in‑Time, Kinetics‑700, UCF‑101). Key‑frame pairs are selected based on optical‑flow magnitude (top‑K frames with a minimum interval). The IDP predicts an action label for each pair, yielding roughly 20 K–46 K synthetic (source image, action, target image) triplets. These synthetic triplets are combined with the original Aurora supervised set to fine‑tune the same VLM as a forward‑dynamics model (FDM). Crucially, the authors introduce a recognition‑weighted loss: a pre‑trained VLM encoder computes token‑wise similarity between source and target; tokens that change most receive higher loss weight, encouraging the model to focus on regions directly affected by the action rather than copying the whole image. -

Inference‑time verification

At test time the FDM samples N candidate future images. Each candidate, together with the source image, is scored by the IDP, which returns p_IDM(action | source, candidate). The candidate with the highest score is selected as the final prediction. This “test‑time verification” requires no additional training but incurs extra compute proportional to N. Experiments show that even with modest N (e.g., 5–20) this step yields performance gains comparable to the weakly supervised training route.

The authors evaluate both bootstrapping routes on the five Aurora‑Bench subsets using two VLM families: Chameleon‑7B and Liquid‑8B. Baselines include zero‑shot VLMs, VLMs fine‑tuned only on Aurora supervised data, and three diffusion‑based image‑editing models (PixInstruct, GoT, SmartEdit). Metrics comprise GPT‑4o‑as‑judge scores and human preference studies. The best configuration (Liquid‑8B fine‑tuned with recognition‑weighted loss and test‑time verification) achieves an average human rating of 84.3, surpassing the diffusion baselines by 7 %–13 % and attaining the highest scores across all Aurora subsets. Notably, the verification‑only variant (no extra training) still closes most of the gap to the fully fine‑tuned model, demonstrating the practical value of the IDP verifier.

Key insights from the work are:

- Asymmetry in multimodal grounding – VLMs can learn to map two images to an action (IDP) far more easily than to map an image and an action to a future image (FDP).

- Data amplification via IDP – High‑quality synthetic trajectories can be generated at scale without manual annotation, effectively turning a weak IDP into a data source for FDP.

- Region‑aware loss improves FDP – Weighting token‑wise loss by visual change focuses learning on dynamic regions, mitigating the degenerate solution of simply copying the source image.

- Test‑time verification as a training‑free boost – Leveraging the IDP as a scorer aligns generated candidates with plausible actions, offering a flexible, compute‑adjustable improvement.

The paper concludes that while current VLMs lack built‑in forward dynamics, they can be endowed with such capabilities through relatively inexpensive bootstrapping. Future directions include extending to multi‑step (long‑horizon) predictions, integrating with video generation pipelines, and combining learned dynamics with explicit physics simulators to build more robust world models for embodied agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment