Quantifying and Improving the Robustness of Retrieval-Augmented Language Models Against Spurious Features in Grounding Data

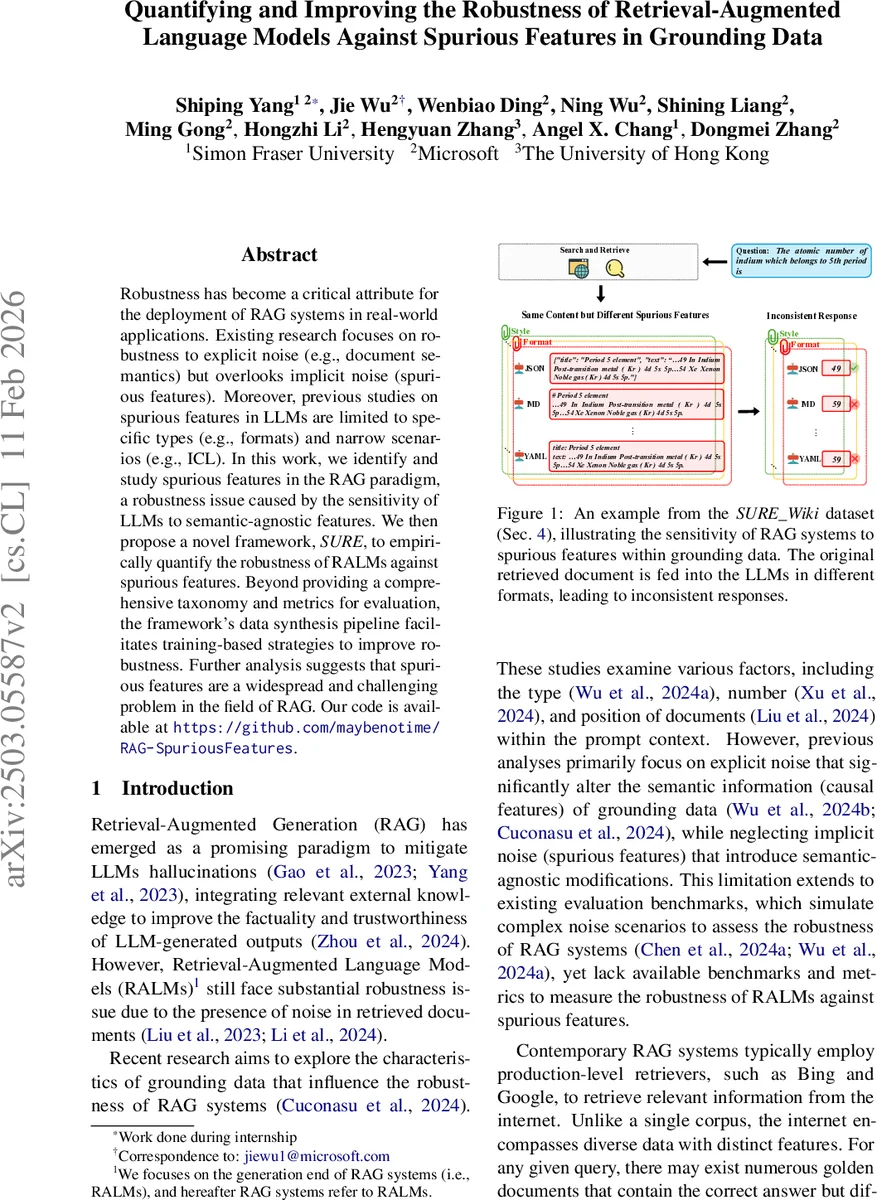

Robustness has become a critical attribute for the deployment of RAG systems in real-world applications. Existing research focuses on robustness to explicit noise (e.g., document semantics) but overlooks implicit noise (spurious features). Moreover, previous studies on spurious features in LLMs are limited to specific types (e.g., formats) and narrow scenarios (e.g., ICL). In this work, we identify and study spurious features in the RAG paradigm, a robustness issue caused by the sensitivity of LLMs to semantic-agnostic features. We then propose a novel framework, SURE, to empirically quantify the robustness of RALMs against spurious features. Beyond providing a comprehensive taxonomy and metrics for evaluation, the framework’s data synthesis pipeline facilitates training-based strategies to improve robustness. Further analysis suggests that spurious features are a widespread and challenging problem in the field of RAG. Our code is available at https://github.com/maybenotime/RAG-SpuriousFeatures .

💡 Research Summary

The paper addresses a previously under‑explored robustness problem in Retrieval‑Augmented Generation (RAG) systems: the sensitivity of large language model (LLM) readers to “spurious features”—semantic‑agnostic modifications such as changes in document style, format, source, logical ordering, or metadata that do not alter the factual content (causal features). While prior work has focused on explicit noise that changes the meaning of retrieved documents, this study systematically defines, categorizes, and quantifies the impact of implicit, non‑meaningful noise on RAG performance.

The authors introduce a comprehensive taxonomy comprising five high‑level categories (Style, Source, Logic, Format, Metadata) and 13 concrete perturbations (e.g., Simple vs. Complex style, LLM‑generated vs. self‑generated source, random/reverse/LLM‑reranked sentence order, HTML/Markdown/JSON/YAML formats, timestamp and data‑source metadata). To evaluate robustness, they propose the SURE framework (Spurious Feature Robustness Evaluation), which consists of four stages: (1) preparing raw instances (1,000 queries from NQ‑Open, each paired with 100 Wikipedia documents, yielding 100 k instances), (2) automatically injecting spurious features using a mix of LLM‑based generation (Llama‑3.1‑70B‑Instruct) and rule‑based transformations, (3) preserving causal features via bidirectional entailment checks (NLI) and string‑matching to ensure semantic equivalence and answer aliasing are avoided, and (4) measuring performance with three metrics—Win Rate (answers remain correct after perturbation), Lose Rate (answers become incorrect), and Robustness Rate (Win / (Win + Lose)).

From the full synthetic dataset, the authors extract the most challenging cases to form a lightweight benchmark called SIG_Wiki, enabling rapid evaluation of spurious‑feature robustness. Experiments span multiple LLMs (Claude‑2, GPT‑4, Llama‑2‑70B, Llama‑3.1‑70B) and retrievers (Bing, Google, dense retriever), revealing that most models suffer low robustness, especially to format and metadata perturbations (Robustness Rate often below 45 %). Some style simplifications even improve accuracy for certain models, indicating that not all spurious features are harmful.

To mitigate the identified weakness, two training‑based strategies are proposed: (a) fine‑tuning on a mixture of original and spurious‑feature‑augmented data, and (b) multi‑task learning where the model is explicitly trained to ignore spurious cues. Both approaches substantially raise Robustness Rate on SIG_Wiki (by 12–18 percentage points) and dramatically improve resistance to format changes (up to 25 pp gain).

The contributions are threefold: (1) a first‑of‑its‑kind systematic study of spurious features in RAG, (2) the SURE framework and SIG_Wiki benchmark for automated evaluation, and (3) practical data‑driven methods to enhance RAG robustness. The work highlights that real‑world web content, with its diverse formats and metadata, can unintentionally bias LLM readers, and that synthetic data generation combined with targeted training can substantially alleviate this bias. Limitations include focus on English Wikipedia/NQ data and the need for multilingual extensions, but the methodology provides a solid foundation for future robustness research in retrieval‑augmented generation.

Comments & Academic Discussion

Loading comments...

Leave a Comment