Hardware Co-Design Scaling Laws via Roofline Modelling for On-Device LLMs

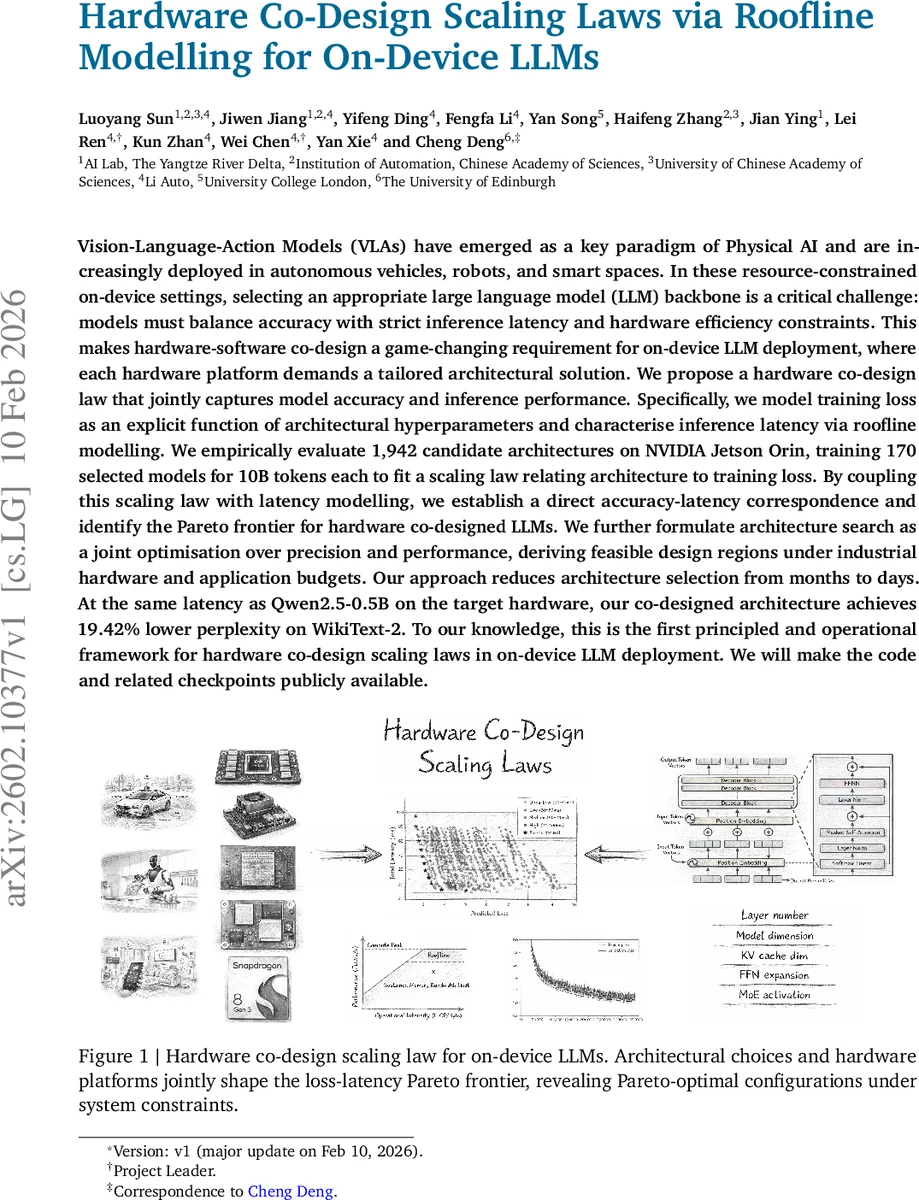

Vision-Language-Action Models (VLAs) have emerged as a key paradigm of Physical AI and are increasingly deployed in autonomous vehicles, robots, and smart spaces. In these resource-constrained on-device settings, selecting an appropriate large language model (LLM) backbone is a critical challenge: models must balance accuracy with strict inference latency and hardware efficiency constraints. This makes hardware-software co-design a game-changing requirement for on-device LLM deployment, where each hardware platform demands a tailored architectural solution. We propose a hardware co-design law that jointly captures model accuracy and inference performance. Specifically, we model training loss as an explicit function of architectural hyperparameters and characterise inference latency via roofline modelling. We empirically evaluate 1,942 candidate architectures on NVIDIA Jetson Orin, training 170 selected models for 10B tokens each to fit a scaling law relating architecture to training loss. By coupling this scaling law with latency modelling, we establish a direct accuracy-latency correspondence and identify the Pareto frontier for hardware co-designed LLMs. We further formulate architecture search as a joint optimisation over precision and performance, deriving feasible design regions under industrial hardware and application budgets. Our approach reduces architecture selection from months to days. At the same latency as Qwen2.5-0.5B on the target hardware, our co-designed architecture achieves 19.42% lower perplexity on WikiText-2. To our knowledge, this is the first principled and operational framework for hardware co-design scaling laws in on-device LLM deployment. We will make the code and related checkpoints publicly available.

💡 Research Summary

The paper tackles the pressing problem of selecting an appropriate large language model (LLM) backbone for on‑device deployment in Vision‑Language‑Action (VLA) systems such as autonomous vehicles, robots, and smart spaces. In edge environments, models must satisfy a three‑way trade‑off among accuracy, inference latency, and hardware efficiency, which makes hardware‑software co‑design essential. The authors propose a “hardware co‑design law” that jointly captures model loss (as a proxy for accuracy) and inference latency, enabling a principled Pareto analysis of the accuracy‑latency frontier for a given hardware platform.

Formulation

The architecture is parameterised by θ = (l, d, dm, r, ρ), where l is depth, d hidden dimension, dm the KV‑cache size (derived from d and the grouped‑query‑key‑value ratio gqa), r the feed‑forward expansion ratio, and ρ the expert activation rate for Mixture‑of‑Experts (MoE). The optimisation problem is:

minθ L(θ) subject to T(θ; H, W) ≤ T_lat and M(θ; W) ≤ M_budget,

where L(θ) is validation loss, T the latency surrogate, M the memory surrogate, H the hardware characteristics (peak FLOPS π_H and memory bandwidth β_H), and W the workload (batch size, input/output sequence lengths).

Loss Scaling Law

Building on classic scaling analyses (e.g., Kaplan et al.), the authors fit an explicit polynomial model to empirical loss data:

\

Comments & Academic Discussion

Loading comments...

Leave a Comment