Causal Effect Estimation with Learned Instrument Representations

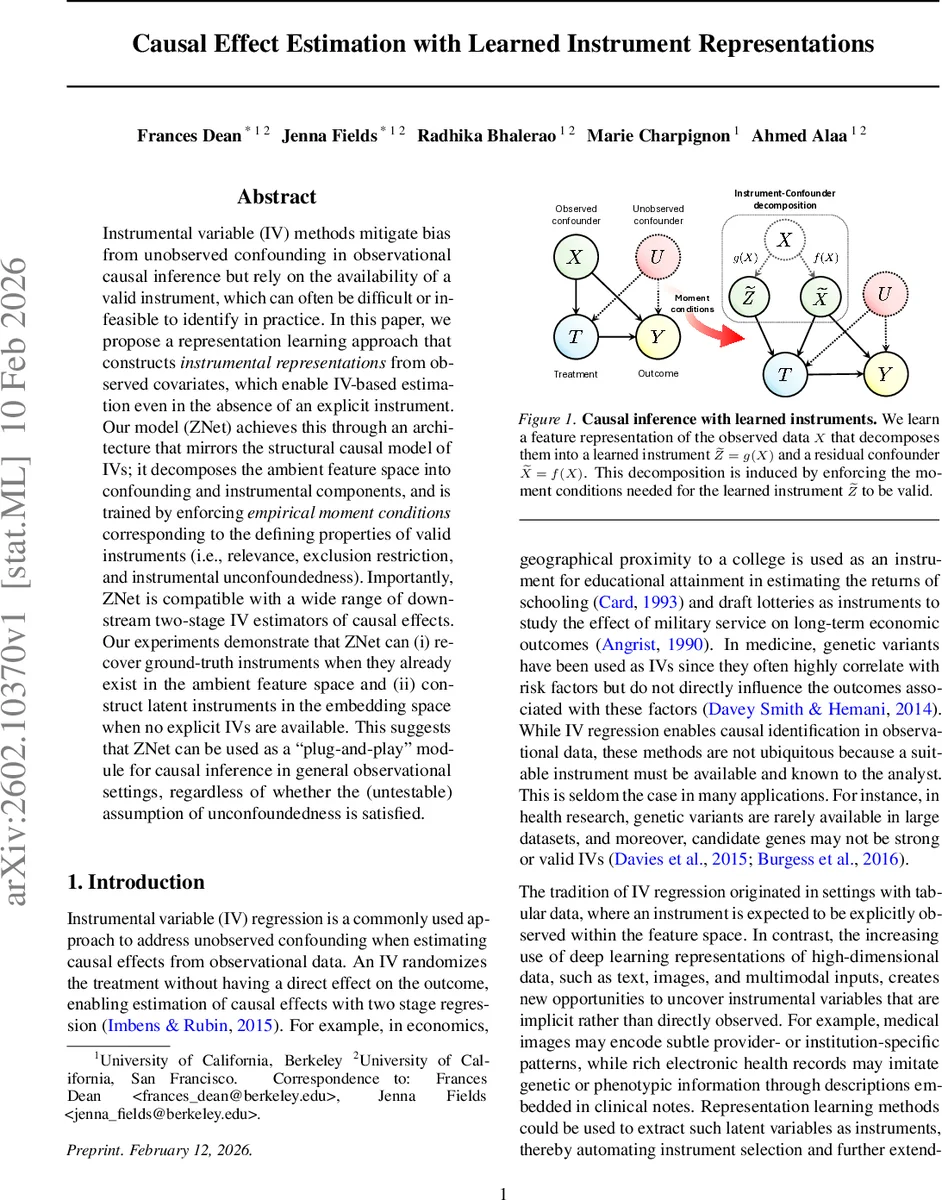

Instrumental variable (IV) methods mitigate bias from unobserved confounding in observational causal inference but rely on the availability of a valid instrument, which can often be difficult or infeasible to identify in practice. In this paper, we propose a representation learning approach that constructs instrumental representations from observed covariates, which enable IV-based estimation even in the absence of an explicit instrument. Our model (ZNet) achieves this through an architecture that mirrors the structural causal model of IVs; it decomposes the ambient feature space into confounding and instrumental components, and is trained by enforcing empirical moment conditions corresponding to the defining properties of valid instruments (i.e., relevance, exclusion restriction, and instrumental unconfoundedness). Importantly, ZNet is compatible with a wide range of downstream two-stage IV estimators of causal effects. Our experiments demonstrate that ZNet can (i) recover ground-truth instruments when they already exist in the ambient feature space and (ii) construct latent instruments in the embedding space when no explicit IVs are available. This suggests that ZNet can be used as a ``plug-and-play’’ module for causal inference in general observational settings, regardless of whether the (untestable) assumption of unconfoundedness is satisfied.

💡 Research Summary

The paper addresses a fundamental obstacle in instrumental variable (IV) analysis: the difficulty of finding a valid instrument in many observational settings, especially when dealing with high‑dimensional, non‑tabular data. The authors propose a representation‑learning framework called ZNet that automatically constructs an instrumental representation from the observed covariates X, thereby enabling IV‑based causal effect estimation even when no explicit instrument is available.

Core Idea and Model Architecture

ZNet decomposes the observed feature vector X into two latent components: a confounding representation C = f(X) and an instrumental representation Z = g(X). Two neural encoders, f and g, are trained jointly with three downstream networks: Φ predicts the conditional mean of Y given (X,T) to obtain residuals, φ predicts Y given (C,T), and π predicts T given Z. The architecture mirrors the structural causal model (SCM) of a classic IV setting, where Z influences T but has no direct effect on Y, and all direct effects of X on Y are mediated through C.

Moment‑Based Regularization

To ensure that the learned Z satisfies the three IV conditions, the authors impose empirical moment constraints during training:

- Relevance – Cov(Z, T) > 0, enforced by minimizing the supervised loss for π (treatment prediction) and by a Lagrange term encouraging a positive correlation.

- Exclusion Restriction – Cov(Z, ε̃Y) = 0, where ε̃Y = Y − E

Comments & Academic Discussion

Loading comments...

Leave a Comment