Latent Thoughts Tuning: Bridging Context and Reasoning with Fused Information in Latent Tokens

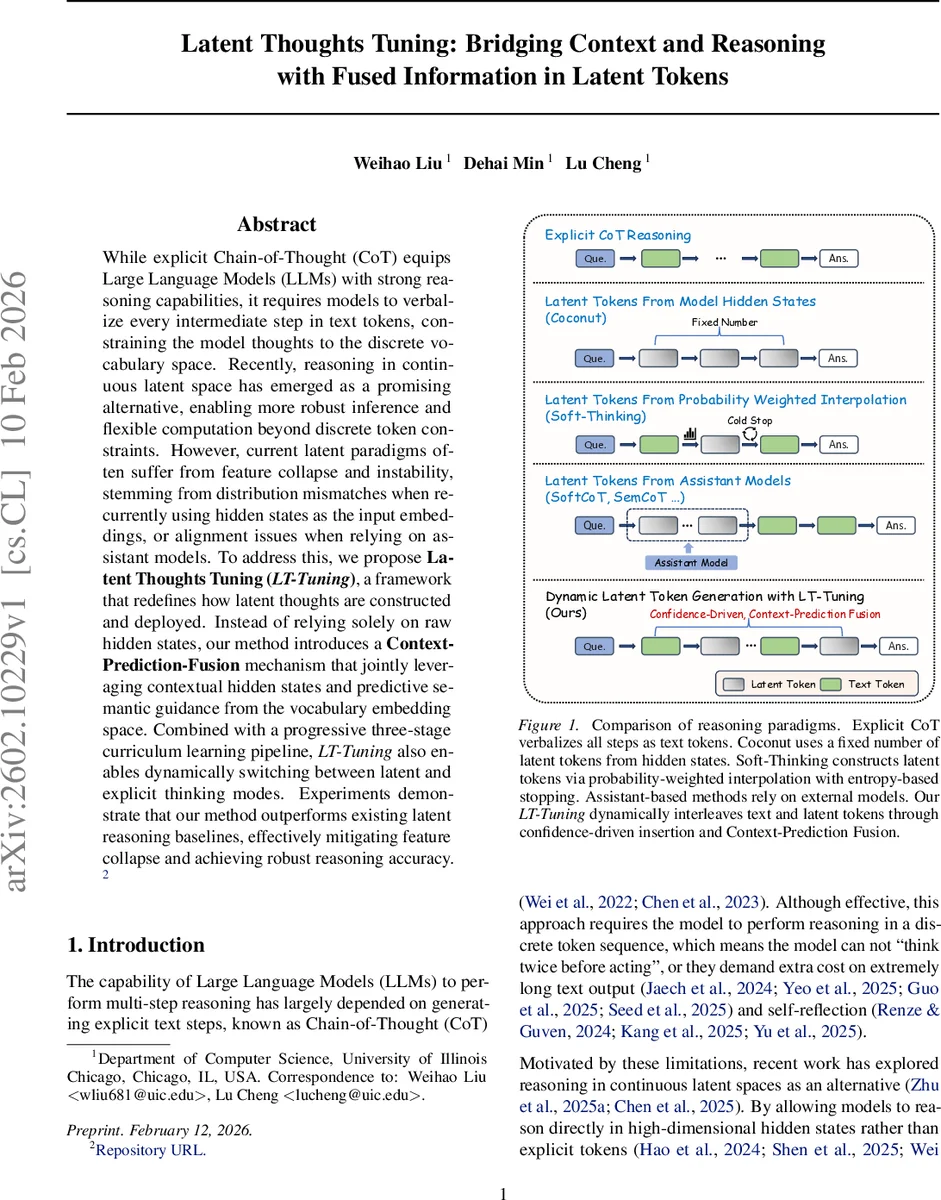

While explicit Chain-of-Thought (CoT) equips Large Language Models (LLMs) with strong reasoning capabilities, it requires models to verbalize every intermediate step in text tokens, constraining the model thoughts to the discrete vocabulary space. Recently, reasoning in continuous latent space has emerged as a promising alternative, enabling more robust inference and flexible computation beyond discrete token constraints. However, current latent paradigms often suffer from feature collapse and instability, stemming from distribution mismatches when recurrently using hidden states as the input embeddings, or alignment issues when relying on assistant models. To address this, we propose Latent Thoughts Tuning (LT-Tuning), a framework that redefines how latent thoughts are constructed and deployed. Instead of relying solely on raw hidden states, our method introduces a Context-Prediction-Fusion mechanism that jointly leveraging contextual hidden states and predictive semantic guidance from the vocabulary embedding space. Combined with a progressive three-stage curriculum learning pipeline, LT-Tuning also enables dynamically switching between latent and explicit thinking modes. Experiments demonstrate that our method outperforms existing latent reasoning baselines, effectively mitigating feature collapse and achieving robust reasoning accuracy.

💡 Research Summary

The paper addresses the limitations of explicit Chain‑of‑Thought (CoT) prompting, which forces large language models (LLMs) to verbalize every intermediate reasoning step as discrete tokens. While recent work has explored reasoning directly in continuous latent spaces, existing approaches suffer from two major problems: (1) feature collapse and instability caused by a distribution mismatch when raw hidden states are reused as input embeddings, especially in models with untied input‑output embeddings; and (2) static allocation of latent reasoning steps, which wastes computation on easy sub‑problems and provides insufficient depth for hard ones.

LT‑Tuning (Latent Thoughts Tuning) proposes a unified solution. Its core is the Context‑Prediction Fusion mechanism, which constructs each latent token by linearly blending the contextual hidden state from a chosen transformer layer with a probability‑weighted embedding derived from the model’s vocabulary distribution. This fusion aligns the latent input with the model’s embedding manifold while preserving contextual information, thereby mitigating feature collapse.

A confidence‑driven dynamic insertion strategy further improves efficiency: a special

Training proceeds through a three‑stage curriculum. Stage 1 fine‑tunes the base model on standard CoT data to acquire basic step‑by‑step reasoning. Stage 2 introduces

Experiments on Llama‑3.2‑1B, 3B, and 8B models across four mathematical reasoning benchmarks (GSM8K, ASDiv‑Aug, MultiArith, SVAMP) show consistent gains over prior latent reasoning baselines such as Coconut and Soft‑Thinking, with up to a 4.3 percentage‑point improvement. The method scales well: unlike earlier approaches that degrade on larger models, LT‑Tuning remains robust, and a lightweight adapter suffices for models with separate input and output embeddings.

In summary, LT‑Tuning simultaneously resolves the distribution mismatch and static computation issues that have limited latent‑space reasoning, offering a post‑training plug‑in that can be applied to off‑the‑shelf LLMs. It delivers dynamic, stable, and semantically rich latent reasoning while preserving the strengths of explicit CoT, narrowing the performance gap between discrete and continuous reasoning paradigms.

Comments & Academic Discussion

Loading comments...

Leave a Comment