AD$^2$: Analysis and Detection of Adversarial Threats in Visual Perception for End-to-End Autonomous Driving Systems

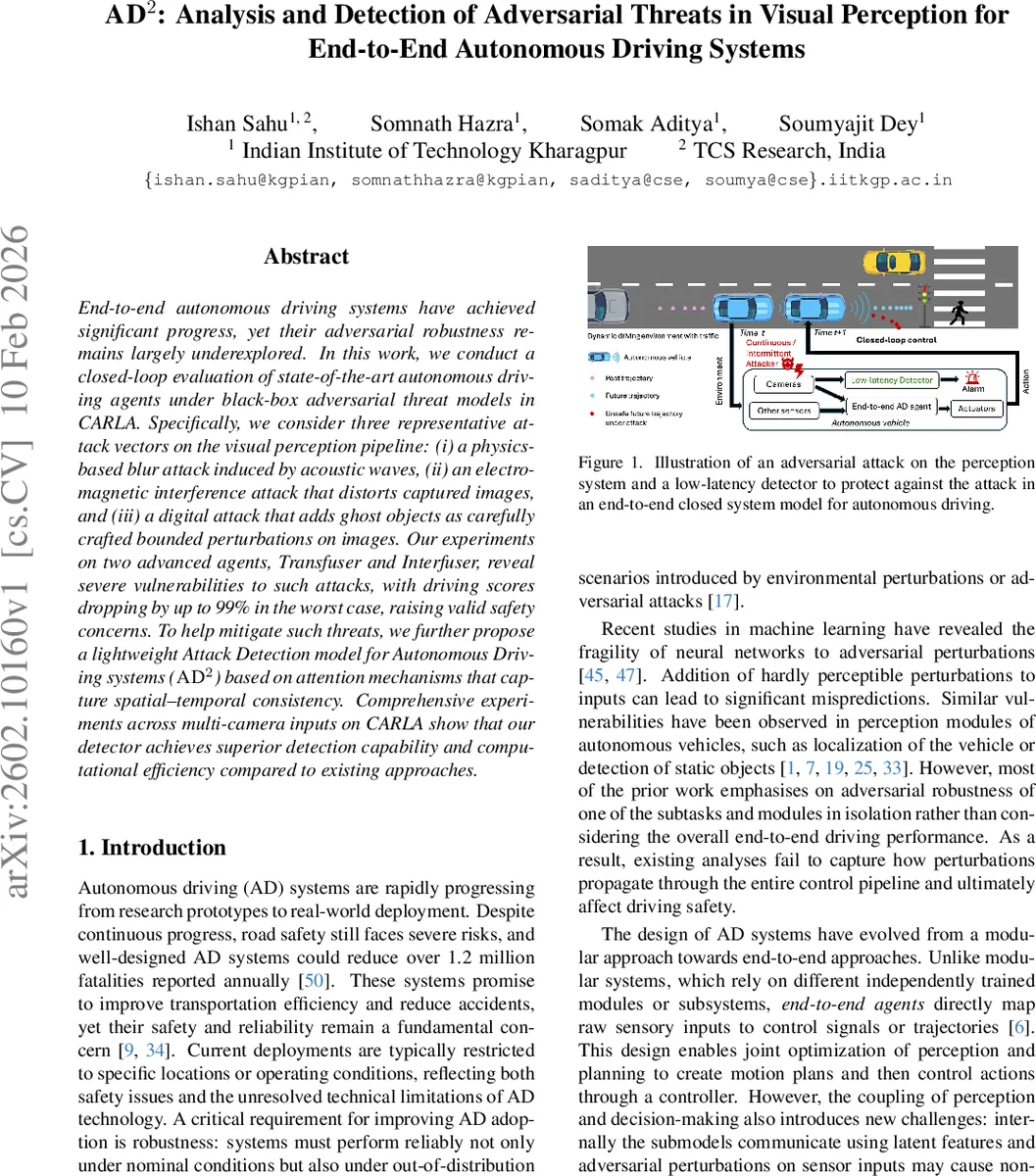

End-to-end autonomous driving systems have achieved significant progress, yet their adversarial robustness remains largely underexplored. In this work, we conduct a closed-loop evaluation of state-of-the-art autonomous driving agents under black-box adversarial threat models in CARLA. Specifically, we consider three representative attack vectors on the visual perception pipeline: (i) a physics-based blur attack induced by acoustic waves, (ii) an electromagnetic interference attack that distorts captured images, and (iii) a digital attack that adds ghost objects as carefully crafted bounded perturbations on images. Our experiments on two advanced agents, Transfuser and Interfuser, reveal severe vulnerabilities to such attacks, with driving scores dropping by up to 99% in the worst case, raising valid safety concerns. To help mitigate such threats, we further propose a lightweight Attack Detection model for Autonomous Driving systems (AD$^2$) based on attention mechanisms that capture spatial-temporal consistency. Comprehensive experiments across multi-camera inputs on CARLA show that our detector achieves superior detection capability and computational efficiency compared to existing approaches.

💡 Research Summary


This paper investigates the adversarial robustness of state‑of‑the‑art end‑to‑end autonomous driving (AD) agents with respect to attacks on their visual perception pipeline. The authors focus on three representative black‑box threat models that target camera inputs: (i) a physics‑based blur attack (named Poltergeist) that simulates acoustic interference with image stabilizers, (ii) an electromagnetic signal injection attack (ESIA) that creates colored strip distortions in captured frames, and (iii) a digital “ghost‑object” attack (SNAL) that injects carefully bounded perturbations to fabricate false objects. All attacks are evaluated in a closed‑loop setting using the CARLA simulator, where the agents receive feedback from their own actions, thereby exposing cascading effects of perception errors on planning and control.

Two leading multimodal agents, Transfuser and Interfuser, are tested across multiple urban and suburban scenarios. The attacks are applied either continuously at every timestep or intermittently every ten frames, allowing the authors to compare sustained versus sparse perturbations. Results show dramatic degradation: driving scores drop by up to 99% in the worst case, with frequent collisions, lane violations, and premature halts. The blur and electromagnetic attacks degrade overall image quality, breaking the implicit sensor‑fusion corrections that rely on consistent visual cues, while the ghost‑object attack confuses the latent object‑detection component, leading to spurious avoidance maneuvers.
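The two attack schedules can be illustrated with a minimal Python sketch. It shows only the timing logic, not the closed-loop CARLA evaluation, in which later frames depend on the agent's own actions. The names `should_attack`, `run_episode`, and `toy_attack` are hypothetical, the placeholder perturbation is not one of the paper's attacks, and starting the intermittent schedule at step 0 is an assumption.

```python
from typing import Callable, List

Frame = List[float]  # stand-in for an image; a real frame would be a pixel array


def should_attack(step: int, mode: str, period: int = 10) -> bool:
    """Return True if the attack is applied at this simulation step."""
    if mode == "continuous":
        return True  # perturb every timestep
    if mode == "intermittent":
        # Assumption: the sparse schedule fires on steps 0, 10, 20, ...
        return step % period == 0
    raise ValueError(f"unknown mode: {mode}")


def run_episode(frames: List[Frame],
                attack: Callable[[Frame], Frame],
                mode: str) -> List[Frame]:
    """Apply `attack` to the frames selected by the schedule; pass the rest through."""
    return [attack(f) if should_attack(t, mode) else f
            for t, f in enumerate(frames)]


def toy_attack(frame: Frame) -> Frame:
    # Placeholder perturbation for illustration only (NOT Poltergeist, ESIA, or SNAL).
    return [x + 1.0 for x in frame]
```

With 25 frames, the intermittent mode perturbs only steps 0, 10, and 20, whereas the continuous mode perturbs all of them.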

To mitigate these threats, the authors propose AD², a lightweight adversarial attack detector designed for end‑to‑end AD systems. AD² operates externally, requiring only raw multi‑camera images (left, centre, right) from two consecutive timesteps, and does not need access to internal latent representations or model parameters. The architecture consists of a compact ResNet‑18 backbone that extracts a 64‑dimensional feature vector per camera, followed by two transformer modules: a spatial transformer that models consistency among the three overlapping camera views, and a temporal transformer that captures coherence between successive frames. Each transformer uses a single multi‑head self‑attention layer (four heads) with a classification token that aggregates global relationships. The final classification head predicts one of four classes: benign, Poltergeist, SNAL, or ESIA.
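How a classification token aggregates the per-camera features can be sketched in plain Python. This is a deliberately simplified illustration, not the paper's implementation: it uses a single attention head with identity projections instead of four heads with learned projections, and the function name `cls_attention` is hypothetical. Only the CLS token's output is computed, since that is the vector a classification head would consume.

```python
import math
from typing import List, Tuple

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cls_attention(cls_token: Vector,
                  tokens: List[Vector]) -> Tuple[Vector, List[float]]:
    """Scaled dot-product attention with the CLS token as the query.

    Keys and values are the CLS token followed by the input tokens
    (e.g. three 64-dimensional camera features). Assumption: identity
    query/key/value projections, single head.
    """
    seq = [cls_token] + tokens
    d = len(cls_token)
    # Similarity of the CLS query to every key, scaled by sqrt(d).
    scores = [sum(q * k for q, k in zip(cls_token, key)) / math.sqrt(d)
              for key in seq]
    weights = softmax(scores)
    # Weighted sum of the values gives the aggregated CLS representation.
    pooled = [sum(w * v[i] for w, v in zip(weights, seq)) for i in range(d)]
    return pooled, weights
```

In the spatial case the three tokens would be the left, centre, and right camera features from one timestep; in the temporal case they would be representations of the two consecutive timesteps. How the two stages are chained is not specified in this summary, so the sketch leaves that open.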

Extensive experiments demonstrate that AD² achieves an average detection accuracy above 94% and per‑attack F1‑scores ranging from 0.90 to 0.95, while requiring roughly half the FLOPs of comparable CNN‑only detectors. Its real‑time performance (≈30 fps) and non‑intrusive design make it suitable for deployment on existing AD stacks without modifying the driving agents. The paper also discusses limitations: the attacks are simulated rather than physically realized on real hardware, and the detector’s generalization to unseen attack types remains to be validated. As future work, the authors suggest incorporating additional sensor modalities (LiDAR, radar, IMU) into the consistency checks and testing the system on real vehicles.
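For readers unfamiliar with the metric, per-class F1 over the four detector classes can be computed as in the sketch below. The function name `per_class_f1` is hypothetical, and returning 0.0 for a class that never appears in either the labels or the predictions is a convention chosen here, not something stated in the paper.

```python
from typing import Dict, List

# The four classes predicted by the detector, as listed in the summary.
CLASSES = ["benign", "Poltergeist", "SNAL", "ESIA"]


def per_class_f1(y_true: List[str], y_pred: List[str]) -> Dict[str, float]:
    """Compute one-vs-rest F1 for each class: F1 = 2*TP / (2*TP + FP + FN)."""
    scores: Dict[str, float] = {}
    for c in CLASSES:
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == c and p == c)  # correctly flagged as c
        fp = sum(1 for t, p in pairs if t != c and p == c)  # wrongly flagged as c
        fn = sum(1 for t, p in pairs if t == c and p != c)  # missed instances of c
        denom = 2 * tp + fp + fn
        # Assumption: report 0.0 when the class is absent from labels and predictions.
        scores[c] = 2 * tp / denom if denom else 0.0
    return scores
```

Any labels passed to this function in an example are toy inputs; the figures quoted above come from the paper's own evaluation.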

In summary, the study reveals that end‑to‑end autonomous driving agents are highly vulnerable to relatively simple visual perturbations, and it offers a practical, efficient detection framework (AD²) that leverages spatial‑temporal consistency to safeguard against such adversarial threats.
