ConsID-Gen: View-Consistent and Identity-Preserving Image-to-Video Generation

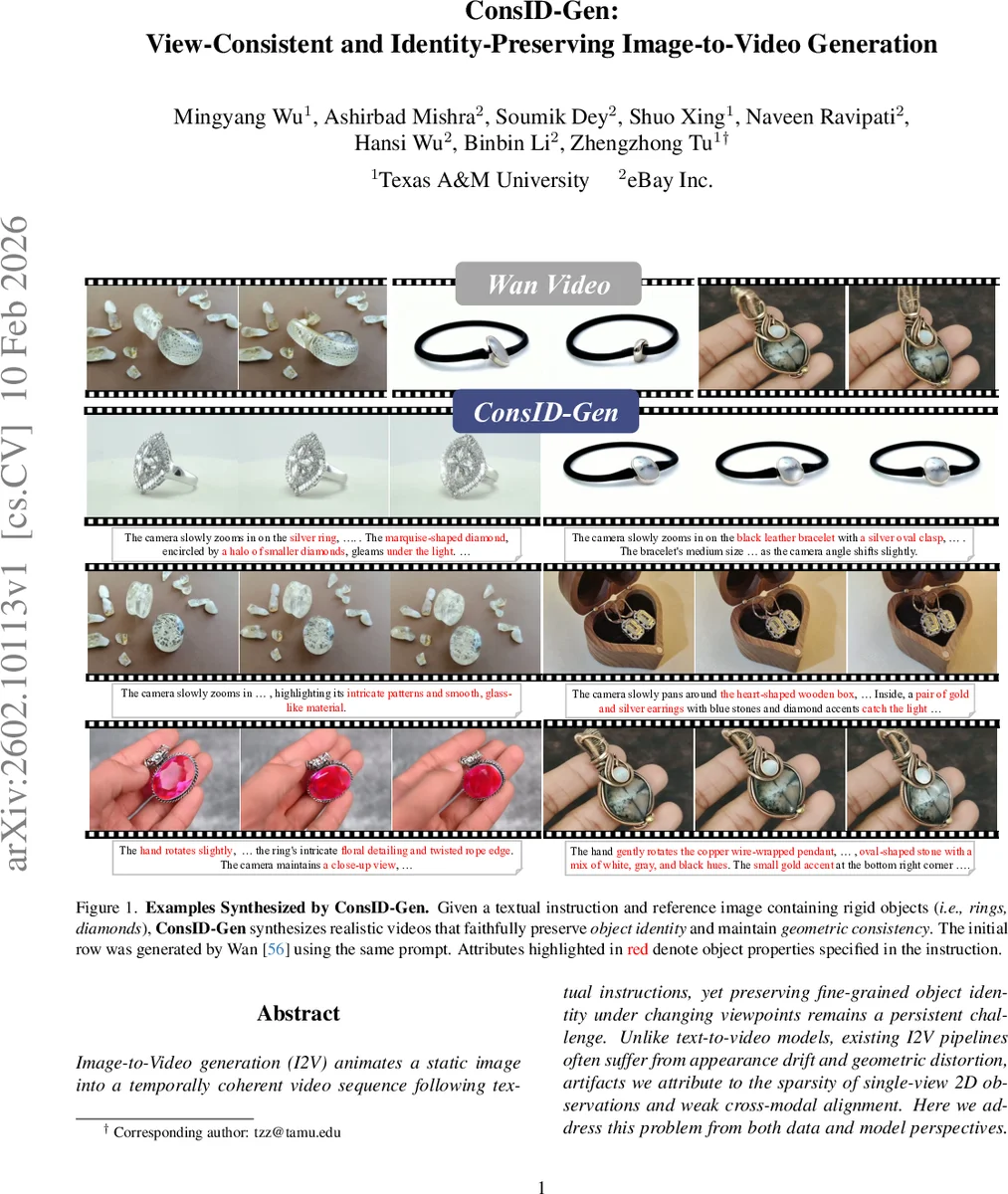

Image-to-Video generation (I2V) animates a static image into a temporally coherent video sequence following textual instructions, yet preserving fine-grained object identity under changing viewpoints remains a persistent challenge. Unlike text-to-video models, existing I2V pipelines often suffer from appearance drift and geometric distortion, artifacts we attribute to the sparsity of single-view 2D observations and weak cross-modal alignment. Here we address this problem from both data and model perspectives. First, we curate ConsIDVid, a large-scale object-centric dataset built with a scalable pipeline for high-quality, temporally aligned videos, and establish ConsIDVid-Bench, where we present a novel benchmarking and evaluation framework for multi-view consistency using metrics sensitive to subtle geometric and appearance deviations. We further propose ConsID-Gen, a view-assisted I2V generation framework that augments the first frame with unposed auxiliary views and fuses semantic and structural cues via a dual-stream visual-geometric encoder as well as a text-visual connector, yielding unified conditioning for a Diffusion Transformer backbone. Experiments across ConsIDVid-Bench demonstrate that ConsID-Gen consistently outperforms in multiple metrics, with the best overall performance surpassing leading video generation models like Wan2.1 and HunyuanVideo, delivering superior identity fidelity and temporal coherence under challenging real-world scenarios. We will release our model and dataset at https://myangwu.github.io/ConsID-Gen.

💡 Research Summary

ConsID‑Gen tackles the long‑standing problem of preserving fine‑grained object identity when animating a single image into a video under changing viewpoints. The authors approach the issue from both data and model perspectives.

Dataset (ConsIDVid) and Benchmark (ConsIDVid‑Bench)

To overcome the scarcity of multi‑view, object‑centric video data, the authors assemble a large‑scale dataset by aggregating existing resources (Co3D, OmniObject3D, Objectron), proprietary e‑commerce clips, and synthetic videos. A multi‑stage curation pipeline filters out low‑quality clips based on duration (≥81 frames), resolution (≥320p), illumination, blur, and aesthetic scores (LAION‑5B predictor). Images are also cleaned of duplicates, OCR‑heavy content, and semantic outliers using CLIP‑based clustering. For each video, a two‑stage hierarchical captioning process with Qwen2.5‑VL generates detailed appearance captions (color, material, shape, etc.) followed by temporally aware captions describing camera motion and object dynamics. This yields high‑quality video‑text pairs suitable for training.

ConsIDVid‑Bench redefines I2V evaluation as a multi‑view consistency problem. Instead of relying solely on distributional metrics like FVD, the benchmark introduces geometry‑aware metrics: Chamfer Distance for shape fidelity, Multi‑Modal MMD for appearance similarity, and MET3R (a composite metric sensitive to subtle geometric and texture deviations). Human studies are also incorporated to assess perceived identity preservation.

Model Architecture (ConsID‑Gen)

Traditional I2V pipelines encode the reference image and text separately and fuse them only late in the diffusion network, which limits cross‑modal interaction and hampers identity consistency. ConsID‑Gen introduces a “view‑assisted” paradigm: the first frame is supplemented with two unposed auxiliary views of the same object. These three images pass through a dual‑stream encoder.

- Visual stream: a CLIP‑style visual encoder extracts semantic appearance features (color, texture, fine details).

- Geometry stream: a dedicated Geometry Encoder (VGGT) processes each view to produce pose and depth tokens, capturing multi‑view geometric relationships.

A multimodal Text‑Visual connector aligns the textual instruction (encoded by a T5 model) with the combined visual‑geometric tokens via fine‑grained cross‑attention, producing unified conditioning tokens. These tokens are fed into a Diffusion Transformer backbone (MMDiT), which performs the denoising diffusion process to generate the video. By integrating geometry early and aligning modalities before diffusion, the model maintains a stable representation of object identity throughout temporal evolution.

Experiments and Findings

ConsID‑Gen is trained on ConsIDVid and evaluated on ConsIDVid‑Bench. Compared to state‑of‑the‑art video generators such as Wan2.1 and HunyuanVideo, ConsID‑Gen achieves:

- MET3R improvement of +30.2% on the proprietary test set.

- Chamfer Distance reduction of +7.26% on the public test set.

- Higher human preference scores for identity preservation (≈92.5%).

Ablation studies show that (1) adding auxiliary views significantly reduces appearance drift, (2) the geometry encoder contributes most to shape consistency, and (3) early multimodal alignment outperforms late fusion.

Contributions

- A large, high‑quality, object‑centric video dataset with multi‑view coverage and a benchmark that measures identity preservation at the object level.

- A novel view‑assisted I2V architecture that fuses semantic and geometric cues before diffusion, improving cross‑modal alignment.

- Empirical evidence that the proposed system surpasses existing open‑source models in both quantitative metrics and human evaluation.

The authors will release the ConsID‑Gen model, code, and ConsIDVid dataset, aiming to accelerate research and practical deployment of identity‑preserving image‑to‑video generation in e‑commerce, advertising, and educational content creation.

Comments & Academic Discussion

Loading comments...

Leave a Comment