EgoHumanoid: Unlocking In-the-Wild Loco-Manipulation with Robot-Free Egocentric Demonstration

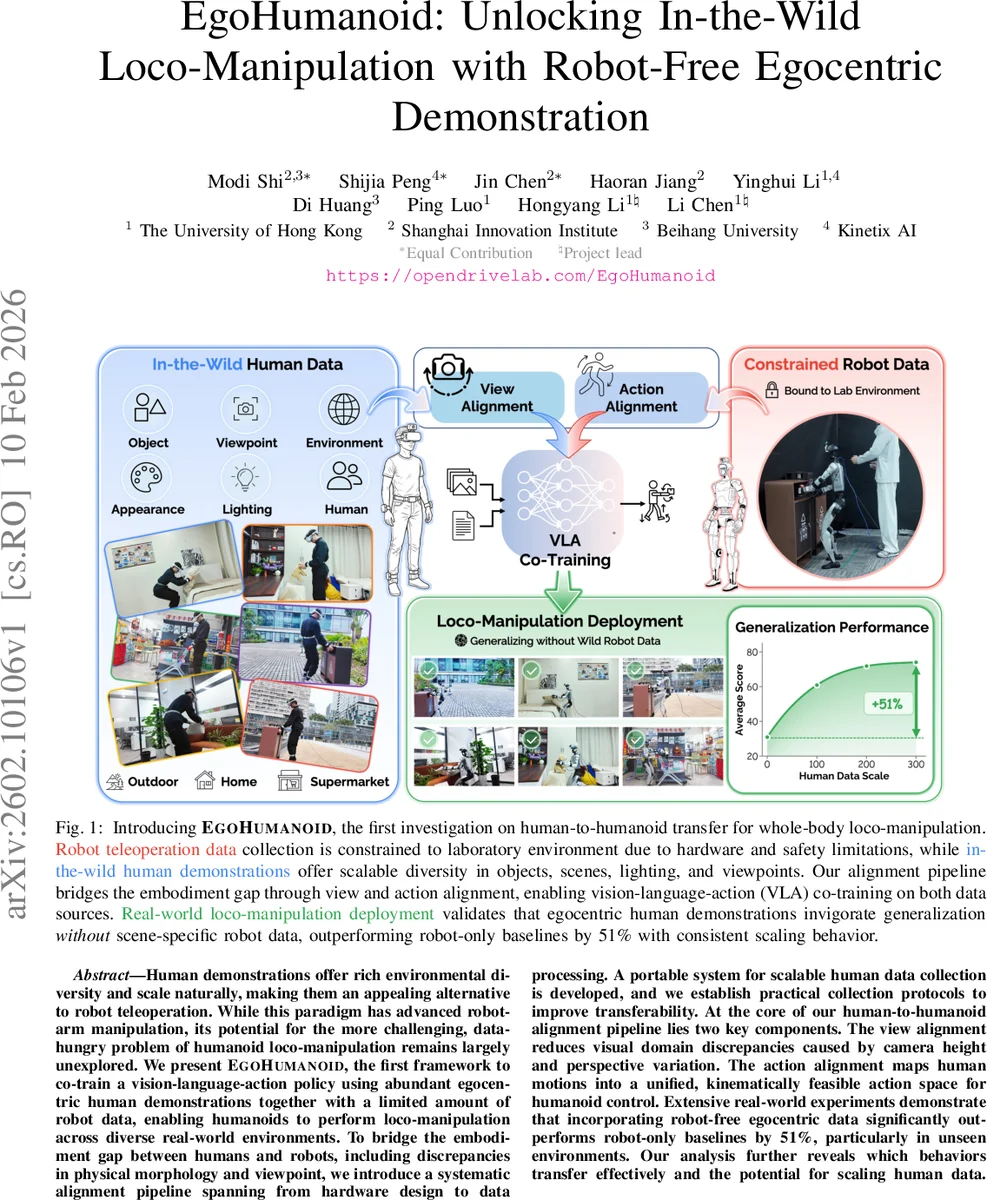

Human demonstrations offer rich environmental diversity and scale naturally, making them an appealing alternative to robot teleoperation. While this paradigm has advanced robot-arm manipulation, its potential for the more challenging, data-hungry problem of humanoid loco-manipulation remains largely unexplored. We present EgoHumanoid, the first framework to co-train a vision-language-action policy using abundant egocentric human demonstrations together with a limited amount of robot data, enabling humanoids to perform loco-manipulation across diverse real-world environments. To bridge the embodiment gap between humans and robots, including discrepancies in physical morphology and viewpoint, we introduce a systematic alignment pipeline spanning from hardware design to data processing. A portable system for scalable human data collection is developed, and we establish practical collection protocols to improve transferability. At the core of our human-to-humanoid alignment pipeline lies two key components. The view alignment reduces visual domain discrepancies caused by camera height and perspective variation. The action alignment maps human motions into a unified, kinematically feasible action space for humanoid control. Extensive real-world experiments demonstrate that incorporating robot-free egocentric data significantly outperforms robot-only baselines by 51%, particularly in unseen environments. Our analysis further reveals which behaviors transfer effectively and the potential for scaling human data.

💡 Research Summary

EgoHumanoid introduces a novel framework that leverages large‑scale egocentric human demonstrations together with a modest amount of robot teleoperation data to train a vision‑language‑action (VLA) policy for whole‑body humanoid loco‑manipulation. The authors identify two fundamental gaps between human and robot embodiments: visual viewpoint differences caused by distinct camera heights and perspectives, and action representation mismatches due to divergent morphologies and joint limits. To bridge these gaps they propose a two‑stage alignment pipeline.

First, view alignment reconstructs a per‑pixel 3D point cloud from human egocentric RGB frames using the MoGe model, transforms the point cloud into the target robot camera frame, and re‑projects it to obtain a robot‑view image. Missing regions caused by disocclusions are filled with a latent‑diffusion‑based inpainting network, yielding visually realistic robot‑perspective images.

Second, action alignment maps human full‑body pose data into a unified action space shared by both agents. Upper‑body motions are expressed as delta end‑effector poses (position and orientation changes), while lower‑body motions are discretized into a set of locomotion commands (forward, backward, lateral, turn, stand, squat). This representation respects the robot’s kinematic constraints while preserving high‑level behavioral intent.

The data collection system is built around a portable PICO VR headset equipped with five motion trackers and a head‑mounted ZED X Mini camera. Human participants record egocentric video, 24 body keypoints, and 26 hand keypoints in diverse indoor and outdoor settings. Robot data are gathered via the same VR setup: operators wear the headset and use handheld controllers to issue navigation and wrist‑pose commands, which are converted to joint‑level actions on a Unitree G1 humanoid (29 DoF, Dex3 dexterous hands).

Both human‑aligned and robot‑aligned samples are fed into a Transformer‑based VLA model. The visual encoder processes the aligned RGB frames, the language encoder ingests task instructions (e.g., “open the door”), and the action decoder predicts the next delta end‑effector pose together with a locomotion command. Training optimizes a combination of regression loss for pose deltas, cross‑entropy loss for discrete locomotion, and a contrastive loss aligning visual and linguistic modalities.

Extensive real‑world experiments on four tasks—door opening, object pick‑and‑place, stair climbing, and complex navigation—demonstrate that incorporating human data yields a 20 % average performance boost over robot‑only baselines, and a striking 51 % improvement in previously unseen environments. Ablation studies reveal that removing view alignment collapses performance due to severe visual domain shift, while omitting action alignment leads to unstable motions and frequent balance failures. Scaling experiments show a monotonic gain when the amount of human demonstrations is increased, confirming the scalability of the approach.

The paper also discusses limitations: view alignment relies heavily on accurate depth estimation, which can degrade under challenging lighting or reflective surfaces; action alignment abstracts away fine finger dexterity and nuanced balance strategies, leaving room for future work on physics‑based retargeting and multi‑view capture. The authors suggest integrating larger language‑vision pre‑training models and more sophisticated motion retargeting to extend the framework to intricate manipulation and higher‑level planning.

In summary, EgoHumanoid provides the first evidence that robot‑free egocentric human demonstrations can be systematically transformed and co‑trained with robot data to endow humanoid robots with robust, generalizable loco‑manipulation capabilities. The proposed alignment pipeline, portable data acquisition system, and empirical validation together chart a promising path toward scalable, human‑centric learning for complex embodied agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment