CAPID: Context-Aware PII Detection for Question-Answering Systems

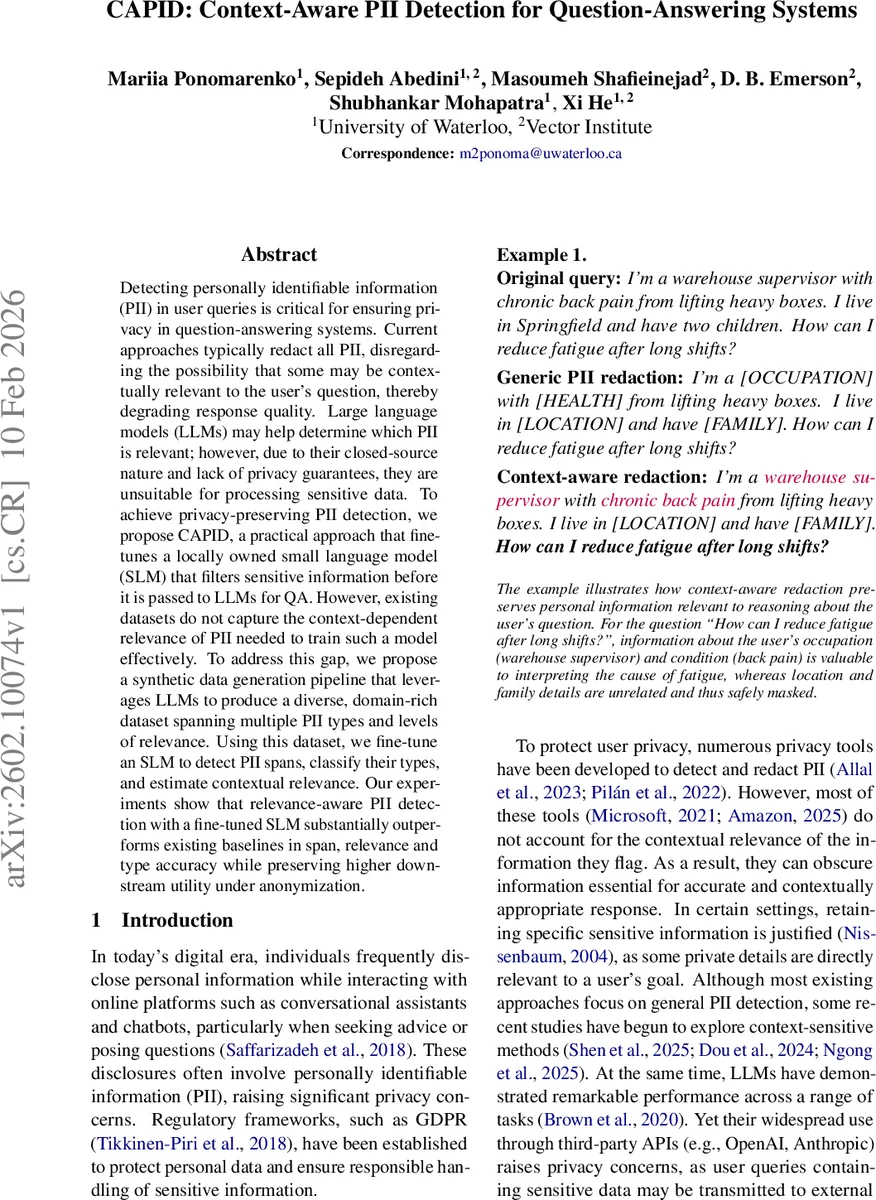

Detecting personally identifiable information (PII) in user queries is critical for ensuring privacy in question-answering systems. Current approaches mainly redact all PII, disregarding the fact that some of them may be contextually relevant to the user’s question, resulting in a degradation of response quality. Large language models (LLMs) might be able to help determine which PII are relevant, but due to their closed source nature and lack of privacy guarantees, they are unsuitable for sensitive data processing. To achieve privacy-preserving PII detection, we propose CAPID, a practical approach that fine-tunes a locally owned small language model (SLM) that filters sensitive information before it is passed to LLMs for QA. However, existing datasets do not capture the context-dependent relevance of PII needed to train such a model effectively. To fill this gap, we propose a synthetic data generation pipeline that leverages LLMs to produce a diverse, domain-rich dataset spanning multiple PII types and relevance levels. Using this dataset, we fine-tune an SLM to detect PII spans, classify their types, and estimate contextual relevance. Our experiments show that relevance-aware PII detection with a fine-tuned SLM substantially outperforms existing baselines in span, relevance and type accuracy while preserving significantly higher downstream utility under anonymization.

💡 Research Summary

The paper addresses a critical privacy‑utility trade‑off in question‑answering (QA) systems that handle user‑generated queries containing personally identifiable information (PII). Existing privacy tools typically redact all PII indiscriminately, which often removes information that is essential for answering the user’s question (e.g., occupation or medical condition). The authors propose CAPID (Context‑Aware PII Detection), a framework that fine‑tunes a locally hosted small language model (SLM) to (i) locate PII spans, (ii) classify their type, and (iii) predict a binary relevance label indicating whether each span should be retained or masked given the specific question.

A major obstacle is the lack of datasets that capture the contextual relevance of PII. To fill this gap, the authors design a three‑stage synthetic data generation pipeline powered by large language models (GPT‑4.1‑mini and GPT‑5).

-

Topic Generation – Starting from a taxonomy of 14 fine‑grained PII categories (occupation, health, demographic, finance, etc.), they enumerate all unordered pairs (except name‑code) yielding 78 type combinations. For each pair, 10 high‑level topics and 20 sub‑topics are generated, resulting in 15,600 topic‑subtopic pairs. After deduplication, 11,663 unique (type1, type2, sub‑topic) triples remain.

-

PII, Context, and Question Generation – Each triple is used to construct a “situation” paragraph containing both high‑relevance PII (directly needed to answer the question) and a “peripheral” paragraph containing low‑relevance PII (irrelevant details). The generation proceeds in a step‑wise fashion: first, the model creates concrete PII values conditioned on the topic; second, it writes a question that implicitly relies on the high‑relevance PII; third, a refinement prompt abstracts the question to a neutral form while preserving intent. This design ensures that the relevance of each PII span is implicit, mimicking real‑world conversational behavior where users often ask abstract questions but embed crucial personal details in the context.

-

Context Enhancement – The raw context is paraphrased by an LLM to improve fluency and diversity, while a consistency check guarantees that the original PII strings remain unchanged. If a span is altered, the system automatically updates the annotation to preserve alignment.

Human validation follows: five annotators, equipped with detailed guidelines and a custom Streamlit editing tool, manually verify and correct the automatically generated annotations. They re‑classify any PII whose relevance was mis‑assigned and ensure that questions do not leak abstracted PII. The final curated dataset comprises 2,307 examples (2,107 training, 200 test). Table 1 in the paper shows the distribution of high vs. low relevance across PII types; categories such as occupation, health, demographic, and location are roughly balanced, whereas name and code are almost always low relevance, reflecting real privacy concerns.

For model training, the authors fine‑tune Llama‑3.2‑3B and Llama‑3.1‑8B using the Unsloth framework with 4‑bit quantization and LoRA adapters. The training objective is causal language modeling where only the JSON‑formatted label segment contributes to loss, enabling simultaneous learning of span extraction, type classification, and relevance estimation.

Evaluation compares CAPID against three baselines: (a) GPT‑4.1‑mini (zero‑shot) for relevance prediction, (b) the method of Ngong et al. (2025) that detects “contextually unnecessary” details and reformulates prompts, and (c) Microsoft Presidio, a rule‑based NER system. Metrics include span‑level F1, type accuracy, and relevance accuracy. CAPID achieves a relevance accuracy jump from 0.68 (GPT‑4.1‑mini) to 0.79, and type accuracy improvements of ~7 %.

To assess downstream utility, the authors adopt an “LLM‑as‑judge” protocol: the same downstream LLM (e.g., GPT‑4) generates answers to the original queries after applying different anonymization strategies (full redaction, CAPID‑based selective masking, Ngong’s approach). Human‑aligned judges rate answer usefulness. CAPID‑masked queries retain significantly higher utility—approximately 12 % higher average usefulness scores than full redaction, and outperform Ngong’s method by ~5 %.

Key contributions:

- Introduction of CAPID, a synthetic dataset explicitly designed for context‑aware PII detection, covering diverse domains and relevance levels.

- Demonstration that fine‑tuned SLMs can reliably predict PII span, type, and relevance, surpassing large closed‑source models while keeping data on‑premise.

- Empirical evidence that relevance‑aware anonymization preserves downstream QA performance far better than blanket redaction.

The paper concludes by highlighting future directions: scaling the dataset with real user logs, extending to multilingual settings, and improving automatic relevance annotation to reduce human effort. Overall, CAPID offers a practical, privacy‑preserving solution that bridges the gap between data protection and answer quality in modern QA systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment