ATTNPO: Attention-Guided Process Supervision for Efficient Reasoning

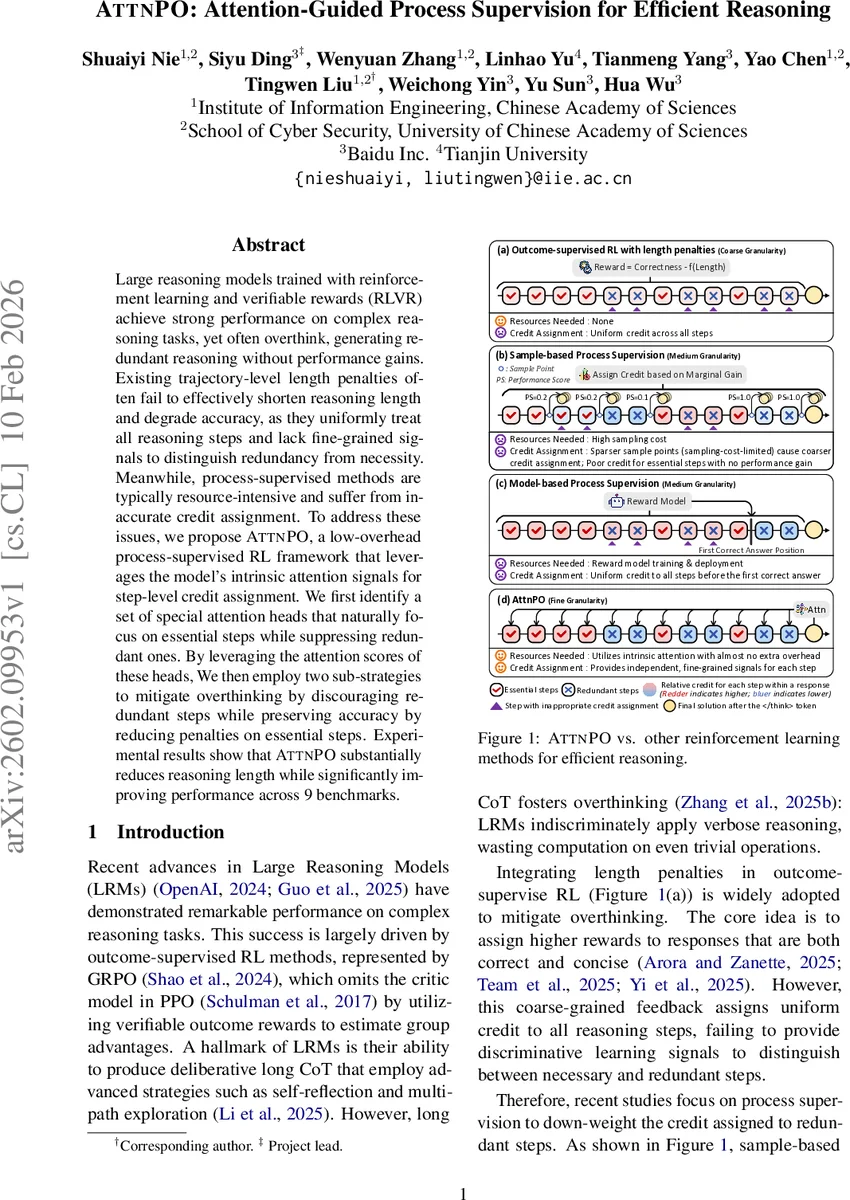

Large reasoning models trained with reinforcement learning and verifiable rewards (RLVR) achieve strong performance on complex reasoning tasks, yet often overthink, generating redundant reasoning without performance gains. Existing trajectory-level length penalties often fail to effectively shorten reasoning length and degrade accuracy, as they uniformly treat all reasoning steps and lack fine-grained signals to distinguish redundancy from necessity. Meanwhile, process-supervised methods are typically resource-intensive and suffer from inaccurate credit assignment. To address these issues, we propose ATTNPO, a low-overhead process-supervised RL framework that leverages the model’s intrinsic attention signals for step-level credit assignment. We first identify a set of special attention heads that naturally focus on essential steps while suppressing redundant ones. By leveraging the attention scores of these heads, We then employ two sub-strategies to mitigate overthinking by discouraging redundant steps while preserving accuracy by reducing penalties on essential steps. Experimental results show that ATTNPO substantially reduces reasoning length while significantly improving performance across 9 benchmarks.

💡 Research Summary

The paper tackles the “overthinking” problem of large reasoning models (LRMs), which often generate excessively long chain‑of‑thought (CoT) sequences that do not improve performance. Existing length‑penalty methods apply a uniform penalty to all steps, failing to distinguish essential reasoning from redundant steps, while process‑supervised approaches either require costly sampling or an auxiliary reward model and suffer from inaccurate credit assignment.

The authors discover that, during the final answer generation phase (after the special token), a small subset of attention heads in the transformer naturally assign higher attention weights to essential reasoning steps and suppress redundant ones. They call these heads “Key‑Focus Heads” (KFH). Through a probing study on a carefully annotated dataset (300 questions with step‑level essential/redundant labels), they compute a Step Ranking Accuracy (SRA) for each head. A few heads in the middle‑to‑late layers achieve SRA > 0.9, while most heads perform at chance. The behavior of KFHs remains stable under RL training with length penalties, and their signals generalize to harder problems (e.g., AIME24).

Building on this insight, the paper proposes ATTNPO (Attention‑guided Policy Optimization), a low‑overhead process‑supervised reinforcement learning framework. The core idea is Stepwise Advantage Rescaling: the outcome‑level advantage A (derived from a correctness‑plus‑length reward) is multiplied by a non‑negative scaling factor γₛₖ for each step sₖ, where γₛₖ is computed from the attention scores of the selected KFHs. This yields a step‑wise advantage ˆAₛₖ = γₛₖ·A, enabling fine‑grained credit assignment without altering the sign of the original advantage.

Two complementary attenuation strategies are applied only to correct responses (to avoid noisy credit on incorrect ones).

- Positive‑Adv Attenuation: When A > 0, γₛₖ is reduced for steps identified as redundant, decreasing their positive reinforcement and thus discouraging overthinking.

- Negative‑Adv Attenuation: When A < 0, γₛₖ is increased for essential steps, softening the penalty on valid reasoning and preventing performance loss.

Because the method relies solely on the model’s intrinsic attention, it incurs negligible additional computation compared with baseline outcome‑supervised RL methods such as GRPO or RLOO.

Empirical evaluation on nine benchmarks (including six math suites, logical reasoning, and code generation) using DeepSeek‑R1‑Distill‑Qwen‑1.5B and a 7B variant shows that ATTNPO reduces reasoning length by about 60 % while improving accuracy by an average of 7.3 percentage points. Ablation studies confirm that only a few top‑ranked KFHs are needed; random heads provide no benefit, and the approach outperforms existing sample‑based and model‑based process supervision in both effectiveness and computational cost.

In summary, ATTNPO demonstrates that internal attention signals can serve as reliable, fine‑grained supervision cues for distinguishing essential from redundant reasoning steps. By rescaling advantages at the step level, it achieves simultaneous gains in efficiency and performance with virtually no extra training overhead. The work opens avenues for further research on automatic KFH discovery across architectures, dynamic head selection, and application to multimodal or more complex reasoning tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment