Environment-in-the-Loop: Rethinking Code Migration with LLM-based Agents

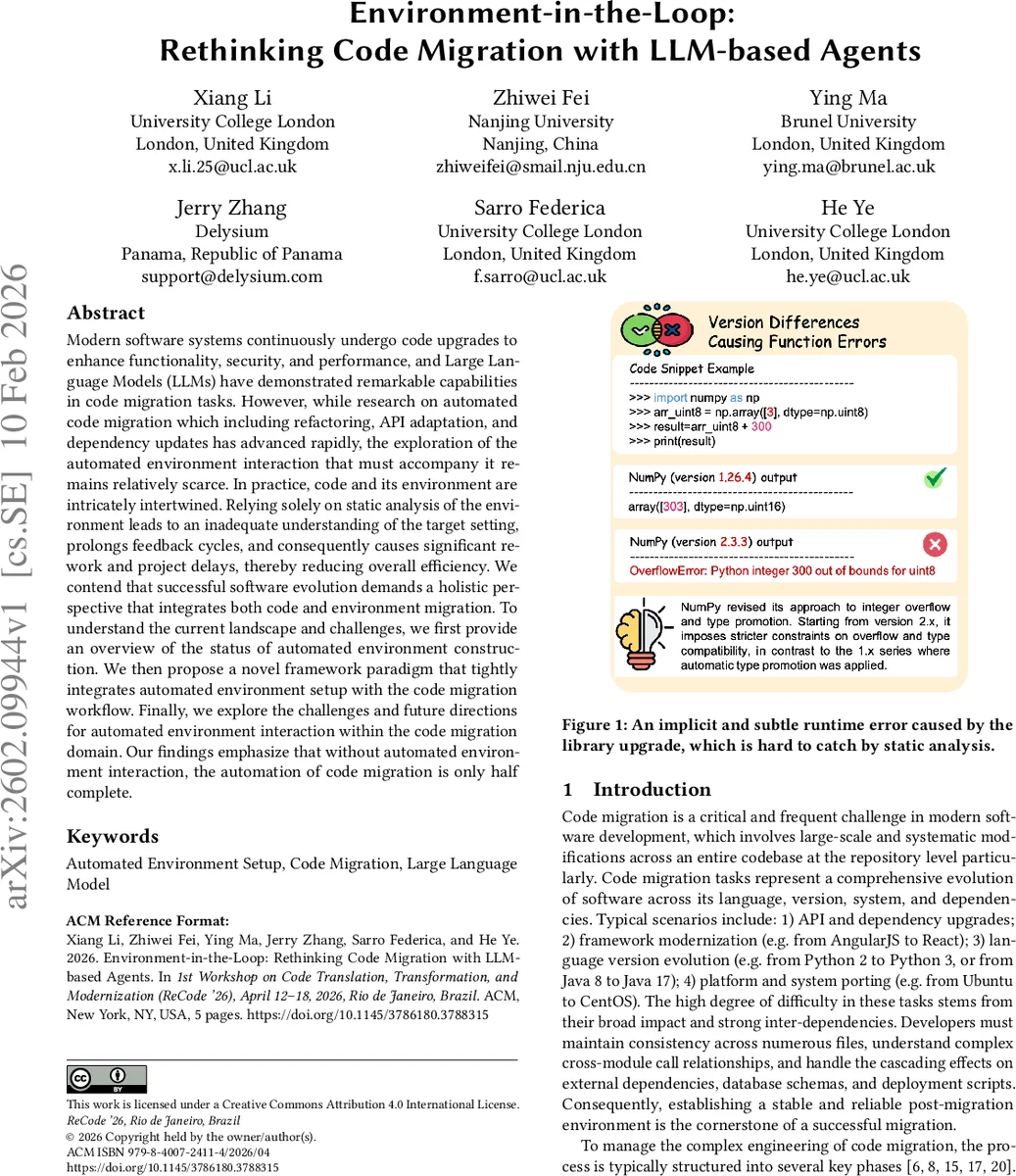

Modern software systems continuously undergo code upgrades to enhance functionality, security, and performance, and Large Language Models (LLMs) have demonstrated remarkable capabilities in code migration tasks. However, while research on automated code migration, which includes refactoring, API adaptation, and dependency updates, has advanced rapidly, the automated environment interaction that must accompany it remains relatively unexplored. In practice, code and its environment are intricately intertwined. Relying solely on static analysis of the environment leads to an inadequate understanding of the target setting and prolongs feedback cycles, which in turn causes significant rework and project delays. We contend that successful software evolution demands a holistic perspective that integrates both code and environment migration. To understand the current landscape and challenges, we first provide an overview of the status of automated environment construction. We then propose a novel framework paradigm that tightly integrates automated environment setup with the code migration workflow. Finally, we explore the challenges and future directions for automated environment interaction within the code migration domain. Our findings emphasize that without automated environment interaction, the automation of code migration is only half complete.

💡 Research Summary

The paper addresses a critical gap in automated code migration: the lack of systematic interaction with the execution environment. While large language models (LLMs) have shown impressive abilities in generating migration patches, current workflows rely heavily on static analysis of the original environment, which cannot capture subtle runtime incompatibilities such as version‑specific internal constraints (e.g., NumPy 1.x vs. 2.x). The authors argue that successful software evolution requires a holistic approach that treats code and environment as co‑evolving entities.
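To make the static-versus-runtime gap concrete, here is a minimal sketch of a probe that asks the live environment which version of a package is actually installed and compares it with the version the code was written against. The helper names (`major_version`, `crosses_major_boundary`, `probe`) are hypothetical and do not come from the paper, and the parsing assumes plain dotted version strings.

```python
from importlib import metadata


def major_version(version: str) -> int:
    """Return the leading integer of a dotted version string such as '2.1.0'."""
    return int(version.split(".")[0])


def crosses_major_boundary(assumed: str, installed: str) -> bool:
    """True when the installed major version differs from the assumed one."""
    return major_version(assumed) != major_version(installed)


def probe(package: str, assumed: str) -> str:
    """Compare the version the code assumes with what the environment provides."""
    try:
        # Queries the running interpreter's installed distributions.
        installed = metadata.version(package)
    except metadata.PackageNotFoundError:
        return f"{package}: not installed in this environment"
    if crosses_major_boundary(assumed, installed):
        return f"{package}: assumed {assumed}, found {installed} (major version changed)"
    return f"{package}: assumed {assumed}, found {installed} (same major version)"


if __name__ == "__main__":
    # Example: code written against NumPy 1.x, run wherever this script executes.
    print(probe("numpy", "1.26.4"))
```

A check like this only flags that a major-version boundary was crossed. Finding out which calls actually break still requires building and running the code in the target environment, which is the point the paper makes.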

To map the current landscape, the authors review existing automated environment construction techniques, noting that rule‑based or template‑based methods still demand substantial manual input and are often limited to particular languages or platforms. They also survey recent advances in LLM‑based agents, which typically consist of planning, memory, perception, and action modules, and highlight successful prototypes such as ExecutionAgent and the EnvBench benchmark that evaluate environment‑setup success across multiple repositories.

The core contribution is a novel “environment‑in‑the‑loop” framework built around three specialized LLM agents:

- Migration Agent (M‑Agent) – parses the source repository and migration specifications, produces an initial migrated codebase and dependency upgrade plans, and identifies risk points.

- Environment Agent (E‑Agent) – receives the migrated code and manifests, automatically provisions a reproducible build and runtime environment (selecting base images, installing packages, configuring build tools, and launching an isolated sandbox). It captures build and runtime logs, diagnoses failures, and generates structured diagnostic reports.

- Testsuite Agent (T‑Agent) – automatically creates, repairs, or extends unit, integration, and regression tests based on legacy tests and behavioral specifications, then executes them inside the environment supplied by the E‑Agent.
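The summary says the E‑Agent turns build and runtime logs into structured diagnostic reports. A minimal sketch of what such a report could look like follows, assuming a simple keyword heuristic in place of the LLM-driven diagnosis. The `DiagnosticReport` fields, the pattern lists, and the `diagnose` function are illustrative assumptions and not the paper's design.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns; a real E-Agent would use an LLM or richer heuristics.
CONFIG_PATTERNS = [
    r"No matching distribution found",
    r"ModuleNotFoundError",
    r"command not found",
]
SEMANTIC_PATTERNS = [
    r"AttributeError",
    r"TypeError",
]


@dataclass
class DiagnosticReport:
    stage: str      # "build" or "runtime"
    category: str   # "configuration", "semantic", or "unknown"
    evidence: str   # first log line that matched, or "" if none did


def diagnose(stage: str, log: str) -> DiagnosticReport:
    """Classify a log by the first line that matches a known pattern."""
    for line in log.splitlines():
        if any(re.search(p, line) for p in CONFIG_PATTERNS):
            return DiagnosticReport(stage, "configuration", line.strip())
        if any(re.search(p, line) for p in SEMANTIC_PATTERNS):
            return DiagnosticReport(stage, "semantic", line.strip())
    return DiagnosticReport(stage, "unknown", "")


if __name__ == "__main__":
    # Illustrative log text, not output captured from a real build.
    log = "Collecting foo\nERROR: No matching distribution found for foo==9.9\n"
    print(diagnose("build", log))
```

Keeping the matched log line as `evidence` gives the receiving agent something concrete to act on, rather than a bare pass or fail signal.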

The workflow is triggered automatically by CI/CD pipelines when version upgrades, framework migrations, or dependency changes are detected. The M‑Agent first drafts a migration plan; the E‑Agent then builds the environment and runs the code. If the build fails because of a configuration issue, the E‑Agent attempts self‑repair; failures that stem from semantic errors in the code are forwarded to the M‑Agent. Test failures are routed to the T‑Agent for test generation and refinement. All feedback loops are anchored on the E‑Agent, turning the migration process into a closed‑loop system where code changes, environment adjustments, and test validations continuously inform each other. Successful outcomes (migrated code, environment images, test suites, coverage reports, and execution logs) are archived as CI/CD artifacts, enabling automatic re‑validation whenever drift is detected.
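The routing described above can be sketched as a small control loop. This is an illustration under stated assumptions, not the authors' implementation: the agents are stubbed as plain callables, the failure categories are simplified to three strings, and the round budget and the escalation to a human for unknown failures are additions made for the example.

```python
from typing import Callable, Optional

# Each callable stands in for an LLM agent; names and signatures are hypothetical.
Check = Callable[[], Optional[str]]  # returns a failure category, or None on success
Fix = Callable[[], None]


def route(category: str) -> str:
    """Map a failure category to the agent that handles it, per the summary."""
    routes = {
        "configuration": "E-Agent",  # environment self-repair
        "semantic": "M-Agent",       # code-level fix
        "test": "T-Agent",           # test generation and refinement
    }
    return routes.get(category, "human")  # assumption: escalate unknown failures


def migrate(check: Check, fixers: dict[str, Fix], max_rounds: int = 5) -> list[str]:
    """Re-run the build/test check until it passes or the round budget is spent."""
    history: list[str] = []
    for _ in range(max_rounds):
        failure = check()
        if failure is None:
            history.append("success")
            return history
        agent = route(failure)
        history.append(agent)
        if agent not in fixers:
            return history  # no automated handler for this failure
        fixers[agent]()
    history.append("budget exhausted")
    return history


if __name__ == "__main__":
    # Simulated run: one failure of each kind, then a clean pass.
    failures = iter(["configuration", "semantic", "test", None])
    noop = lambda: None
    print(migrate(lambda: next(failures),
                  {"E-Agent": noop, "M-Agent": noop, "T-Agent": noop}))
```

The explicit round budget reflects the concern raised in the paper's challenges that feedback should lead to meaningful fixes rather than blind retries; a fuller version would also check that each round changes the failure before retrying.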

The authors discuss several open challenges: (a) achieving reliable environment reproducibility across diverse platforms, possibly by learning environment semantics from build scripts, CI logs, or runtime traces; (b) designing robust multi‑agent coordination mechanisms, perhaps using reinforcement learning or cooperative multi‑agent strategies, to ensure that feedback leads to meaningful code or configuration fixes rather than blind retries; and (c) addressing industrial concerns such as security, resource isolation, and scalability of sandboxed environments.

In conclusion, the paper posits that code migration automation is only “half complete” without automated environment interaction. By integrating LLM‑driven agents for migration planning, environment provisioning, and test generation into a unified feedback loop, the proposed paradigm promises substantial reductions in rework, higher migration success rates, and greater resilience against dependency and configuration drift in modern software development pipelines.
