TaCo: A Benchmark for Lossless and Lossy Codecs of Heterogeneous Tactile Data

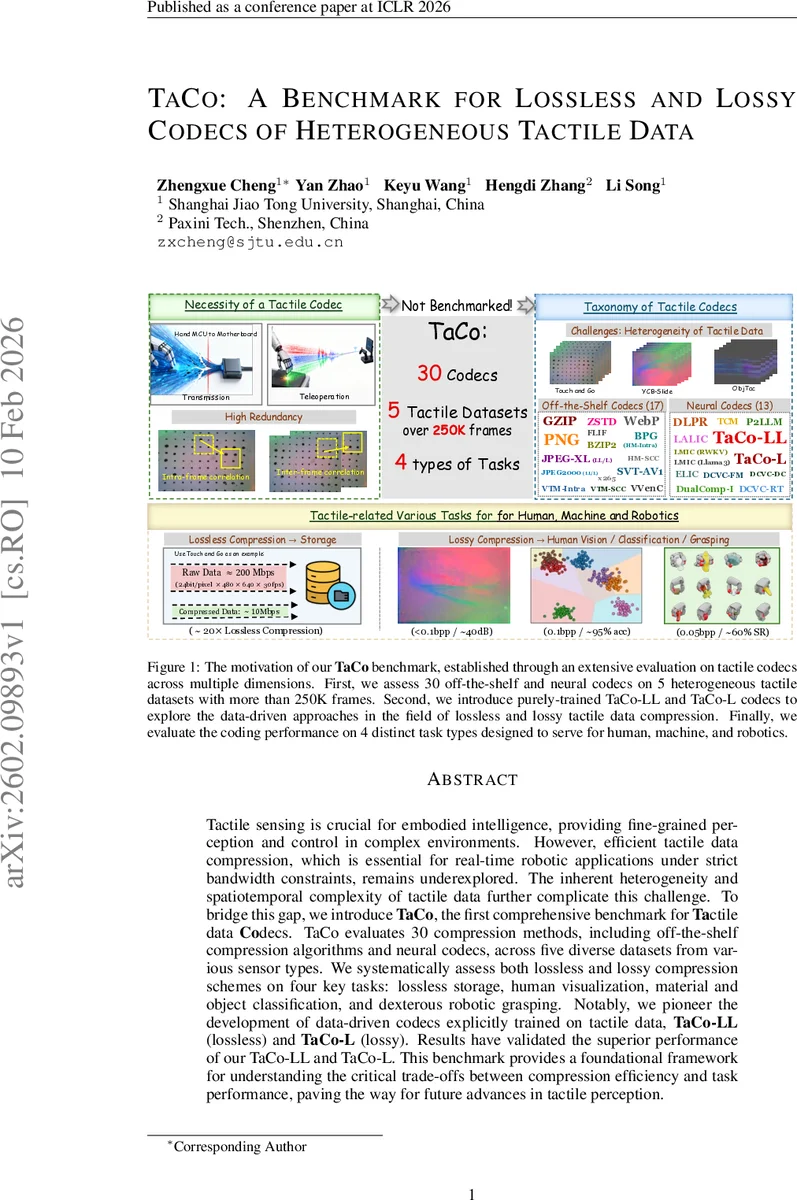

Tactile sensing is crucial for embodied intelligence, providing fine-grained perception and control in complex environments. However, efficient tactile data compression, which is essential for real-time robotic applications under strict bandwidth constraints, remains underexplored. The inherent heterogeneity and spatiotemporal complexity of tactile data further complicate this challenge. To bridge this gap, we introduce TaCo, the first comprehensive benchmark for Tactile data Codecs. TaCo evaluates 30 compression methods, including off-the-shelf compression algorithms and neural codecs, across five diverse datasets from various sensor types. We systematically assess both lossless and lossy compression schemes on four key tasks: lossless storage, human visualization, material and object classification, and dexterous robotic grasping. Notably, we pioneer the development of data-driven codecs explicitly trained on tactile data, TaCo-LL (lossless) and TaCo-L (lossy). Results have validated the superior performance of our TaCo-LL and TaCo-L. This benchmark provides a foundational framework for understanding the critical trade-offs between compression efficiency and task performance, paving the way for future advances in tactile perception.

💡 Research Summary

The paper introduces TaCo, the first comprehensive benchmark dedicated to evaluating compression codecs for tactile data, a domain that has received little systematic attention despite its importance for embodied AI, teleoperation, and dexterous manipulation. The authors collect five publicly available tactile datasets that span a wide range of sensor modalities: two GelSight‑based vision tactile datasets (Touch and Go, ObjectFolder 1.0), two DIGIT‑based video tactile datasets (SSVTP, YCB‑Slide), and one force‑sensor dataset (ObjTac). These datasets differ in resolution (120 × 160 to 640 × 480), frame rate (30 Hz to 200 Hz), and data format (image sequences, video streams, and stacked force vectors), thereby representing the heterogeneity that characterizes real‑world tactile sensing.

TaCo evaluates 30 compression methods, divided into two major families. The first family consists of off‑the‑shelf, signal‑processing based codecs originally designed for text, images, or video: general‑purpose lossless compressors (gzip, zstd, bzip2), image lossless codecs (PNG, FLIF, WebP, JPEG‑XL, JPEG2000, BPG), image lossy codecs (JPEG‑XL, JPEG2000, HM‑Intra, HM‑SCC, VTM‑Intra, VTM‑SCC), and video lossy codecs (VVenC, x265, SVT‑AV1). The second family comprises neural codecs that learn data‑driven representations: pretrained image compressors (ELIC, TCM, LALIC), pretrained video compressors (DCVC‑DC, DCVC‑FM), and large‑model based compressors (LMIC built on RWKV‑7B and Llama‑3). Importantly, the neural codecs are applied to tactile data without any domain‑specific fine‑tuning, allowing the authors to assess cross‑modal generalization.
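As a rough illustration of how the general-purpose lossless baselines can be measured, the sketch below compresses one synthetic frame with Python's standard-library `gzip` and `bz2` modules and reports the compression ratio. The frame generator, its 160 × 120 size, and the helper names are assumptions made for this example; zstd is omitted because it needs a third-party package. This is not the benchmark's actual pipeline.

```python
import bz2
import gzip
import math


def synthetic_frame(width=160, height=120):
    """Build a smooth 8-bit grayscale frame (hypothetical stand-in for tactile data)."""
    pixels = bytearray()
    for y in range(height):
        for x in range(width):
            # Smooth bump centered in the frame, loosely imitating a contact patch.
            d2 = (x - width / 2) ** 2 + (y - height / 2) ** 2
            pixels.append(int(255 * math.exp(-d2 / 2000.0)))
    return bytes(pixels)


def compression_ratio(raw, compressed):
    """Raw size divided by compressed size; larger means better compression."""
    return len(raw) / len(compressed)


raw = synthetic_frame()
results = {
    "gzip": gzip.compress(raw),
    "bzip2": bz2.compress(raw),
}

for name, blob in results.items():
    print(f"{name}: {compression_ratio(raw, blob):.2f}x")

# Lossless codecs must reproduce the input exactly.
assert gzip.decompress(results["gzip"]) == raw
assert bz2.decompress(results["bzip2"]) == raw
```

The ratios printed for a synthetic frame say nothing about real sensor data; the point is only the measurement procedure: compress, verify the round trip, and divide sizes.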

In addition to the off‑the‑shelf and pretrained neural codecs, the authors propose two novel data‑driven tactile codecs trained end‑to‑end on the collected datasets. TaCo‑LL (lossless) follows an autoregressive architecture that predicts the probability distribution of each symbol conditioned on previous symbols and then uses arithmetic coding to achieve near‑entropy compression. TaCo‑L (lossy) employs an analysis‑synthesis transform pair, quantization, and a hyper‑autoencoder to model the latent distribution, optimizing a rate‑distortion loss L = λ·D + R. Both models are trained on a 70 %/30 % train‑test split across all datasets.
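The link between autoregressive prediction and arithmetic coding can be sketched with a toy model. An arithmetic coder driven by a predictor spends close to -log2 p(symbol | context) bits per symbol, so summing that quantity estimates the compressed size. The example below swaps in a simple adaptive count model conditioned on the previous byte in place of a neural network; the function name, the start context, and the Laplace smoothing are assumptions for illustration and do not describe TaCo-LL's architecture.

```python
import math
from collections import defaultdict


def ideal_code_length_bits(data: bytes, alphabet_size: int = 256) -> float:
    """Sum of -log2 p(symbol | previous symbol) under an adaptive count model."""
    counts = defaultdict(lambda: defaultdict(int))  # counts[context][symbol]
    totals = defaultdict(int)                       # totals[context]
    bits = 0.0
    context = 0  # hypothetical fixed start context
    for symbol in data:
        # Laplace-smoothed probability built only from symbols already seen,
        # so a decoder could rebuild the same probabilities step by step.
        p = (counts[context][symbol] + 1) / (totals[context] + alphabet_size)
        bits += -math.log2(p)
        # Update the model after coding the symbol.
        counts[context][symbol] += 1
        totals[context] += 1
        context = symbol
    return bits


# Highly predictable input: two long runs of constant values.
data = bytes([10] * 500 + [200] * 500)
estimated_bits = ideal_code_length_bits(data)
print(f"raw: {8 * len(data)} bits, estimated coded size: {estimated_bits:.0f} bits")
```

A better predictor assigns higher probability to the symbol that actually occurs, which lowers the sum; this is the sense in which a learned tactile-specific model can outperform generic lossless codecs.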

Four task categories are defined to measure the practical impact of compression: (1) lossless storage – measuring compression ratio and reconstruction fidelity; (2) human visualization – using PSNR, SSIM, and LPIPS to evaluate how well compressed tactile images retain perceptual quality; (3) material and object classification – training a CNN on compressed data and reporting top‑1 accuracy; (4) dexterous robotic grasping – feeding compressed tactile streams to a grasping controller and measuring success rate. This multi‑task evaluation reveals how compression choices affect downstream perception and control, not just raw bit savings.
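Two of the quantities used in these evaluations follow directly from their definitions and can be sketched in a few lines: PSNR from the mean squared error, and bits per pixel (bpp) from the compressed size. The helper names and the flat-sequence pixel representation are assumptions for this example; SSIM and LPIPS are left out because they require external libraries.

```python
import math


def psnr(original, reconstructed, peak=255.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    if len(original) != len(reconstructed):
        raise ValueError("sequences must have the same length")
    mse = sum((a - b) ** 2 for a, b in zip(original, reconstructed)) / len(original)
    if mse == 0:
        return math.inf  # identical signals, e.g. after lossless coding
    return 10.0 * math.log10(peak ** 2 / mse)


def bits_per_pixel(compressed_size_bytes, width, height):
    """Average number of coded bits spent per pixel of one frame."""
    return 8.0 * compressed_size_bytes / (width * height)


# Toy example: a 4-pixel signal with a small reconstruction error.
original = [100, 120, 140, 160]
reconstructed = [101, 119, 141, 159]
print(f"PSNR: {psnr(original, reconstructed):.2f} dB")
print(f"bpp: {bits_per_pixel(960, 160, 120):.2f}")
```

PSNR only captures pixel-wise error, which is one reason the benchmark also reports perceptual metrics and downstream task scores.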

Experimental results show that TaCo‑LL consistently achieves around 20× compression over raw data while preserving exact reconstruction, outperforming all traditional lossless codecs. TaCo‑L reaches approximately 0.1 bpp, delivering PSNR ≈ 40 dB and SSIM ≈ 0.95, and maintains classification accuracy and grasping success rates above 95 %, substantially higher than any pretrained neural codec or conventional lossy codec. Conventional image/video codecs, while sometimes competitive in visual quality, suffer steep drops in downstream task performance as bitrate decreases, highlighting the importance of learning tactile‑specific redundancies. The large‑model based LMIC codecs perform poorly relative to domain‑specific models, indicating that the tactile data distribution differs markedly from that of natural images or text and that dedicated pretraining is needed.

The authors also analyze the non‑linear relationship between bitrate and task performance, providing guidelines for selecting operating points under strict bandwidth constraints (e.g., <0.1 bpp for teleoperation with ≥95 % task fidelity). They note that vision‑based tactile data benefit from spatiotemporal codecs (video compressors), whereas force‑based data are better served by 1‑D sequence compressors.
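To relate a bpp operating point to a link budget, the stream bitrate is simply bpp × width × height × frame rate. The sketch below applies that arithmetic in both directions. Pairing 640 × 480 frames with 30 Hz is an assumption chosen from the resolution and frame-rate ranges mentioned earlier, and the function names are hypothetical.

```python
def stream_bitrate_bps(bpp, width, height, fps):
    """Bits per second needed for a stream coded at `bpp` bits per pixel."""
    return bpp * width * height * fps


def max_bpp_for_budget(budget_bps, width, height, fps):
    """Largest bpp operating point that fits a given link budget in bits per second."""
    return budget_bps / (width * height * fps)


# Assumed stream: 640 x 480 frames at 30 Hz, coded at 0.1 bpp.
rate = stream_bitrate_bps(0.1, 640, 480, 30)
print(f"coded stream: {rate / 1e6:.2f} Mbit/s")

# Same stream stored raw as 8-bit RGB (24 bits per pixel), for comparison.
raw_rate = stream_bitrate_bps(24, 640, 480, 30)
print(f"raw stream:   {raw_rate / 1e6:.2f} Mbit/s")

# Inverse question: which bpp fits a 1 Mbit/s link?
print(f"budget of 1 Mbit/s allows up to {max_bpp_for_budget(1e6, 640, 480, 30):.3f} bpp")
```

Because task performance falls off non-linearly with bitrate, the bpp that fits the link still has to be checked against the measured task curve before it is adopted as an operating point.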

Finally, the paper outlines future research directions: (i) multimodal compression frameworks that jointly encode vision, force, audio, and language streams; (ii) end‑to‑end compression‑for‑recognition pipelines that directly optimize downstream task loss; (iii) lightweight hardware‑friendly neural codecs for embedded tactile processors; and (iv) adaptive bitrate control mechanisms responsive to network latency and robot control loops. By releasing the datasets, code, and benchmark suite, TaCo establishes a standardized platform that will accelerate progress in tactile data compression and its integration into real‑time robotic systems.
