AdaTSQ: Pushing the Pareto Frontier of Diffusion Transformers via Temporal-Sensitivity Quantization

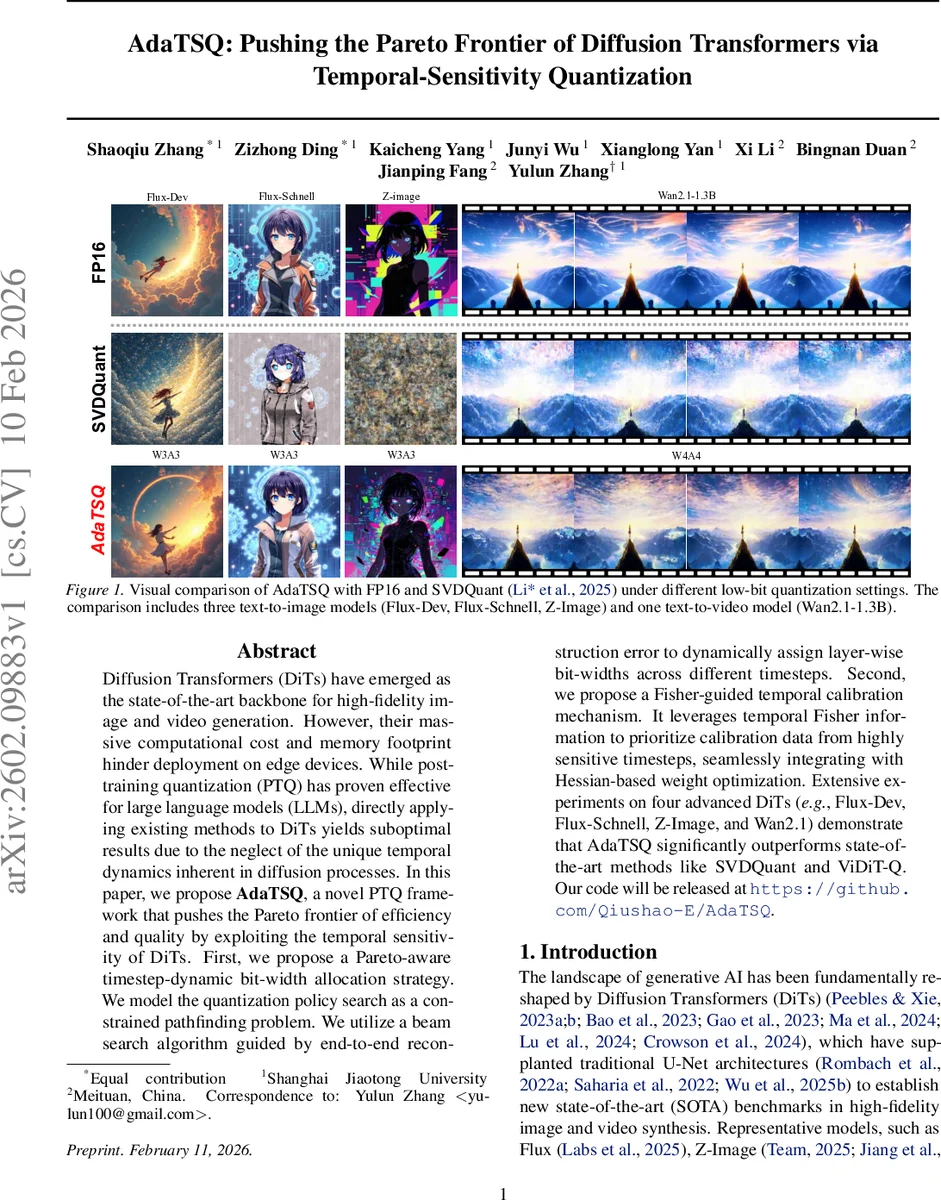

Diffusion Transformers (DiTs) have emerged as the state-of-the-art backbone for high-fidelity image and video generation. However, their massive computational cost and memory footprint hinder deployment on edge devices. While post-training quantization (PTQ) has proven effective for large language models (LLMs), applying existing PTQ methods directly to DiTs yields suboptimal results because these methods ignore the temporal dynamics unique to the diffusion process. In this paper, we propose AdaTSQ, a novel PTQ framework that pushes the Pareto frontier of efficiency and quality by exploiting the temporal sensitivity of DiTs. First, we propose a Pareto-aware, timestep-dynamic bit-width allocation strategy: we model the search for a quantization policy as a constrained pathfinding problem and use a beam search, guided by end-to-end reconstruction error, to dynamically assign layer-wise bit-widths across timesteps. Second, we propose a Fisher-guided temporal calibration mechanism, which uses temporal Fisher information to prioritize calibration data from highly sensitive timesteps and integrates seamlessly with Hessian-based weight optimization. Extensive experiments on four advanced DiTs (Flux-Dev, Flux-Schnell, Z-Image, and Wan2.1) demonstrate that AdaTSQ significantly outperforms state-of-the-art methods such as SVDQuant and ViDiT-Q. Our code will be released at https://github.com/Qiushao-E/AdaTSQ.
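The bit-width allocation step can be pictured as a path search over bit-width tables. The sketch below is a minimal, hypothetical illustration, not the authors' implementation: it flattens the (timestep, layer) grid into slots, starts every slot at the highest bit-width, treats each "lower one slot by one level" move as an edge of the path, and keeps the `beam_width` lowest-error policies until the average bit-width meets the budget. The move set, the names `beam_search_bits` and `surrogate_error`, and the error model are all assumptions. In particular, AdaTSQ scores candidates by the end-to-end reconstruction error of the quantized DiT; this sketch replaces that with an additive surrogate in which each slot contributes `sensitivity * 4**(-bits)`, since uniform quantization noise variance over a fixed range scales roughly as 2^(-2b).

```python
import heapq
from typing import Callable, List, Sequence, Tuple

Policy = Tuple[int, ...]  # one bit-width per (timestep, layer) slot, flattened row-major


def surrogate_error(sensitivity: Sequence[Sequence[float]]) -> Callable[[Policy], float]:
    """Hypothetical additive error model: each slot adds sensitivity * 4**(-bits)."""
    flat = [s for row in sensitivity for s in row]

    def error_fn(policy: Policy) -> float:
        return sum(s * 4.0 ** (-b) for s, b in zip(flat, policy))

    return error_fn


def beam_search_bits(
    init_policy: Policy,
    choices: Sequence[int],
    budget_avg_bits: float,
    error_fn: Callable[[Policy], float],
    beam_width: int = 4,
) -> Policy:
    """Lower bit-widths one step at a time, keeping the lowest-error policies in the beam."""
    levels = sorted(choices)
    beam: List[Policy] = [tuple(init_policy)]
    while True:
        # Stop as soon as some policy in the beam satisfies the average-bit budget.
        feasible = [p for p in beam if sum(p) / len(p) <= budget_avg_bits]
        if feasible:
            return min(feasible, key=error_fn)
        # Expand: each edge lowers one slot to the next smaller allowed bit-width.
        candidates = set()
        for policy in beam:
            for i, b in enumerate(policy):
                k = levels.index(b)
                if k > 0:
                    candidates.add(policy[:i] + (levels[k - 1],) + policy[i + 1:])
        if not candidates:
            raise ValueError("budget is unreachable with the given bit-width choices")
        # Prune to the beam_width lowest-error candidates (sorted first for determinism).
        beam = heapq.nsmallest(beam_width, sorted(candidates), key=error_fn)


if __name__ == "__main__":
    sens = [[1.0, 8.0], [0.5, 4.0]]  # hypothetical sensitivity[t][l] for 2 timesteps x 2 layers
    policy = beam_search_bits((8, 8, 8, 8), (4, 8), 6.0, surrogate_error(sens))
    print(policy)  # (4, 8, 4, 8)
```

With these toy numbers the budget of 6 bits on average forces two slots down to 4 bits, and the search picks the two least sensitive slots. A real end-to-end error is not additive across slots, which is exactly why a beam (rather than a per-slot greedy rule) is useful there.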

💡 Research Summary

AdaTSQ introduces a post-training quantization (PTQ) framework designed specifically for diffusion transformers (DiTs), which have become the dominant architecture for high-fidelity image and video generation. Although DiTs achieve state-of-the-art quality, their large parameter counts and the iterative denoising process, which runs the full network once per timestep, make them prohibitively expensive for edge deployment. Existing PTQ methods, originally designed for large language models (LLMs), assume static activation statistics and therefore perform poorly when applied directly to DiTs, whose behavior changes across timesteps. AdaTSQ closes this gap by explicitly modeling the temporal heterogeneity of DiTs and using it to guide both bit-width allocation and weight calibration.
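Hessian-based (GPTQ-style) weight optimization quantizes a linear layer by minimizing the layer-wise error ||WX - ŴX||², whose Hessian with respect to each weight row is H = 2XXᵀ over calibration inputs X. The sketch below shows one hypothetical way timestep weights could enter that Hessian, by scaling each timestep's averaged outer products by a weight w_t (for example, one derived from Fisher information). The function name `weighted_hessian` and the per-timestep averaging are assumptions for illustration; the source does not specify how AdaTSQ combines its weights with the Hessian.

```python
from typing import Dict, List, Sequence

Matrix = List[List[float]]


def weighted_hessian(
    acts_by_t: Dict[int, Sequence[Sequence[float]]],
    weights: Dict[int, float],
) -> Matrix:
    """Accumulate H = 2 * sum_t w_t * mean_i(x_i x_i^T) over one layer's calibration inputs."""
    dim = len(next(iter(acts_by_t.values()))[0])
    H = [[0.0] * dim for _ in range(dim)]
    for t, rows in acts_by_t.items():
        # Average within a timestep so w_t, not the sample count, sets its influence.
        scale = 2.0 * weights[t] / len(rows)
        for x in rows:
            for i in range(dim):
                for j in range(dim):
                    H[i][j] += scale * x[i] * x[j]
    return H


if __name__ == "__main__":
    acts = {0: [[1.0, 0.0]], 1: [[0.0, 1.0]]}  # toy layer inputs at two timesteps
    print(weighted_hessian(acts, {0: 0.75, 1: 0.25}))  # [[1.5, 0.0], [0.0, 0.5]]
```

The weighted H can then be passed to any GPTQ-style solver unchanged, which is one reading of what "integrates seamlessly with Hessian-based weight optimization" could mean.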

Temporal Sensitivity via Fisher Information

The authors first quantify how sensitive each layer is to quantization noise at each diffusion timestep. They approximate the Fisher information $I_{t,l}$ of layer $l$ at timestep $t$ as an expectation, $I_{t,l}=\mathbb{E}[\ldots]$
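As a minimal sketch, assume the common empirical diagonal approximation: $I_{t,l}$ is the mean, over calibration samples, of the summed squared gradients of the loss with respect to layer $l$'s parameters at timestep $t$ (an assumption; AdaTSQ's precise definition may differ). The code then shows one hypothetical way to turn these scores into calibration priorities: sum over layers, normalize over timesteps, and draw calibration timesteps in proportion to the result. The names `fisher_score`, `timestep_weights`, and `sample_calibration_timesteps`, and the proportional-sampling scheme itself, are illustrative assumptions.

```python
import random
from typing import Dict, List, Sequence


def fisher_score(grads: Sequence[Sequence[float]]) -> float:
    """Empirical diagonal Fisher summed over a layer's parameters: mean_n sum_k g[n][k]**2."""
    return sum(sum(g * g for g in grad) for grad in grads) / len(grads)


def timestep_weights(fisher: Dict[int, Dict[str, float]]) -> Dict[int, float]:
    """Sum I_{t,l} over layers l, then normalize over timesteps so the weights sum to 1."""
    per_t = {t: sum(layers.values()) for t, layers in fisher.items()}
    total = sum(per_t.values())
    return {t: s / total for t, s in per_t.items()}


def sample_calibration_timesteps(weights: Dict[int, float], k: int, seed: int = 0) -> List[int]:
    """Draw k calibration timesteps with probability proportional to their weight."""
    rng = random.Random(seed)
    ts = sorted(weights)
    return rng.choices(ts, weights=[weights[t] for t in ts], k=k)


if __name__ == "__main__":
    # Hypothetical per-sample gradients for one layer at one timestep.
    print(fisher_score([[1.0, 1.0], [3.0, 1.0]]))  # 6.0
    fisher = {0: {"attn": 6.0, "mlp": 2.0}, 1: {"attn": 1.0, "mlp": 1.0}}
    w = timestep_weights(fisher)
    print(w)  # {0: 0.8, 1: 0.2}
    print(sample_calibration_timesteps(w, k=8))
```

Sampling is only one option; the same normalized weights could instead scale each timestep's contribution to the calibration objective, which avoids discarding data from low-sensitivity timesteps entirely.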
