Free-GVC: Towards Training-Free Extreme Generative Video Compression with Temporal Coherence

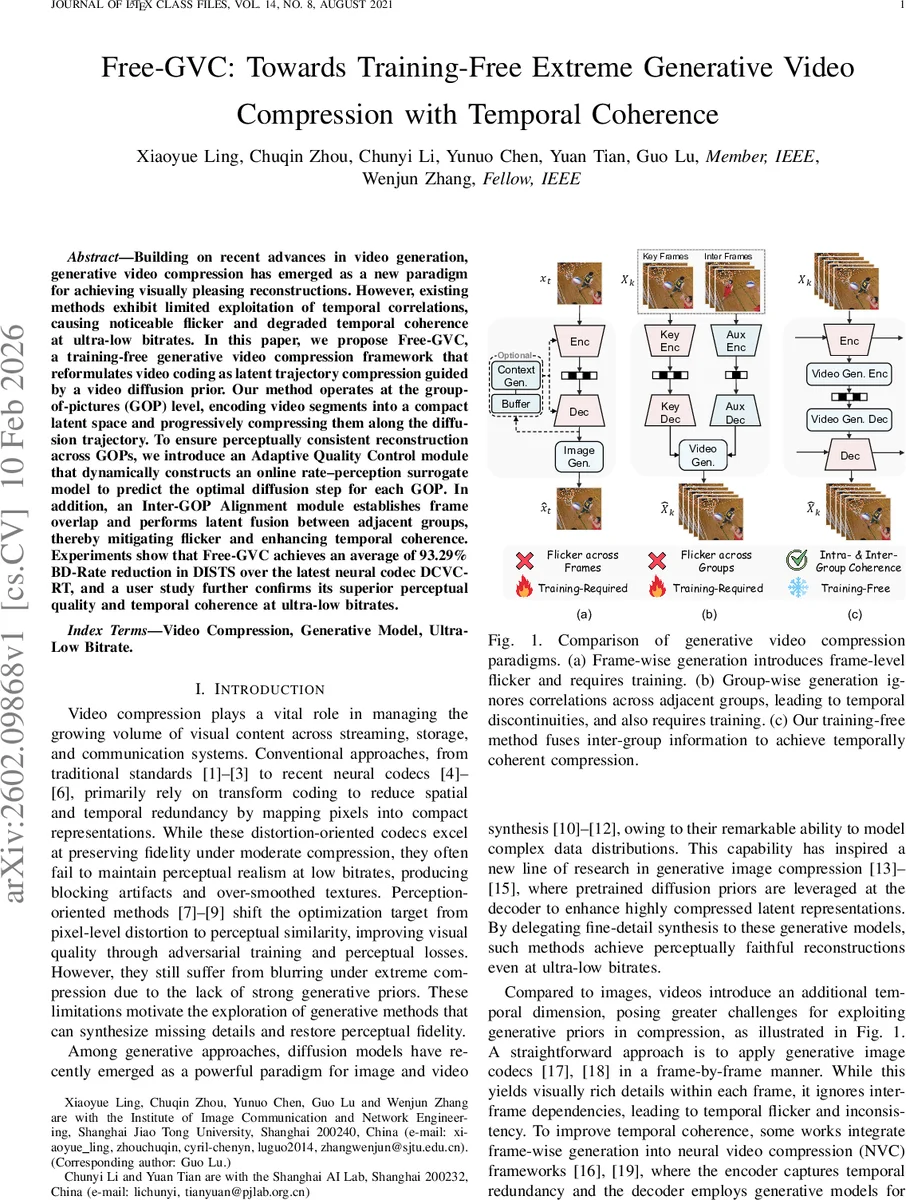

Building on recent advances in video generation, generative video compression has emerged as a new paradigm for achieving visually pleasing reconstructions. However, existing methods exhibit limited exploitation of temporal correlations, causing noticeable flicker and degraded temporal coherence at ultra-low bitrates. In this paper, we propose Free-GVC, a training-free generative video compression framework that reformulates video coding as latent trajectory compression guided by a video diffusion prior. Our method operates at the group-of-pictures (GOP) level, encoding video segments into a compact latent space and progressively compressing them along the diffusion trajectory. To ensure perceptually consistent reconstruction across GOPs, we introduce an Adaptive Quality Control module that dynamically constructs an online rate-perception surrogate model to predict the optimal diffusion step for each GOP. In addition, an Inter-GOP Alignment module establishes frame overlap and performs latent fusion between adjacent groups, thereby mitigating flicker and enhancing temporal coherence. Experiments show that Free-GVC achieves an average of 93.29% BD-Rate reduction in DISTS over the latest neural codec DCVC-RT, and a user study further confirms its superior perceptual quality and temporal coherence at ultra-low bitrates.

💡 Research Summary

Free‑GVC introduces a training‑free generative video compression framework that leverages a pretrained video diffusion prior to compress video at the group‑of‑pictures (GOP) level. The authors first split the input video into GOPs and map each segment into a compact latent representation using a frozen VAE‑style encoder from the diffusion model. These latents are then progressively noised along the diffusion trajectory, where the amount of noise directly corresponds to the target bitrate. Using Reverse Channel Coding (RCC) together with the Poisson Functional Representation (PFR) algorithm, each noisy latent zₜ is efficiently encoded, turning the diffusion process into a controllable rate‑distortion mechanism.

A central challenge in diffusion‑based compression is the stochastic nature of generation: the same bitrate can yield widely varying perceptual quality. To address this, Free‑GVC proposes an Adaptive Quality Control (AQC) module that builds an online rate‑perception surrogate during encoding. For each candidate diffusion step t, a small set of decoded frames is generated and evaluated with perceptual metrics such as DISTS and LPIPS. These measurements are fed into an online regression (or Bayesian optimization) model that predicts the optimal timestep t* for the current GOP, ensuring a stable perceptual quality while minimizing bitrate.

Temporal coherence is another major issue for GOP‑wise generative codecs, which often treat each group independently and thus produce flicker at GOP boundaries. The Inter‑GOP Alignment (IGA) module mitigates this by introducing overlapping frames between consecutive GOPs and fusing their latent representations. Overlap frames are encoded twice (once in each GOP) and, during decoding, the corresponding latents are merged via averaging or attention‑based weighting. This latent fusion yields smooth transitions across GOP boundaries, dramatically reducing flicker and preserving motion continuity.

Extensive experiments on HEVC Class B–E, UVG, and MCL‑JCV datasets demonstrate that Free‑GVC achieves an average 93.29 % BD‑Rate reduction in DISTS compared with the state‑of‑the‑art neural codec DCVC‑RT. It also outperforms existing diffusion‑based video codecs (e.g., GLC‑Video) in temporal consistency, as measured by tOF, and in a user study it is preferred both for visual fidelity and temporal smoothness even at half the bitrate of DCVC‑RT. Qualitative examples show that Free‑GVC maintains high‑frequency texture details while keeping frame‑to‑frame motion coherent, something that conventional distortion‑oriented codecs (VVC, DCVC‑FM) cannot achieve at such low bitrates.

In summary, Free‑GVC makes three key contributions: (1) it reframes video compression as diffusion‑guided latent trajectory coding, enabling a training‑free pipeline that directly exploits powerful generative priors; (2) it introduces an online adaptive quality control mechanism that aligns bitrate with perceptual quality on a per‑GOP basis; and (3) it proposes an inter‑GOP alignment strategy that fuses overlapping latent features to eliminate flicker. By combining these components, the method delivers perceptually superior reconstructions with strong temporal coherence at ultra‑low bitrates, opening a practical path for generative video compression without the need for costly model retraining. Future work may explore larger diffusion models, real‑time encoding optimizations, and multimodal conditioning (e.g., text or audio) to further enhance compression efficiency and flexibility.

Comments & Academic Discussion

Loading comments...

Leave a Comment