CompSplat: Compression-aware 3D Gaussian Splatting for Real-world Video

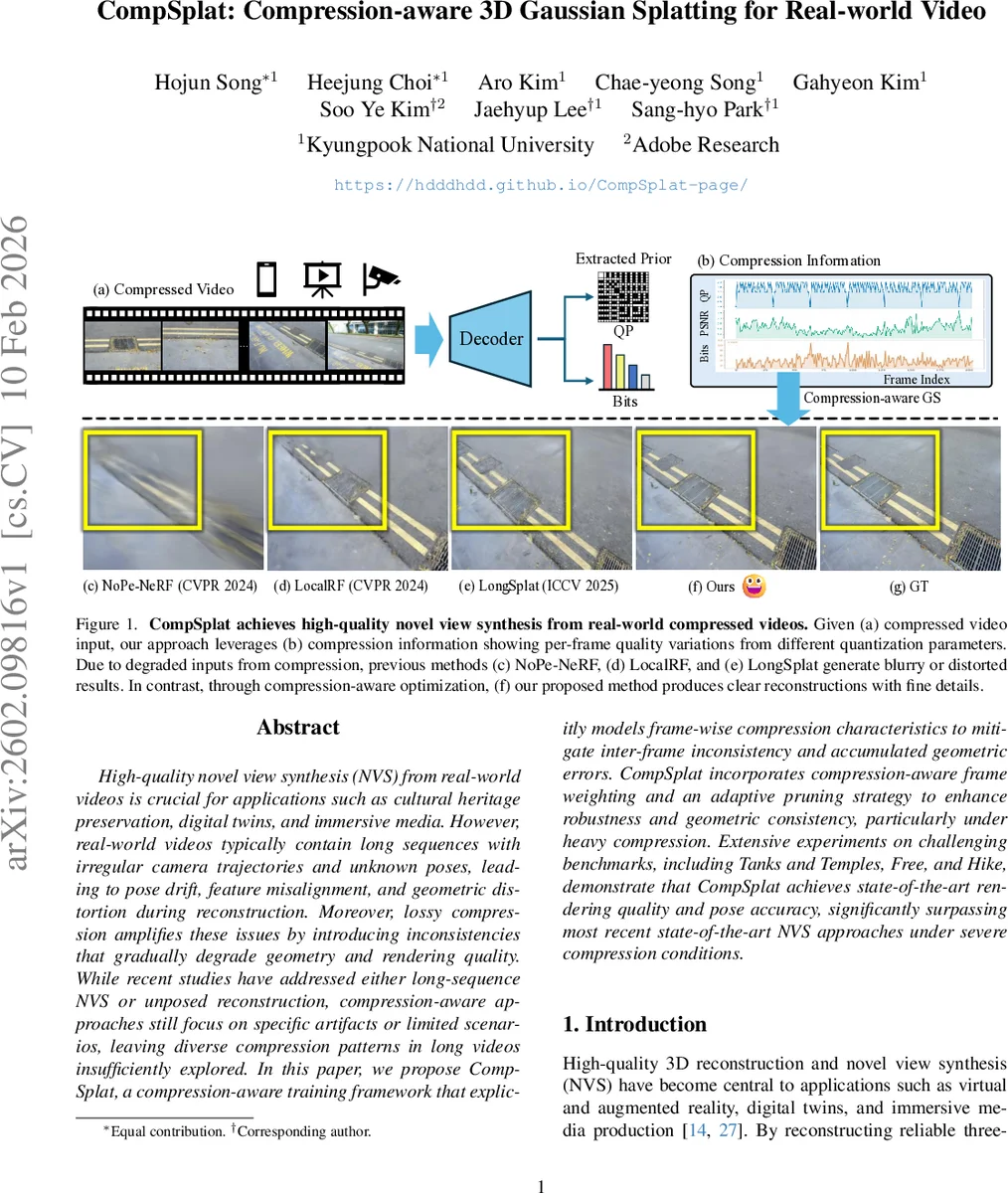

High-quality novel view synthesis (NVS) from real-world videos is crucial for applications such as cultural heritage preservation, digital twins, and immersive media. However, real-world videos typically contain long sequences with irregular camera trajectories and unknown poses, leading to pose drift, feature misalignment, and geometric distortion during reconstruction. Moreover, lossy compression amplifies these issues by introducing inconsistencies that gradually degrade geometry and rendering quality. While recent studies have addressed either long-sequence NVS or unposed reconstruction, compression-aware approaches still focus on specific artifacts or limited scenarios, leaving diverse compression patterns in long videos insufficiently explored. In this paper, we propose CompSplat, a compression-aware training framework that explicitly models frame-wise compression characteristics to mitigate inter-frame inconsistency and accumulated geometric errors. CompSplat incorporates compression-aware frame weighting and an adaptive pruning strategy to enhance robustness and geometric consistency, particularly under heavy compression. Extensive experiments on challenging benchmarks, including Tanks and Temples, Free, and Hike, demonstrate that CompSplat achieves state-of-the-art rendering quality and pose accuracy, significantly surpassing most recent state-of-the-art NVS approaches under severe compression conditions.

💡 Research Summary

CompSplat addresses a critical gap in the field of novel view synthesis (NVS) from real‑world video: the simultaneous presence of long, unstructured camera trajectories and heavy lossy compression. While recent advances in 3D Gaussian Splatting (3DGS) have demonstrated real‑time rendering and NeRF‑level visual fidelity, they assume clean, short image sequences. In practice, consumer‑captured videos are often thousands of frames long, contain irregular motion, and are stored or streamed using codecs such as JPEG, H.264, or HEVC. These codecs introduce per‑frame quality fluctuations (quantization parameter QP, bitrate variations) that degrade high‑frequency details, break temporal coherence, and destabilize downstream pose estimation and feature matching. Existing unposed‑3DGS pipelines (e.g., LongSplat, CF‑3DGS) treat all frames equally, leading to over‑pruning of good frames and the proliferation of noisy Gaussians from badly compressed frames.

CompSplat proposes a compression‑aware training framework that explicitly models frame‑wise compression characteristics and integrates them into the Gaussian optimization loop. The core contributions are twofold:

-

Quality‑guided Density Control – For each frame t the method extracts codec metadata (QP and bits) and computes two normalized confidence scores, q_qₜ and q_bₜ, weighted by hyper‑parameters λ_q and λ_b. The sum qₜ = q_qₜ + q_bₜ is smoothed with an exponential moving average (EMA) to obtain a temporally stable confidence ¯qₜ. This confidence drives two adaptive mechanisms:

- Scale‑based Pruning – The opacity threshold ω used to discard Gaussians is made a function of ¯qₜ, so low‑quality frames use a higher ω (more aggressive pruning) while high‑quality frames keep a lower ω (preserving fine detail).

- Adaptive Densification – The gradient magnitude threshold θ for adding new Gaussians is also modulated by ¯qₜ. Consequently, high‑confidence frames densify aggressively, whereas low‑confidence frames contribute minimally, preventing noisy density growth.

-

Quality Gap‑aware Masking – Compression creates abrupt PSNR gaps between neighboring frames, which harms keypoint matching and pose refinement. CompSplat computes a per‑pixel mask based on keypoint matching strength and photometric residuals, then multiplies this mask into the photometric loss. The effect is to down‑weight supervision from frames that are visually inconsistent with their neighbors, stabilizing the incremental pose estimation (PnP + photometric refinement) and reducing reprojection error.

The overall pipeline builds on the unposed‑GS framework of LongSplat: (a) incremental pose estimation using correspondence‑guided PnP, (b) local optimization over short temporal windows, and (c) periodic global refinement. The new modules are inserted at each stage, allowing the optimizer to focus computational resources on reliable frames while safely ignoring or pruning unreliable ones.

Experimental validation is performed on compressed versions of three challenging benchmarks: Tanks & Temples, Free, and Hike. Compression levels are varied by adjusting QP (e.g., 22, 28, 34) and bitrate. Metrics include PSNR, SSIM, LPIPS for rendering fidelity, as well as pose RMSE and 3D reconstruction error. Across all settings, CompSplat outperforms prior state‑of‑the‑art NVS methods such as NoPe‑NeRF, LocalRF, and LongSplat. Notably, under severe compression (QP = 34) CompSplat gains >1.2 dB PSNR, reduces LPIPS by ~15 %, and cuts pose RMSE by roughly 30 % compared to the best baseline. Qualitative results show that fine textures and sharp edges are retained in high‑confidence frames, while blurred regions from low‑quality frames do not introduce spurious geometry.

Key insights derived from the study are:

- Explicitly modeling codec‑derived quality metrics is essential for stable long‑sequence optimization.

- Adaptive density control prevents the “over‑pruning” of good frames and the “noise explosion” from bad frames, leading to a more balanced Gaussian distribution.

- Masking based on inter‑frame quality gaps mitigates pose drift caused by inconsistent photometric supervision.

Limitations and future work are acknowledged. The current system focuses on static scenes; extending the framework to dynamic objects would require temporal consistency models for moving Gaussians. Real‑time streaming scenarios, where compression metadata must be extracted on‑the‑fly, are also an open direction. Moreover, the hyper‑parameters λ_q, λ_b, and EMA momentum β may need dataset‑specific tuning, suggesting a possible avenue for learning these weights end‑to‑end.

In summary, CompSplat introduces a principled, compression‑aware adaptation of 3D Gaussian Splatting that enables high‑quality novel view synthesis from long, heavily compressed real‑world videos. By integrating per‑frame confidence scores into both density regulation and photometric supervision, it achieves robust geometry, accurate pose estimation, and superior rendering quality, thereby bridging the gap between academic NVS research and practical deployment on consumer video content.

Comments & Academic Discussion

Loading comments...

Leave a Comment